The Model Context Protocol, usually shortened to MCP, is getting a lot of attention because it promises a cleaner way for AI systems to connect to tools, data sources, and services. The excitement is understandable. Teams are tired of building one-off integrations for every assistant, agent, and model wrapper they experiment with, and a shared protocol sounds like a shortcut to interoperability.

That promise is real, but many teams are starting to talk about MCP as if standardizing the connection layer automatically solves trust, governance, and operational risk. It does not. MCP can make enterprise AI systems easier to compose and easier to extend. It does not remove the need to decide which tools should exist, who may use them, what data they can expose, how actions are approved, or how the whole setup is monitored.

MCP helps with integration consistency, which is a real problem

One reason enterprise AI projects stall is that every new assistant ends up with its own fragile tool wiring. A retrieval bot talks to one search system through custom code, a workflow assistant reaches a ticketing platform through a different adapter, and a third project invents its own approach for calling internal APIs. The result is repetitive platform work, uneven reliability, and a stack that becomes harder to audit every time another prototype shows up.

MCP is useful because it creates a more standard way to describe and invoke tools. That can lower the cost of experimentation and reduce duplicated glue code. Platform teams can build reusable patterns instead of constantly redoing the same plumbing for each project. From a software architecture perspective, that is a meaningful improvement.
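
To make "a standard way to describe and invoke tools" concrete, here is a minimal sketch in which every tool shares one descriptor shape and one dispatch path. The name/description/input-schema layout loosely mirrors how MCP tool listings are shaped, but everything else is an assumption for illustration: the `search_tickets` tool, the `TOOLS` registry, and the `register` and `call_tool` helpers are hypothetical and not part of any MCP SDK.

```python
from typing import Any, Callable

# Hypothetical in-process registry: one descriptor shape shared by every tool.
TOOLS: dict[str, dict[str, Any]] = {}


def register(name: str, description: str, input_schema: dict[str, Any]):
    """Decorator that stores a handler next to its descriptor."""
    def wrap(handler: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = {
            "name": name,
            "description": description,
            "inputSchema": input_schema,
            "handler": handler,
        }
        return handler
    return wrap


@register(
    name="search_tickets",
    description="Search the ticket system by keyword (read-only).",
    input_schema={
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
)
def search_tickets(query: str) -> list[str]:
    # Stand-in data; a real handler would call the ticket system.
    tickets = ["VPN outage", "Printer jam", "VPN certificate renewal"]
    return [t for t in tickets if query.lower() in t.lower()]


def call_tool(name: str, arguments: dict[str, Any]) -> Any:
    """Single dispatch path: look up the tool, check required arguments, invoke."""
    tool = TOOLS.get(name)
    if tool is None:
        raise KeyError(f"unknown tool: {name}")
    required = tool["inputSchema"].get("required", [])
    missing = [key for key in required if key not in arguments]
    if missing:
        raise ValueError(f"missing arguments for {name}: {missing}")
    return tool["handler"](**arguments)
```

The point of the sketch is the reuse: a second or third tool only adds another `@register` block, while lookup, argument checking, and invocation stay in one place that a platform team can harden once.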

A standard tool interface is not the same thing as a safe tool boundary

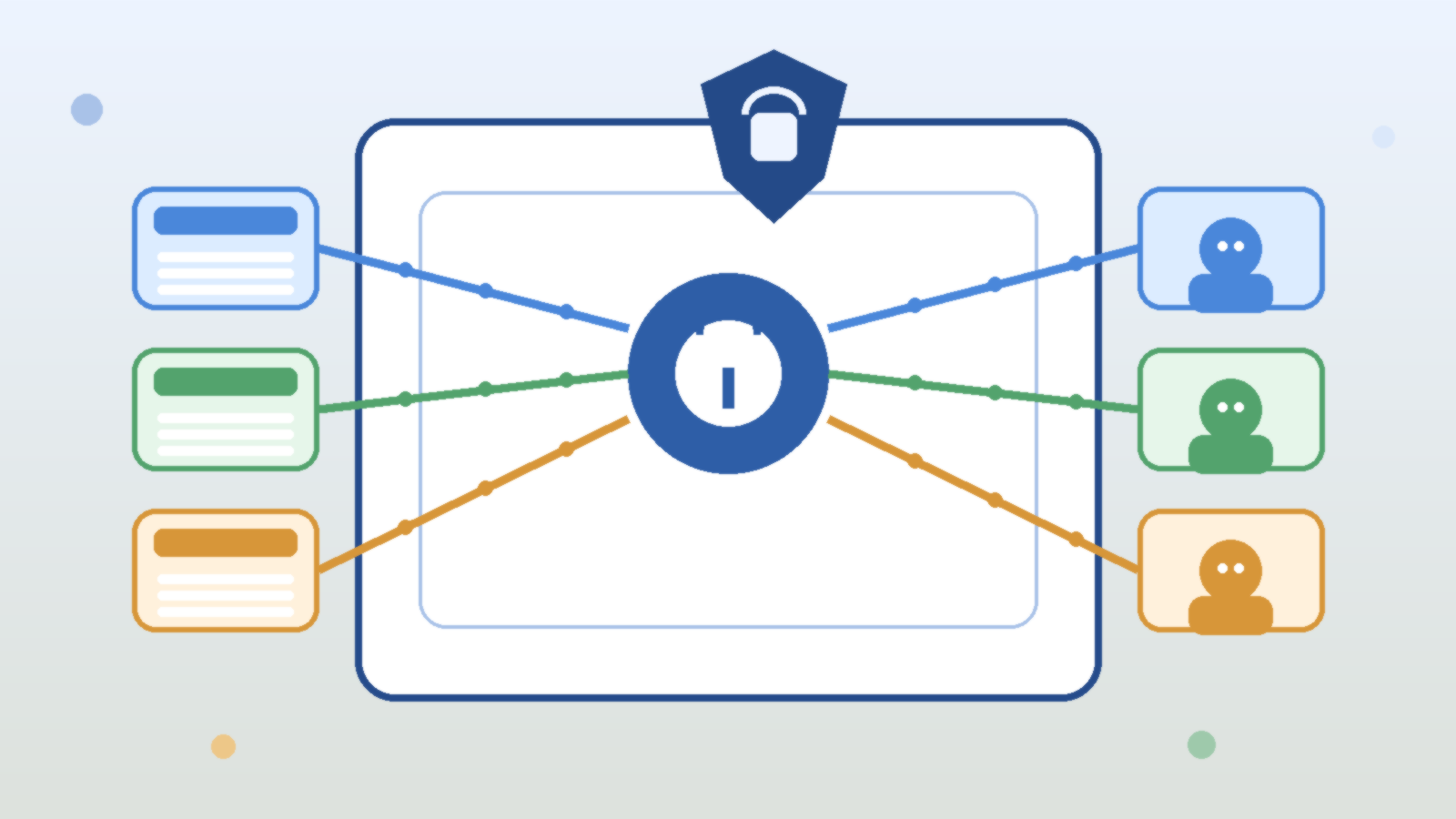

This is where some of the current enthusiasm gets sloppy. A tool being available through MCP does not make it safe to expose broadly. If an assistant can query internal files, trigger cloud automation, read a ticket system, or write to a messaging platform, the core risk is still about permissions, approval paths, and data exposure. The protocol does not make those questions disappear. It just gives the model a more standardized way to ask for access.

Enterprise teams should treat MCP servers the same way they treat any other privileged integration surface. Each server needs a defined trust level, an owner, a narrow scope, and a clear answer to the question of what damage it could do if misused. If that analysis has not happened, the existence of a neat protocol only makes it easier to scale bad assumptions.
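
One way to keep that analysis from being skipped is to require it as data before a server can be enabled. The sketch below is an assumption about how a team might record it, not an MCP feature: `ServerRecord`, `TrustLevel`, and `review_gaps` are hypothetical names, and the trust tiers are placeholders for whatever tiers your organization already uses.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class TrustLevel(Enum):
    # Placeholder tiers; substitute your organization's own.
    READ_ONLY = "read_only"
    INTERNAL_WRITE = "internal_write"
    PRIVILEGED = "privileged"


@dataclass(frozen=True)
class ServerRecord:
    name: str
    owner: str                         # accountable team or person
    trust_level: Optional[TrustLevel]  # None means nobody has decided yet
    allowed_tools: frozenset[str]      # the narrow scope this server may expose
    worst_case: str                    # answer to "what damage if misused?"


def review_gaps(record: ServerRecord) -> list[str]:
    """Return the review questions that are still unanswered for a server."""
    gaps = []
    if not record.owner.strip():
        gaps.append("no owner")
    if record.trust_level is None:
        gaps.append("no trust level")
    if not record.allowed_tools:
        gaps.append("no explicit tool scope")
    if not record.worst_case.strip():
        gaps.append("no misuse analysis")
    return gaps
```

A deployment gate could then refuse to enable any server whose `review_gaps` result is not empty, which turns the checklist into something enforceable rather than a wiki page.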

The real design work is in authorization, not just connectivity

Many early AI integrations collapse all access into a single service account because it is quick. That is manageable for a toy demo and messy for almost anything else: every call runs with the account's full permissions, and the audit trail cannot show which user was behind a given request. Once assistants start operating across real systems, the important question is not whether the model can call a tool. It is whether the right identity is being enforced when that tool is called, with the right scope and the right audit trail.

If your organization adopts MCP, spend more time on authorization models than on protocol enthusiasm. Decide whether tools run with user-scoped permissions, service-scoped permissions, delegated approvals, or staged execution patterns. Decide which actions should be read-only, which require confirmation, and which should never be reachable from a conversational flow at all. That is the difference between a useful integration layer and an avoidable incident.
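
Those decisions can be written down as a small policy check that runs before any tool call. This is a minimal sketch under stated assumptions: the tool names, the `POLICY` table, the `Caller` type, and the `authorize` function are all hypothetical, and a real deployment would source identities and grants from its existing identity provider rather than an in-memory set.

```python
from dataclasses import dataclass
from enum import Enum


class ActionClass(Enum):
    READ_ONLY = "read_only"
    NEEDS_CONFIRMATION = "needs_confirmation"
    NEVER_FROM_CHAT = "never_from_chat"


class Decision(Enum):
    ALLOW = "allow"
    ASK_USER = "ask_user"
    DENY = "deny"


# Hypothetical policy table: every reachable tool is classified explicitly.
POLICY = {
    "search_tickets": ActionClass.READ_ONLY,
    "post_message": ActionClass.NEEDS_CONFIRMATION,
    "delete_project": ActionClass.NEVER_FROM_CHAT,
}


@dataclass(frozen=True)
class Caller:
    user_id: str
    granted_tools: frozenset[str]  # user-scoped permissions, not a shared account


def authorize(caller: Caller, tool: str, confirmed: bool = False) -> Decision:
    """Decide whether this caller may run this tool right now."""
    action_class = POLICY.get(tool)
    # Unclassified tools and chat-forbidden tools are denied outright.
    if action_class is None or action_class is ActionClass.NEVER_FROM_CHAT:
        return Decision.DENY
    # The human behind the request must hold the permission themselves.
    if tool not in caller.granted_tools:
        return Decision.DENY
    # Risky actions pause until the user has explicitly confirmed.
    if action_class is ActionClass.NEEDS_CONFIRMATION and not confirmed:
        return Decision.ASK_USER
    return Decision.ALLOW
```

Note the default: a tool that nobody has classified is denied, so adding a new capability forces someone to make the read-only, confirm, or never decision first.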

MCP can improve portability, but operations still need discipline

Another reason teams like MCP is the hope that tools become more portable across model vendors and agent frameworks. In practice, that can help. A standardized description of tools reduces vendor lock-in pressure and makes platform choices less painful over time. That is good for enterprise teams that do not want their entire integration strategy tied to one rapidly changing stack.

But portability on paper does not guarantee clean operations. Teams still need versioning, rollout control, usage telemetry, error handling, and ownership boundaries. If a tool definition changes, downstream assistants can break. If a server becomes slow or unreliable, user trust drops immediately. If a tool exposes too much data, the protocol will not save you. Standardization helps, but it does not replace platform discipline.
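
The "tool definition changes, assistants break" case is one of the easier ones to catch mechanically. As a rough sketch, assuming tool inputs are described with JSON-Schema-style `properties` and `required` fields, a rollout check could compare the old and new definitions. The `breaking_changes` function is hypothetical, and it only covers three obvious cases; it is not a full schema-compatibility checker.

```python
from typing import Any


def breaking_changes(old: dict[str, Any], new: dict[str, Any]) -> list[str]:
    """Compare two input schemas and list changes likely to break existing callers."""
    old_props = old.get("properties", {})
    new_props = new.get("properties", {})
    old_required = set(old.get("required", []))
    new_required = set(new.get("required", []))

    problems = []
    # Callers that still send a removed parameter may be rejected.
    for name in sorted(old_props.keys() - new_props.keys()):
        problems.append(f"removed parameter: {name}")
    # Callers that do not know about a new required parameter will fail.
    for name in sorted(new_required - old_required):
        problems.append(f"new required parameter: {name}")
    # A changed type invalidates values that used to be accepted.
    for name in sorted(old_props.keys() & new_props.keys()):
        if old_props[name].get("type") != new_props[name].get("type"):
            problems.append(f"type changed: {name}")
    return problems
```

Run in CI against the previously published definition, a non-empty result would be the signal to bump a version or stage the rollout instead of changing the tool in place.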

Observability matters more once tools become easier to attach

One subtle effect of MCP is that it can make tool expansion feel cheap. That is useful for innovation, but it also means the number of reachable capabilities may grow faster than the team’s visibility into what the assistant is actually doing. A model that can browse five internal systems through a tidy protocol is still a model making decisions about when and how to invoke those systems.

That means logging needs to capture more than basic API failures. Teams need to know which tool was offered, which tool was selected, what identity it ran under, what high-level action occurred, how often it failed, and whether sensitive paths were touched. The easier it becomes to connect tools, the more important it is to make tool use legible to operators and reviewers.
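
The fields listed above map naturally onto one structured log line per tool call. The sketch below shows one possible shape using only the Python standard library; the field names, the `SENSITIVE_TOOLS` set, and the `audit_line` and `failure_rate` helpers are assumptions for illustration, and a real system would ship these lines to whatever log pipeline it already runs.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical list of tools whose use should always be flagged for review.
SENSITIVE_TOOLS = frozenset({"read_hr_file"})


@dataclass(frozen=True)
class ToolAuditEvent:
    timestamp: str
    tools_offered: list[str]  # what the model could have chosen
    tool_selected: str        # what it actually chose
    identity: str             # who the call ran as
    action: str               # high-level description, not raw payloads
    succeeded: bool
    sensitive: bool


def audit_line(offered, selected, identity, action, succeeded, now=None) -> str:
    """Build one JSON log line describing a single tool invocation."""
    when = now or datetime.now(timezone.utc)
    event = ToolAuditEvent(
        timestamp=when.isoformat(),
        tools_offered=sorted(offered),
        tool_selected=selected,
        identity=identity,
        action=action,
        succeeded=succeeded,
        sensitive=selected in SENSITIVE_TOOLS,
    )
    return json.dumps(asdict(event), sort_keys=True)


def failure_rate(lines: list[str], tool: str) -> float:
    """Fraction of logged calls to `tool` that failed (0.0 if never called)."""
    calls = [e for e in map(json.loads, lines) if e["tool_selected"] == tool]
    if not calls:
        return 0.0
    return sum(not e["succeeded"] for e in calls) / len(calls)
```

Recording the offered set alongside the selected tool is the part that ordinary API logging misses: it lets a reviewer see not just what the assistant did, but what else it could have done in that moment.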

The practical enterprise question is where MCP fits in your control model

The best way to evaluate MCP is not to ask whether it is good or bad. Ask where it belongs in your architecture. For many teams, it makes sense as a standard integration layer inside a broader control model that already includes identity, policy, network boundaries, audit logging, and approval rules for risky actions. In that role, MCP can be genuinely helpful.

What it should not become is an excuse to skip those controls because the protocol feels modern and clean. Enterprises do not get safer because tools are easier for models to discover. They get safer because the surrounding system makes the easy path a well-governed path.

Final takeaway

Model Context Protocol changes the integration conversation in a useful way. It can reduce custom connector work, improve reuse, and make AI tooling less fragmented across teams. That is worth paying attention to.

What it does not change is the hard part of enterprise AI: deciding what an assistant should be allowed to touch, how those actions are governed, and how risk is contained when something behaves unexpectedly. If your team treats MCP as a clean integration standard inside a larger control framework, it can be valuable. If you treat it as a substitute for that framework, you are just standardizing your way into the same old problems.
