Many organizations start their AI platform journey by wiring applications straight to a model endpoint and promising themselves they will add governance later. That works for a pilot, but it breaks down quickly once multiple teams, models, environments, and approval boundaries show up. Suddenly every app has its own authentication pattern, logging format, retry logic, and ad hoc content controls.

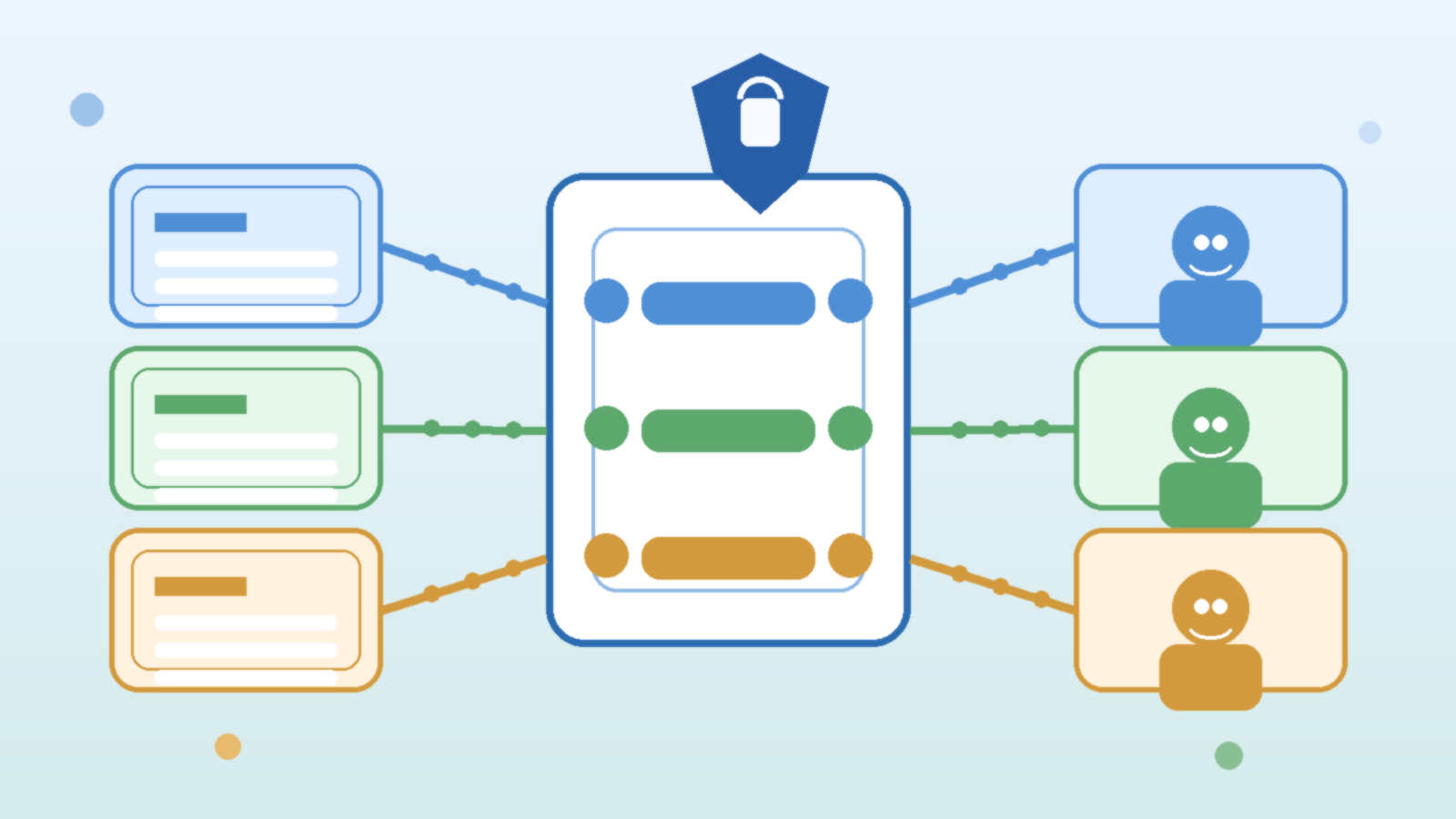

Azure API Management can help clean that up, but only if it is treated as an AI control plane rather than a basic pass-through proxy. The goal is not to add bureaucracy between developers and models. The goal is to centralize the policies that should be consistent anyway, while letting teams keep building on top of a stable interface.

Start With a Stable Front Door Instead of Per-App Model Wiring

When each application connects directly to Azure OpenAI or another model provider, every team ends up solving the same platform problems on its own. One app may log prompts, another may not. One team may rotate credentials correctly, another may leave secrets in a pipeline variable for months. The more AI features spread, the more uneven that operating model becomes.

A stable API Management front door gives teams one integration pattern for authentication, quotas, headers, observability, and policy enforcement. That does not eliminate application ownership, but it does remove a lot of repeated plumbing. Developers can focus on product behavior while the platform team handles the cross-cutting controls that should not vary from app to app.
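As a rough illustration of what one integration pattern can look like from the application side, the sketch below builds a request aimed at the gateway rather than at a model endpoint. `Ocp-Apim-Subscription-Key` is the default API Management subscription header; the gateway URL, the operation path, and the `x-calling-app` header are hypothetical names chosen for this example.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class GatewayRequest:
    url: str
    headers: dict
    body: bytes


def build_gateway_request(gateway_url, subscription_key, calling_app, operation, payload):
    """Describe a call to the shared front door.

    The application holds only a gateway subscription key. Model
    credentials stay behind the gateway and never appear here.
    """
    return GatewayRequest(
        url=f"{gateway_url.rstrip('/')}/{operation.lstrip('/')}",
        headers={
            "Content-Type": "application/json",
            # Default API Management subscription key header.
            "Ocp-Apim-Subscription-Key": subscription_key,
            # Hypothetical header: lets the gateway attribute traffic to a team.
            "x-calling-app": calling_app,
        },
        body=json.dumps(payload).encode("utf-8"),
    )


# Hypothetical gateway URL and operation path, for illustration only.
request = build_gateway_request(
    "https://ai-gateway.example.com/",
    "demo-subscription-key",
    "support-chatbot",
    "/chat",
    {"messages": [{"role": "user", "content": "Hello"}]},
)
```

Every application that follows this shape looks the same to the platform team, which is what makes shared quotas and logging practical.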

Put Model Routing Rules in Policy, Not in Scattered Application Code

Model selection tends to become messy fast. A chatbot might use one deployment for low-cost summarization, another for tool calling, and a fallback model during regional incidents. If every application embeds that routing logic separately, you create a maintenance problem that looks small at first and becomes expensive later.

API Management policies give you a cleaner place to express routing decisions. You can steer traffic by environment, user type, request size, geography, or service health without editing six applications every time a model version changes. This also helps governance teams understand what is actually happening, because the routing rules live in one visible control layer instead of being hidden across repos and release pipelines.
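To make the idea concrete, here is a minimal Python sketch of the kind of decision a central routing policy would encode. It is not API Management policy syntax. The deployment names, the 4000-token threshold, and the `RequestContext` fields are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    environment: str       # e.g. "dev" or "prod"
    task: str              # e.g. "summarize" or "tool_call"
    prompt_tokens: int     # estimated size of the request
    primary_healthy: bool  # result of a health check on the primary region


def choose_deployment(ctx: RequestContext) -> str:
    """Return the (hypothetical) deployment name that should serve this request."""
    # Service health wins over every other rule.
    if not ctx.primary_healthy:
        return "fallback-secondary-region"
    # Non-production traffic goes to the cheapest deployment.
    if ctx.environment != "prod":
        return "small-model-dev"
    if ctx.task == "tool_call":
        return "large-model-tools"
    # Small summarization requests can use the low-cost deployment.
    if ctx.task == "summarize" and ctx.prompt_tokens <= 4000:
        return "small-model-summarize"
    return "large-model-general"
```

Because the rules live in one function, changing a model version means editing one place. A gateway policy plays the same role for every application behind it.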

Use the Gateway to Enforce Cost and Rate Guardrails Early

Cost surprises in AI platforms rarely come from one dramatic event. They usually come from many normal requests that were never given a sensible ceiling. A gateway layer is a practical place to apply quotas, token budgeting, request size constraints, and workload-specific rate limits before usage gets strange enough to trigger a finance conversation.

This matters even more in internal platforms where success spreads by imitation. If one useful AI feature ships without spending controls, five more teams may copy the same pattern within a month. A control plane lets you set fair limits once and improve them deliberately instead of treating cost governance as a cleanup project.
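The logic behind a token budget can be sketched in a few lines. The example below is a simple fixed-window counter written in Python to show the idea. It is an illustration of the technique, not how API Management implements its limits, and the class name, limits, and window size are assumptions.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Fixed-window token budget for one consumer (team, app, or subscription)."""
    limit_per_window: int
    window_seconds: float
    used: int = 0
    window_start: float = 0.0

    def try_consume(self, tokens: int, now: float) -> bool:
        """Record the tokens and return True if the request fits the budget."""
        # Start a fresh window once the current one has expired.
        if now - self.window_start >= self.window_seconds:
            self.window_start = now
            self.used = 0
        # Reject the request without recording it if it would exceed the ceiling.
        if self.used + tokens > self.limit_per_window:
            return False
        self.used += tokens
        return True


# Hypothetical limit: 1000 tokens per 60-second window for one team.
budget = TokenBudget(limit_per_window=1000, window_seconds=60)
results = [
    budget.try_consume(600, now=0.0),   # fits
    budget.try_consume(500, now=10.0),  # would exceed 1000, rejected
    budget.try_consume(400, now=20.0),  # fits exactly
    budget.try_consume(500, now=60.0),  # new window, fits
]
```

Passing `now` explicitly keeps the sketch deterministic. In a real caller it would typically come from a clock such as `time.monotonic()`.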

Centralize Identity and Secret Handling Without Hiding Ownership

One of the least glamorous benefits of API Management is also one of the most important: it reduces the number of places where model credentials and backend connection details need to live. Managed identity, Key Vault integration, and policy-based authentication flows are not exciting talking points, but they are exactly the kind of boring consistency that keeps an AI platform healthy.

That does not mean application teams lose accountability. They still own their prompts, user experiences, data handling choices, and business logic. The difference is that the platform team can stop secret sprawl and normalize backend access patterns before they become a long-term risk.

Log the Right AI Signals, Not Just Generic API Metrics

Traditional API telemetry is helpful, but AI workloads need more context than latency and status codes provide. Teams usually need visibility into which model deployment handled the request, whether content filters fired, which policy branch routed the call, what quota bucket applied, and whether a fallback path was used.

When API Management sits in front of your model estate, it becomes a natural place to enrich logs and forward them into your normal monitoring stack. That makes platform reviews, incident response, and capacity planning much easier because AI traffic is described in operational terms rather than treated like an opaque blob of HTTP requests.
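One way to picture an enriched log entry is as a structured record that carries the AI-specific signals alongside the usual HTTP fields. The Python sketch below shows such a record. The field names are assumptions for illustration, not a schema that API Management emits.

```python
import json


def build_ai_log_record(*, request_id, status_code, latency_ms, deployment,
                        route_branch, quota_bucket, content_filter_triggered,
                        used_fallback):
    """Combine generic API metrics with AI-specific context in one record."""
    return {
        # Generic API telemetry.
        "request_id": request_id,
        "status_code": status_code,
        "latency_ms": latency_ms,
        # AI-specific context (hypothetical field names).
        "model_deployment": deployment,
        "route_branch": route_branch,
        "quota_bucket": quota_bucket,
        "content_filter_triggered": content_filter_triggered,
        "used_fallback": used_fallback,
    }


def to_log_line(record):
    """Serialize the record as one JSON line for a log pipeline."""
    return json.dumps(record, sort_keys=True)


record = build_ai_log_record(
    request_id="req-001",
    status_code=200,
    latency_ms=840,
    deployment="small-model-summarize",
    route_branch="prod-summarize-small",
    quota_bucket="team-support",
    content_filter_triggered=False,
    used_fallback=False,
)
line = to_log_line(record)
```

With fields like these in place, a question such as "how often did the fallback path serve production traffic last week" becomes a simple query instead of a forensic exercise.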

Keep the Control Plane Thin Enough That Developers Do Not Fight It

There is a trap here: once a gateway becomes central, it is tempting to cram every idea into it. If the control plane becomes slow, hard to version, or impossible to debug, teams will look for a way around it. Good platform design means putting shared policy in the gateway while leaving product-specific behavior in the application where it belongs.

A useful rule is to centralize what should be consistent across teams, such as authentication, quotas, routing, basic safety checks, and observability. Leave conversation design, retrieval strategy, business workflow decisions, and user-facing behavior to the teams closest to the product. That balance protects the platform without turning it into a bottleneck.

Final Takeaway

Azure API Management is not the whole AI governance story, but it is a strong place to anchor the parts that benefit from consistency. Used well, it gives developers a predictable front door, gives platform teams a durable policy layer, and gives leadership a clearer answer to the question of how AI traffic is being controlled.

If you want AI teams to move quickly without rebuilding governance from scratch in every repo, treat API Management as an AI control plane. Keep the policies visible, keep the developer experience sane, and keep the shared rules centralized enough that scaling does not turn into drift.
