Most AI pilot projects begin with excitement and speed. A team wants to test a chatbot, summarize support tickets, draft internal content, or search across documents faster than before. The technical work starts quickly because modern tools make it easy to stand something up in days instead of months.

What usually lags behind is a decision about retention. People ask whether the model is accurate, how much the service costs, and whether the pilot should connect to internal data. Far fewer teams stop to ask a simple operational question: how long should prompts, uploaded files, generated outputs, and usage logs actually live?

That gap matters because retention is not just a legal concern. It shapes privacy exposure, security review, troubleshooting, incident response, and user trust. If a pilot stores more than the team expects, or keeps it longer than anyone intended, the project can quietly drift from a safe experiment into a governance problem.

AI Pilots Accumulate More Data Than Teams Expect

An AI pilot rarely consists of only a prompt and a response. In practice, there are uploaded files, retrieval indexes, conversation history, feedback labels, exception traces, browser logs, and often a copy of generated output pasted somewhere else for later use. Even when each piece looks harmless on its own, the combined footprint becomes much richer than the team planned for.

This is why a retention policy should exist before launch, not after the first success story. Once people start using a helpful pilot, the data trail expands fast. It becomes harder to separate what is essential for product improvement from leftover operational residue that nobody remembered to clean up.

Prompts and Outputs Deserve Different Rules

Many teams treat all AI data as one category, but that is usually too blunt. Raw prompts may contain sensitive context, copied emails, internal notes, or customer fragments. Generated outputs may be safer to retain in some cases, especially when they become part of an approved business workflow. System logs may need a shorter window, while audit events may need a longer one.

Separating these categories makes the policy more practical. Instead of saying “keep AI data for 90 days,” a stronger rule might say that prompt bodies expire quickly, approved outputs inherit the retention of the destination system, and security-relevant audit records follow the organization’s existing control standards.
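To make that split concrete, here is a minimal Python sketch of per-category retention rules. The category names and the day counts are assumptions chosen for illustration, not recommendations, and `is_expired` is a hypothetical helper rather than part of any real platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical categories and windows, picked only to illustrate the idea.
# None means the record inherits the retention of its destination system.
RETENTION_WINDOWS = {
    "prompt_body": timedelta(days=7),     # assumed: prompt bodies expire quickly
    "system_log": timedelta(days=30),     # assumed: shorter operational window
    "audit_event": timedelta(days=365),   # assumed: follows existing control standards
    "approved_output": None,              # governed by the destination system
}


@dataclass
class Record:
    category: str
    created_at: datetime


def is_expired(record: Record, now: datetime) -> bool:
    """Return True when the record has outlived its category's window."""
    window = RETENTION_WINDOWS[record.category]
    if window is None:
        # Retention is decided elsewhere, so this policy never expires it.
        return False
    return now - record.created_at > window


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    old_prompt = Record("prompt_body", now - timedelta(days=10))
    print(is_expired(old_prompt, now))
```

An unknown category raises a `KeyError` here on purpose: a record type nobody classified should fail loudly instead of silently living forever.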

Retention Decisions Shape Security Exposure

Every extra day of stored AI interaction data extends the window in which that information can be misused, leaked, or swept into legal discovery nobody anticipated. A pilot that feels harmless in week one may become more sensitive after users realize it can answer real work questions and begin pasting in richer material.

Retention is therefore a security control, not just housekeeping. Shorter storage windows reduce blast radius. Clear deletion behavior reduces ambiguity during incident response. Defined storage locations make it easier to answer basic questions like who can read the data, what gets backed up, and whether the team can actually honor a delete request.

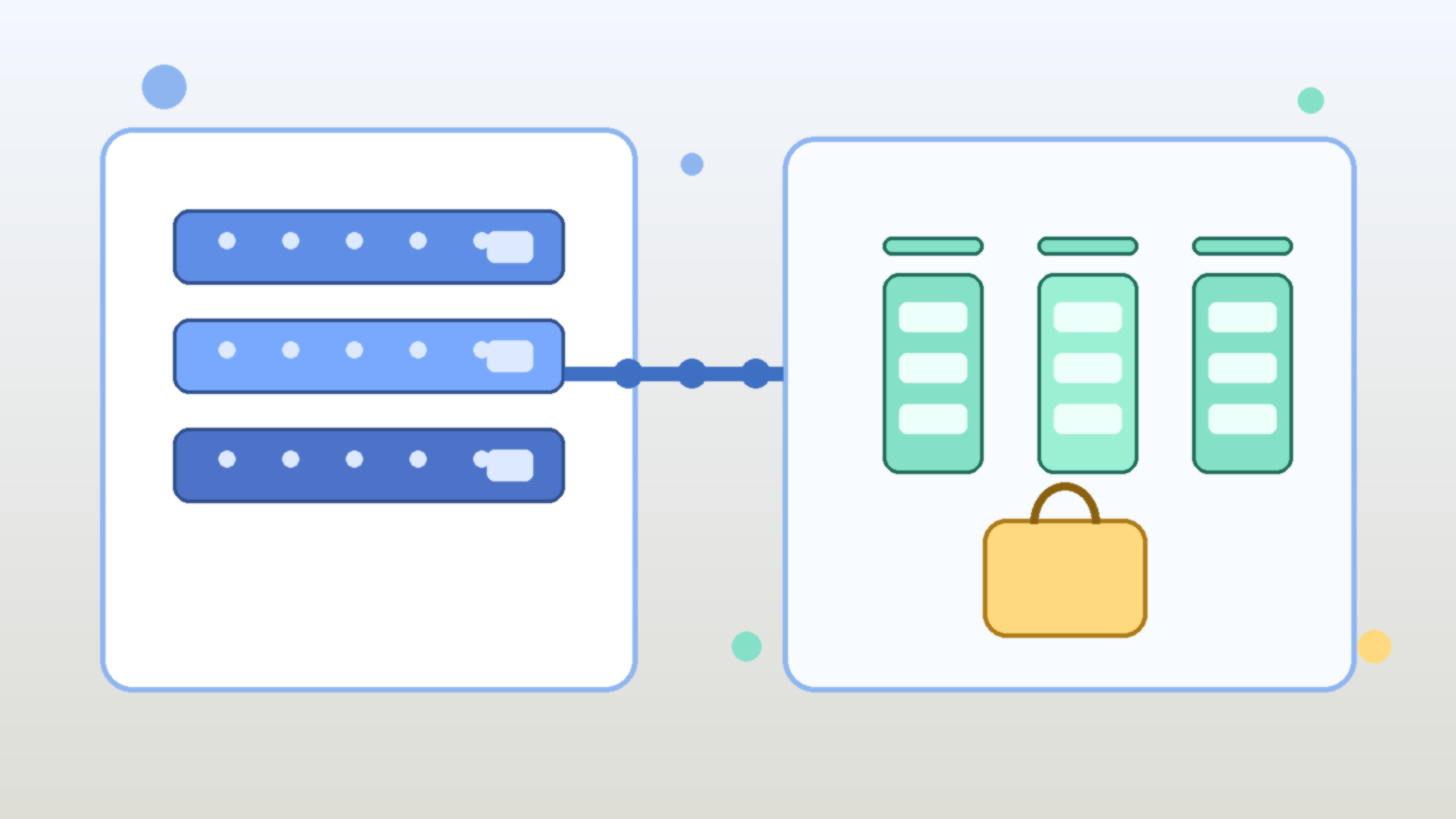

Vendors and Internal Systems Create Split Responsibility

AI pilots often span a vendor platform plus one or more internal systems. A team might use a hosted model, store logs in a cloud workspace, send analytics into another service, and archive approved outputs in a document repository. If retention is only defined in one layer, the overall policy is incomplete.

That is where teams get surprised. They disable one history feature and assume the data is gone, while another copy still exists in telemetry, exports, or downstream collaboration tools. A launch-ready retention policy should name each storage point clearly enough that operations and security teams can verify the behavior instead of guessing.
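A storage inventory along those lines could be as small as the following Python sketch. The storage point names echo the examples above, but the fields, the retention values, and the `unverified_points` helper are all hypothetical; a real inventory would be filled in from vendor documentation and internal configuration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class StoragePoint:
    name: str
    layer: str                     # "vendor" or "internal"
    retention_days: Optional[int]  # None means nobody has defined it yet
    deletion_verified: bool        # has someone actually confirmed deletion works?


def unverified_points(points: List[StoragePoint]) -> List[str]:
    """Names of storage points with no defined window or no verified deletion."""
    return [
        p.name
        for p in points
        if p.retention_days is None or not p.deletion_verified
    ]


# Illustrative entries only; the values are assumptions, not real settings.
INVENTORY = [
    StoragePoint("hosted model chat history", "vendor", 0, True),
    StoragePoint("cloud workspace logs", "internal", 30, True),
    StoragePoint("analytics telemetry", "internal", None, False),
    StoragePoint("document repository archive", "internal", 365, False),
]

if __name__ == "__main__":
    for name in unverified_points(INVENTORY):
        print(f"needs review: {name}")
```

The point of the `deletion_verified` flag is that a defined window is not enough. Someone has to confirm the data actually disappears when the window closes.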

A Good Pilot Policy Should Be Boring and Specific

The best retention policies are not dramatic. They are clear, narrow, and easy to execute. They define what data is stored, where it lives, how long it stays, who can access it, and what event triggers deletion or review. They also explain what the pilot should not accept, such as regulated records, source secrets, or customer data that has no business purpose in the test.

Specificity beats slogans here. “We take privacy seriously” does not help an engineer decide whether prompt logs should expire after seven days or ninety. A simple table in an internal design note, backed by actual configuration, is far more valuable than broad policy language nobody can operationalize.
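One way to keep the table and the configuration honest with each other is a small drift check. The Python sketch below assumes both are available as simple mappings from data category to retention days; the category names, the values, and the `find_drift` function are hypothetical stand-ins for whatever the design note and the deployed settings actually contain.

```python
from typing import Dict, Optional, Tuple

# Assumed contents of the design-note table (days of retention per category).
DOCUMENTED_POLICY: Dict[str, int] = {
    "prompt_logs": 7,
    "usage_logs": 30,
    "audit_events": 365,
}

# Assumed values read from the running configuration.
DEPLOYED_CONFIG: Dict[str, int] = {
    "prompt_logs": 90,
    "usage_logs": 30,
}


def find_drift(
    documented: Dict[str, int], deployed: Dict[str, int]
) -> Dict[str, Tuple[Optional[int], Optional[int]]]:
    """Map each mismatched category to (documented_days, deployed_days).

    A value of None means the category is missing on that side.
    """
    drift = {}
    for category in sorted(set(documented) | set(deployed)):
        doc_days = documented.get(category)
        dep_days = deployed.get(category)
        if doc_days != dep_days:
            drift[category] = (doc_days, dep_days)
    return drift


if __name__ == "__main__":
    for category, (doc_days, dep_days) in find_drift(
        DOCUMENTED_POLICY, DEPLOYED_CONFIG
    ).items():
        print(f"{category}: documented={doc_days} deployed={dep_days}")
```

A check like this can run on a schedule or in a deployment pipeline, so a gap between the written policy and the actual settings surfaces before an incident does.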

Final Takeaway

An AI pilot is not low risk just because it is temporary. Temporary projects often have the weakest controls because everyone assumes they will be cleaned up later. If the pilot is useful, later usually never arrives on its own.

That is why retention belongs in the launch checklist. Decide what will be stored, separate prompts from outputs, map vendor and internal copies, and set deletion rules early. Teams that do this before users pile in tend to move faster with fewer surprises once the pilot starts succeeding.
