Teams love to talk about what an AI agent can do, but production trouble usually starts with what the agent is allowed to do. An agent that reads dashboards, opens tickets, updates records, triggers workflows, and calls external tools can accumulate real operational power long before anyone formally acknowledges it.

That is why serious deployments need a permission budget before the agent ever touches production. A permission budget is a practical limit on what the system may read, write, trigger, approve, and expose by default. It forces the team to design around bounded authority instead of discovering the boundary after the first near miss.

Capability Growth Usually Outruns Governance

Most agent programs start with a narrow, reasonable use case. Maybe the first version summarizes alerts, drafts internal updates, or recommends next actions to a human operator. Then the obvious follow-up requests arrive. Can it reopen incidents automatically? Can it restart a failed job? Can it write back to the CRM? Can it call the cloud API directly when confidence is high?

Each one sounds efficient in isolation. Together, they create a system whose real authority is much broader than the original design. If the team never defines an explicit budget for access, production permissions expand through convenience and one-off exceptions instead of through deliberate architecture.

A Permission Budget Makes Access a Design Decision

Budgeting permissions sounds restrictive, but it actually speeds up healthy delivery. The team agrees on the categories of access the agent can have at its current stage, such as read-only telemetry, limited ticket creation, low-risk configuration reads, or a narrow set of workflow triggers. Everything else stays out of scope until the team can justify it.
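One way to make the budget concrete is to write it down as data instead of leaving it in a planning document. The sketch below is a minimal illustration, not a prescribed implementation: the `Access` categories, the `PermissionBudget` class, and the `PILOT_BUDGET` name are all hypothetical and would be replaced by whatever categories a team actually agrees on.

```python
from dataclasses import dataclass
from enum import Enum


class Access(Enum):
    """Hypothetical access categories; the names are illustrative only."""
    READ_TELEMETRY = "read_telemetry"
    CREATE_TICKET = "create_ticket"
    READ_LOW_RISK_CONFIG = "read_low_risk_config"
    TRIGGER_DIAGNOSTIC_WORKFLOW = "trigger_diagnostic_workflow"
    WRITE_CRM_RECORD = "write_crm_record"
    CALL_CLOUD_API = "call_cloud_api"


@dataclass(frozen=True)
class PermissionBudget:
    stage: str
    granted: frozenset

    def in_scope(self, requested: Access) -> bool:
        # Deny by default: anything not explicitly granted is out of scope.
        return requested in self.granted


# Assumed budget for an early release stage.
PILOT_BUDGET = PermissionBudget(
    stage="pilot",
    granted=frozenset({
        Access.READ_TELEMETRY,
        Access.CREATE_TICKET,
        Access.READ_LOW_RISK_CONFIG,
    }),
)
```

With this in place, a request such as "can it write back to the CRM?" becomes a lookup: `PILOT_BUDGET.in_scope(Access.WRITE_CRM_RECORD)` is false, so the request is a budget change to justify rather than a quick exception.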

That creates a cleaner operating model. Product owners know what automation is realistic. Security teams know what to review. Platform engineers know which credentials, roles, and tool connectors are truly required. Instead of debating every new capability from scratch, the budget becomes the reference point for whether a request belongs in the current release.

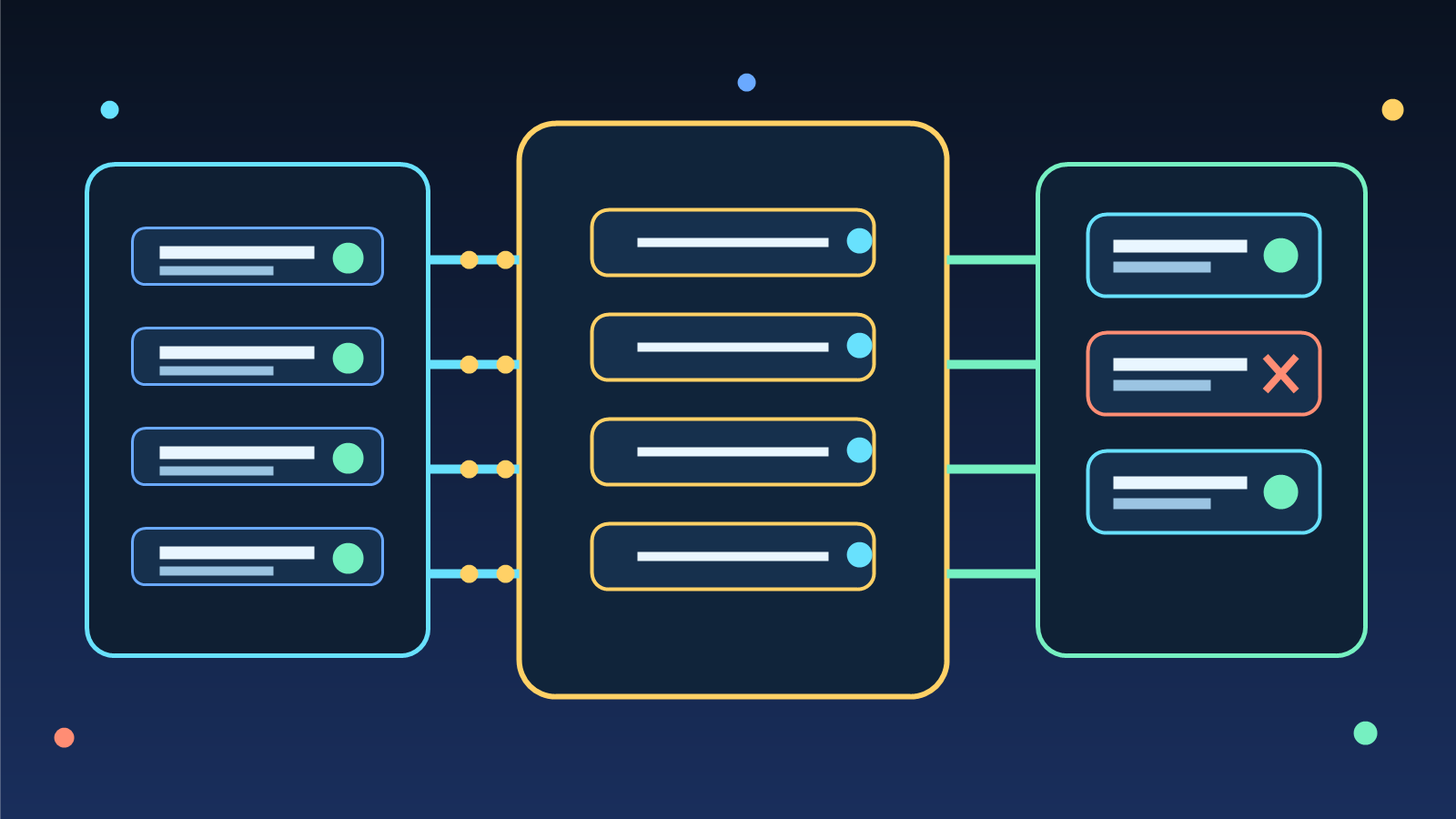

Read, Write, Trigger, and Approve Should Be Treated Differently

One reason agent permissions get messy is that teams bundle very different powers together. Reading a runbook is not the same as changing a firewall rule. Creating a draft support response is not the same as sending that response to a customer. Triggering a diagnostic workflow is not the same as approving a production change.

A useful permission budget breaks these powers apart. Read access should be scoped by data sensitivity. Write access should be limited by object type and blast radius. Trigger rights should be limited to reversible workflows where audit trails are strong. Approval rights should usually stay human-controlled unless the action is narrow, low-risk, and fully observable.
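The four powers can be checked by different rules rather than one shared "allowed" flag. The following is a rough sketch under assumed names: `Power`, `Request`, the sensitivity labels, and the writable object types are invented for illustration, and a real system would pull them from its own data classification and object model.

```python
from dataclasses import dataclass
from enum import Enum


class Power(Enum):
    READ = "read"
    WRITE = "write"
    TRIGGER = "trigger"
    APPROVE = "approve"


@dataclass(frozen=True)
class Request:
    power: Power
    sensitivity: str = "internal"  # READ: data sensitivity label
    object_type: str = ""          # WRITE: kind of object being changed
    reversible: bool = False       # TRIGGER: can the workflow be undone?
    audited: bool = False          # TRIGGER: does it leave a strong audit trail?


# Hypothetical limits for this sketch.
READABLE_SENSITIVITY = {"public", "internal"}
WRITABLE_OBJECT_TYPES = {"ticket", "draft_reply"}


def is_allowed(req: Request) -> bool:
    if req.power is Power.READ:
        return req.sensitivity in READABLE_SENSITIVITY
    if req.power is Power.WRITE:
        return req.object_type in WRITABLE_OBJECT_TYPES
    if req.power is Power.TRIGGER:
        return req.reversible and req.audited
    # APPROVE stays human-controlled in this sketch.
    return False
```

Keeping one branch per power makes it harder for a new write capability to ride in on an existing read grant.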

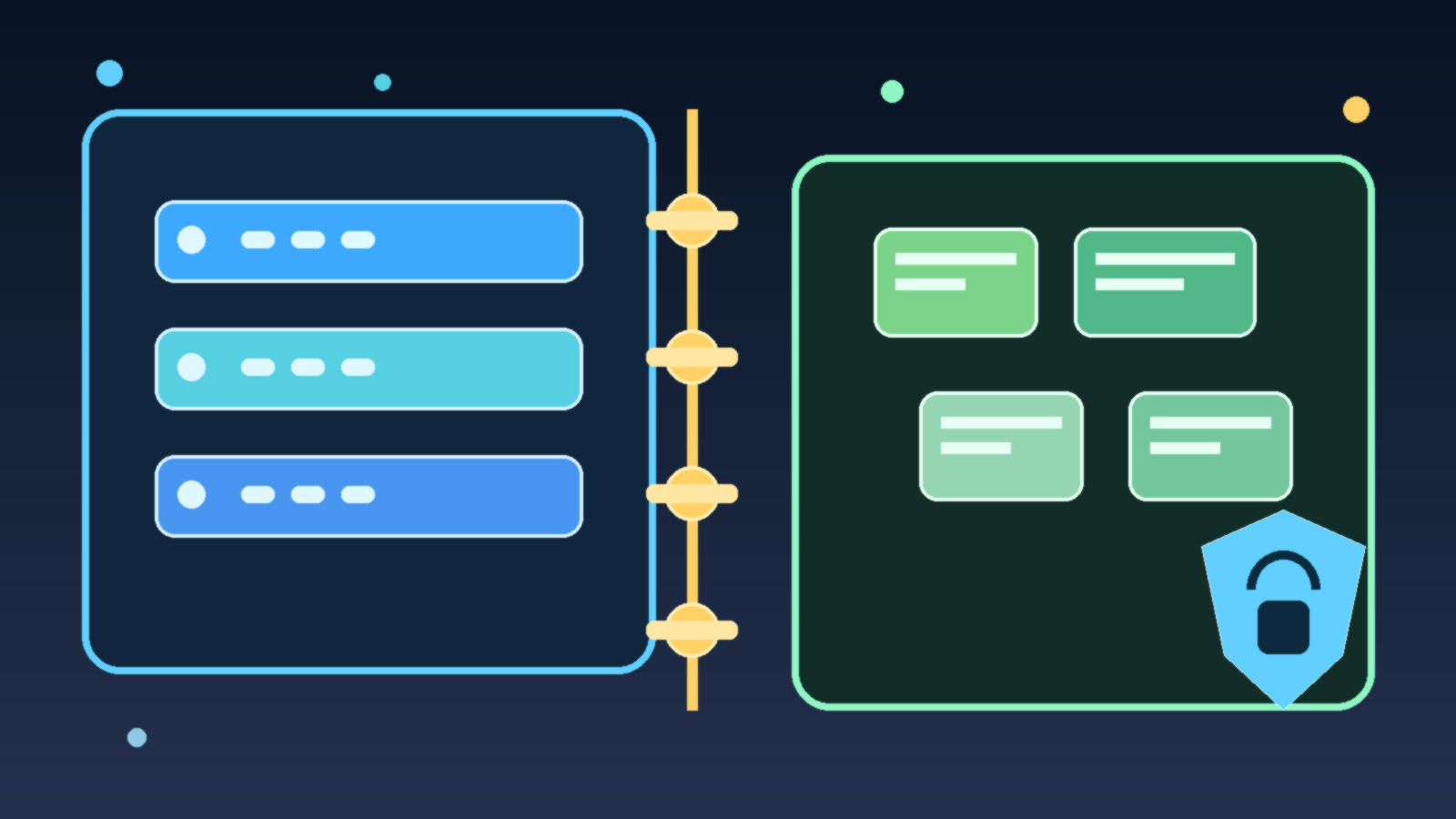

Budgets Need Technical Guardrails, Not Just Policy Language

A slide deck that says “least privilege” is not a control. The budget needs technical enforcement. That can mean separate service principals for separate tools, environment-specific credentials, allowlisted actions, scoped APIs, row-level filtering, approval gates, and time-bound tokens instead of long-lived secrets.
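Three of those controls (allowlisted actions, environment-specific credentials, and time-bound tokens) can be combined in a single check. This is a simplified in-process sketch, not a real identity provider: `ScopedToken`, `issue_token`, and the action names are hypothetical, and in practice the token would be issued and verified by the platform's own credential service.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedToken:
    principal: str
    environment: str
    allowed_actions: frozenset
    expires_at: float  # Unix timestamp

    def permits(self, action, environment, now=None):
        current = time.time() if now is None else now
        return (
            current < self.expires_at                # time-bound
            and environment == self.environment      # environment-specific
            and action in self.allowed_actions       # allowlisted
        )


def issue_token(principal, environment, actions, ttl_seconds, now=None):
    # Short TTLs replace long-lived secrets in this sketch.
    issued_at = time.time() if now is None else now
    return ScopedToken(
        principal=principal,
        environment=environment,
        allowed_actions=frozenset(actions),
        expires_at=issued_at + ttl_seconds,
    )
```

The optional `now` argument exists only so the expiry logic can be tested deterministically; callers would normally omit it.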

It also helps to isolate the dangerous paths. If an agent can both observe a problem and execute the fix, the execution path should be narrower, more heavily logged, and easier to disable than the observation path. Production systems fail more safely when the powerful operations are few, explicit, and easy to audit.
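A narrow execution path can be as simple as a gate that every state-changing call must pass through. The sketch below assumes a hypothetical `ExecutionGate` class with an in-memory log; a real deployment would write to a durable audit sink and back the kill switch with a feature flag or configuration service.

```python
class ExecutionGate:
    """Narrow, logged, and disableable path for state-changing operations."""

    def __init__(self, allowed_operations):
        self._allowed = frozenset(allowed_operations)
        self._enabled = True
        self.log = []  # in-memory stand-in for a real audit sink

    def disable(self):
        # Kill switch: blocks every execution without touching read paths.
        self._enabled = False

    def run(self, operation, handler):
        permitted = self._enabled and operation in self._allowed
        # Record the decision before anything runs, allowed or not.
        self.log.append((operation, "allowed" if permitted else "blocked"))
        if not permitted:
            raise PermissionError(f"operation not permitted: {operation}")
        return handler()
```

Because observation code never goes through the gate, calling `disable()` stops fixes from being executed while the agent can still read and report.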

Escalation Rules Matter More Than Confidence Scores

Teams often focus on model confidence when deciding whether an agent should act. Confidence has value, but it is a weak substitute for escalation design. A highly confident agent can still act on stale context, incomplete data, or a flawed tool result. A permission budget works better when it is paired with rules for when the system must stop, ask, or hand off.

For example, an agent may be allowed to create a draft remediation plan, collect diagnostics, or execute a rollback in a sandbox. The moment it touches customer-facing settings, identity boundaries, billing records, or irreversible actions, the workflow should escalate to a human. That threshold should exist because of risk, not because the confidence score fell below an arbitrary number.
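An escalation rule of this kind can be written so that the confidence score never enters the decision. The sketch below is illustrative only: the risk tags, `ProposedAction`, and `decide` are assumed names, and the tag set simply mirrors the categories listed above.

```python
from dataclasses import dataclass

# Risk categories that always require a human, per the rule described above.
ESCALATION_TAGS = frozenset({"customer_facing", "identity", "billing", "irreversible"})


@dataclass(frozen=True)
class ProposedAction:
    name: str
    tags: frozenset
    confidence: float  # carried for logging; deliberately unused in decide()


def decide(action: ProposedAction) -> str:
    # The threshold is defined by risk, not by the model's confidence.
    if action.tags & ESCALATION_TAGS:
        return "escalate_to_human"
    return "proceed"
```

In this sketch, a billing change proposed with 0.99 confidence still escalates, while a sandbox rollback proposed with 0.60 confidence proceeds, because only the tags are consulted.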

Auditability Is Part of the Budget

An organization does not really control an agent if it cannot reconstruct what the agent read, what tools it invoked, what it changed, and why the action appeared allowed at the time. Permission budgets should therefore include logging expectations. If an action cannot be tied back to a request, a credential, a tool call, and a resulting state change, it probably should not be production-eligible yet.
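That traceability test can be expressed as a check on the audit record itself. The following is a minimal sketch with assumed field names (`request_id`, `credential_id`, `tool_call_id`, `state_change`); the actual fields depend on what the organization's logging pipeline captures.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    request_id: Optional[str] = None     # what asked for the action
    credential_id: Optional[str] = None  # which identity performed it
    tool_call_id: Optional[str] = None   # which tool invocation carried it out
    state_change: Optional[str] = None   # what changed as a result


def missing_links(record: AuditRecord) -> list:
    # Any empty field is a gap in the chain from request to state change.
    return [f.name for f in fields(record) if not getattr(record, f.name)]


def is_production_eligible(record: AuditRecord) -> bool:
    return not missing_links(record)
```

Running `missing_links` against sample records from a staging run is a quick way to see which action types are not yet traceable end to end.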

This is especially important when multiple systems are involved. AI platforms, orchestration layers, cloud roles, and downstream applications may each record a different fragment of the story. The budget conversation should include how those fragments are correlated during reviews, incident response, and postmortems.
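One common way to join those fragments is a shared correlation ID that every layer attaches to its log entries. The sketch below assumes each fragment is a dictionary with hypothetical `correlation_id`, `source`, `timestamp`, and `event` keys; real log schemas will differ, and clocks across systems may need reconciling before timestamps can be compared.

```python
from collections import defaultdict


def correlate(fragments):
    """Group log fragments by correlation ID and order each group by time."""
    timeline = defaultdict(list)
    for fragment in fragments:
        timeline[fragment["correlation_id"]].append(fragment)
    for events in timeline.values():
        events.sort(key=lambda e: e["timestamp"])
    return dict(timeline)


# Fragments as they might arrive from different systems, out of order.
fragments = [
    {"correlation_id": "c1", "source": "cloud_role", "timestamp": 3, "event": "role assumed"},
    {"correlation_id": "c1", "source": "ai_platform", "timestamp": 1, "event": "tool call requested"},
    {"correlation_id": "c2", "source": "ai_platform", "timestamp": 2, "event": "summary drafted"},
    {"correlation_id": "c1", "source": "orchestrator", "timestamp": 2, "event": "workflow dispatched"},
]
timeline = correlate(fragments)
```

If a layer cannot carry the ID through, that gap is worth surfacing in the budget conversation before the action it covers is approved for production.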

Start Small Enough That You Can Expand Intentionally

The best early agent deployments are usually a little boring. They summarize, classify, draft, collect, and recommend before they mutate production state. That is not a failure of ambition. It is a way to build trust with evidence. Once the team sees the agent behaving well under real conditions, it can expand the budget one category at a time with stronger tests and better telemetry.

That expansion path matters because production access is sticky. Once a workflow depends on a broad permission set, it becomes politically and technically hard to narrow it later. Starting with a tight budget is easier than trying to claw back authority after the organization has grown comfortable with risky automation.

Final Takeaway

If an AI agent is heading toward production, the right question is not just whether it works. The harder and more useful question is what authority it should be allowed to accumulate at this stage. A permission budget gives teams a shared language for answering that question before convenience becomes policy.

Agents can be powerful without being over-privileged. In most organizations, that is the difference between an automation program that matures safely and one that spends the next year explaining preventable exceptions.