Teams love the idea of AI provider portability. It sounds prudent to say a gateway can route between multiple model vendors, fail over during an outage, and keep applications running without a major rewrite. That flexibility is useful, but too many initiatives stop at the routing story. They wire up model endpoints, prove that prompts can move from one provider to another, and declare the architecture resilient.

The problem is that a traffic switch is not the same thing as a control plane. If one provider path has prompt logging disabled, another path stores request history longer, and a third path allows broader plugin or tool access, then failover can quietly change the security and compliance posture of the application. The business thinks it bought resilience. In practice, it may have bought inconsistent policy enforcement that only shows up when something goes wrong.

Routing continuity is only one part of operational continuity

Engineering teams often design AI failover around availability. If provider A slows down or returns errors, route requests to provider B. That is a reasonable starting point, but it is incomplete. An AI platform also has to preserve the controls around those requests, not just the success rate of the API call.

That means asking harder questions before the failover demo looks impressive. Will the alternate provider keep data in the same region? Are the same retention settings available? Does the backup path expose the same model family to the same users, or will it suddenly allow features that the primary route blocks? If the answers differ across providers, then the organization is not really failing over one governed service. It is switching between services with different rules.

An architecture whose resilience story ignores policy equivalence looks mature in a slide deck and fragile during an audit.

Define the nonnegotiable controls before you define the fallback order

The cleanest way to avoid drift is to decide what must stay true no matter where the request goes. Those controls should be documented before anyone configures weighted routing or health-based failover.

For many organizations, the nonnegotiables include data residency, retention limits, request and response logging behavior, customer-managed access patterns, content filtering expectations, and whether tool or retrieval access is allowed. Some teams also need prompt redaction, approval gates for sensitive workloads, or separate policies for internal versus customer-facing use cases.

Once those controls are defined, each provider route can be evaluated against the same checklist. A provider that fails an important requirement may still be useful for isolated experiments, but it should not sit in the automatic production failover chain. That distinction matters. Not every technically reachable model endpoint deserves equal operational trust.
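As a rough illustration of evaluating every route against one checklist, here is a minimal Python sketch. The field names, route names, regions, and thresholds are all hypothetical; a real list of nonnegotiables would come from the organization's own policy documents.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RouteControls:
    """Controls observed on one provider route (illustrative fields only)."""
    name: str
    region: str
    retention_days: int
    prompt_logging: bool
    tool_access: bool


@dataclass(frozen=True)
class Nonnegotiables:
    """What must stay true no matter where the request goes."""
    allowed_regions: frozenset
    max_retention_days: int
    allow_prompt_logging: bool
    allow_tool_access: bool


def violations(route, policy):
    """Return the names of the controls this route fails."""
    found = []
    if route.region not in policy.allowed_regions:
        found.append("region")
    if route.retention_days > policy.max_retention_days:
        found.append("retention")
    if route.prompt_logging and not policy.allow_prompt_logging:
        found.append("prompt_logging")
    if route.tool_access and not policy.allow_tool_access:
        found.append("tool_access")
    return found


def failover_chain(routes, policy):
    """Keep only routes with no violations, preserving the preferred order."""
    return [r.name for r in routes if not violations(r, policy)]


policy = Nonnegotiables(frozenset({"eu-west"}), 30, False, False)
routes = [
    RouteControls("primary", "eu-west", 30, False, False),
    RouteControls("backup-a", "eu-west", 90, False, False),
    RouteControls("backup-b", "us-east", 14, False, True),
    RouteControls("backup-c", "eu-west", 14, False, False),
]
chain = failover_chain(routes, policy)
print(chain)
```

In this sketch, `backup-a` and `backup-b` remain reachable but never enter the automatic chain, because each breaks at least one control.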

The hidden problem is often metadata, not just model output

When teams compare providers, they usually focus on model quality, token pricing, and latency. Those matter, but governance problems often appear in the surrounding metadata. One provider may log prompts for debugging. Another may keep richer request traces. A third may attach different identifiers to sessions, users, or tool calls.

That difference can create a mess for retention and incident response. Imagine a regulated workflow where the primary path keeps minimal logs for a short period, but the failover path stores additional request context for longer because that is how the vendor's debugging feature works. The application may continue serving users correctly while silently creating a broader data footprint than the risk team approved.

That is why provider reviews should include the entire data path: prompts, completions, cached content, system instructions, tool outputs, moderation events, and operational logs. The model response is only one part of the record.
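One way to make that comparison concrete is to record retention per record type for each path and look at where the failover path keeps more. The sketch below assumes a simple "retention in days" figure per record type, with made-up values; real provider behavior is usually more nuanced than a single number.

```python
# Record types taken from the data path described above.
RECORD_TYPES = [
    "prompts", "completions", "cached_content", "system_instructions",
    "tool_outputs", "moderation_events", "operational_logs",
]

# Retention in days per record type; a missing key means "not stored".
# All numbers are invented for illustration.
primary = {"prompts": 0, "completions": 0, "operational_logs": 7}
failover = {"prompts": 30, "completions": 0, "operational_logs": 30, "tool_outputs": 30}


def footprint_growth(primary_path, failover_path):
    """Return record types the failover path keeps longer than the primary path."""
    growth = {}
    for record in RECORD_TYPES:
        before = primary_path.get(record, 0)
        after = failover_path.get(record, 0)
        if after > before:
            growth[record] = (before, after)
    return growth


growth = footprint_growth(primary, failover)
for record, (before, after) in growth.items():
    print(f"{record}: {before} -> {after} days")
```

Anything that shows up in `growth` is data the failover path holds longer than the approved baseline, even when the model responses look identical.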

Treat failover eligibility like a policy certification

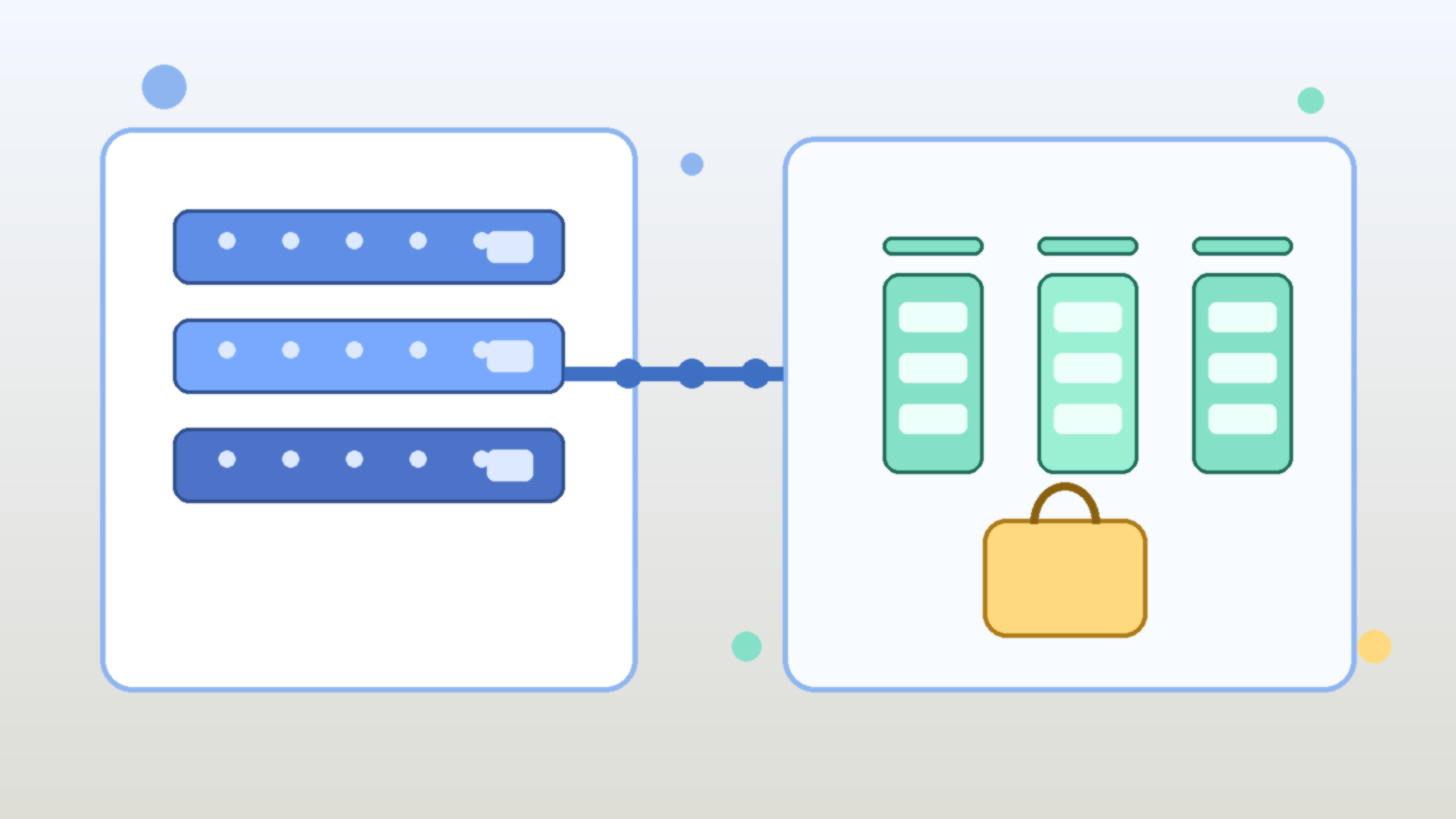

A strong pattern is to certify each provider route before it becomes eligible for automatic failover. Certification should be more than a connectivity test. It should prove that the route meets the minimum control standard for the workload it may serve.

For example, a low-risk internal drafting assistant may allow multiple providers with roughly similar settings. A customer support assistant handling sensitive account context may have a narrower list because residency, retention, and review requirements are stricter. The point is not to force every workload into the same vendor strategy. The point is to prevent the gateway from making governance decisions implicitly during an outage.

A practical certification review should cover:

- allowed data types for the route
- approved regions and hosting boundaries
- retention and logging behavior
- moderation and safety control parity
- tool, plugin, or retrieval permission differences
- incident-response visibility and auditability
- owner accountability for exceptions and renewals

That list is not glamorous, but it is far more useful than claiming portability without defining what portable means.
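The review items above can be captured as a certification record that the gateway checks before treating a route as failover-eligible. This is a sketch under assumptions: the `Certification` class, its fields, and the idea of an expiry date standing in for "renewals" are illustrative, not a description of any particular product.

```python
from dataclasses import dataclass
from datetime import date

# One key per item in the certification review list.
CHECKLIST = (
    "data_types", "regions", "retention_logging", "moderation_parity",
    "tool_permissions", "incident_visibility", "owner_accountability",
)


@dataclass
class Certification:
    route: str
    workload: str   # a certification covers one workload, not the whole route
    owner: str      # who is accountable for exceptions and renewals
    expires: date   # certifications lapse unless renewed
    passed: dict    # checklist item -> True/False

    def is_valid(self, workload, today):
        """Valid only for the certified workload, before expiry, with every item passed."""
        return (
            self.workload == workload
            and today <= self.expires
            and bool(self.owner)
            and all(self.passed.get(item, False) for item in CHECKLIST)
        )


full = Certification(
    "backup-a", "support-assistant", "platform-security",
    date(2025, 12, 31), {item: True for item in CHECKLIST},
)
partial = Certification(
    "backup-b", "support-assistant", "platform-security",
    date(2025, 12, 31), {**{item: True for item in CHECKLIST}, "moderation_parity": False},
)

print(full.is_valid("support-assistant", date(2025, 6, 1)))
print(partial.is_valid("support-assistant", date(2025, 6, 1)))
```

Tying the record to a single workload keeps a route certified for a low-risk drafting assistant from silently becoming a fallback for a stricter one.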

Separate failover for availability from failover for policy exceptions

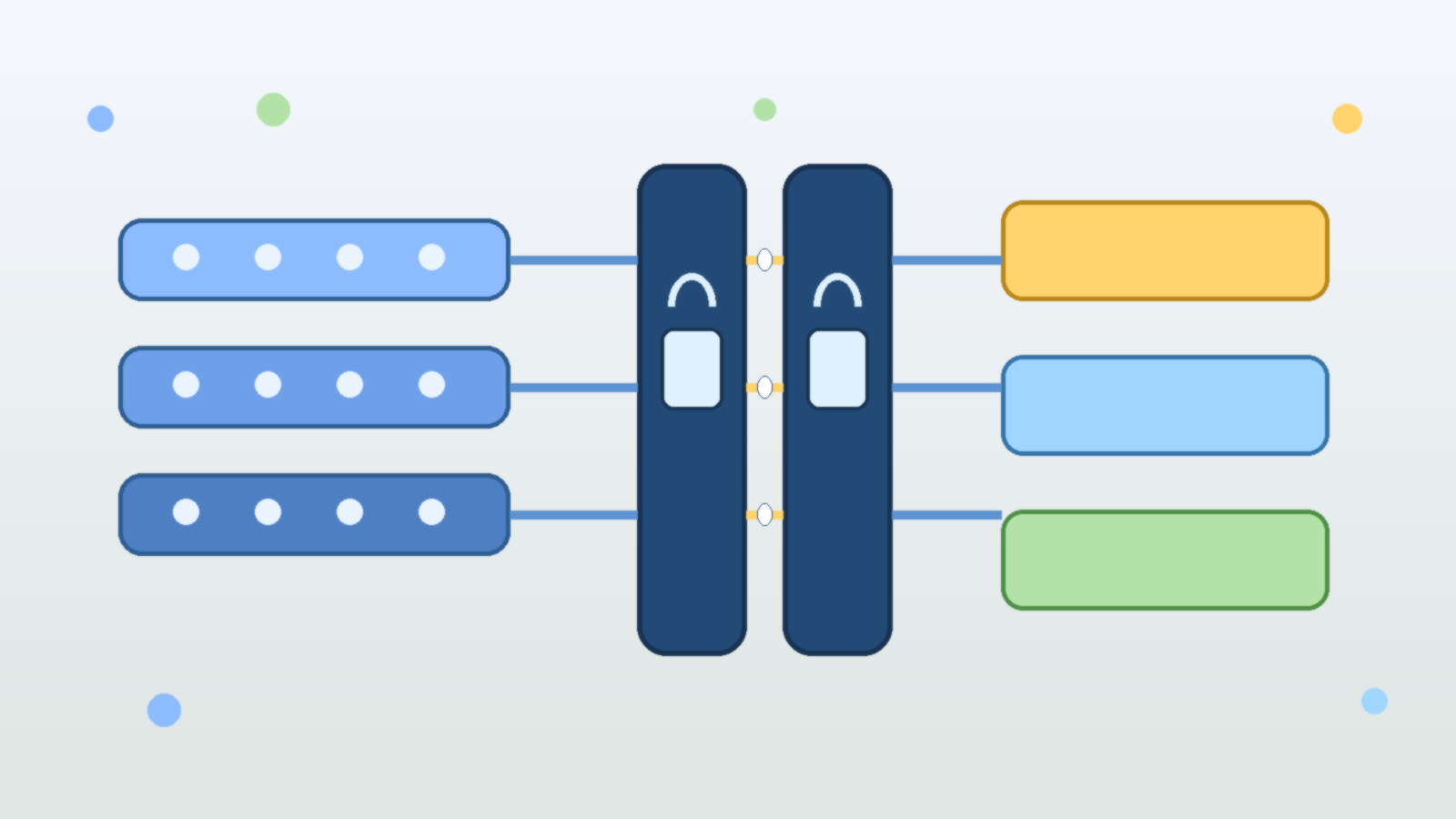

Another common mistake is bundling every exception into the same routing mechanism. A team may say, "If the primary path fails, use the backup provider," while also using that same backup path for experiments that need broader features. That sounds efficient, but it creates confusion because the exact same route serves two different governance purposes.

A better design separates emergency continuity from deliberate exceptions. The continuity route should be boring and predictable. It exists to preserve service under stress while staying within the approved policy envelope. Exception routes should be explicit, approved, and usually manual or narrowly scoped.

This separation makes reviews much easier. Auditors and security teams can understand which paths are part of the standard operating model and which ones exist for temporary or special-case use. It also reduces the temptation to leave a broad backup path permanently enabled just because it helped once during a migration.
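A small sketch of that separation, assuming each route carries an explicit purpose tag. The `Purpose` enum, route names, and workload names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    CONTINUITY = "continuity"  # boring, predictable, inside the approved envelope
    EXCEPTION = "exception"    # explicit, approved, narrowly scoped


@dataclass(frozen=True)
class Route:
    name: str
    purpose: Purpose
    # Workloads holding an explicit exception approval (empty for continuity routes).
    approved_for: frozenset = frozenset()


def automatic_failover_targets(routes):
    """Only continuity routes are ever chosen automatically."""
    return [r.name for r in routes if r.purpose is Purpose.CONTINUITY]


def can_use_exception(route, workload):
    """Exception routes require a per-workload approval and are never automatic."""
    return route.purpose is Purpose.EXCEPTION and workload in route.approved_for


routes = [
    Route("backup-standard", Purpose.CONTINUITY),
    Route("backup-broad-tools", Purpose.EXCEPTION, frozenset({"research-sandbox"})),
]
targets = automatic_failover_targets(routes)
print(targets)
```

Because the purpose is a property of the route rather than a convention, a reviewer can list the standard operating paths with one filter.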

Test the policy outcome, not just the failover event

Most failover exercises are too shallow. Teams simulate a provider outage, verify that traffic moves, and stop there. That test proves only that routing works. It does not prove that the routed traffic still behaves within policy.

A better exercise inspects what changed after failover. Did the logs land in the expected place? Did the same content controls trigger? Did the same headers, identities, and approval gates apply? Did the same alerts fire? Could the security team still reconstruct the transaction path afterward?

Those are the details that separate operational resilience from operational surprise. If nobody checks them during testing, the organization learns about control drift during a real incident, which is exactly when people are least equipped to reason carefully.
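A failover drill can record the same observations before and after the switch and report what differs. The sketch below assumes those observations have already been collected by whatever tooling the team uses; the `Observation` fields mirror the questions above and the values are invented.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Observation:
    """What the drill observed for one test request on one route."""
    log_destination: str
    content_filter_triggered: bool
    identity_header_present: bool
    approval_gate_applied: bool
    alert_fired: bool
    trace_reconstructable: bool


def policy_drift(before, after):
    """Return every observed field whose value changed after failover."""
    b, a = asdict(before), asdict(after)
    return {key: (b[key], a[key]) for key in b if b[key] != a[key]}


before = Observation("siem-eu", True, True, True, True, True)
after = Observation("vendor-console", True, True, False, True, False)

drift = policy_drift(before, after)
for field, (old, new) in drift.items():
    print(f"{field}: {old!r} -> {new!r}")
```

An empty result is the pass condition. Traffic moving successfully while `drift` is non-empty is exactly the case a routing-only test would miss.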

Build provider portability as a governance feature, not just an engineering feature

Provider portability is worth having. No serious platform team wants a brittle single-vendor dependency for critical AI workflows. But portability should be treated as a governance feature as much as an engineering one.

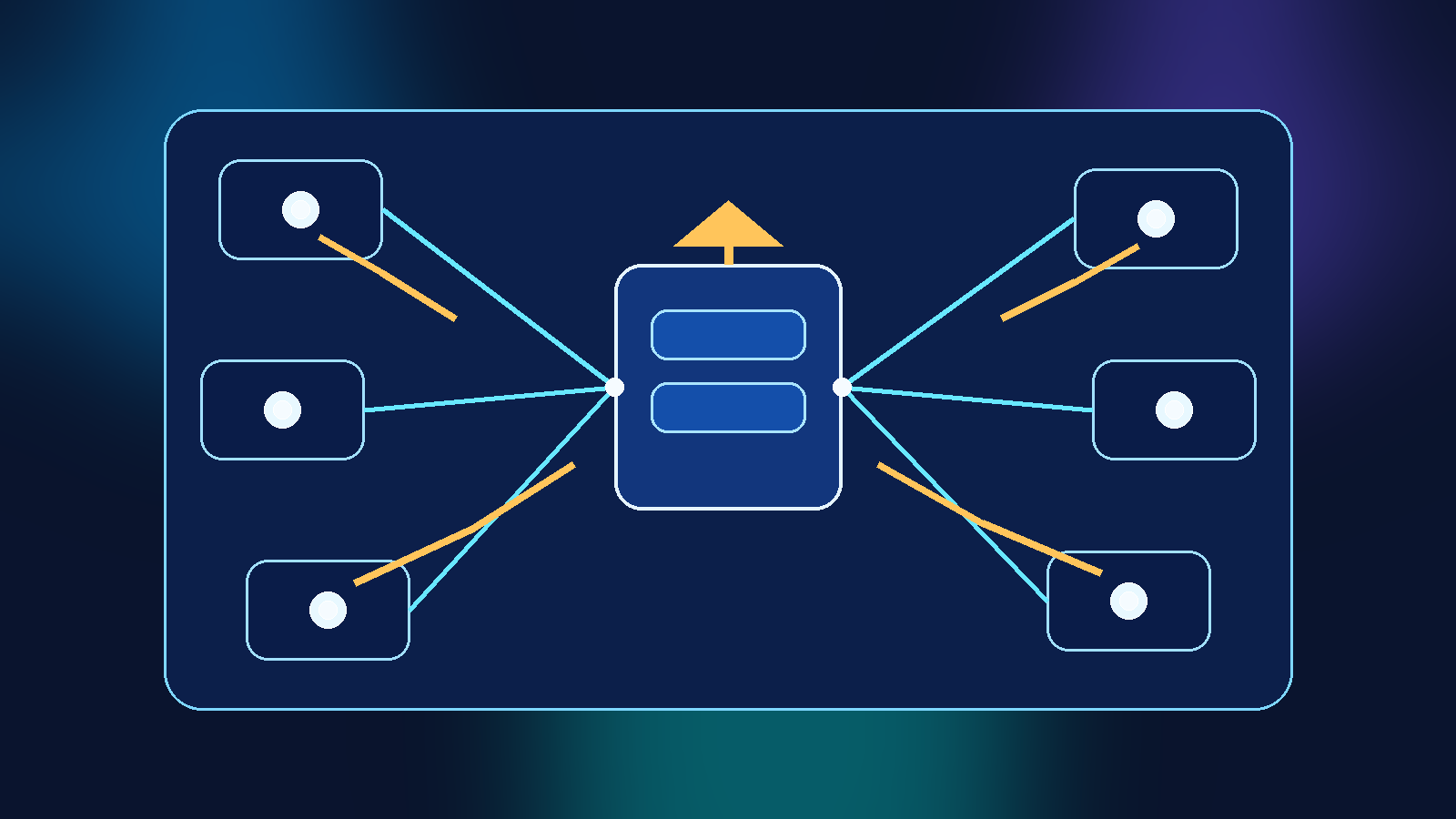

That means the gateway should carry policy with the request instead of assuming every endpoint is interchangeable. Route selection should consider workload classification, approved regions, tool access limits, logging rules, and exception status. If the platform cannot preserve those conditions automatically, then failover should narrow to the routes that can.
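To show what "carrying policy with the request" might look like at selection time, here is a minimal sketch. The `RequestPolicy` and `GatewayRoute` types and every field on them are assumptions made for illustration; the point is that health alone never decides the route.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestPolicy:
    """Policy that travels with the request (hypothetical fields)."""
    workload_class: str
    approved_regions: frozenset
    tools_allowed: bool
    prompt_logging_allowed: bool


@dataclass(frozen=True)
class GatewayRoute:
    name: str
    region: str
    exposes_tools: bool
    logs_prompts: bool
    certified_classes: frozenset
    is_exception: bool = False
    healthy: bool = True


def satisfies(route, policy):
    """True when the route stays inside the policy attached to the request."""
    return (
        route.region in policy.approved_regions
        and policy.workload_class in route.certified_classes
        and (policy.tools_allowed or not route.exposes_tools)
        and (policy.prompt_logging_allowed or not route.logs_prompts)
    )


def select_route(policy, routes):
    """First healthy, non-exception route that satisfies the policy, else None."""
    for route in routes:
        if route.healthy and not route.is_exception and satisfies(route, policy):
            return route.name
    return None  # fail closed rather than widen the policy envelope


support = frozenset({"customer-support"})
policy = RequestPolicy("customer-support", frozenset({"eu-west"}), False, False)
routes = [
    GatewayRoute("primary", "eu-west", False, False, support, healthy=False),
    GatewayRoute("backup-a", "us-east", False, False, support),
    GatewayRoute("backup-b", "eu-west", True, False, support),
    GatewayRoute("backup-c", "eu-west", False, False, support),
]
chosen = select_route(policy, routes)
print(chosen)
```

Returning `None` when nothing qualifies is a deliberate choice in this sketch: the failover set narrows to the routes that can preserve the conditions, and an empty set means the request is not routed at all.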

In other words, the best AI gateway is not the one with the most model connectors. It is the one that can switch paths without changing the organization’s risk posture by accident.

Start with one workload and prove policy equivalence end to end

Teams do not need to solve this across every application at once. Start with one workload that matters, map the control requirements, and compare the primary and backup provider paths in a disciplined way. Document what is truly equivalent, what is merely similar, and what requires an exception.
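The three-way split can be recorded mechanically once the control values for both paths are written down. In this sketch, "similar" is given a deliberately narrow, assumed meaning: the values differ, but that exact difference appears in a documented list of accepted differences. Control names and values are illustrative.

```python
def classify(primary, backup, accepted_differences):
    """Label each control as equivalent, similar, or requiring an exception."""
    result = {}
    for control, expected in primary.items():
        actual = backup.get(control)
        if actual == expected:
            result[control] = "equivalent"
        elif (control, expected, actual) in accepted_differences:
            result[control] = "similar"
        else:
            result[control] = "requires exception"
    return result


primary = {"region": "eu-west", "retention_days": 30, "prompt_logging": False, "tool_access": False}
backup = {"region": "eu-west", "retention_days": 14, "prompt_logging": False, "tool_access": True}

# Differences someone has reviewed and written down as acceptable.
accepted = {("retention_days", 30, 14)}

report = classify(primary, backup, accepted)
for control, label in report.items():
    print(f"{control}: {label}")
```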

That exercise usually reveals the real maturity of the platform. Sometimes the backup path is not ready for automatic failover yet. Sometimes the organization needs better logging normalization or tighter route-level policy tags. Sometimes the architecture is already in decent shape and simply needs clearer documentation.

Either way, the result is useful. AI gateway failover becomes a conscious operating model instead of a comforting but vague promise. That is the difference between resilience you can defend and resilience you only hope will hold up when the primary provider goes dark.