Teams usually adopt AI safety controls with the right intent and the wrong operating model. They turn on filtering, add a human review step for anything that looks uncertain, and assume the process will stay manageable. Then the first popular internal copilot launches, false positives pile up, and reviewers become the slowest part of the system.

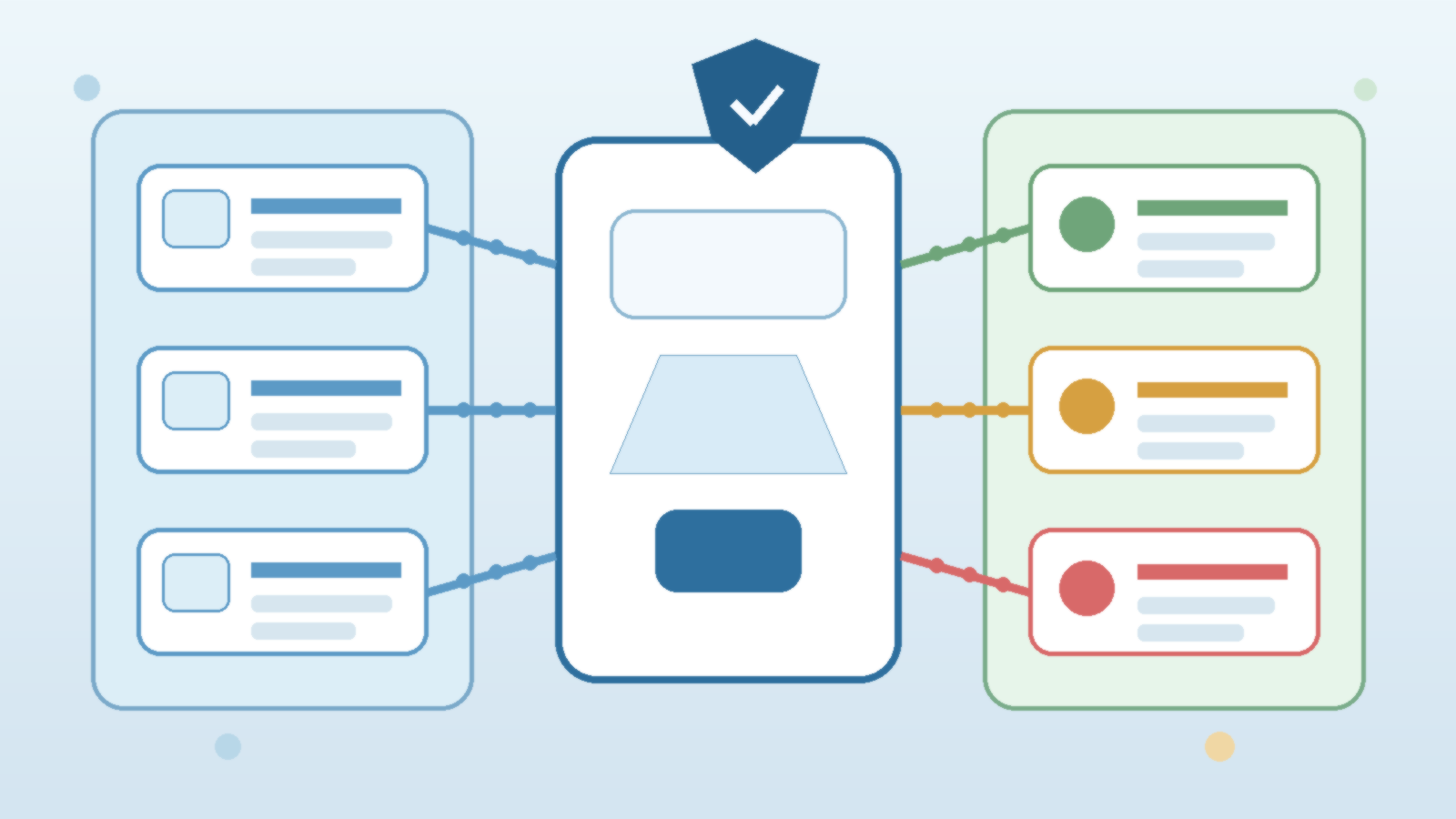

Azure AI Content Safety can help, but only if you design around triage rather than treating moderation as a single yes-or-no decision. The goal is to stop genuinely risky content, route ambiguous cases intelligently, and keep low-risk traffic moving without training users to hate the platform. That means thinking about thresholds, context, ownership, and workflow design before the first review queue appears.

Start With Risk Tiers Instead of One Global Moderation Rule

Not every AI workload deserves the same moderation posture. An internal summarization tool for policy documents is not the same as a public-facing assistant that lets users upload free-form content. If both applications inherit one shared threshold and one identical escalation path, you will either over-block the safer workload or under-govern the riskier one.

A better pattern is to define a few risk tiers up front. Low-risk internal tools can rely mostly on automation with minimal human review. Medium-risk tools may need selective escalation for certain categories or confidence bands. High-risk workflows may require stronger prompt restrictions, richer logging, and explicit operational ownership. Azure AI Content Safety becomes more useful when it supports a portfolio of moderation profiles instead of one rigid default.
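One way to make the tiers concrete is a small table of moderation profiles that each application looks up at request time. The sketch below is illustrative only: the tier names, threshold numbers, owner labels, and application IDs are all hypothetical, and the thresholds assume an integer harm score where higher means more severe.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class ModerationProfile:
    tier: RiskTier
    review_at: int         # score at or above which a human reviews the item
    block_at: int          # score at or above which the item is blocked outright
    log_full_prompt: bool  # whether the full prompt is retained for audit
    owner: str             # who is accountable for exceptions in this tier


# Hypothetical thresholds; tune them per workload from real review outcomes.
PROFILES = {
    RiskTier.LOW: ModerationProfile(RiskTier.LOW, 6, 6, False, "platform-team"),
    RiskTier.MEDIUM: ModerationProfile(RiskTier.MEDIUM, 4, 6, False, "platform-team"),
    RiskTier.HIGH: ModerationProfile(RiskTier.HIGH, 2, 4, True, "safety-rotation"),
}

# Hypothetical application registry mapping each app to its tier.
APP_TIERS = {
    "policy-summarizer": RiskTier.LOW,
    "public-assistant": RiskTier.HIGH,
}


def profile_for(app_id: str) -> ModerationProfile:
    """Return the profile for an app; unregistered apps get the strictest tier."""
    return PROFILES[APP_TIERS.get(app_id, RiskTier.HIGH)]
```

Defaulting unknown applications to the strictest tier is a design choice in this sketch: a new workload has to be classified deliberately before it receives a more permissive profile.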

Use Confidence Bands to Decide What Really Needs Human Attention

One of the easiest ways to create an endless review queue is to send every flagged request to a person. That feels safe on day one, but it scales badly and usually produces a backlog of harmless edge cases. Reviewers end up spending their time on content that was only mildly ambiguous while the business starts pressuring the platform team to relax controls.

Confidence bands are a more practical approach. Content that the classifier rates as clearly harmful can be blocked automatically. Content that it rates as clearly benign can proceed with logging. The middle band, where the signal is genuinely uncertain, is where human review or stronger fallback handling belongs. This keeps reviewers focused on the cases where judgment actually matters and stops the moderation system from becoming an expensive second inbox.
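The banding logic itself can be very small. The sketch below assumes the moderation signal arrives as a per-category integer score where higher means more likely harmful (Azure AI Content Safety reports per-category severity levels, and using them as band inputs is an assumption of this example). The function name, the `Decision` enum, and the default thresholds are hypothetical.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"    # proceed, with logging
    REVIEW = "review"  # middle band: human review or fallback handling
    BLOCK = "block"    # clearly harmful: block automatically


def triage(scores: dict[str, int], review_at: int = 2, block_at: int = 6) -> Decision:
    """Map per-category scores to a decision using two thresholds.

    `scores` maps a category name to its score, e.g. {"Violence": 4}.
    The worst category drives the outcome.
    """
    if review_at > block_at:
        raise ValueError("review_at must not exceed block_at")

    worst = max(scores.values(), default=0)
    if worst >= block_at:
        return Decision.BLOCK
    if worst >= review_at:
        return Decision.REVIEW
    return Decision.ALLOW
```

Setting `review_at` equal to `block_at` removes the middle band entirely, which is one way to express a fully automated low-risk profile without a separate code path.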

Separate Safety Escalation From General Support Work

Many organizations accidentally route AI moderation issues into a generic help desk queue. That usually creates two problems at once. First, support teams do not have the policy context needed to interpret borderline cases. Second, truly sensitive reviews get buried beside password resets, printer tickets, and unrelated app requests.

If moderation exceptions matter, they need a dedicated ownership path. That does not have to mean a large formal team. It can be a small rotation with documented decision criteria, expected response times, and a clear escalation path to legal, compliance, HR, or security when required. The point is to make moderation a governed workflow, not an accidental byproduct of general IT support.

Give Reviewers the Context They Need to Make Fast Decisions

A review queue gets slow when each item arrives stripped of useful context. Seeing only a content score and a fragment of text is rarely enough. Reviewers usually need to know which application submitted the request, what type of user interaction triggered it, whether the request came from an internal or external audience, and what policy profile was active at the time.

That context should be assembled automatically. If a reviewer has to hunt through logs, ask product teams for screenshots, or reconstruct the prompt chain manually, your process is already too fragile. Good moderation design pairs Azure AI Content Safety signals with application metadata so review decisions are fast, explainable, and consistent enough to turn into better rules later.
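As a rough illustration of assembling that context automatically, the sketch below bundles the moderation signal with application metadata at the moment an item is flagged. The field names and the shape of `request_meta` are assumptions made for the example, not a schema defined by Azure AI Content Safety.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ReviewItem:
    app_id: str            # which application submitted the request
    interaction_type: str  # e.g. "chat", "file-upload" (hypothetical values)
    audience: str          # "internal" or "external"
    policy_profile: str    # moderation profile active at the time
    scores: dict           # per-category moderation scores
    excerpt: str           # bounded slice of the flagged text
    flagged_at: str        # UTC timestamp in ISO 8601 format


def build_review_item(request_meta: dict, scores: dict, text: str,
                      max_excerpt: int = 500) -> ReviewItem:
    """Package everything a reviewer needs so nothing has to be reconstructed."""
    return ReviewItem(
        app_id=request_meta["app_id"],
        interaction_type=request_meta["interaction_type"],
        audience=request_meta["audience"],
        policy_profile=request_meta["policy_profile"],
        scores=dict(scores),
        excerpt=text[:max_excerpt],
        flagged_at=datetime.now(timezone.utc).isoformat(),
    )
```

Using required keys rather than optional ones means a request with missing metadata fails loudly at enqueue time instead of producing a review item that nobody can interpret.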

Track False Positives as an Operations Problem, Not a Complaints Problem

When users say the AI tool is over-blocking harmless work, it is tempting to treat those messages as anecdotal grumbling. That is a mistake. False positives are operational data. They tell you where thresholds are too aggressive, where prompts are structured badly, or where specific applications need a more tailored moderation policy.

If you do not measure false positives deliberately, the pressure to loosen controls will arrive before the evidence does. Track appeal rates, frequent trigger patterns, and queue outcomes by workload. Over time, that lets you refine the decision bands and reduce unnecessary review volume without turning safety into guesswork.
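A minimal way to start measuring is to compute, per workload, the share of reviewed items that a reviewer judged benign. The sketch below assumes review outcomes are available as `(workload, verdict)` pairs with a verdict of `"benign"` or `"harmful"`; that record shape and the function name are hypothetical.

```python
from collections import Counter
from typing import Iterable


def false_positive_rates(outcomes: Iterable[tuple[str, str]]) -> dict[str, float]:
    """Return, per workload, the fraction of reviewed items judged benign."""
    totals: Counter = Counter()
    benign: Counter = Counter()

    for workload, verdict in outcomes:
        totals[workload] += 1
        if verdict == "benign":
            benign[workload] += 1

    # Every workload in `totals` has at least one item, so division is safe.
    return {workload: benign[workload] / totals[workload] for workload in totals}
```

A workload whose rate stays high over time is a candidate for a looser review threshold or a more tailored profile, while a low rate suggests the current band is catching content that really does need judgment.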

Design the Escape Hatch Before a Sensitive Incident Forces One

There will be cases where a human needs to intervene quickly, whether because a blocking rule is disrupting a critical workflow or because a serious content issue requires urgent containment. If the only path is an ad hoc admin override buried in a chat thread, you have created a governance problem of your own.

Define the override process early. Decide who can approve exceptions, how long they last, what gets logged, and how the change is reviewed afterward. A good escape hatch is narrow, time-bound, and auditable. It exists to preserve business continuity without silently teaching every team that policy can be bypassed whenever the queue gets annoying.
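The properties above (narrow, time-bound, auditable) can be enforced in code rather than left to convention. The following is a sketch under stated assumptions: the 24-hour cap, the field names, and the in-memory audit list are placeholders for whatever approval policy and logging store an organization actually uses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

MAX_OVERRIDE = timedelta(hours=24)  # hypothetical upper bound on any exception


@dataclass(frozen=True)
class Override:
    app_id: str          # narrow: applies to one application only
    approved_by: str
    reason: str
    starts_at: datetime
    expires_at: datetime

    def is_active(self, now: datetime) -> bool:
        """An override applies only inside its approved window."""
        return self.starts_at <= now < self.expires_at


def grant_override(app_id: str, approved_by: str, reason: str,
                   duration: timedelta, now: datetime,
                   audit_log: list) -> Override:
    """Create a time-bound override and record it for later review."""
    if not reason.strip():
        raise ValueError("an override needs a documented reason")
    if duration <= timedelta(0) or duration > MAX_OVERRIDE:
        raise ValueError("duration must be positive and within MAX_OVERRIDE")

    override = Override(app_id, approved_by, reason, now, now + duration)
    audit_log.append(override)  # stand-in for a durable audit store
    return override
```

Because expiry is part of the record, an override lapses on its own; extending it requires a new, separately logged approval rather than silence.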

Final Takeaway

Azure AI Content Safety is most effective when it helps teams route decisions intelligently instead of pushing every uncertain case onto a person. The difference between a durable moderation program and an endless review backlog is usually operating design, not the model alone.

If you want safety controls that users respect and operators can sustain, build around risk tiers, confidence bands, contextual review, and measurable false positives. That turns moderation from a bottleneck into a managed system that can grow with the platform.