Teams often start with the fastest path: wire one application directly to one model provider, ship a feature, and promise to clean it up later. That works for a prototype, but it usually turns into a brittle operating model. Pricing changes, model behavior shifts, compliance requirements grow, and suddenly a simple integration becomes a dependency that is hard to unwind.

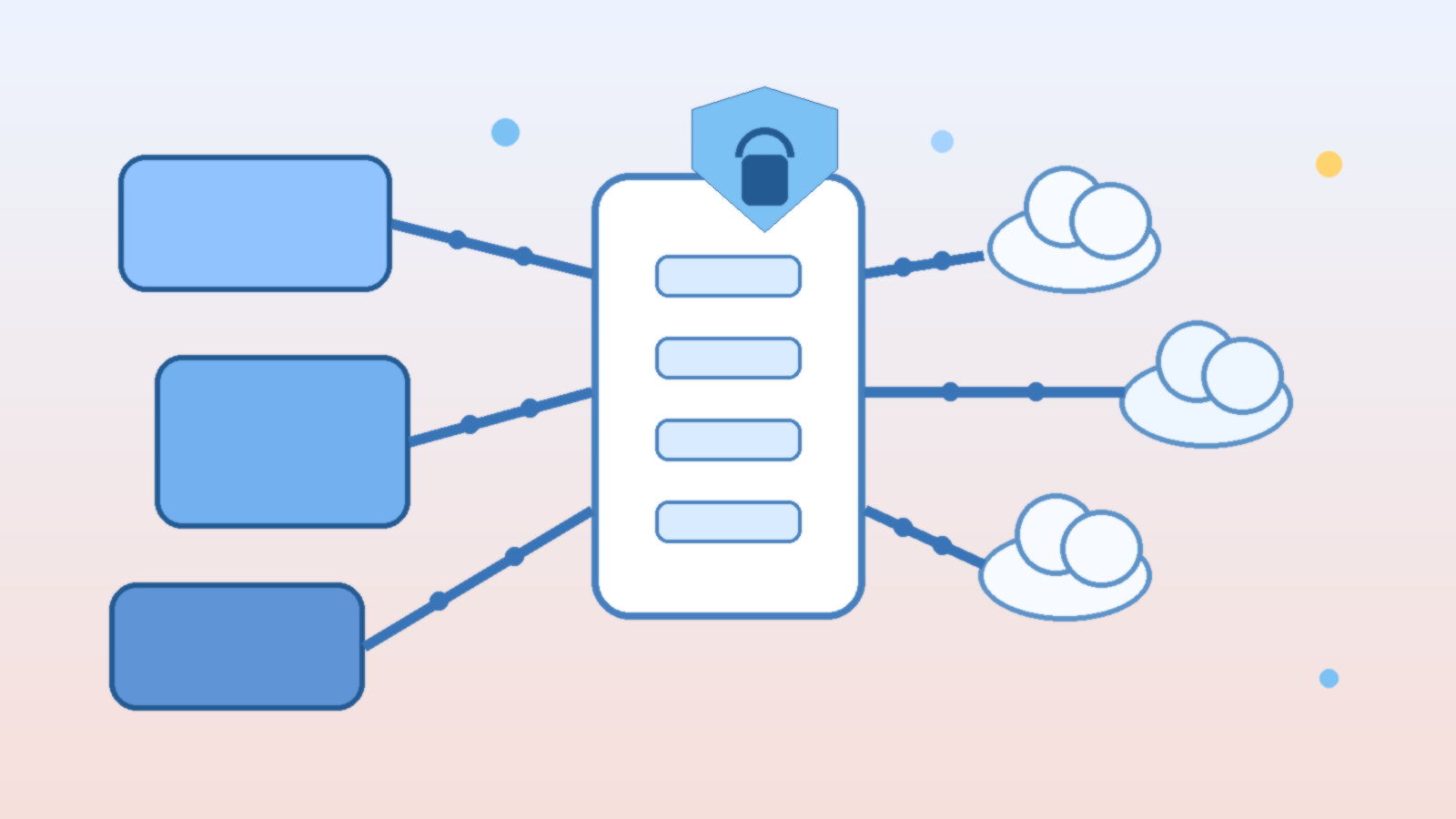

An AI gateway layer gives teams a cleaner boundary. Instead of every app talking to every provider in its own custom way, the gateway becomes the control point for routing, policy, observability, and fallback behavior. The mistake is treating that layer like a glorified pass-through. If it only forwards requests, it adds latency without adding much value. If it becomes a disciplined platform boundary, it can make the rest of the stack easier to change.

Start With the Contract, Not the Vendor List

The first job of an AI gateway is to define a stable contract for internal consumers. Applications should know how to ask for a task, pass context, declare expected response shape, and receive traceable results. They should not need to know whether the answer came from Azure OpenAI, another hosted model, or a future internal service.

That contract should include more than the prompt payload. It should define timeout behavior, retry policy, error categories, token accounting, and any structured output expectations. Once those rules are explicit, swapping providers becomes a controlled engineering exercise instead of a scavenger hunt through half a dozen apps.

Centralize Policy Where It Can Actually Be Enforced

Many organizations talk about AI policy, but enforcement still lives inside application code written by different teams at different times. That usually means inconsistent logging, uneven redaction, and a lot of trust in good intentions. A gateway is the natural place to standardize the controls that should not vary from one workflow to another.

For example, the gateway can apply request classification, strip fields that should never leave the environment, attach tenant or project metadata, and block model access that is outside an approved policy set. That approach does not eliminate application responsibility, but it does remove a lot of duplicated security plumbing from the edges.

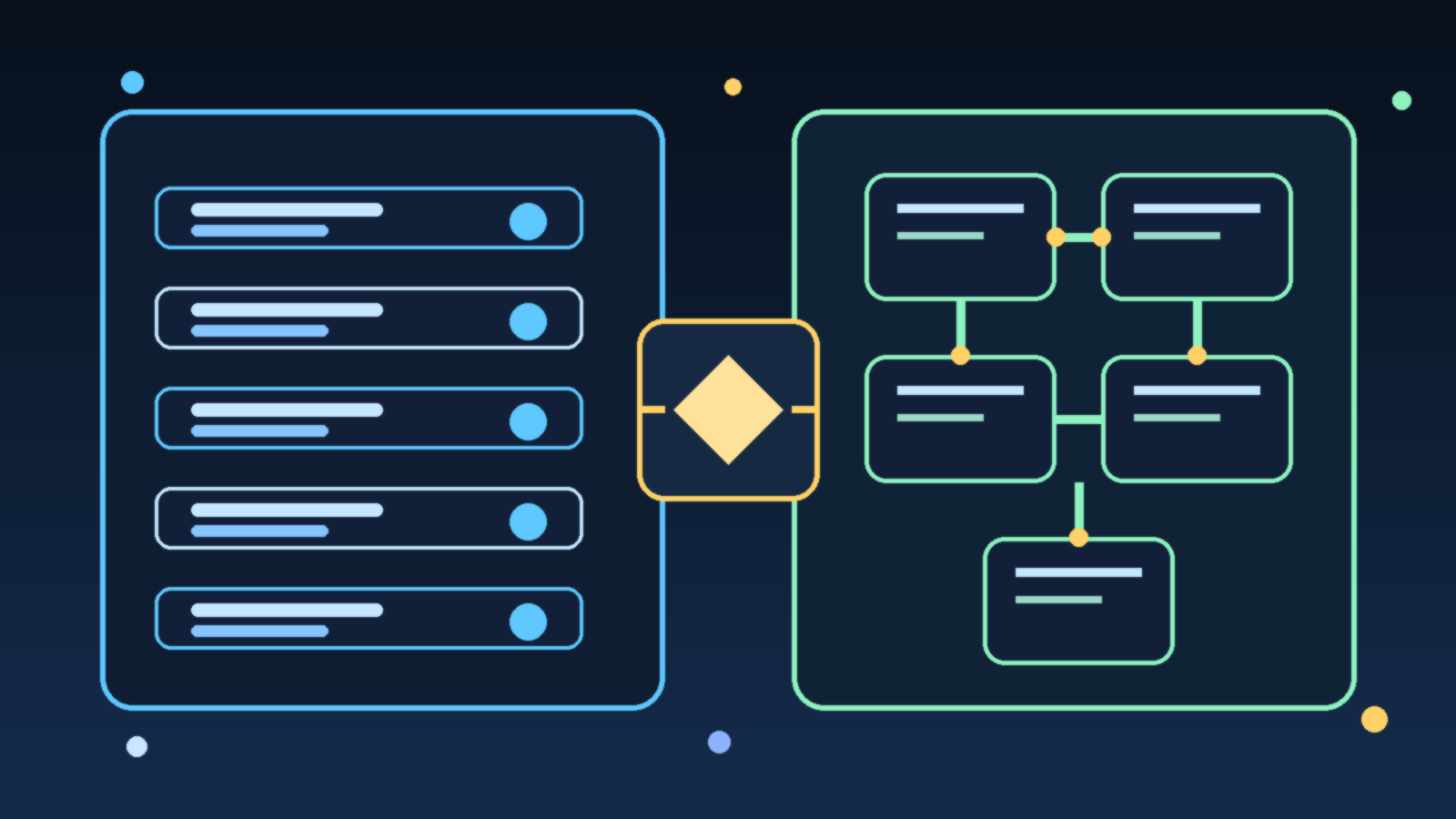

Make Routing a Product Decision, Not a Secret Rule Set

Provider routing tends to get messy when it evolves through one-off exceptions. One team wants the cheapest model for summarization, another wants the most accurate model for extraction, and a third wants a regional endpoint for data handling requirements. Those are all valid needs, but they should be expressed as routing policy that operators can understand, review, and change deliberately.

A good gateway supports explicit routing criteria such as task type, latency target, sensitivity class, geography, or approved model tier. That makes the system easier to govern and much easier to explain during incident review. If nobody can tell why a request went to a given provider, the platform is already too opaque.

Observability Has To Include Cost and Behavior

Normal API monitoring is not enough for AI traffic. Teams need to see token usage, response quality drift, fallback rates, blocked requests, and structured failure modes. Otherwise the gateway becomes a black box that hides the real health of the platform behind a simple success code.

Cost visibility matters just as much. An AI gateway should make it easy to answer practical questions: which workflows are consuming the most tokens, which teams are driving retries, and which provider choices are no longer justified by the value they deliver. Without those signals, multi-provider flexibility can quietly become multi-provider waste.

Design for Graceful Degradation Before You Need It

Provider independence sounds strategic until the first outage, quota cap, or model regression lands in production. That is when the gateway either proves its worth or exposes its shortcuts. If every internal workflow assumes one model family and one response pattern, failover will be more theoretical than real.

Graceful degradation means identifying which tasks can fail over cleanly, which can use a cheaper backup path, and which should stop rather than produce unreliable output. The gateway should carry those rules in configuration and runbooks, not in tribal memory. That way operators can respond quickly without improvising under pressure.

Keep the Gateway Thin Enough to Evolve

There is a real danger on the other side: a gateway that becomes so ambitious it turns into a monolith. If the platform owns every prompt template, every orchestration step, every evaluation flow, and every application-specific quirk, teams will just recreate tight coupling at a different layer.

The healthier model is a thin but opinionated platform. Let the gateway own shared concerns like contracts, policy, routing, auditability, and telemetry. Let product teams keep application logic and domain-specific behavior close to the product. That split gives the organization leverage without turning the platform into a bottleneck.

Final Takeaway

An AI gateway is not valuable because it makes diagrams look tidy. It is valuable because it gives teams a stable internal contract while the external model market keeps changing. When designed well, it reduces lock-in, improves governance, and makes operations calmer. When designed poorly, it becomes one more opaque hop in an already complicated stack.

The practical goal is simple: keep application teams moving without letting every workflow hard-code today’s provider assumptions into tomorrow’s architecture. That is the difference between an integration shortcut and a real platform capability.