The AI landscape has split into two lanes. In one lane are standard large language models (LLMs) that respond quickly, cost a fraction of a cent per call, and handle the vast majority of text tasks without breaking a sweat. In the other are reasoning models, such as OpenAI o3, Anthropic Claude with extended thinking, and Google Gemini with thinking enabled, that slow down deliberately, chain their way through intermediate steps, and charge multiples more for the privilege.

Choosing between them is not just a technical question. It is a cost-benefit decision that depends heavily on what you are asking the model to do.

What Reasoning Models Actually Do Differently

A standard LLM generates its response one token at a time, running one forward pass through its neural network for each token. Given a prompt, it predicts a probable next token, then the one after that, all the way to a completed response. It does not backtrack. It does not re-evaluate. It is fast because it commits to an answer in one shot, with no separate deliberation phase.
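The next-token loop described above can be sketched with a toy example. The probability table and the `forward_pass` helper are made-up stand-ins for a real model, and the loop uses greedy selection for simplicity, whereas production systems usually sample from the distribution.

```python
# Toy next-token table standing in for a real model (hypothetical data).
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"model": 0.7, "answer": 0.3},
    "a": {"model": 0.5, "token": 0.5},
    "model": {"answers": 0.8, "<end>": 0.2},
    "answer": {"<end>": 1.0},
    "answers": {"<end>": 1.0},
    "token": {"<end>": 1.0},
}


def forward_pass(context):
    """Stand-in for one model call: return next-token probabilities.

    A real LLM conditions on the whole context; this toy only looks at
    the last token.
    """
    return NEXT_TOKEN_PROBS[context[-1]]


def greedy_decode(max_tokens=10):
    """Generate tokens one at a time, never revising earlier choices."""
    context = ["<start>"]
    for _ in range(max_tokens):
        probs = forward_pass(context)           # one call per generated token
        next_token = max(probs, key=probs.get)  # pick the most probable token
        if next_token == "<end>":
            break
        context.append(next_token)              # committed, no backtracking
    return context[1:]


print(" ".join(greedy_decode()))
```

Each token is appended and never revisited, which is the behavior that a separate thinking phase is meant to compensate for.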

Reasoning models break this pattern. Before producing a final response, they allocate compute to an internal scratchpad, sometimes called a thinking phase, where they work through sub-problems, consider alternatives, and catch contradictions. OpenAI describes o3 as spending additional compute at inference time to solve complex tasks. Anthropic frames extended thinking as giving Claude space to reason through hard problems step by step before committing to an answer.

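The result is measurably better performance on tasks that require multi-step logic, but at a real cost in both time and money. o3-mini has been priced at several times the per-output-token rate of GPT-4o-mini, and because hidden reasoning tokens are billed as output tokens, the effective per-call gap can be considerably larger, on the order of 10 to 20 times for reasoning-heavy requests. Extended thinking in Claude Sonnet is charged at the same per-token rates as standard mode, but the thinking tokens are billed as output, so the same request costs noticeably more. Those numbers matter at scale.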
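One reason the per-call gap can exceed the gap in list prices is that providers commonly bill hidden reasoning tokens at the output rate. The sketch below works through that arithmetic. Every price and token count in it is a hypothetical placeholder, not a quote from any provider's price sheet.

```python
def call_cost_usd(input_tokens, output_tokens, thinking_tokens,
                  input_usd_per_million, output_usd_per_million):
    """Cost of one call, with thinking tokens billed at the output rate."""
    billed_output = output_tokens + thinking_tokens
    total = (input_tokens * input_usd_per_million
             + billed_output * output_usd_per_million)
    return total / 1_000_000


# Hypothetical standard tier: no thinking tokens.
standard = call_cost_usd(
    input_tokens=1_000, output_tokens=500, thinking_tokens=0,
    input_usd_per_million=0.50, output_usd_per_million=2.00,
)

# Hypothetical reasoning tier: list prices are only 2x higher, but the
# call also spends 4,000 thinking tokens before answering.
reasoning = call_cost_usd(
    input_tokens=1_000, output_tokens=500, thinking_tokens=4_000,
    input_usd_per_million=1.00, output_usd_per_million=4.00,
)

print(f"standard:  ${standard:.4f} per call")
print(f"reasoning: ${reasoning:.4f} per call")
print(f"ratio:     {reasoning / standard:.1f}x")
```

With these placeholder numbers, a 2x difference in list price becomes a difference of roughly 12.7x per call, because nine times as many output tokens are billed.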

Where Reasoning Models Shine

The category where reasoning models justify their cost is problems with many interdependent constraints, where getting one step wrong cascades into a wrong answer and where checking your own work actually helps.

Complex Code Generation and Debugging

Writing a function that calls an API is well within a standard LLM's capability. Designing a correct, edge-case-aware implementation of a distributed locking algorithm, or debugging why a multi-threaded system deadlocks under a specific race condition, is a different matter. Reasoning models are measurably better at catching their own logic errors before they show up in the output. In benchmark evaluations like SWE-bench, o3-level models outperform standard models by wide margins on difficult software engineering tasks.

Math and Quantitative Analysis

Standard LLMs are notoriously inconsistent at arithmetic and symbolic reasoning. They can get a simple percentage calculation wrong, or fumble unit conversions mid-problem. Reasoning models dramatically close this gap. If your pipeline involves financial modeling, data analysis requiring multi-step derivations, or scientific computations, the extra spend is often negligible next to the cost of a wrong answer.

Long-Horizon Planning and Strategy

Tasks like designing a migration plan for moving Kubernetes workloads from on-premises to Azure AKS require holding many variables in mind simultaneously, making tradeoffs, and maintaining consistency across a long output. Standard LLMs tend to lose coherence on these tasks, contradicting themselves between sections or missing constraints mentioned early in the prompt. Reasoning models are significantly better at planning tasks with high internal consistency requirements.

Agentic Workflows Requiring Reliable Tool Use

If you are building an agent that uses tools (searching databases, running queries, calling APIs) and synthesizes the results into a coherent action plan, a reasoning model’s ability to correctly sequence steps and handle unexpected intermediate results is a meaningful advantage. Agentic reliability is one of the biggest selling points for o3-level models in enterprise settings.

Where Standard LLMs Are the Right Call

Reasoning models win on hard problems, but most real-world AI workloads are not hard problems. They are repetitive, well-defined, and tolerant of minor imprecision. In these cases, a fast, inexpensive standard model is the right architectural choice.

Content Generation at Scale

Writing product descriptions, generating email drafts, summarizing documents, translating text: these tasks are well within a standard LLM's capability. Running them through a reasoning model adds cost and latency without any meaningful quality improvement. Models such as GPT-4o or Claude Haiku handle these reliably.

Retrieval-Augmented Generation Pipelines

In most RAG setups, the hard work is retrieval: finding the right documents and constructing the right context. The generation step is typically straightforward. A standard model with well-constructed context will answer accurately. Reasoning overhead here adds latency without a real benefit.

Classification, Extraction, and Structured Output

Sentiment classification, named entity extraction, JSON generation from free text, intent detection: these are pattern-recognition tasks dressed up as generation tasks. Standard models with a good system prompt and schema validation handle them reliably and cheaply. Reasoning models rarely improve accuracy here; they mostly just slow things down.
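The schema validation mentioned above can be as simple as parsing the response and rejecting anything that does not match the expected shape. This is a minimal sketch using only the standard library. The field names `sentiment` and `confidence` and the allowed labels are assumptions made for illustration, not a format any particular model guarantees.

```python
import json

# Hypothetical label set for a sentiment-classification response.
ALLOWED_SENTIMENTS = {"positive", "negative", "neutral"}


def parse_sentiment_response(raw_text):
    """Parse and validate a model response; raise ValueError if malformed."""
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    sentiment = data.get("sentiment")
    if not isinstance(sentiment, str) or sentiment not in ALLOWED_SENTIMENTS:
        raise ValueError(f"unexpected sentiment: {sentiment!r}")

    confidence = data.get("confidence")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")

    return {"sentiment": sentiment, "confidence": float(confidence)}


print(parse_sentiment_response('{"sentiment": "positive", "confidence": 0.93}'))
```

A caller can catch `ValueError` and retry the request or fall back to a default label, which is usually cheaper than moving the whole workload to a slower model tier.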

High-Throughput, Latency-Sensitive Applications

If your product requires real-time responses, as in chat interfaces, live code completions, or interactive voice agents, the added thinking time of a reasoning model becomes a user experience problem. Users expect a response in under two seconds, which standard models can typically deliver. Reasoning models can take 10 to 60 seconds on complex problems. That trade is only acceptable when the task genuinely requires it.

A Practical Decision Framework

A useful mental model: ask whether the task has a verifiable correct answer with intermediate dependencies. If yes, such as debugging a specific bug, solving a constraint-heavy optimization problem, or generating a multi-component architecture with correct cross-references, a reasoning model earns its cost. If no, use the fastest and cheapest model that meets your quality bar.

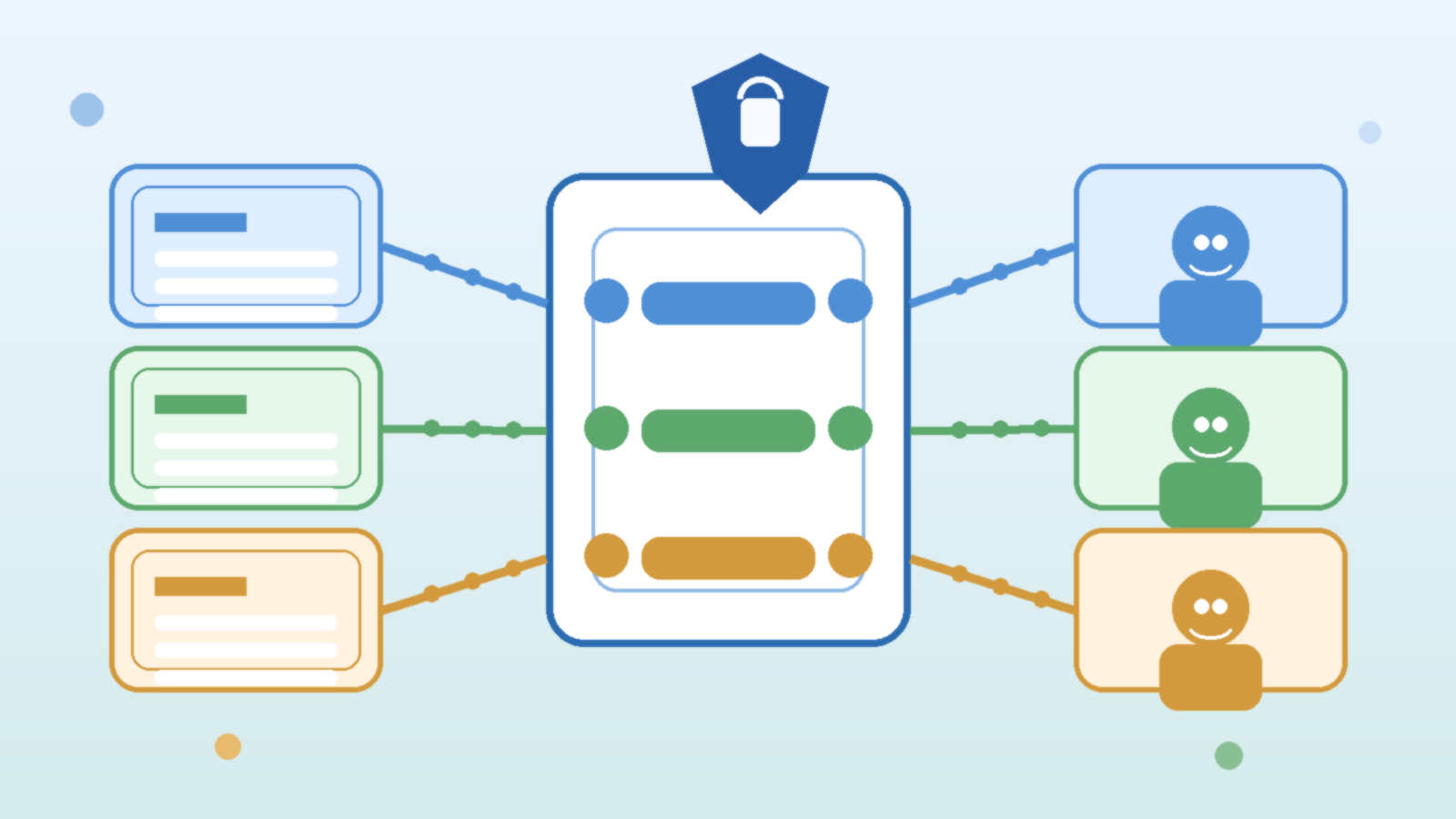

Many teams route by task type. A lightweight classifier or simple rule-based router sends complex analytical and coding tasks to the reasoning tier, while standard generation, summarization, and extraction go to the cheaper tier. This hybrid architecture keeps costs reasonable while unlocking reasoning-model quality where it actually matters.
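A rule-based router of this kind can be sketched in a few lines. The `Task` fields, the task-type labels, and the latency threshold below are all hypothetical names and values chosen for illustration. A real router would derive them from request metadata or from a lightweight classifier.

```python
from dataclasses import dataclass

# Hypothetical task types that default to the reasoning tier.
REASONING_TASK_TYPES = {"debugging", "optimization", "architecture", "math"}


@dataclass
class Task:
    task_type: str
    has_verifiable_answer: bool = False
    has_step_dependencies: bool = False
    latency_budget_s: float = 60.0


def choose_tier(task, reasoning_min_latency_s=10.0):
    """Return "reasoning" or "standard" for a task using simple rules."""
    # A tight latency budget rules out the slower tier regardless of task.
    if task.latency_budget_s < reasoning_min_latency_s:
        return "standard"
    # The mental model above: verifiable answer plus intermediate dependencies.
    if task.has_verifiable_answer and task.has_step_dependencies:
        return "reasoning"
    # Otherwise fall back to routing by task type.
    if task.task_type in REASONING_TASK_TYPES:
        return "reasoning"
    return "standard"


print(choose_tier(Task("summarization")))
print(choose_tier(Task("debugging")))
print(choose_tier(Task("debugging", latency_budget_s=2.0)))
```

Putting the latency check first is a design choice: in this sketch an interactive request never waits on the reasoning tier, even when the task type would otherwise qualify.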

Watch the Benchmarks With Appropriate Skepticism

Benchmark comparisons between reasoning and standard models can be misleading. Reasoning models are specifically optimized for the kinds of problems that appear in benchmarks: math competitions, coding challenges, logic puzzles. Real-world tasks often do not look like benchmark problems. A model that scores ten points higher on GPQA might not produce noticeably better customer support responses or marketing copy.

Before committing to a reasoning model for your use case, run your own evaluations on representative tasks from your actual workload. The benchmark spread between model tiers often narrows considerably when you move from synthetic test cases to production-representative data.
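A small evaluation harness is often enough to start. The sketch below scores any callable that maps a prompt to an answer, using exact-match accuracy. The two stub functions and the four labeled examples are invented placeholders that stand in for real API clients and real workload data, so the scores they produce say nothing about actual models.

```python
def exact_match_accuracy(model_fn, examples):
    """Fraction of examples where the model output equals the expected label."""
    if not examples:
        raise ValueError("need at least one example")
    hits = 0
    for prompt, expected in examples:
        answer = model_fn(prompt)
        if answer.strip().lower() == expected.strip().lower():
            hits += 1
    return hits / len(examples)


def compare_tiers(models, examples):
    """Score every model in a name -> callable mapping on the same examples."""
    return {name: exact_match_accuracy(fn, examples) for name, fn in models.items()}


# Hypothetical labeled examples; replace with samples from your own workload.
EXAMPLES = [
    ("Refund request, order arrived broken", "complaint"),
    ("How do I reset my password?", "question"),
    ("Love the new dashboard!", "praise"),
    ("Invoice total looks wrong after discount and tax", "complaint"),
]


def standard_stub(prompt):
    """Placeholder for a call to a standard-tier model."""
    text = prompt.lower()
    if "?" in text:
        return "question"
    if "love" in text:
        return "praise"
    if "refund" in text or "broken" in text:
        return "complaint"
    return "question"


def reasoning_stub(prompt):
    """Placeholder for a call to a reasoning-tier model."""
    if "wrong" in prompt.lower():
        return "complaint"
    return standard_stub(prompt)


scores = compare_tiers({"standard": standard_stub, "reasoning": reasoning_stub}, EXAMPLES)
print(scores)
```

Exact match suits classification-style tasks. Open-ended outputs need a different scoring function, but the comparison loop stays the same.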

The Cost Gap Is Narrowing But Not Gone

Model pricing trends consistently downward, and reasoning model costs are falling alongside the rest of the market. OpenAI o4-mini is substantially cheaper than o3 while preserving most of the reasoning advantage. Anthropic Claude Haiku with thinking is affordable for many use cases where the full Sonnet extended thinking budget is too expensive. The gap between standard and reasoning tiers is narrower than it was in 2024.

But it is not zero, and at high call volumes the difference remains significant. A workload running 10 million calls per month at a 15x cost differential between tiers is a hard budget conversation. Plan for it before you are surprised by it.
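To make that budget conversation concrete, the arithmetic can be sketched as follows. The 10 million calls and the 15x multiplier come from the scenario above. The standard-tier price of $1.00 per 1,000 calls and the 10 percent routing share are hypothetical values chosen only to keep the numbers round.

```python
def monthly_cost_usd(calls, standard_usd_per_1k_calls, cost_multiplier,
                     reasoning_share_pct):
    """Monthly spend when a percentage of calls goes to the reasoning tier."""
    reasoning_calls = calls * reasoning_share_pct // 100
    standard_calls = calls - reasoning_calls
    reasoning_usd_per_1k_calls = standard_usd_per_1k_calls * cost_multiplier
    total = (standard_calls * standard_usd_per_1k_calls
             + reasoning_calls * reasoning_usd_per_1k_calls)
    return total / 1000


CALLS_PER_MONTH = 10_000_000
STANDARD_USD_PER_1K = 1.00   # hypothetical price, not a real quote
MULTIPLIER = 15              # cost differential between tiers

all_standard = monthly_cost_usd(CALLS_PER_MONTH, STANDARD_USD_PER_1K, MULTIPLIER, 0)
all_reasoning = monthly_cost_usd(CALLS_PER_MONTH, STANDARD_USD_PER_1K, MULTIPLIER, 100)
routed = monthly_cost_usd(CALLS_PER_MONTH, STANDARD_USD_PER_1K, MULTIPLIER, 10)

print(f"all standard:     ${all_standard:,.0f}")
print(f"all reasoning:    ${all_reasoning:,.0f}")
print(f"10% to reasoning: ${routed:,.0f}")
```

Under these assumptions the monthly bill is $10,000 on the standard tier alone, $150,000 on the reasoning tier alone, and $24,000 when one call in ten is routed to the reasoning tier.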

The Bottom Line

Reasoning models are genuinely better at genuinely hard tasks. They are not better at everything: they are better at tasks where thinking before answering actually helps. The discipline is identifying which tasks those are and routing accordingly. Use reasoning models for complex code, multi-step analysis, hard math, and reliability-critical agentic workflows. Use standard models for everything else. Neither tier should be your default for all workloads. The right answer is almost always a deliberate choice based on what the task actually requires.