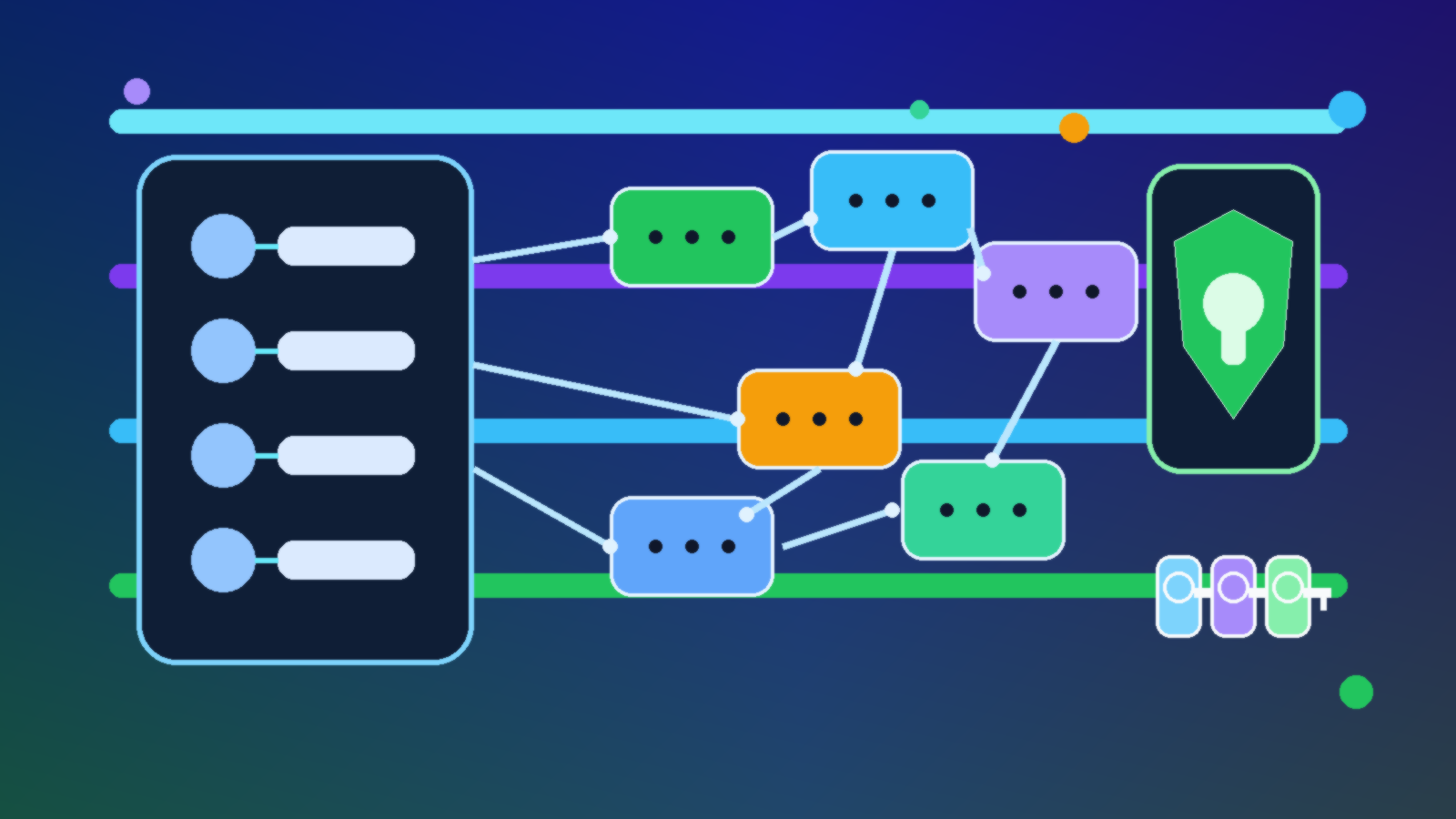

AI inference pipelines look simple on architecture slides. A request comes in, a service calls a model, maybe a retrieval layer joins the flow, and the response goes back out. In production, though, that pipeline usually depends on a stack of credentials: API keys for third-party tools, storage secrets, certificates, and connection details for downstream systems. If those secrets are handled loosely, the pipeline becomes a quiet privilege expansion project.

This is where Azure Key Vault RBAC helps, but only if teams use it with intention. The goal is not merely to move secrets into a vault. The goal is to make sure each workload identity can access only the specific secret operations it actually needs, with ownership, auditing, and separation of duties built into the design.

Why AI Pipelines Accumulate Secret Risk So Quickly

AI systems tend to grow by integration. A proof of concept starts with one model endpoint, then adds content filtering, vector storage, telemetry, document processing, and business-system connectors. Each addition introduces another credential boundary. Under time pressure, teams often solve that by giving one identity broad vault permissions so every component can keep moving.

That shortcut works until it does not. A single over-privileged managed identity can become the access path to multiple environments and multiple downstream systems. The blast radius is larger than most teams realize because the inference pipeline is often positioned in the middle of the application, not at the edge. If it can read everything in the vault, it can quietly inherit more trust than the rest of the platform intended.

Use RBAC Instead of Legacy Access Policies as the Default Pattern

Azure Key Vault supports both legacy access policies and Azure RBAC, and each vault uses one permission model at a time. For modern AI platforms, RBAC is usually the better default because it aligns vault access with the rest of Azure authorization. That means clearer role assignments, better consistency across subscriptions, and easier review through the same governance processes used for other resource permissions.

More importantly, RBAC makes it easier to think in terms of workload identities and narrowly scoped roles rather than one-off secret exceptions. If your AI gateway, batch evaluation job, and document enrichment worker all use the same vault, they still do not need the same rights inside it.

Separate Secret Readers From Secret Managers

A healthy Key Vault design draws a hard line between identities that consume secrets and humans or automation that manage them. An inference workload may need permission to read a specific secret at runtime. It usually does not need permission to create new secrets, update existing ones, or change access configuration. When those capabilities are blended together, operational convenience starts to look a lot like standing administration.

That separation matters for incident response too. If a pipeline identity is compromised, you want the response to be “rotate the few secrets that identity could read” rather than “assume the identity could tamper with the entire vault.” Cleaner privilege boundaries reduce both risk and recovery time.
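The reader/manager split can be checked mechanically once role assignments are exported into simple records. The following is a minimal sketch under that assumption: the `Assignment` record, the `kind` labels, and the identity names are hypothetical, while the role names are Azure's built-in Key Vault roles.

```python
from dataclasses import dataclass

# Built-in Azure roles that permit changing secrets or other vault contents.
MANAGEMENT_ROLES = {
    "Key Vault Administrator",
    "Key Vault Secrets Officer",
}


@dataclass(frozen=True)
class Assignment:
    principal: str  # hypothetical identity name
    kind: str       # "runtime" or "management" (illustrative labels)
    role: str       # built-in role name


def flag_runtime_managers(assignments):
    """Return runtime identities that hold a management role."""
    return [
        a for a in assignments
        if a.kind == "runtime" and a.role in MANAGEMENT_ROLES
    ]


assignments = [
    Assignment("inference-api", "runtime", "Key Vault Secrets User"),
    Assignment("doc-enrichment", "runtime", "Key Vault Secrets Officer"),
    Assignment("rotation-automation", "management", "Key Vault Secrets Officer"),
]

flagged = flag_runtime_managers(assignments)
for a in flagged:
    print(f"{a.principal} is a runtime identity with management role {a.role}")
```

In this sample only `doc-enrichment` is flagged, because it consumes secrets at runtime but could also rewrite them.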

Scope Access to the Smallest Useful Identity Boundary

The most practical pattern is to assign a distinct managed identity to each major AI workload boundary, then grant that identity only the Key Vault role it genuinely needs. A front-door API, an offline evaluation job, and a retrieval indexer should not all share one catch-all identity if they have different data paths and different operational owners.

That design can feel slower at first because it forces teams to be explicit. In reality, it prevents future chaos. When each workload has its own identity, access review becomes simpler, logging becomes more meaningful, and a broken component is less likely to expose unrelated secrets.
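One way to make the per-workload boundary explicit is to write down, for each identity, the exact scope it will be granted. The sketch below assumes per-secret scoping and uses hypothetical identity, vault, and secret names. It only builds a plan as plain data and does not call Azure. Scoping at the vault level is also valid, and may be simpler when each workload already has its own vault.

```python
def secret_scope(subscription_id, resource_group, vault, secret):
    """Build the Azure resource ID of a single secret, usable as an RBAC scope."""
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.KeyVault/vaults/{vault}"
        f"/secrets/{secret}"
    )


# Hypothetical workload identities and the secrets each one reads at runtime.
WORKLOAD_SECRETS = {
    "id-frontdoor-api": ["model-endpoint-key"],
    "id-offline-eval": ["eval-dataset-token"],
    "id-retrieval-indexer": ["search-index-key"],
}


def plan_assignments(subscription_id, resource_group, vault):
    """Return one read-only assignment per (identity, secret) pair."""
    plan = []
    for identity, secrets in WORKLOAD_SECRETS.items():
        for secret in secrets:
            plan.append({
                "principal": identity,
                "role": "Key Vault Secrets User",  # built-in read-only role
                "scope": secret_scope(subscription_id, resource_group, vault, secret),
            })
    return plan


plan = plan_assignments(
    "00000000-0000-0000-0000-000000000000",  # placeholder subscription ID
    "rg-ai-prod",                            # hypothetical resource group
    "kv-ai-prod",                            # hypothetical vault name
)
for entry in plan:
    print(entry["principal"], "->", entry["scope"])
```

Because the plan is plain data, it can be reviewed in a pull request before any tooling applies it.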

Map the Vault Role to the Runtime Need

Most inference workloads need less than teams first assume. A service that retrieves an API key at startup may need only read access to secret values, which the built-in Key Vault Secrets User role covers. A certificate automation job may need a more specialized role. The right question is not “what can Key Vault allow?” but “what must this exact runtime path do?”

- Online inference APIs: usually need read access to a narrow set of runtime secrets

- Evaluation or batch jobs: may need separate access because they touch different tools, models, or datasets

- Platform automation: may need controlled secret write or rotation rights, but should live outside the main inference path

That kind of role-to-runtime mapping keeps the design understandable. It also gives security reviewers something concrete to validate instead of a generic claim that the pipeline needs “vault access.”
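The role-to-runtime mapping described above can be written down as a small lookup table that reviewers validate directly. This is an illustrative sketch: the need labels and workload names are hypothetical, and the role names are Azure built-in roles chosen as plausible fits rather than a prescribed mapping.

```python
# Runtime need -> narrowest built-in role assumed to satisfy it.
NEED_TO_ROLE = {
    "read_secrets": "Key Vault Secrets User",
    "rotate_secrets": "Key Vault Secrets Officer",
    "manage_certificates": "Key Vault Certificates Officer",
}

# Hypothetical workloads and the single need each one declares.
WORKLOAD_NEEDS = {
    "online-inference-api": "read_secrets",
    "batch-evaluation": "read_secrets",
    "rotation-automation": "rotate_secrets",
}


def role_for(workload):
    """Look up the role for a workload, failing loudly on undeclared needs."""
    need = WORKLOAD_NEEDS.get(workload)
    if need is None:
        raise ValueError(f"{workload} has no declared runtime need")
    return NEED_TO_ROLE[need]


for workload in WORKLOAD_NEEDS:
    print(f"{workload}: {role_for(workload)}")
```

Raising an error for an undeclared workload is a deliberate choice here: a component with no stated need gets no vault role rather than a default one.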

Keep Environment Boundaries Real

One of the easiest mistakes to make is letting dev, test, and production workloads read from the same vault. Teams often justify this as temporary convenience, especially when the AI service is moving quickly. The result is that lower-trust environments inherit visibility into production-grade credentials, which defeats the point of having separate environments in the first place.

If the environments are distinct, the vault boundary should be distinct too, or at minimum the permission scope must be clearly isolated. Shared vaults with sloppy authorization are one of the fastest ways to turn a non-production system into a path toward production impact.
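A simple guard for this boundary is to compare the environment of each identity with the environment of the vault it can reach. The sketch below assumes each exported assignment record carries both labels. The field names and sample values are hypothetical.

```python
def cross_environment_assignments(assignments):
    """Return assignments where an identity reaches a vault in another environment."""
    return [a for a in assignments if a["identity_env"] != a["vault_env"]]


# Hypothetical exported records, one per role assignment.
assignments = [
    {"principal": "id-inference-dev", "identity_env": "dev",
     "vault": "kv-ai-dev", "vault_env": "dev"},
    {"principal": "id-inference-prod", "identity_env": "prod",
     "vault": "kv-ai-prod", "vault_env": "prod"},
    {"principal": "id-eval-test", "identity_env": "test",
     "vault": "kv-ai-prod", "vault_env": "prod"},
]

violations = cross_environment_assignments(assignments)
for v in violations:
    print(f"{v['principal']} ({v['identity_env']}) can reach "
          f"{v['vault']} ({v['vault_env']})")
```

In this sample the test-environment evaluation identity is reported because it holds an assignment on the production vault.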

Use Logging and Review to Catch Privilege Drift

Even a clean initial design will drift if nobody checks it. AI programs evolve, new connectors are added, and temporary troubleshooting permissions have a habit of surviving long after the incident ends. Key Vault diagnostic logs, the Azure activity log, and periodic access reviews help teams see when an identity has gained access beyond its original purpose.

The goal is not to create noisy oversight for every secret read. The goal is to make role changes visible and intentional. When an inference pipeline suddenly gains broader vault rights, someone should have to explain why that happened and whether the change is still justified a month later.
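Drift of this kind can be surfaced by comparing the current role assignments against an approved baseline. The following is a minimal sketch, assuming both snapshots are available as `(principal, role, scope)` tuples. The snapshot contents and the shortened scope strings are hypothetical.

```python
def added_assignments(baseline, current):
    """Return assignments present now that were not in the approved baseline."""
    return sorted(set(current) - set(baseline))


def removed_assignments(baseline, current):
    """Return baseline assignments that no longer exist."""
    return sorted(set(baseline) - set(current))


# Hypothetical snapshots: (principal, role, scope).
baseline = [
    ("id-inference-api", "Key Vault Secrets User", "kv-ai-prod/secrets/model-key"),
    ("id-rotation", "Key Vault Secrets Officer", "kv-ai-prod"),
]
current = [
    ("id-inference-api", "Key Vault Secrets User", "kv-ai-prod/secrets/model-key"),
    ("id-inference-api", "Key Vault Secrets Officer", "kv-ai-prod"),
    ("id-rotation", "Key Vault Secrets Officer", "kv-ai-prod"),
]

drift = added_assignments(baseline, current)
for principal, role, scope in drift:
    print(f"unreviewed grant: {principal} now has {role} on {scope}")
```

Each reported grant is a prompt for the question in the text: who added it, why, and whether it is still justified.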

What Good Looks Like in Practice

A strong setup is not flashy. Each AI workload has its own managed identity. The identity receives the narrowest practical Key Vault RBAC assignment. Secret rotation automation is handled separately from runtime secret consumption. Environment boundaries are respected. Review and logging make privilege drift visible before it becomes normal.

That approach does not eliminate every risk around AI inference pipelines, but it removes one of the most common and avoidable ones: treating secret access as an all-or-nothing convenience problem. In practice, the difference between a resilient platform and a fragile one is often just a handful of authorization choices made early and reviewed often.

Final Takeaway

Moving secrets into Azure Key Vault is only the starting point. The real control comes from using RBAC to keep AI inference identities narrow, legible, and separate from operational administration. If your pipeline can read every secret because it was easier than modeling access well, the platform is carrying more trust than it should. Better scope now is much cheaper than untangling a secret sprawl problem later.