Internal AI applications are moving from demos to real business workflows. Teams are building chat interfaces for knowledge search, copilots for operations, and internal assistants that connect to documents, tickets, dashboards, and automation tools. That is useful, but it also changes the identity risk profile. The AI app itself may look simple, yet the data and actions behind it can become sensitive very quickly.

That is why Conditional Access should be part of the design from the beginning. Too many teams wait until an internal AI tool becomes popular, then add blunt access controls after people depend on it. The result is usually frustration, exceptions, and pressure to weaken the policy. A better approach is to design Conditional Access around the app’s actual risk so you can protect the tool without making it miserable to use.

Start with the access pattern, not the policy template

Conditional Access works best when it matches how the application is really used. An internal AI app is not just another web portal. It may be accessed by employees, administrators, contractors, and service accounts. It may sit behind a reverse proxy, call APIs on behalf of users, or expose data differently depending on the prompt, the plugin, or the connected source.

If a team starts by cloning a generic policy template, it often misses the most important question: what kind of session are you protecting? A chat app that surfaces internal documentation has a different risk profile than an AI assistant that can create tickets, summarize customer records, or trigger automation in production systems. The right Conditional Access design begins with those differences, not with a default checkbox list.
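One way to make that question concrete is to write down what each surface of the app can actually do before any policy is drafted. The sketch below is a minimal illustration, not a prescribed method: the `AppSurface` fields, the tier names, and the example surfaces are all assumptions made up for this example.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    READ_ONLY = 1       # surfaces approved internal documentation
    SENSITIVE_READ = 2  # can summarize customer or similar records
    ACTION = 3          # can create tickets or trigger automation


@dataclass(frozen=True)
class AppSurface:
    """Hypothetical description of one part of an internal AI app."""
    name: str
    reads_sensitive_records: bool = False
    can_write_or_trigger: bool = False


def classify(surface: AppSurface) -> RiskTier:
    """Return the highest tier implied by what the surface can do."""
    if surface.can_write_or_trigger:
        return RiskTier.ACTION
    if surface.reads_sensitive_records:
        return RiskTier.SENSITIVE_READ
    return RiskTier.READ_ONLY


# Example inventory (names are illustrative only).
surfaces = [
    AppSurface("docs-chat"),
    AppSurface("customer-summaries", reads_sensitive_records=True),
    AppSurface("ops-assistant", reads_sensitive_records=True, can_write_or_trigger=True),
]
tiers = {s.name: classify(s) for s in surfaces}
```

An inventory like this gives the policy discussion something to anchor on: each tier can then map to its own Conditional Access requirements instead of one template covering everything.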

Separate normal users from elevated workflows

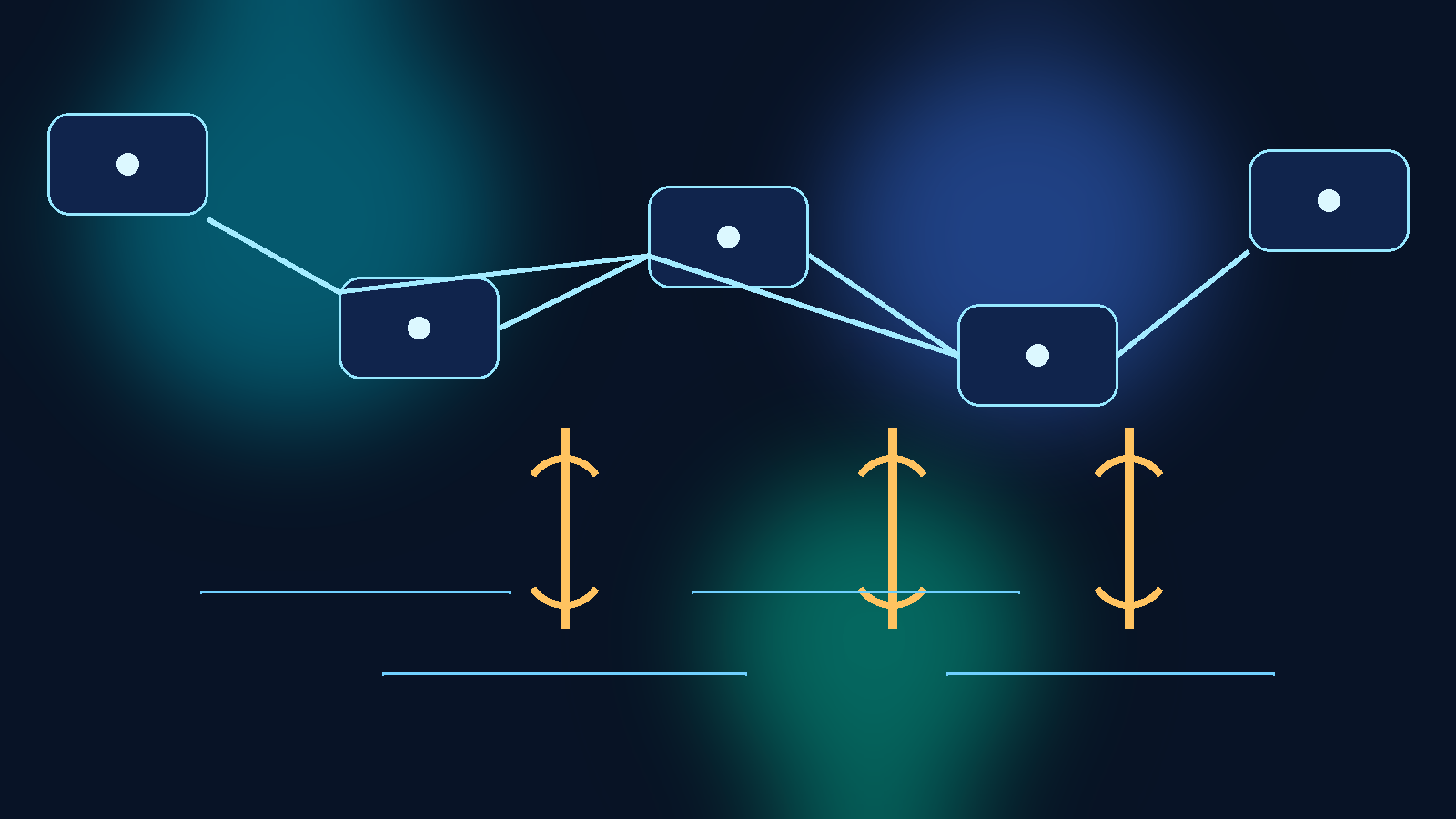

One of the most common mistakes is forcing every user through the same access path regardless of what they can do inside the tool. If the AI app has both general-use features and elevated administrative controls, those paths should not share the same policy assumptions.

A standard employee who can query approved internal knowledge might only need sign-in from a managed device with phishing-resistant MFA. An administrator who can change connectors, alter retrieval scope, approve plugins, or view audit data should face a stricter path. That can include stronger device trust, tighter sign-in risk thresholds, privileged role requirements, or session restrictions tied specifically to the administrative surface.

When teams split those workflows early, they avoid the trap of either over-securing routine use or under-securing privileged actions.
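As a rough sketch of what that split could look like, the snippet below builds two pairs of policy definitions as plain Python dictionaries: a baseline and a risk-based block for employees, and a stricter pair for administrators. The field names follow the shape of the Microsoft Graph `conditionalAccessPolicy` resource as I understand it and should be checked against current documentation. Every ID is a hypothetical placeholder, and the choice to scope by group (rather than by a separate admin app registration) is an assumption. Nothing here calls an API.

```python
import json

# Hypothetical placeholders: substitute real object IDs from your tenant.
AI_APP_ID = "<ai-app-id>"
AI_USERS_GROUP = "<ai-users-group-id>"
AI_ADMINS_GROUP = "<ai-admins-group-id>"
PHISHING_RESISTANT_STRENGTH_ID = "<authentication-strength-id>"


def _scope(group_id, risk_levels=None):
    """Conditions shared by every policy here: one app, one group."""
    conditions = {
        "clientAppTypes": ["all"],
        "applications": {"includeApplications": [AI_APP_ID]},
        "users": {"includeGroups": [group_id]},
    }
    if risk_levels:
        # The policy only applies when the sign-in risk matches these levels.
        conditions["signInRiskLevels"] = risk_levels
    return conditions


def baseline_policy(name, group_id, reauth_hours=None):
    """Require a compliant device plus a phishing-resistant MFA strength."""
    policy = {
        "displayName": name,
        "state": "enabledForReportingButNotEnforced",  # start in report-only
        "conditions": _scope(group_id),
        "grantControls": {
            "operator": "AND",
            "builtInControls": ["compliantDevice"],
            "authenticationStrength": {"id": PHISHING_RESISTANT_STRENGTH_ID},
        },
    }
    if reauth_hours is not None:
        policy["sessionControls"] = {
            "signInFrequency": {"isEnabled": True, "type": "hours", "value": reauth_hours}
        }
    return policy


def risk_block_policy(name, group_id, risk_levels):
    """Block sign-ins whose risk level falls in risk_levels."""
    return {
        "displayName": name,
        "state": "enabledForReportingButNotEnforced",
        "conditions": _scope(group_id, risk_levels),
        "grantControls": {"operator": "OR", "builtInControls": ["block"]},
    }


policies = [
    baseline_policy("AI app: employees baseline", AI_USERS_GROUP),
    risk_block_policy("AI app: employees block high risk", AI_USERS_GROUP, ["high"]),
    baseline_policy("AI app: admins baseline", AI_ADMINS_GROUP, reauth_hours=1),
    risk_block_policy("AI app: admins block medium and high risk",
                      AI_ADMINS_GROUP, ["medium", "high"]),
]

if __name__ == "__main__":
    print(json.dumps(policies, indent=2))
```

The point of the sketch is the shape, not the values: administrators get a shorter reauthentication window and a lower risk threshold, while routine users keep a lighter path. Starting in report-only mode lets a team see who would be affected before anything is enforced.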

Device trust matters because prompts can expose real business context

Many internal AI tools are approved because they do not store data permanently or because they sit behind corporate identity. That is not enough. The prompt itself can contain sensitive business context, and the response can reveal internal information that should not be exposed on unmanaged devices.

Conditional Access helps here by making device trust part of the access decision. Requiring compliant or Microsoft Entra hybrid joined devices for high-context AI applications reduces the chance that sensitive prompts and outputs are handled on unmanaged or poorly secured endpoints. It also gives security teams a more defensible position when the app is later connected to finance, HR, support, or engineering data.

This is especially important for browser-based AI tools, where the session may look harmless while the underlying content is not. If the app can summarize internal documents, expose customer information, or query operational systems, the device posture needs to be treated as part of data protection, not just endpoint hygiene.

Use session controls to limit the damage from convenient access

A lot of teams think of Conditional Access only as an allow or block decision. That leaves useful control on the table. Session controls can reduce risk without pushing users into total denial.

For example, a team may allow broad employee access to an internal AI portal from managed devices while restricting downloads, stepping up or blocking risky sign-ins, or forcing reauthentication before sensitive workflows (for instance through a shorter sign-in frequency). If the AI app is integrated with SharePoint, Microsoft 365, or other Microsoft-connected services, those controls can become an important middle layer between full access and complete rejection.

This matters because the real business pressure is usually convenience. People want the app available in the flow of work. Session-aware control lets an organization preserve that convenience while still narrowing how far a compromised or weak session can go.
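To illustrate the idea of outcomes between "allow" and "block", here is a small decision model. It is not how Entra ID evaluates policies; it is a hypothetical sketch of the reasoning, and the `SessionContext` fields, the outcome names, and the 60-minute default are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionContext:
    """Hypothetical inputs for one request to the AI app."""
    device_managed: bool
    sign_in_risk: str        # "none", "low", "medium", or "high"
    sensitive_workflow: bool
    minutes_since_auth: int


def decide(ctx: SessionContext, reauth_window_minutes: int = 60) -> str:
    """Return one of: block, reauthenticate, allow_restricted, allow."""
    if ctx.sign_in_risk == "high":
        return "block"
    if ctx.sensitive_workflow and not ctx.device_managed:
        return "block"
    if ctx.sensitive_workflow and ctx.minutes_since_auth > reauth_window_minutes:
        return "reauthenticate"
    if not ctx.device_managed or ctx.sign_in_risk == "medium":
        return "allow_restricted"  # for example, no downloads
    return "allow"


routine = decide(SessionContext(True, "none", False, 10))
stale_sensitive = decide(SessionContext(True, "low", True, 180))
unmanaged_chat = decide(SessionContext(False, "low", False, 5))
```

Only two of the five branches end in a hard block. The rest keep the user working while narrowing what a weak or stale session can reach.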

Treat external identities and contractors as a separate design problem

Internal AI apps often expand quietly beyond employees. A pilot starts with one team, then a contractor group gets access, then a vendor needs limited use for support or operations. If those external users land inside the same Conditional Access path as employees, the control model gets messy fast.

External identities should usually be placed on a separate policy track with clearer boundaries. That might mean limiting access to a smaller app surface, requiring stronger MFA, narrowing trusted device assumptions, or constraining which connectors and data sources are available. The important point is to avoid pretending that all authenticated users carry the same trust level just because they can sign in through Entra ID.

This is where many AI app rollouts drift into accidental overexposure. The app feels internal, but the identity population using it is no longer truly internal.
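A simple audit can catch this drift early. The sketch below assumes group membership has already been exported as a list of dictionaries carrying the `userType` and `employeeType` attributes that exist on Microsoft Graph user objects. `employeeType` is free-form and only useful if your tenant populates it, so the values and track names used here are assumptions.

```python
def policy_track(user: dict) -> str:
    """Pick a policy track from directory attributes (hypothetical mapping)."""
    if user.get("userType") == "Guest":
        return "external"
    if (user.get("employeeType") or "").lower() in {"contractor", "vendor"}:
        return "contractor"
    return "employee"


def find_misplaced(members: list, expected_track: str = "employee") -> list:
    """Return the UPNs of members who belong on a different policy track."""
    return [
        user["userPrincipalName"]
        for user in members
        if policy_track(user) != expected_track
    ]


# Illustrative export of the group targeted by the employee policy.
employee_group = [
    {"userPrincipalName": "ana@contoso.example", "userType": "Member",
     "employeeType": "Employee"},
    {"userPrincipalName": "raj@contoso.example", "userType": "Member",
     "employeeType": "Contractor"},
    {"userPrincipalName": "kim_partner.example#EXT#@contoso.example",
     "userType": "Guest", "employeeType": None},
]
misplaced = find_misplaced(employee_group)
```

Running a check like this on a schedule makes it visible when the population behind an "internal" policy has stopped being internal.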

Break-glass and service scenarios need rules before the first incident

If the AI application participates in real operations, someone will eventually ask for an exception. A leader wants emergency access from a personal device. A service account needs to run a connector refresh. A support team needs temporary elevated access during an outage. If those scenarios are not designed up front, the fastest path in the moment usually becomes the permanent path afterward.

Conditional Access should include clear exception handling before the tool is widely adopted. Break-glass paths should be narrow, logged, and owned. Service principals and background jobs should not inherit human-oriented assumptions such as interactive MFA or a compliant device, which a non-interactive workload cannot satisfy. Emergency access should be rare enough that it stands out in review instead of blending into daily behavior.

That discipline keeps the organization from weakening the entire control model every time operations get uncomfortable.
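One lightweight way to keep temporary exceptions from becoming permanent is to record each one with an owner and an expiry, then review the register regularly. The following sketch is an assumption about how such a register might look. The `PolicyException` fields and the sample entries are hypothetical, and it targets temporary exclusions rather than standing emergency access accounts.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class PolicyException:
    """Hypothetical record of one temporary policy exclusion."""
    subject: str
    reason: str
    owner: Optional[str]
    expires_at: Optional[datetime]


def review(exceptions, now):
    """Return (subject, finding) pairs for exceptions that need attention."""
    findings = []
    for exc in exceptions:
        if exc.owner is None:
            findings.append((exc.subject, "no owner"))
        if exc.expires_at is None:
            findings.append((exc.subject, "no expiry"))
        elif exc.expires_at <= now:
            findings.append((exc.subject, "expired"))
    return findings


now = datetime(2025, 1, 15, tzinfo=timezone.utc)
register = [
    PolicyException("support-oncall", "outage access", "platform-team",
                    now + timedelta(hours=4)),
    PolicyException("exec-personal-device", "travel", None,
                    now - timedelta(days=30)),
    PolicyException("connector-refresh-job", "nightly sync", "data-team", None),
]
findings = review(register, now)
```

Anything this review returns is an exception that has outlived its justification or never had a clear one, which is exactly the drift the paragraph above warns about.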

Review policy effectiveness with app telemetry, not just sign-in success

A policy that technically works can still fail operationally. If users are constantly getting blocked in the wrong places, they will look for workarounds. If the policy is too loose, risky sessions may succeed without anyone noticing. Measuring only sign-in success rates is not enough.

Teams should review Conditional Access outcomes alongside AI app telemetry and audit logs. Which user groups are hitting friction most often? Which workflows trigger step-up requirements? Which connectors or admin surfaces are accessed from higher-risk contexts? That combined view helps security and platform teams tune the policy based on how the tool is really used instead of how they imagined it would be used.
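As a sketch of what that combined view might compute, the example below joins simplified sign-in records with app telemetry. The record shapes (`session_id`, `group`, `ca_result`, `risk`, `surface`) are hypothetical and stand in for whatever your sign-in logs and application audit events actually contain.

```python
from collections import Counter


def friction_by_group(sign_ins):
    """Share of sign-ins per user group that ended in a policy failure."""
    totals, failures = Counter(), Counter()
    for event in sign_ins:
        totals[event["group"]] += 1
        if event["ca_result"] == "failure":
            failures[event["group"]] += 1
    return {group: failures[group] / totals[group] for group in totals}


def risky_admin_actions(sign_ins, app_events, risky=("medium", "high")):
    """Admin-surface events whose session began with a risky sign-in."""
    risk_by_session = {e["session_id"]: e["risk"] for e in sign_ins}
    return [
        event for event in app_events
        if event["surface"] == "admin"
        and risk_by_session.get(event["session_id"]) in risky
    ]


# Illustrative data only.
sign_ins = [
    {"session_id": "s1", "group": "employees", "ca_result": "success", "risk": "none"},
    {"session_id": "s2", "group": "employees", "ca_result": "failure", "risk": "low"},
    {"session_id": "s3", "group": "admins", "ca_result": "success", "risk": "medium"},
]
app_events = [
    {"session_id": "s1", "surface": "chat", "action": "query"},
    {"session_id": "s3", "surface": "admin", "action": "edit_connector"},
]

friction = friction_by_group(sign_ins)
flagged = risky_admin_actions(sign_ins, app_events)
```

Neither number is visible from sign-in success rates alone. The first shows where policy is generating friction, and the second shows where a successful sign-in still deserves a closer look.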

For internal AI apps, identity control is not a one-time launch task. It is part of the operating model.

Good Conditional Access design protects adoption instead of fighting it

The goal is not to make internal AI tools difficult. The goal is to let people use them confidently without turning every prompt into a possible policy failure. Strong Conditional Access design supports adoption because it makes the boundaries legible. Users know what is expected. Administrators know where elevated controls begin. Security teams can explain why the policy exists in plain language.

When that happens, the AI app feels like a governed internal product instead of a risky experiment held together by hope. That is the right outcome. Protection should make the tool more sustainable, not less usable.