AI platforms become much harder to govern once every team starts asking for a new connector, plugin, webhook, or data source. On paper, each request sounds reasonable. A sales team wants the assistant to read CRM notes. A support team wants ticket summaries pushed into chat. A finance team wants a workflow that can pull reports from a shared drive and send alerts when numbers move. None of that sounds dramatic in isolation, but connector sprawl is how many internal AI programs drift from controlled enablement into shadow integration territory.

The problem is not that connectors are bad. The problem is that every connector quietly expands trust. It creates a new path for prompts, context, files, tokens, and automated actions to cross system boundaries. If that path is approved casually, the organization ends up with an AI estate that is technically useful but operationally messy. Reviewing connector requests well is less about saying no and more about making sure each new integration is justified, bounded, and observable before it becomes normal.

Start With the Business Action, Not the Connector Name

Many review processes start at the wrong layer of the stack. Teams ask whether a SharePoint connector, Slack app, GitHub integration, or custom webhook should be allowed, but they skip the more important question: what business action is the connector actually supposed to support? That distinction matters because the same connector can represent very different levels of risk depending on what the AI system will do with it.

Reading a controlled subset of documents for retrieval is one thing. Writing comments, updating records, triggering deployments, or sending data into another system is another. A solid review starts by defining whether the request is for read access, write access, administrative actions, scheduled automation, or some mix of those capabilities. Once that is clear, the rest of the control design gets easier because the conversation is grounded in operational intent instead of vendor branding.
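One way to keep the review grounded in operational intent is to have each request declare its capabilities explicitly and derive the review path from them. The sketch below assumes a hypothetical four-way split (read, write, scheduled automation, administrative) and hypothetical tier names. A real program would substitute its own categories.

```python
from enum import Enum
from typing import Set


class Capability(Enum):
    """Hypothetical capability categories a connector request must declare."""
    READ = "read"
    WRITE = "write"
    SCHEDULED = "scheduled"
    ADMIN = "admin"


def review_tier(requested: Set[Capability]) -> str:
    """Map declared capabilities to a hypothetical review tier.

    The most privileged capability in the request decides the tier.
    """
    if not requested:
        raise ValueError("a request must declare at least one capability")
    if Capability.ADMIN in requested:
        return "architecture-review"
    if requested & {Capability.WRITE, Capability.SCHEDULED}:
        return "security-review"
    return "standard-review"


# Same connector, different intent, different review path.
print(review_tier({Capability.READ}))                    # standard-review
print(review_tier({Capability.READ, Capability.WRITE}))  # security-review
```

Keying the tier off the most privileged capability means a request that mixes read and write is reviewed as a write request, regardless of which vendor's connector it uses.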

Map the Data Flow Before You Debate the Tooling

Connector reviews often get derailed into product debates. People compare features, ease of setup, and licensing before anyone has clearly mapped where the data will move. That is backwards. Before approving an integration, document what enters the AI system, what leaves it, where it is stored, what logs are created, and which human or service identity is responsible for each step.
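Writing the data-flow map down as structured records makes gaps easy to spot before anyone debates tooling. This is a minimal sketch: the hypothetical `FlowStep` fields mirror the questions in the paragraph above, and the `find_gaps` rules (every step needs a responsible identity and at least one log, and both inbound and outbound flows must be described) are illustrative assumptions rather than a standard.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class FlowStep:
    """One hop of data across a system boundary (hypothetical schema)."""
    description: str                 # e.g. "CRM notes -> model context"
    enters_ai_system: bool           # True for inbound, False for outbound
    stored_at: Optional[str] = None  # persistence location, None if transient
    logs: List[str] = field(default_factory=list)  # logs this step produces
    responsible_identity: str = ""   # human or service identity for the step


def find_gaps(flow: List[FlowStep]) -> List[str]:
    """Return human-readable gaps in a data-flow map."""
    gaps = []
    if not any(step.enters_ai_system for step in flow):
        gaps.append("no inbound flow documented")
    if not any(not step.enters_ai_system for step in flow):
        gaps.append("no outbound flow documented")
    for step in flow:
        if not step.responsible_identity:
            gaps.append(f"{step.description}: no responsible identity")
        if not step.logs:
            gaps.append(f"{step.description}: no logs recorded")
    return gaps


flow = [
    FlowStep("CRM notes -> model context", True,
             logs=["retrieval audit log"], responsible_identity="svc-crm-reader"),
    FlowStep("summary -> ticketing system", False, stored_at="ticket comments"),
]
for gap in find_gaps(flow):
    print(gap)
```

Here the outbound write into the ticketing system is the step with no owner and no logs. `stored_at` is recorded but not checked, because whether a given persistence location is acceptable remains a reviewer's judgment.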

This data-flow view is usually where hidden risk surfaces. A connector that looks harmless may expose internal documents to a model context window, write generated summaries into a downstream system, or keep tokens alive longer than the requesting team expects. Even when the final answer is yes, the organization is better off because the integration boundary is visible instead of implied.

Separate Retrieval Access From Action Permissions

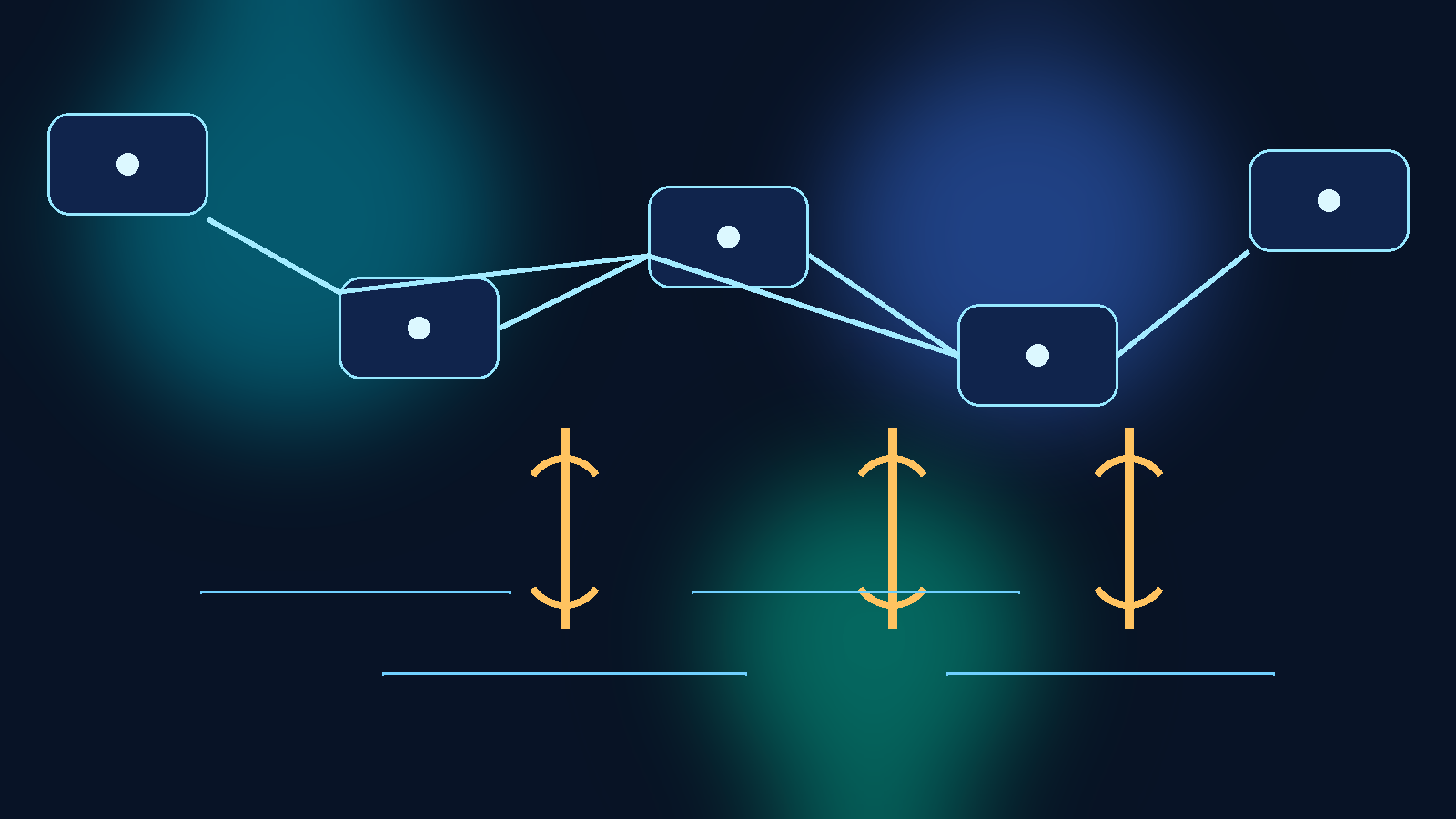

One of the most common connector mistakes is bundling retrieval and action privileges together. Teams want an assistant that can read system state and also take the next step, so they grant a single integration broad permissions for convenience. That makes troubleshooting harder and widens the blast radius when the workflow misfires.

A better design separates passive context gathering from active change execution. If the assistant needs to read documentation, tickets, or dashboards, give it a read-scoped path that is isolated from write-capable automations. If a later step truly needs to update data or trigger a workflow, treat that as a separate approval and identity decision. This split does not eliminate risk, but it makes the control boundary much easier to reason about and much easier to audit.
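The split can be checked mechanically if each connector path runs under its own identity and scopes follow a naming convention. The sketch below assumes a hypothetical `resource:verb` scope format in which read-only scopes end in `:read`. The identity and scope names are invented for illustration and are not taken from any particular platform.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ConnectorIdentity:
    name: str               # service identity the connector runs as
    scopes: FrozenSet[str]  # hypothetical "resource:verb" scope strings


def check_separation(retrieval: ConnectorIdentity,
                     action: ConnectorIdentity) -> List[str]:
    """Flag designs that bundle context gathering with change execution."""
    problems = []
    if retrieval.name == action.name:
        problems.append("retrieval and action paths share one identity")
    # Assumed convention: only scopes ending in ":read" are passive.
    non_read = sorted(s for s in retrieval.scopes if not s.endswith(":read"))
    if non_read:
        problems.append(f"retrieval identity holds non-read scopes: {non_read}")
    return problems


reader = ConnectorIdentity("svc-kb-reader", frozenset({"docs:read", "tickets:read"}))
writer = ConnectorIdentity("svc-ticket-writer", frozenset({"tickets:write"}))
print(check_separation(reader, writer))  # []

bundled = ConnectorIdentity("svc-assistant", frozenset({"docs:read", "tickets:write"}))
print(check_separation(bundled, bundled))
```

The bundled case fails both checks, which is the single broad-permission integration the section warns against.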

Review Whether the Connector Creates a New Trust Shortcut

A connector request should trigger one simple but useful question: does this create a shortcut around an existing control? If the answer is yes, the request deserves more scrutiny. Many shadow integrations do not look like security exceptions at first. They look like productivity improvements that happen to bypass queueing, peer review, role approval, or human sign-off.

For example, a connector might let an AI workflow pull documents from a repository that humans can access only through a governed interface. Another might let generated content land in a production system without the normal validation step. A third might quietly centralize access through a service account that sees more than any individual requester should. These patterns are dangerous because the integration becomes the easiest path through the environment, and the easiest path tends to become the default path.
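The service-account pattern in particular can be tested by comparing what the connector's identity can reach against what each requester can reach on their own. This sketch assumes the connector returns anything its service account can see to whoever prompts it, with no per-user authorization passthrough. The resource and user names are hypothetical.

```python
from typing import Dict, Set


def shortcut_exposure(service_account_access: Set[str],
                      requester_access: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """For each requester, return the resources the connector would expose
    that their own access does not already cover. An empty result means the
    connector grants no requester anything new."""
    exposure = {}
    for user, own_access in requester_access.items():
        extra = service_account_access - own_access
        if extra:
            exposure[user] = extra
    return exposure


service_account = {"wiki", "hr-files", "finance-reports"}
requesters = {
    "alice": {"wiki", "finance-reports"},
    "bob": {"wiki", "hr-files", "finance-reports"},
}
print(shortcut_exposure(service_account, requesters))  # {'alice': {'hr-files'}}
```

A non-empty result is a trust shortcut: for `alice`, the integration has become an easier path to `hr-files` than whatever governed interface normally controls it.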

Make Owners Accountable for Lifecycle, Not Just Setup

Connector approvals often focus on initial setup and ignore the long tail. That is how stale integrations stay alive long after the original pilot ends. Every approved connector should have a clearly named owner, a business purpose, and a review point that forces the team to justify why the integration still exists.

This is especially important for AI programs because experimentation moves quickly. A connector that made sense during a proof of concept may no longer fit the architecture six weeks later, but it remains in place because nobody wants to untangle it. Requiring an owner and a review date changes that habit. It turns connector approval from a one-time permission event into a maintained responsibility.
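Lifecycle ownership is easier to enforce when the owner, purpose, and review date live in a registry that something actually checks. A minimal sketch, assuming a hypothetical in-memory registry. In practice the records would come from wherever approvals are tracked.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class ApprovedConnector:
    name: str
    owner: str       # clearly named, accountable owner
    purpose: str     # business purpose recorded at approval time
    review_by: date  # date by which the owner must re-justify the connector


def needs_attention(registry: List[ApprovedConnector], today: date) -> List[str]:
    """List connectors missing an owner or purpose, or whose review date has arrived."""
    flagged = []
    for c in registry:
        if not c.owner:
            flagged.append(f"{c.name}: no owner")
        if not c.purpose:
            flagged.append(f"{c.name}: no business purpose")
        if c.review_by <= today:
            flagged.append(f"{c.name}: review due since {c.review_by.isoformat()}")
    return flagged


registry = [
    ApprovedConnector("poc-drive-sync", "", "pilot report pulls", date(2024, 1, 15)),
    ApprovedConnector("crm-notes-reader", "sales-platform", "CRM retrieval", date(2025, 1, 15)),
]
for item in needs_attention(registry, today=date(2024, 6, 1)):
    print(item)
```

The leftover proof-of-concept connector is flagged twice, once for having no owner and once for a review date that has already passed.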

Require Logging That Explains the Integration, Not Just That It Ran

Basic activity logs are not enough for connector governance. Knowing that an API call happened is useful, but it does not tell reviewers why the integration exists, what scope it was supposed to have, or whether the current behavior still matches the original approval. Good connector governance needs enough logging and metadata to explain intent as well as execution.

That usually means preserving the requesting team, approved use case, identity scope, target systems, and review history alongside the technical logs. Without that context, investigators end up reconstructing decisions after an incident from scattered tickets and half-remembered assumptions. With that context, unusual activity stands out faster because reviewers can compare the current behavior to a defined operating boundary.
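One way to keep intent next to execution is to attach the approval record to every log entry and compute whether the call stayed inside the approved boundary. The sketch below uses a hypothetical schema: the field names follow the list in the paragraph above, and the boundary rule (the target system and the scope used must both be approved) is an assumption.

```python
import json
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class ApprovalContext:
    requesting_team: str
    use_case: str
    identity_scope: FrozenSet[str]
    target_systems: FrozenSet[str]
    review_history: Tuple[str, ...]  # e.g. ("2024-03-01 approved",)


def connector_log_entry(ctx: ApprovalContext, target: str, scope_used: str) -> str:
    """Build a JSON log line that records both what ran and why it was allowed."""
    entry = {
        "target": target,
        "scope_used": scope_used,
        # Assumed boundary rule: the call must hit an approved system
        # using a scope that was part of the approval.
        "within_boundary": (target in ctx.target_systems
                            and scope_used in ctx.identity_scope),
        "approval": {
            "requesting_team": ctx.requesting_team,
            "use_case": ctx.use_case,
            "identity_scope": sorted(ctx.identity_scope),
            "target_systems": sorted(ctx.target_systems),
            "review_history": list(ctx.review_history),
        },
    }
    return json.dumps(entry)


ctx = ApprovalContext(
    requesting_team="sales-ops",
    use_case="summarize CRM notes for account reviews",
    identity_scope=frozenset({"notes:read"}),
    target_systems=frozenset({"crm"}),
    review_history=("2024-03-01 approved",),
)
print(connector_log_entry(ctx, "crm", "notes:read"))
print(json.loads(connector_log_entry(ctx, "billing", "notes:read"))["within_boundary"])  # False
```

A reviewer filtering on `"within_boundary": false` sees the out-of-scope call alongside the approval it departs from, instead of rebuilding that context from tickets after the fact.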

Standardize a Small Review Checklist So Speed Does Not Depend on Memory

The healthiest connector programs do not rely on one security person or one platform architect remembering every question to ask. They use a small repeatable checklist. The checklist does not need to be bureaucratic to be effective. It just needs to force the team to answer the same core questions every time.

A practical checklist usually covers the business purpose, read versus write scope, data sensitivity, token storage method, logging expectations, expiration or review date, owner, fallback behavior, and whether the connector bypasses an existing control path. That is enough structure to catch bad assumptions without slowing every request to a halt. If the integration is genuinely low risk, the checklist makes approval easier. If the integration is not low risk, the gaps show up early.
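The checklist can be encoded directly so that an unanswered question blocks approval instead of being forgotten. The sketch below uses one field per item in the list above. The field names, the assumed `scope` values, and the fast-track rule (read-only and no control bypass) are hypothetical choices for illustration.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class ConnectorChecklist:
    business_purpose: Optional[str] = None
    scope: Optional[str] = None  # assumed values: "read", "write", "read+write"
    data_sensitivity: Optional[str] = None
    token_storage: Optional[str] = None
    logging_expectations: Optional[str] = None
    review_date: Optional[str] = None
    owner: Optional[str] = None
    fallback_behavior: Optional[str] = None
    bypasses_existing_control: Optional[bool] = None


def unanswered(checklist: ConnectorChecklist) -> List[str]:
    """Names of checklist items left blank (None or empty string)."""
    missing = []
    for f in fields(checklist):
        value = getattr(checklist, f.name)
        if value is None or value == "":
            missing.append(f.name)
    return missing


def review_outcome(checklist: ConnectorChecklist) -> str:
    missing = unanswered(checklist)
    if missing:
        return "incomplete: " + ", ".join(missing)
    if checklist.bypasses_existing_control or checklist.scope != "read":
        return "needs deeper review"
    return "eligible for fast-track approval"


low_risk = ConnectorChecklist(
    business_purpose="answer policy questions from the HR handbook",
    scope="read",
    data_sensitivity="internal",
    token_storage="secrets manager",
    logging_expectations="retrieval queries logged with requester",
    review_date="2025-01-31",
    owner="people-ops-platform",
    fallback_behavior="assistant says the handbook is unavailable",
    bypasses_existing_control=False,
)
print(review_outcome(low_risk))  # eligible for fast-track approval
print(review_outcome(ConnectorChecklist(owner="sales-ops")))
```

Because eligibility is computed from the answers rather than from who is asking, a genuinely low-risk request moves quickly, while a request with missing answers or write scope is routed to deeper review before approval.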

Final Takeaway

AI connector sprawl is rarely caused by one reckless decision. It usually grows through a long series of reasonable-sounding approvals that nobody revisits. That is why connector governance should focus on trust boundaries, data flow, action scope, and lifecycle ownership instead of treating each request as a simple tooling choice.

If you review connector requests by business action, separate retrieval from execution, watch for new trust shortcuts, and require visible ownership over time, you can keep AI integrations useful without letting them become a shadow architecture. The goal is not to block every connector. The goal is to make sure every approved connector still makes sense when someone looks at it six months later.