AI agents are moving from demos into real business processes, and that creates a new kind of operational problem. Teams want faster execution, but leaders still need confidence that sensitive actions, data access, and production changes happen with the right level of review. A poorly designed approval pattern slows everything down. No approval pattern at all creates risk that will eventually land on security, compliance, or executive leadership.

The answer is not to force every agent action through a human checkpoint. The better approach is to design approval workflows that are selective, observable, and tied to real business impact. When done well, approvals become part of the system design instead of a manual bottleneck glued on at the end.

Start with action tiers, not a single approval rule

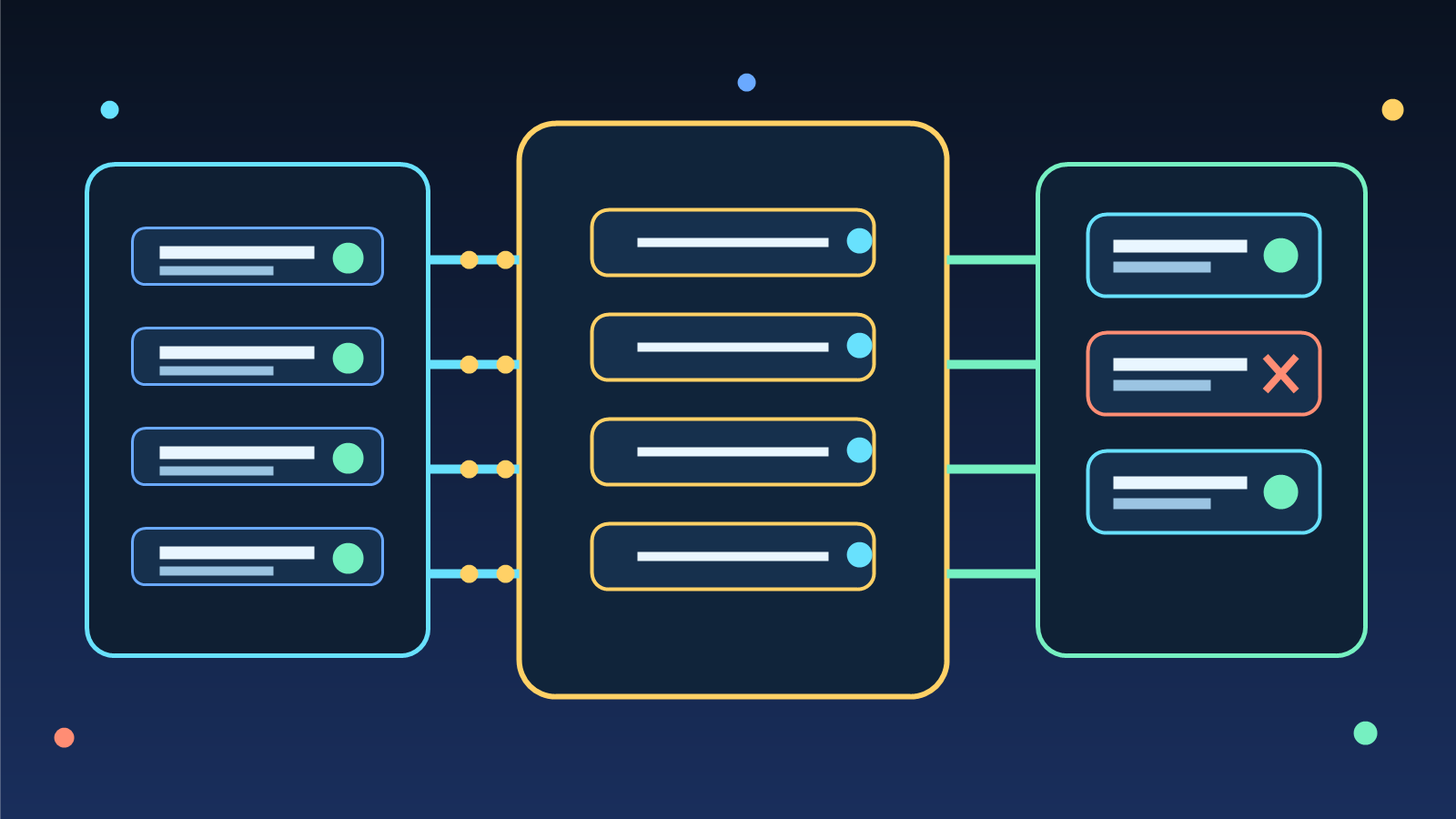

One of the most common mistakes in enterprise AI programs is treating every agent action as equally risky. Reading a public knowledge base article is not the same as changing a firewall rule, approving a refund, or sending a customer-facing message. If every action requires the same approval path, people will either abandon the automation or start looking for ways around it.

A better model is to define action tiers. Low-risk actions can run automatically with logging. Medium-risk actions can require lightweight approval from a responsible operator. High-risk actions should demand stronger controls, such as named approvers, step-up authentication, or two-person review. This structure gives teams a way to move quickly on safe work while preserving friction where it actually matters.
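As a rough illustration, the tier model can be expressed as a small lookup table. This is a minimal sketch, not a recommended classification: the action names, the tier assignments, the control labels, and the choice to treat unknown actions as high risk are all assumptions made for the example.

```python
from enum import Enum


class Tier(Enum):
    LOW = "low"        # runs automatically, with logging
    MEDIUM = "medium"  # lightweight approval from a responsible operator
    HIGH = "high"      # stronger controls, e.g. two-person review


# Hypothetical action catalog. The names and tier assignments are
# illustrative assumptions, not a recommended classification.
ACTION_TIERS = {
    "kb.read_article": Tier.LOW,
    "customer.send_message": Tier.MEDIUM,
    "refund.approve": Tier.HIGH,
    "firewall.change_rule": Tier.HIGH,
}

# Controls attached to each tier (also illustrative).
TIER_CONTROLS = {
    Tier.LOW: ["log"],
    Tier.MEDIUM: ["log", "operator_approval"],
    Tier.HIGH: ["log", "named_approver", "two_person_review"],
}


def tier_for(action: str) -> Tier:
    """Look up an action's tier; unknown actions fall back to HIGH."""
    return ACTION_TIERS.get(action, Tier.HIGH)


def required_controls(action: str) -> list[str]:
    """Return the controls that must be satisfied before the action runs."""
    return TIER_CONTROLS[tier_for(action)]
```

Falling back to the highest tier for unclassified actions is one possible design choice: it keeps new capabilities behind review until someone deliberately assigns them a tier.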

Make the approval request understandable in under 30 seconds

Approval systems often fail because the reviewer cannot tell what the agent is trying to do. A vague prompt like “Approve this action” is not governance. It is a recipe for either blind approval or constant rejection. Reviewers need a short, structured summary of the proposed action before they can make a meaningful decision.

Strong approval payloads usually include the requested action, the target system, the business reason, the expected impact, the relevant inputs, and the rollback path if something goes wrong. If a person can understand the request quickly, they are more likely to make a good decision quickly. Good approval UX is not cosmetic. It is a control that directly affects both operational speed and the quality of risk decisions.
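One way to make those fields concrete is a structured request object that renders the same short summary every time. The sketch below is illustrative only: the class name `ApprovalRequest`, its field names, and the summary layout are assumptions, not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ApprovalRequest:
    action: str            # what the agent wants to do
    target_system: str     # where it will happen
    business_reason: str   # why the agent is proposing it
    expected_impact: str   # what changes if it is approved
    rollback_path: str     # how to undo it if something goes wrong
    inputs: dict[str, str] = field(default_factory=dict)

    def missing_fields(self) -> list[str]:
        """Name any required field that was left blank."""
        required = ("action", "target_system", "business_reason",
                    "expected_impact", "rollback_path")
        return [name for name in required if not getattr(self, name).strip()]

    def summary(self) -> str:
        """Render a short, fixed-order summary for the reviewer."""
        lines = [
            f"Action:   {self.action}",
            f"Target:   {self.target_system}",
            f"Reason:   {self.business_reason}",
            f"Impact:   {self.expected_impact}",
            f"Rollback: {self.rollback_path}",
        ]
        lines += [f"Input:    {k} = {v}" for k, v in sorted(self.inputs.items())]
        return "\n".join(lines)
```

A workflow built this way could refuse to show a request to a reviewer while `missing_fields()` is non-empty, so a vague "Approve this action" prompt never reaches a human.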

Use policy to decide when humans must be involved

Human review should be triggered by policy, not by guesswork. That means teams need explicit conditions that determine when an agent can proceed automatically and when it must pause. These conditions might include data sensitivity, financial thresholds, user impact, production environment scope, customer visibility, or whether the action crosses a system boundary.

Policy-driven approval also creates consistency. Without it, one team might allow autonomous changes in production while another blocks harmless internal tasks. A shared policy model gives security, platform, and product teams a common language for discussing acceptable automation. That makes governance more scalable and much easier to audit.
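The conditions described above can be written down as an explicit, testable function rather than left to individual judgment. The following is a minimal sketch under stated assumptions: the `ActionContext` fields, the sensitivity and environment labels, and the 100 USD threshold are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionContext:
    data_sensitivity: str          # "public", "internal", or "restricted"
    amount_usd: float              # financial value of the action, 0 if none
    environment: str               # "dev", "staging", or "production"
    customer_visible: bool
    crosses_system_boundary: bool


# Hypothetical threshold; a real value would come from finance or risk owners.
AUTO_APPROVE_LIMIT_USD = 100.0


def review_reasons(ctx: ActionContext) -> list[str]:
    """Return every policy condition that forces a human checkpoint."""
    reasons = []
    if ctx.data_sensitivity == "restricted":
        reasons.append("touches restricted data")
    if ctx.amount_usd > AUTO_APPROVE_LIMIT_USD:
        reasons.append("exceeds financial threshold")
    if ctx.environment == "production":
        reasons.append("changes production")
    if ctx.customer_visible:
        reasons.append("visible to customers")
    if ctx.crosses_system_boundary:
        reasons.append("crosses a system boundary")
    return reasons


def requires_human(ctx: ActionContext) -> bool:
    """The agent may proceed automatically only when no condition fires."""
    return bool(review_reasons(ctx))
```

Returning the list of reasons, not just a boolean, means the same evaluation can explain to the reviewer why the agent paused and can be recorded for audit.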

Design for fast rejection, clean escalation, and safe retries

An approval workflow is more than a yes or no button. Mature systems support rejection reasons, escalation paths, and controlled retries. If an approver denies an action, the agent should know whether to stop, ask for more context, or prepare a safer alternative. If no one responds within the expected time window, the request should escalate instead of quietly sitting in a queue.

Retries matter too. If an agent simply resubmits the same request without changes, the workflow becomes noisy and people stop trusting it. A better pattern is to require the agent to explain what changed before a rejected action can be presented again. That keeps the review process focused and reduces approval fatigue.
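A simplified version of these three behaviors might look like the sketch below. All names here (`Request`, `NextStep`, `escalate_if_stale`, `resubmit`) and the 30-minute response window are assumptions for illustration; a production workflow engine would add persistence, notifications, and authorization.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"
    ESCALATED = "escalated"


class NextStep(Enum):
    STOP = "stop"
    ADD_CONTEXT = "add_context"
    SAFER_ALTERNATIVE = "safer_alternative"


@dataclass
class Request:
    request_id: str
    submitted_at: datetime
    status: Status = Status.PENDING
    next_step: Optional[NextStep] = None
    rejection_reason: str = ""
    change_summary: str = ""


def reject(req: Request, reason: str, next_step: NextStep) -> None:
    """Record why the approver said no and what the agent should do next."""
    req.status = Status.REJECTED
    req.rejection_reason = reason
    req.next_step = next_step


def escalate_if_stale(req: Request, now: datetime,
                      timeout: timedelta = timedelta(minutes=30)) -> bool:
    """Escalate a request that has waited past the response window."""
    if req.status is Status.PENDING and now - req.submitted_at >= timeout:
        req.status = Status.ESCALATED
        return True
    return False


def resubmit(req: Request, change_summary: str, now: datetime) -> Request:
    """Create a retry only if the agent states what changed."""
    if req.status is not Status.REJECTED:
        raise ValueError("only rejected requests can be resubmitted")
    if req.next_step is NextStep.STOP:
        raise ValueError("approver asked the agent to stop")
    if not change_summary.strip():
        raise ValueError("a retry must explain what changed")
    return Request(f"{req.request_id}-retry", now, change_summary=change_summary)
```

The retry carries its `change_summary` forward, so the next reviewer sees what is different before deciding.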

Treat observability as part of the control plane

Many teams log the final outcome of an agent action but ignore the approval path that led there. That is a mistake. For governance and incident response, the approval trail is often as important as the action itself. You want to know what the agent proposed, what policy was evaluated, who approved or denied it, what supporting evidence was shown, and what eventually happened in the target system.
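To show what such a trail could contain, here is a minimal sketch of one approval record serialized as a single JSON line. The `ApprovalRecord` name and its fields are assumptions that mirror the questions listed above; they are not a standard audit schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ApprovalRecord:
    request_id: str
    proposed_action: str        # what the agent proposed
    policy_id: str              # which policy was evaluated
    decision: str               # "approved" or "denied"
    decided_by: str             # who made the decision
    evidence_shown: list[str]   # what the reviewer was shown
    outcome: str                # what happened in the target system
    decided_at: str             # ISO 8601 timestamp


def to_log_line(record: ApprovalRecord) -> str:
    """Serialize one record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing the proposal, the policy, the decision, and the outcome into one record keeps the approval path and the resulting action together when someone reconstructs an incident later.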

When approval telemetry is captured cleanly, it becomes useful beyond compliance. Operations teams can identify where approvals slow delivery, security teams can find risky patterns, and platform teams can improve policies based on real usage. Observability turns approval from a static gate into a feedback loop for better automation design.
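As a small example of that feedback loop, approval events can be grouped by policy to show where reviewers wait longest and where requests are denied most often. This sketch assumes each event is a dictionary with hypothetical `policy_id`, `decision`, and `wait_seconds` keys.

```python
from collections import defaultdict
from statistics import median


def summarize(events: list[dict]) -> dict[str, dict[str, float]]:
    """Group approval events by policy and compute simple health metrics."""
    by_policy = defaultdict(list)
    for event in events:
        by_policy[event["policy_id"]].append(event)

    report = {}
    for policy_id, group in by_policy.items():
        denied = sum(1 for e in group if e["decision"] == "denied")
        report[policy_id] = {
            "requests": len(group),
            "denial_rate": denied / len(group),
            "median_wait_seconds": median(e["wait_seconds"] for e in group),
        }
    return report
```

A policy with a long median wait and a near-zero denial rate may be a candidate for a lighter tier, while a high denial rate can suggest the agent is proposing actions it should not attempt at all.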

Keep the default path narrow and the exception path explicit

Approval workflows get messy when every edge case becomes a special exception hidden in code or scattered across internal chat threads. Instead, define a narrow default path for the majority of requests and document a small number of formal exception paths. That makes both system behavior and accountability much easier to understand.

For example, a standard path might allow preapproved internal content updates, while a separate exception path handles customer-visible messaging or production infrastructure changes. The point is not to eliminate flexibility. It is to make flexibility intentional. When exceptions are explicit, they can be reviewed, improved, and governed instead of becoming invisible operational debt.
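One way to keep exceptions explicit is a single registry that names each exception path, its owner, and the reason it exists. The sketch below is illustrative: the category names, workflow names, and owning teams are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExceptionPath:
    name: str      # workflow that handles the exception
    owner: str     # team accountable for the path
    reason: str    # why the default path is not enough


DEFAULT_PATH = "standard_review"

# Hypothetical registry. Keeping exceptions in one reviewed table, rather
# than in scattered conditionals, is what makes them explicit.
EXCEPTION_PATHS = {
    "customer_messaging": ExceptionPath(
        "comms_review", "support-leads", "message is visible to customers"),
    "production_infrastructure": ExceptionPath(
        "change_review", "platform-team", "change affects production"),
}


def route(category: str) -> str:
    """Return the workflow for a request category."""
    exception = EXCEPTION_PATHS.get(category)
    return exception.name if exception else DEFAULT_PATH
```

Because every exception is an entry with an owner and a reason, adding or removing one becomes a reviewable change instead of a hidden branch in code.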

The best approval workflow is one people trust enough to keep using

Enterprise AI adoption does not stall because teams lack model access. It stalls when the surrounding controls feel unreliable, confusing, or too slow. An effective approval workflow protects the business without forcing humans to become full-time traffic controllers for software agents. That balance comes from risk-based action tiers, policy-driven checkpoints, clear reviewer context, and strong telemetry.

Teams that get this right will move faster because they can automate more with confidence. Teams that get it wrong will either create approval theater or disable guardrails under pressure. If your organization is putting agents into real workflows this year, approval design is not a side topic. It is one of the core architectural decisions that will shape whether the rollout succeeds.