Enterprise teams are moving from simple chat assistants to AI agents that can call tools, read internal data, open tickets, generate code, and trigger workflows. That shift is useful, but it changes the risk profile. An assistant that only answers questions is one thing. An agent that can act inside your environment is closer to a junior operator with a very large blast radius.

That is why sandboxing should sit inside your cloud governance model instead of living as an afterthought in an AI pilot. If an agent can reach production systems, sensitive documents, or shared credentials without strong boundaries, then your cloud controls are already being tested by automation whether your governance process acknowledges it or not.

Sandboxing Changes the Conversation From Trust to Containment

Many AI governance discussions still revolve around model safety, prompt filtering, and human review. Those controls matter, but they do not replace execution boundaries. Sandboxing matters because it assumes agents will eventually make a bad call, encounter malicious input (for example, instructions injected into a document the agent retrieves), or receive access they should not keep forever.

A good sandbox does not pretend the model is flawless. It limits what the agent can touch, how long it can keep access, what network paths are available, and what happens when something unusual is requested. That design turns inevitable mistakes into containable incidents instead of cross-system failures.
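The four limits in that paragraph can be expressed as one deny-by-default policy check. The sketch below is illustrative only. `SandboxPolicy`, `Request`, `evaluate`, and the resource and host names are hypothetical and do not correspond to any specific cloud or agent framework. It shows how an expired grant, an unknown resource, or an unlisted network destination is refused, while an unusual operation on an allowed resource is escalated rather than executed.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class SandboxPolicy:
    """Hypothetical per-agent sandbox policy: anything not listed is denied."""
    allowed_resources: frozenset[str]   # what the agent can touch
    allowed_hosts: frozenset[str]       # which network paths are available
    access_ttl: timedelta               # how long a grant stays valid
    escalate_operations: frozenset[str] # unusual requests routed to a human


@dataclass(frozen=True)
class Request:
    resource: str
    host: str | None
    operation: str
    granted_at: datetime


def evaluate(policy: SandboxPolicy, req: Request, now: datetime) -> Decision:
    # Access expires: a grant older than the TTL is no longer honored.
    if now - req.granted_at > policy.access_ttl:
        return Decision.DENY
    # Deny by default: unknown resources and destinations are refused.
    if req.resource not in policy.allowed_resources:
        return Decision.DENY
    if req.host is not None and req.host not in policy.allowed_hosts:
        return Decision.DENY
    # Unusual operations on allowed resources go to a human, not straight through.
    if req.operation in policy.escalate_operations:
        return Decision.ESCALATE
    return Decision.ALLOW


policy = SandboxPolicy(
    allowed_resources=frozenset({"kb-articles"}),
    allowed_hosts=frozenset({"kb.internal.example"}),
    access_ttl=timedelta(minutes=30),
    escalate_operations=frozenset({"bulk_export"}),
)
now = datetime.now(timezone.utc)
req = Request("kb-articles", "kb.internal.example", "read", granted_at=now)
print(evaluate(policy, req, now).value)  # prints "allow"
```

The design choice worth noting is the explicit `ESCALATE` outcome: unusual requests neither fail silently nor succeed silently, which is what turns a mistake into a containable, reviewable event.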

Identity Scope Is the First Boundary, Not the Last

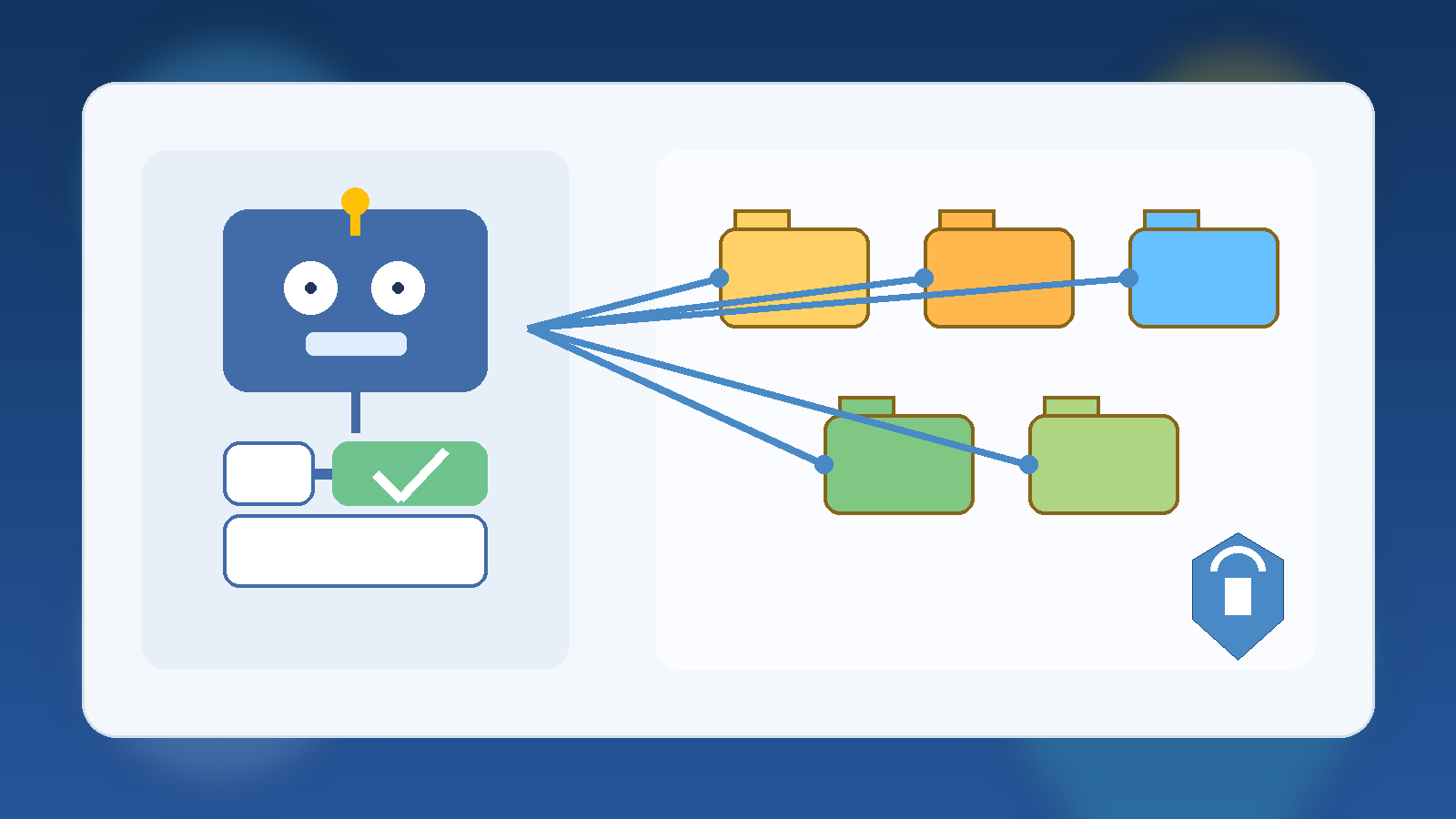

Too many deployments start with broad service credentials because they are fast to wire up. The result is an AI agent that inherits more privilege than any human operator would receive for the same task. In cloud environments, that is a governance smell. Agents should get narrow identities, purpose-built roles, and explicit separation between read, write, and approval paths.

When teams treat identity as the first sandbox layer, they gain several advantages at once. Access reviews become clearer, audit logs become easier to interpret, and rollback decisions become less chaotic because the agent never had universal reach in the first place.
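As a rough illustration of narrow identities with separated read, write, and approval paths, the sketch below models an agent identity as explicit per-resource grants and flags two common smells: wildcard grants and an identity that can both write and approve on the same resource. `AgentIdentity`, `violations`, `can`, and the tier names are hypothetical; real cloud IAM systems have their own role and policy models, and this is not a substitute for them.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical permission tiers used for illustration only.
READ, WRITE, APPROVE = "read", "write", "approve"


@dataclass
class AgentIdentity:
    name: str
    # resource -> permission tiers granted on that resource
    grants: dict[str, frozenset[str]] = field(default_factory=dict)


def violations(identity: AgentIdentity) -> list[str]:
    """Return separation-of-duties problems found in one agent identity."""
    problems = []
    for resource, perms in sorted(identity.grants.items()):
        # An agent should never be able to approve its own writes.
        if WRITE in perms and APPROVE in perms:
            problems.append(f"{identity.name}: write and approve on {resource}")
        # A wildcard grant is the broad-service-credential smell.
        if resource == "*":
            problems.append(f"{identity.name}: wildcard grant")
    return problems


def can(identity: AgentIdentity, resource: str, perm: str) -> bool:
    # Exact resource match only; nothing is inherited from broader scopes.
    return perm in identity.grants.get(resource, frozenset())


ticket_agent = AgentIdentity(
    "ticket-triage-agent",
    {"kb-articles": frozenset({READ}), "ticket-queue": frozenset({READ, WRITE})},
)
broad_agent = AgentIdentity("pilot-agent", {"*": frozenset({READ, WRITE, APPROVE})})

for problem in violations(broad_agent):
    print(problem)
```

A check like `violations` can run during access reviews, which is one way the "clearer access reviews" benefit becomes concrete: the review question shrinks to whether each explicit grant is still justified.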

Network and Runtime Isolation Matter More Once Tools Enter the Picture

As soon as an agent can browse, run code, connect to APIs, or pull files from storage, runtime isolation becomes a practical control instead of a theoretical one. Separate execution environments help prevent one compromised task from becoming a pivot point into broader infrastructure. They also let teams apply environment-specific egress rules, storage limits, and expiration windows.

This is especially important in cloud estates where AI features are layered on top of existing automation. If the same runtime can touch internal documentation, deployment systems, and customer data sources, your governance model is relying on luck. Segmented runtimes give you a cleaner answer when someone asks which agent could access what, under which conditions, and for how long.
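One way to make segmented runtimes auditable is to describe each runtime declaratively, with its data sources, egress allowlist, storage limit, and lifetime, and then answer the "which agent could access what, and for how long" question directly from those descriptions. The sketch below assumes such a hypothetical `RuntimeSpec` record; the runtime IDs, agent names, and hosts are made up for illustration, and enforcement itself would live in the actual runtime and network layer.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class RuntimeSpec:
    """Hypothetical description of one isolated agent runtime."""
    runtime_id: str
    agent: str
    data_sources: frozenset[str]
    egress_allowlist: frozenset[str]  # outbound hosts this runtime may reach
    max_storage_mb: int               # storage limit applied to the runtime
    created_at: datetime
    lifetime: timedelta               # runtime is torn down after this window

    @property
    def expires_at(self) -> datetime:
        return self.created_at + self.lifetime


def egress_allowed(runtime: RuntimeSpec, host: str) -> bool:
    # Per-runtime egress rule: only allowlisted hosts are reachable.
    return host in runtime.egress_allowlist


def who_can_reach(
    runtimes: list[RuntimeSpec], data_source: str, at: datetime
) -> list[tuple[str, str, datetime]]:
    """List (agent, runtime, expiry) for every live runtime that can read data_source."""
    return [
        (r.agent, r.runtime_id, r.expires_at)
        for r in runtimes
        if data_source in r.data_sources and r.created_at <= at < r.expires_at
    ]


t0 = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
runtimes = [
    RuntimeSpec("rt-docs", "docs-agent", frozenset({"internal-docs"}),
                frozenset({"docs.internal.example"}), 512, t0, timedelta(hours=1)),
    RuntimeSpec("rt-deploy", "deploy-agent", frozenset({"deploy-config"}),
                frozenset(), 128, t0, timedelta(minutes=15)),
]
print(who_can_reach(runtimes, "internal-docs", t0 + timedelta(minutes=30)))
```

Because no single runtime lists internal documentation, deployment configuration, and customer data together, a compromised task in one runtime has no declared path into the others.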

Approval Gates Should Match Business Impact

Not every agent action deserves the same friction. Reading internal knowledge articles is not the same as rotating secrets, approving invoices, or changing production policy. Sandboxing works best when it is paired with action tiers. Low-risk actions can run automatically inside a narrow lane. Medium-risk actions may require confirmation. High-risk actions should cross a human approval boundary before the agent can continue.

That structure makes governance feel operational instead of bureaucratic. Teams can move quickly where the risk is low while still preserving deliberate oversight where a mistake would be expensive, public, or hard to reverse.
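The low, medium, and high action tiers can be sketched as a small dispatcher. The action names and their tier assignments below are hypothetical examples loosely based on the actions mentioned above, and `confirm` and `human_approve` stand in for whatever confirmation and approval mechanisms an organization actually uses. One deliberate assumption: an action missing from the catalog is treated as high risk rather than low.

```python
from __future__ import annotations

from enum import IntEnum
from typing import Callable


class Tier(IntEnum):
    LOW = 1     # runs automatically inside a narrow lane
    MEDIUM = 2  # requires confirmation
    HIGH = 3    # must cross a human approval boundary


# Hypothetical action catalog; tier assignments are illustrative.
ACTION_TIERS: dict[str, Tier] = {
    "read_kb_article": Tier.LOW,
    "open_ticket": Tier.MEDIUM,
    "approve_invoice": Tier.HIGH,
    "rotate_secret": Tier.HIGH,
    "change_prod_policy": Tier.HIGH,
}


def run_action(
    action: str,
    execute: Callable[[], str],
    confirm: Callable[[str], bool],
    human_approve: Callable[[str], bool],
) -> str:
    # Unknown actions fall into the strictest tier, not the most convenient one.
    tier = ACTION_TIERS.get(action, Tier.HIGH)
    if tier is Tier.MEDIUM and not confirm(action):
        return f"{action}: not confirmed"
    if tier is Tier.HIGH and not human_approve(action):
        return f"{action}: blocked pending human approval"
    return execute()


print(run_action("rotate_secret", lambda: "rotated", lambda a: True, lambda a: False))
```

Defaulting unknown actions to `Tier.HIGH` keeps newly added tools from silently landing in the automatic lane before anyone has classified them.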

Logging Needs Context, Not Just Volume

AI agent logging often becomes noisy fast. A flood of tool calls is not the same as meaningful auditability. Governance teams need to know which identity was used, which data source was accessed, which policy allowed the action, whether a human approved anything, and what outputs left the sandbox boundary.

Context-rich logs make incident response far more tractable. They also support healthier reviews with security, compliance, and platform teams because discussions can focus on concrete behavior rather than vague assurances that the agent is “mostly restricted.”
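A structured audit record can carry each of the context fields listed above. The schema below is a hypothetical sketch, not a standard format: `AgentAuditEvent` and its field names are assumptions, and events are emitted as one JSON object per line so they can be shipped to whatever log pipeline is already in place. Events missing an identity or a policy reference are rejected, on the assumption that such events add volume without adding auditability.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAuditEvent:
    """Hypothetical audit schema covering the questions governance teams ask."""
    timestamp: str
    agent_identity: str         # which identity was used
    tool: str                   # which tool was called
    data_source: str            # which data source was accessed
    policy_id: str              # which policy allowed the action
    human_approver: str | None  # who approved, if anyone
    egress: list[str]           # outputs that left the sandbox boundary

    def __post_init__(self) -> None:
        # Reject events that would only be noise: no identity or no policy reference.
        if not self.agent_identity or not self.policy_id:
            raise ValueError("audit events need an identity and a policy reference")


def audit_line(event: AgentAuditEvent) -> str:
    # One JSON object per line; sorted keys keep lines stable for diffing and search.
    return json.dumps(asdict(event), sort_keys=True)


event = AgentAuditEvent(
    timestamp=datetime.now(timezone.utc).isoformat(),
    agent_identity="ticket-triage-agent",
    tool="search_kb",
    data_source="kb-articles",
    policy_id="kb-read-only",
    human_approver=None,
    egress=["summary posted to ticket"],
)
print(audit_line(event))
```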

Start With a Small Operating Model, Then Expand Carefully

The strongest first move is not a massive autonomous platform. It is a narrow operating model that defines which agent classes exist, which tasks they may perform, which environments they may run in, and which data classes they are allowed to touch. From there, teams can add more capability without losing track of the original safety assumptions.

That approach is more sustainable than retrofitting controls after several enthusiastic teams have already connected agents to everything. Governance rarely fails because nobody cared. It usually fails because convenience expanded faster than the control model that was supposed to shape it.
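A narrow operating model can start as a small registry of agent classes, each listing the tasks, environments, and data classes it is allowed. The sketch below is a hypothetical illustration: the `kb-assistant` class and its allowed values are invented, and a real registry would likely live in configuration and be enforced at deployment time. It shows how a proposed deployment can be checked against the model before it goes live.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentClass:
    """Hypothetical entry in a small operating model."""
    name: str
    tasks: frozenset[str]
    environments: frozenset[str]
    data_classes: frozenset[str]


# Start small: one agent class with a deliberately narrow scope.
OPERATING_MODEL: dict[str, AgentClass] = {
    "kb-assistant": AgentClass(
        "kb-assistant",
        tasks=frozenset({"answer_question", "summarize_doc"}),
        environments=frozenset({"sandbox"}),
        data_classes=frozenset({"public", "internal"}),
    ),
}


def check_deployment(agent_class: str, task: str, environment: str, data_class: str) -> list[str]:
    """Return the reasons a proposed deployment falls outside the operating model."""
    entry = OPERATING_MODEL.get(agent_class)
    if entry is None:
        return [f"unknown agent class: {agent_class}"]
    problems = []
    if task not in entry.tasks:
        problems.append(f"task not allowed: {task}")
    if environment not in entry.environments:
        problems.append(f"environment not allowed: {environment}")
    if data_class not in entry.data_classes:
        problems.append(f"data class not allowed: {data_class}")
    return problems


print(check_deployment("kb-assistant", "summarize_doc", "production", "restricted"))
```

Expanding capability then means adding or widening a registry entry through review, so the original safety assumptions stay visible instead of eroding one connection at a time.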

Final Takeaway

AI agent sandboxing is not just a security feature. It is a governance decision about scope, accountability, and failure containment. In cloud environments, those questions already exist for workloads, service principals, automation accounts, and data platforms. Agents should not get a special exemption just because the interface feels conversational.

If your organization wants agentic AI without creating invisible operational risk, put sandboxing in the model early. Define identities narrowly, isolate runtimes, tier approvals, and log behavior with enough context to defend your decisions later. That is what responsible scale actually looks like.