AI agents become dramatically more useful once they can do more than answer questions. The moment an assistant can search internal systems, update a ticket, trigger a workflow, or call a cloud API, it stops being a clever interface and starts becoming an operational actor. That is where many organizations discover an awkward truth: tool access matters more than the model demo.

When teams rush that part, they often create two bad options. Either the agent gets broad permissions because nobody wants to model the access cleanly, or every tool call becomes such a bureaucratic event that the system is not worth using. Good governance is the middle path. It gives the agent enough reach to be helpful while keeping access boundaries, approval rules, and audit trails clear enough that security teams do not have to treat every deployment like a special exception.

Tool Access Is Really a Permission Design Problem

It is tempting to frame agent safety as a prompting problem, but tool use changes the equation. A weak answer can be annoying. A weak action can change data, trigger downstream automation, or expose internal systems. Once tools enter the picture, governance needs to focus on what the agent is allowed to touch, under which conditions, and with what level of independence.

That means teams should stop asking only whether the model is capable and start asking whether the permission model matches the real risk. Reading a knowledge base article is not the same as changing a billing record. Drafting a support response is not the same as sending it. Looking up cloud inventory is not the same as deleting a resource group. If all of those actions live in the same trust bucket, the design is already too loose.

Define Access Tiers Before You Wire Up More Tools

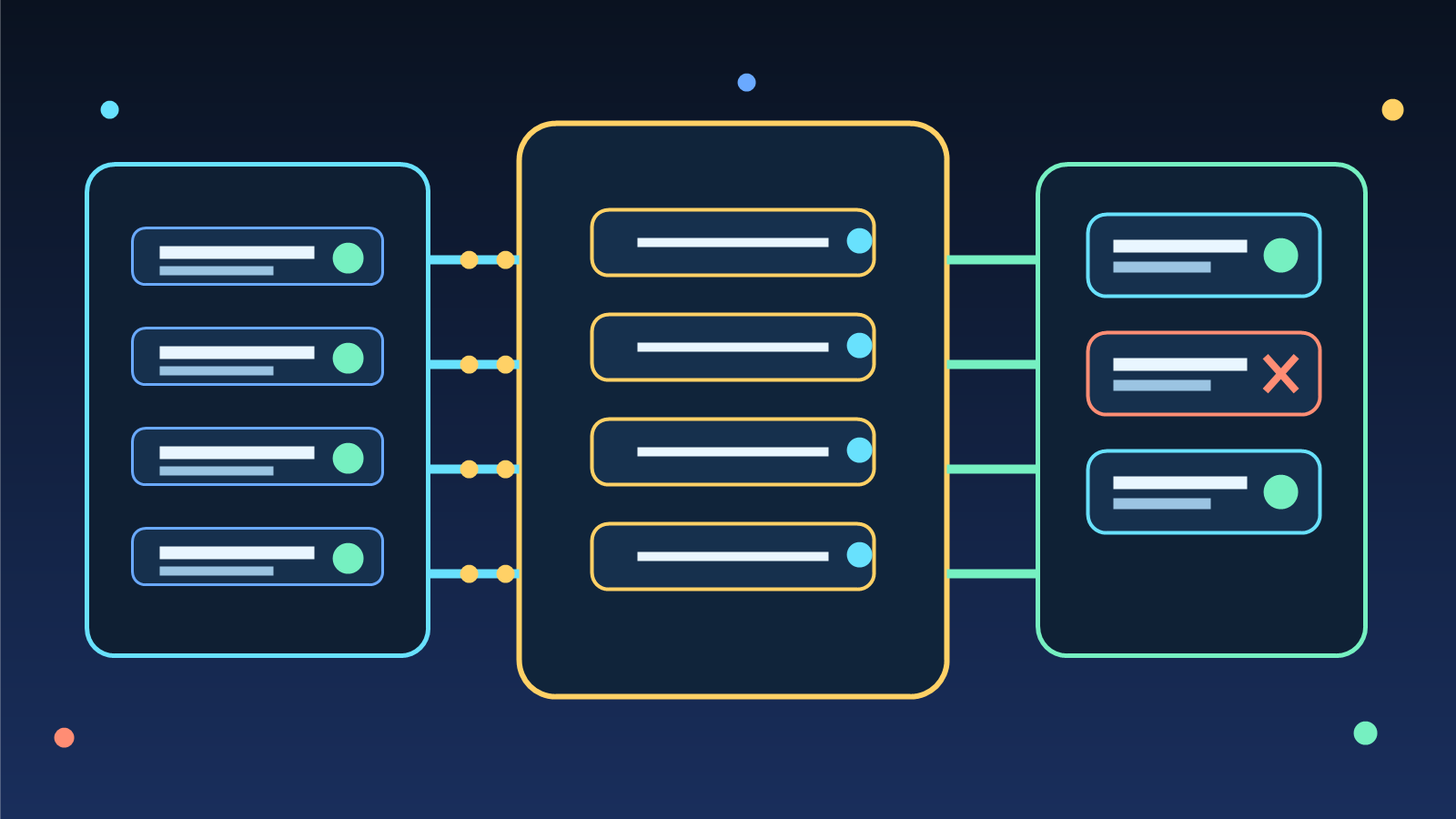

The safest way to scale agent capability is to sort tools into clear access tiers. A low-risk tier might include read-only search, documentation retrieval, and other lookups that have no side effects. A middle tier might allow the agent to prepare drafts, create suggested changes, or open tickets that a human can review. A high-risk tier should include anything that changes permissions, edits production systems, sends external communications, or creates hard-to-reverse side effects.
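As a rough illustration, the three tiers can be expressed as an ordered enum plus a registry that maps each tool to a tier. This is a minimal sketch, not a reference design: the tool names, `TOOL_TIERS`, `tier_for`, and `is_allowed` are all hypothetical, and treating unregistered tools as high-risk is an assumption layered on top of the tiering idea.

```python
from enum import IntEnum


class AccessTier(IntEnum):
    """Ordered risk tiers; a higher value means more potential impact."""
    READ_ONLY = 1  # search, documentation retrieval, side-effect-free lookups
    DRAFT = 2      # prepare drafts, suggest changes, open reviewable tickets
    EXECUTE = 3    # change permissions, edit production, send external messages


# Hypothetical tool names, used only for illustration.
TOOL_TIERS = {
    "search_knowledge_base": AccessTier.READ_ONLY,
    "list_cloud_inventory": AccessTier.READ_ONLY,
    "draft_support_reply": AccessTier.DRAFT,
    "open_change_ticket": AccessTier.DRAFT,
    "send_support_reply": AccessTier.EXECUTE,
    "delete_resource_group": AccessTier.EXECUTE,
}


def tier_for(tool_name: str) -> AccessTier:
    """Look up a tool's tier; unregistered tools default to the highest tier (assumption)."""
    return TOOL_TIERS.get(tool_name, AccessTier.EXECUTE)


def is_allowed(tool_name: str, agent_max_tier: AccessTier) -> bool:
    """An agent may call a tool only if the tool's tier is within its ceiling."""
    return tier_for(tool_name) <= agent_max_tier
```

With this shape, an agent capped at `AccessTier.DRAFT` can open a change ticket but cannot delete a resource group, and a newly wired tool stays locked down until someone classifies it.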

This tiering matters because it creates a standard pattern instead of endless one-off debates. Developers gain a more predictable way to integrate tools, operators know where approvals belong, and security teams can review the control model once instead of reinventing it for every new use case. Governance works better when it behaves like infrastructure rather than a collection of exceptions.

Separate Drafting Power From Execution Power

One of the most useful design moves is splitting preparation from execution. An agent may be allowed to gather data, build a proposed API payload, compose a ticket update, or assemble a cloud change plan without automatically being allowed to carry out the final step. That lets the system do the expensive thinking and formatting work while preserving a deliberate checkpoint for actions with real consequence.
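One way to picture the split is a proposed-action object that the agent can build freely but cannot run until someone signs off. The sketch below assumes a single human reviewer and an injected `runner` callable standing in for the real tool client; `ProposedAction`, `prepare`, `approve`, and `execute` are illustrative names, not an existing API.

```python
import uuid
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Optional


@dataclass
class ProposedAction:
    """A fully prepared action that has not been carried out yet."""
    tool: str
    payload: Dict[str, Any]
    rationale: str
    action_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    approved_by: Optional[str] = None


def prepare(tool: str, payload: Dict[str, Any], rationale: str) -> ProposedAction:
    """Drafting step: safe for the agent to call because nothing changes."""
    return ProposedAction(tool=tool, payload=payload, rationale=rationale)


def approve(action: ProposedAction, reviewer: str) -> ProposedAction:
    """Checkpoint: a reviewer signs off on the exact tool and payload."""
    action.approved_by = reviewer
    return action


def execute(action: ProposedAction,
            runner: Callable[[str, Dict[str, Any]], Any]) -> Any:
    """Execution step: refuses to run anything that has not been approved."""
    if action.approved_by is None:
        raise PermissionError(f"action {action.action_id} has not been approved")
    return runner(action.tool, action.payload)
```

Because the payload is fixed at preparation time, the reviewer approves exactly what will run, and the agent's drafting code never needs credentials for the execution path.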

This pattern also improves adoption. Teams are usually far more comfortable trialing an agent that can prepare good work than one that starts making changes on day one. Once the draft quality and observability prove trustworthy, some tasks can graduate into higher autonomy based on evidence instead of optimism.

Use Context-Aware Approval Instead of Blanket Approval

Blanket approval looks simple, but it usually fails in one of two ways. If every tool invocation needs a human click, the agent becomes slow and the approvals turn into rubber-stamp theater. If teams preapprove entire tool families just to reduce friction, they quietly eliminate the main protection they were trying to keep. The better approach is context-aware approval that keys off risk, target system, and expected blast radius.

For example, read-only inventory queries can often run freely, creating a change ticket may only need a lightweight review, and modifying live permissions may require a stronger human checkpoint with the exact command or API payload visible. Approval becomes much more defensible when it reflects consequence instead of habit.
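The three examples above could be captured in a small policy function that looks at the context of a call instead of the tool family. This is only a sketch under simplifying assumptions: the `CallContext` fields are hypothetical stand-ins for risk, target system, and blast radius, and a real policy would likely weigh more signals.

```python
from dataclasses import dataclass
from enum import Enum


class Approval(Enum):
    NONE = "none"                    # may run freely
    LIGHT_REVIEW = "light_review"    # quick human look
    STRONG_REVIEW = "strong_review"  # reviewer sees the exact command or payload


@dataclass(frozen=True)
class CallContext:
    mutates_state: bool         # does the call change anything?
    target_is_production: bool  # which system is being touched
    touches_permissions: bool   # rough proxy for blast radius


def required_approval(ctx: CallContext) -> Approval:
    """Pick the approval level from the consequence of the call."""
    if not ctx.mutates_state:
        return Approval.NONE
    if ctx.touches_permissions or ctx.target_is_production:
        return Approval.STRONG_REVIEW
    return Approval.LIGHT_REVIEW


# The three cases from the paragraph above, under these assumptions.
inventory_query = CallContext(False, True, False)
change_ticket = CallContext(True, False, False)
permission_change = CallContext(True, True, True)
```

Under these rules the inventory query needs no approval even though it targets production, because it changes nothing, while the permission change always lands in the strongest review path.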

Audit Trails Need to Capture Intent, Not Just Outcome

Standard application logging is not enough for agent tool access. Teams need to know what the agent tried to do, what evidence it relied on, which tool it chose, which parameters it prepared, and whether a human approved or blocked the action. Without that record, post-incident review becomes a guessing exercise and routine debugging becomes far more painful than it needs to be.
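A structured record makes those fields concrete. The sketch below is one possible shape, serialized as a JSON line; the field names, the `decision` values, and the `to_log_line` helper are assumptions made for illustration, not an established schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional


@dataclass
class IntentRecord:
    """Captures what the agent intended, not just what happened."""
    goal: str                     # what the agent was trying to do
    evidence: List[str]           # sources it relied on (doc IDs, ticket IDs, ...)
    tool: str                     # which tool it chose
    parameters: Dict[str, Any]    # the parameters it prepared
    decision: str                 # "approved", "blocked", or "not_required"
    decided_by: Optional[str] = None  # human reviewer, if any
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: IntentRecord) -> str:
    """Serialize the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing the record before the tool call runs, and again with the outcome afterward, keeps blocked and failed attempts visible in the same trail as successful ones.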

Intent logging is also good politics. Security and operations teams are much more willing to support agent rollouts when they can see a transparent chain of reasoning and control. The point is not to make the system feel mysterious and powerful. The point is to make it accountable enough that people trust where it is allowed to operate.

Governance Should Create a Reusable Road, Not a Permanent Roadblock

Poor governance slows teams down because it relies on repeated manual review, unclear ownership, and vague exceptions. Strong governance does the opposite. It defines standard tool classes, approval paths, audit requirements, and revocation controls so new agent workflows can launch on known patterns. That is how organizations avoid turning every agent project into a bespoke policy argument.

In practice, that may mean publishing a small internal standard for read-only integrations, draft-only actions, and execution-capable actions. It may mean requiring service identities that can be revoked independently of a human account. It may also mean establishing visible boundaries for public-facing tasks, customer data access, and production changes. None of that is glamorous, but it is what lets teams scale tool-enabled AI without creating an expanding pile of security debt.
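To make the revocation point concrete, here is a minimal in-memory sketch of agent service identities that can be switched off without touching the owning human's account. `ServiceIdentityRegistry` and its methods are hypothetical; in a real deployment this role would normally be filled by the organization's identity provider.

```python
from typing import Dict, Set


class ServiceIdentityRegistry:
    """Tracks agent service identities separately from human accounts."""

    def __init__(self) -> None:
        self._owners: Dict[str, str] = {}  # service identity -> accountable owner
        self._revoked: Set[str] = set()

    def register(self, identity: str, owner: str) -> None:
        """Record a new agent identity and the human accountable for it."""
        self._owners[identity] = owner

    def revoke(self, identity: str) -> None:
        """Disable the agent's identity; the owner's own account is unaffected."""
        if identity not in self._owners:
            raise KeyError(identity)
        self._revoked.add(identity)

    def is_active(self, identity: str) -> bool:
        """Tool calls should be refused unless this returns True."""
        return identity in self._owners and identity not in self._revoked
```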

Final Takeaway

AI tool access should not force a choice between reckless autonomy and unusable red tape. The strongest designs recognize that tool use is a permission problem first. They define access tiers, separate drafting from execution, require approval where impact is real, and preserve enough logging to explain what the agent intended to do.

If your team wants agents that help in production without becoming the next security exception, start by governing tools like a platform capability instead of a one-off shortcut. That discipline is what makes higher autonomy sustainable.