Model Context Protocol (MCP) servers are quickly becoming one of the most interesting ways to extend AI tools. They let a model reach beyond the chat box and interact with files, databases, ticket systems, cloud resources, and internal apps through a standardized interface. That convenience is exactly why teams like them. It is also why security and governance teams should review them carefully before broad approval.

The mistake is to treat an MCP server like a harmless feature add-on. In practice, it is closer to a new trust bridge. It can expose sensitive context to prompts, let the model retrieve data from systems it did not previously touch, and sometimes trigger actions in external platforms. If approval happens too casually, an organization can end up with AI tooling that looks modern on the surface but quietly creates new pathways for data leakage and uncontrolled automation.

Start by Reviewing the Data Boundary, Not the Demo

MCP server reviews often go wrong because the first conversation is about what the integration can do instead of what data boundary it crosses. A polished demo makes almost any server look useful. It can summarize tickets, search a knowledge base, or draft updates from project data in a few seconds. That is the easy part.

The harder question is what new information becomes available once the server is connected. A server that can read internal documentation may sound low risk until reviewers realize those documents include customer escalation notes, incident timelines, or architecture diagrams. A server that queries a project board may appear safe until someone notices it also exposes HR-related work items or private executive planning. Approval should begin with the reachable data set, not the marketing pitch.

Separate Read Access From Action Access

One of the cleanest ways to reduce risk is to reject the idea that every useful integration needs write capability. Many teams only need a model to read and summarize information, yet the requested server is configured to create tickets, update records, send messages, or trigger workflows as well. That extra capability is unnecessary blast radius.

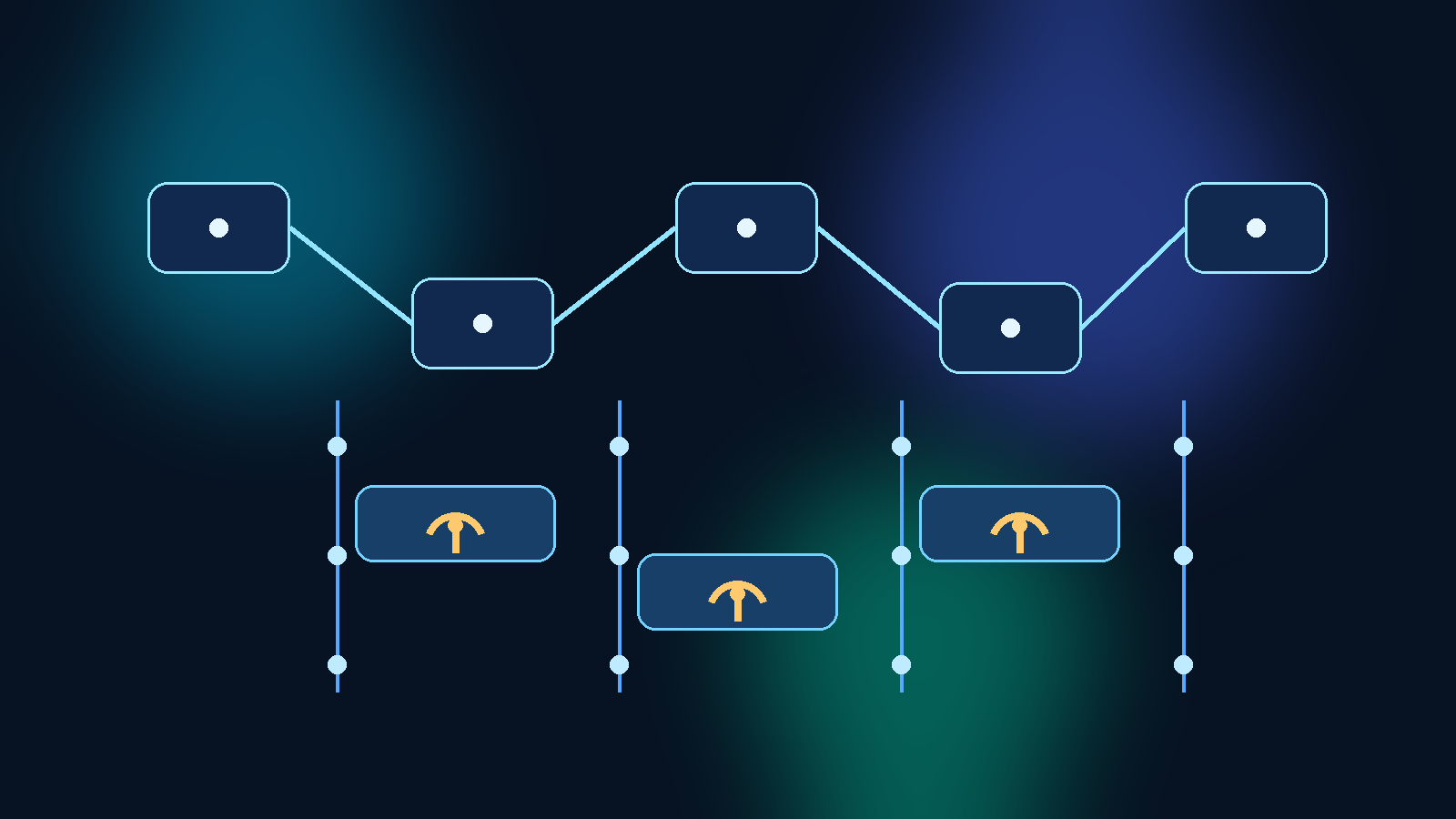

A stronger review pattern is to split the request into distinct capability layers. Read-only retrieval, draft generation, and action execution should not be bundled into one broad approval. If a team wants the model to prepare a change request, that does not automatically justify allowing the same server to submit the change. Keeping action scopes separate preserves human review points and makes incident investigation much simpler later.
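To make the layering concrete, here is a minimal sketch of a policy check that a gateway or wrapper in front of the server might apply. The tool names, the `Capability` enum, and the `is_allowed` function are all hypothetical; they are not part of the MCP specification or any particular SDK.

```python
from enum import Enum


class Capability(Enum):
    READ = "read"        # read-only retrieval
    DRAFT = "draft"      # prepare content for a human to review
    EXECUTE = "execute"  # submit or change something in an external system


# Hypothetical mapping from tool name to the capability layer it requires.
TOOL_CAPABILITY = {
    "search_tickets": Capability.READ,
    "draft_change_request": Capability.DRAFT,
    "submit_change_request": Capability.EXECUTE,
}


def is_allowed(tool: str, approved: set) -> bool:
    """Allow a tool call only if its layer was explicitly approved.

    Tools missing from the mapping are denied rather than given a default layer.
    """
    required = TOOL_CAPABILITY.get(tool)
    return required is not None and required in approved


# A team approved for reading and drafting, but not for execution.
approved_for_team = {Capability.READ, Capability.DRAFT}
print(is_allowed("draft_change_request", approved_for_team))   # True
print(is_allowed("submit_change_request", approved_for_team))  # False
```

Each layer is approved on its own, so granting drafting never implies execution, and a tool nobody has classified is denied by default.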

Require a Named Owner for Every Server Connection

Shadow AI infrastructure often starts with an integration that technically works but socially belongs to no one. A developer sets it up, a pilot team starts using it, and after a few weeks everyone assumes someone else is watching it. That is how stale credentials, overly broad scopes, and orphaned endpoints stick around in production.

Every approved MCP server should have a clearly named service owner. That owner should be responsible for scope reviews, credential rotation, change approval, incident response coordination, and retirement decisions. Ownership matters because an integration is not finished when it connects successfully. It needs maintenance, and systems without owners tend to drift until they become invisible risk.
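One way to keep ownership from going stale is to record it in an inventory that can be checked automatically. The sketch below assumes a simple in-house registry; the `ServerRecord` fields and the 90-day intervals are illustrative choices, not recommendations drawn from any standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Assumed review cadence; pick intervals that match your own policy.
SCOPE_REVIEW_INTERVAL = timedelta(days=90)
CREDENTIAL_ROTATION_INTERVAL = timedelta(days=90)


@dataclass
class ServerRecord:
    name: str
    owner: Optional[str]            # a named person or team, not "everyone"
    last_scope_review: date
    last_credential_rotation: date


def needs_attention(record: ServerRecord, today: date) -> List[str]:
    """Return the ownership and maintenance gaps for one server connection."""
    issues = []
    if not record.owner:
        issues.append("no named owner")
    if today - record.last_scope_review > SCOPE_REVIEW_INTERVAL:
        issues.append("scope review overdue")
    if today - record.last_credential_rotation > CREDENTIAL_ROTATION_INTERVAL:
        issues.append("credential rotation overdue")
    return issues
```

Running a check like this on a schedule turns "someone is probably watching it" into a list of concrete follow-ups.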

Review Tool Outputs as an Exfiltration Channel

Teams naturally focus on what an MCP server can read, but they should spend equal time on what it can return to the model. Output is not neutral. If a server can package large result sets, hidden metadata, internal URLs, or raw file contents into a response, that output may be copied into chats, logs, summaries, or downstream prompts. A leak does not require malicious intent if the workflow itself moves too much information.

This is why output shaping matters. Good MCP governance asks whether the server can minimize fields, redact sensitive attributes, enforce row limits, and deny broad wildcard queries. It also asks whether the client or gateway logs tool responses by default. A server that queries the right system but returns too much detail can still create a quiet exfiltration path.
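The four controls named above (field minimization, redaction, row limits, and wildcard denial) can be sketched as a small filter applied to results before they are returned to the model. The field names, the row limit, and both function names are assumptions made for illustration only.

```python
from typing import Any, Dict, List

ALLOWED_FIELDS = {"id", "title", "status"}   # hypothetical allowlist of safe fields
REDACTED_FIELDS = {"reporter_email"}         # kept in the output, but masked
MAX_ROWS = 50                                # assumed row limit


def check_query(query: str) -> None:
    """Reject empty or wildcard-only queries before they reach the backend."""
    if query.strip() in {"", "*", "%"}:
        raise ValueError("broad wildcard queries are not permitted")


def shape_output(rows: List[Dict[str, Any]], max_rows: int = MAX_ROWS) -> Dict[str, Any]:
    """Apply the row limit, drop non-allowlisted fields, and mask sensitive ones."""
    shaped = []
    for row in rows[:max_rows]:
        out = {k: v for k, v in row.items() if k in ALLOWED_FIELDS}
        for k in REDACTED_FIELDS.intersection(row):
            out[k] = "[REDACTED]"
        shaped.append(out)
    # Report truncation so callers and monitoring can see that rows were cut.
    return {"rows": shaped, "truncated": len(rows) > max_rows}
```

An allowlist is used instead of a blocklist so that fields added to the backend later stay hidden until someone approves them.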

Treat Environment Scope as a First-Class Approval Question

A common failure mode is approving an MCP server for a useful development workflow and then quietly allowing the same pattern in production. That shortcut feels efficient, but it erases the distinction between experimentation and operational trust. The fact that a server is safe enough for a sandbox does not mean it is safe enough for regulated data or live customer systems.

Reviewers should ask which environments the server may touch and keep those approvals explicit. Development, test, and production access should be separate decisions with separate credentials and separate logging expectations. If a team cannot explain why a production connection is necessary, the safest answer is to keep the server out of production until the case is real and bounded.
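Per-environment approvals are easier to keep explicit when each one is its own record. The following sketch assumes a hypothetical `EnvironmentApproval` structure with a reference to a credential (never the secret itself) and flags two of the shortcuts described above: shared credentials and an unjustified production connection.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class EnvironmentApproval:
    environment: str          # "development", "test", or "production"
    credential_ref: str       # pointer into a secret store, not the secret
    log_tool_responses: bool  # logging expectation for this environment
    justification: str


def find_approval(approvals: List[EnvironmentApproval],
                  environment: str) -> Optional[EnvironmentApproval]:
    """Return the approval for one environment, or None if it was never granted."""
    for approval in approvals:
        if approval.environment == environment:
            return approval
    return None


def validate(approvals: List[EnvironmentApproval]) -> List[str]:
    """List the problems a reviewer should resolve before sign-off."""
    problems = []
    refs = [a.credential_ref for a in approvals]
    if len(set(refs)) != len(refs):
        problems.append("credentials are shared across environments")
    for a in approvals:
        if a.environment == "production" and not a.justification.strip():
            problems.append("production approval has no justification")
    return problems
```

Because `find_approval` returns `None` for an environment that was never reviewed, a development approval cannot silently carry over to production.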

Add Monitoring That Matches the Way the Server Will Actually Be Used

Too many AI integration reviews stop after initial approval. That is backwards. The real risk emerges once users start improvising with a tool in everyday work. A server that looks safe in a controlled demo may behave very differently when dozens of prompts hit it with inconsistent wording, unusual edge cases, and deadline-driven shortcuts.

Monitoring should reflect that reality. Reviewers should ensure there is enough telemetry to answer practical questions later: who used the server, which systems were queried, what kinds of operations were attempted, how often results were truncated, and whether denied actions are increasing. Good monitoring is not just about catching abuse. It also reveals when a supposedly narrow integration is slowly becoming a broad operational dependency.
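As a rough illustration of the telemetry those questions imply, the sketch below defines one event per tool call and a summary over a batch of events. The `ToolCallEvent` fields are assumptions about what a client or gateway could record; real deployments would map them onto whatever logging pipeline already exists.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass
class ToolCallEvent:
    user: str        # who used the server
    system: str      # which backend system was queried
    operation: str   # what kind of operation was attempted
    truncated: bool  # whether the result hit a row or size limit
    denied: bool     # whether policy blocked the call


def summarize(events: List[ToolCallEvent]) -> Dict[str, Any]:
    """Aggregate events into the counts a periodic review would look at."""
    return {
        "calls_by_user": Counter(e.user for e in events),
        "calls_by_system": Counter(e.system for e in events),
        "operations": Counter(e.operation for e in events),
        "truncated_calls": sum(e.truncated for e in events),
        "denied_calls": sum(e.denied for e in events),
    }
```

Comparing these summaries across weeks shows whether denied actions are rising or whether usage is spreading to systems the original approval did not anticipate.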

Build an Approval Path That Encourages Better Requests

If the approval process is vague, request quality stays vague too. Teams submit one-line justifications, reviewers guess at the real need, and decisions become inconsistent. A better pattern is to make requesters describe the business task, reachable data, required operations, expected users, environment scope, and fallback plan if the server is unavailable.
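The six items a requester should describe map naturally onto a structured form. This is a minimal sketch of such a form with a completeness check; the `ServerRequest` class and the 20-character threshold are hypothetical and only meant to show how one-line justifications could be caught before review.

```python
from dataclasses import dataclass, fields
from typing import List

MIN_LENGTH = 20  # arbitrary threshold for "more than a one-liner"


@dataclass
class ServerRequest:
    business_task: str
    reachable_data: str
    required_operations: str
    expected_users: str
    environment_scope: str
    fallback_plan: str


def missing_detail(request: ServerRequest) -> List[str]:
    """Return the names of fields that are empty or too short to review."""
    return [
        f.name
        for f in fields(request)
        if len(getattr(request, f.name).strip()) < MIN_LENGTH
    ]
```

A length check is a crude proxy for quality, but it is enough to send an incomplete request back to the requester before a reviewer spends time guessing at the real need.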

That kind of structure improves both speed and quality. Reviewers can see what is actually being requested, and teams learn to think in terms of trust boundaries instead of feature checklists. Over time, the process becomes less about blocking innovation and more about helping useful integrations arrive with cleaner assumptions from the start.

Approval Should Create a Controlled Path, Not a Permanent Exception

The goal of MCP server governance is not to prevent teams from extending AI tools. The goal is to make sure each extension is intentional, limited, and observable. When an MCP server is reviewed as a trust bridge instead of a convenient plugin, organizations make better choices about scope, ownership, and operational controls.

That is the difference between enabling AI safely and accumulating integration debt. Approving the right server with the right boundaries can make an AI platform more useful. Approving it casually can create a data exfiltration problem that no one notices until the wrong prompt pulls the wrong answer from the wrong system.