Azure AI Search can make internal knowledge dramatically easier to find, but it can also create a quiet data exposure problem when teams index broadly and authorize loosely. Early results arrive so quickly that teams tend to focus on relevance, latency, and chunking strategy before they slow down to ask a more important question: who should be able to retrieve which documents after they have been indexed?

That question matters because a search layer can become a shortcut around the controls that existed in the source systems. A SharePoint library might have careful permissions. A storage account might be segmented by team. A data repository might have obvious ownership. Once everything flows into a shared search service, the wrong access model can flatten those boundaries and make one index feel like a universal answer engine.

Why search becomes a governance problem faster than people expect

Many teams start with the right intent. They want a useful internal copilot, a better document search experience, or an AI assistant that can ground answers in company knowledge. The first pilot often works because the dataset is small and the stakeholders are close to the project. Then the service gains momentum, more connectors are added, and suddenly the same index is being treated as a shared enterprise layer.

That is where trouble starts. If access is enforced only at the application layer, every new app, plugin, or workflow must reimplement the same authorization logic correctly. If one client gets it wrong, the search tier may still return content the user should never have seen. A strong design assumes that retrieval boundaries need to survive beyond a single front end.

Use RBAC to separate platform administration from content access

The first practical step is to stop treating administrative access and content access as the same thing. Azure roles that let someone manage the service are not the same as the rules that determine what content a user can retrieve. Among the built-in roles, for example, Search Service Contributor covers managing the service and its objects, while Search Index Data Reader covers querying index content. Platform teams need enough privilege to operate the search service, but they should not automatically become broad readers of every indexed dataset unless the business case truly requires it.

This separation matters operationally too. When a service owner can create indexes, manage skillsets, and tune performance, that does not mean they should inherit unrestricted visibility into HR files, finance records, or sensitive legal material. Distinct role boundaries reduce the blast radius of routine operations and make reviews easier later.
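
One way to keep that separation visible is to review role assignments for principals that hold both kinds of access. The sketch below is a minimal illustration, not a complete audit: the role names are Azure built-in roles as commonly documented and should be confirmed against current documentation, and the `assignments` list is a hypothetical export rather than the output of any specific tool.

```python
from collections import defaultdict

# Built-in role names (assumption: confirm against current Azure documentation).
ADMIN_ROLES = {"Search Service Contributor"}
CONTENT_ROLES = {"Search Index Data Reader", "Search Index Data Contributor"}


def find_mixed_principals(assignments):
    """Return principals that hold both an admin role and a content role."""
    roles_by_principal = defaultdict(set)
    for principal, role in assignments:
        roles_by_principal[principal].add(role)
    return sorted(
        principal
        for principal, roles in roles_by_principal.items()
        if roles & ADMIN_ROLES and roles & CONTENT_ROLES
    )


# Hypothetical export of role assignments for one search service.
assignments = [
    ("platform-ops", "Search Service Contributor"),
    ("hr-app", "Search Index Data Reader"),
    ("legacy-admin", "Search Service Contributor"),
    ("legacy-admin", "Search Index Data Reader"),
]

for principal in find_mixed_principals(assignments):
    print(f"Review needed: {principal} holds admin and content roles")
```

A principal flagged here is not necessarily wrong, but it should have a documented business reason.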

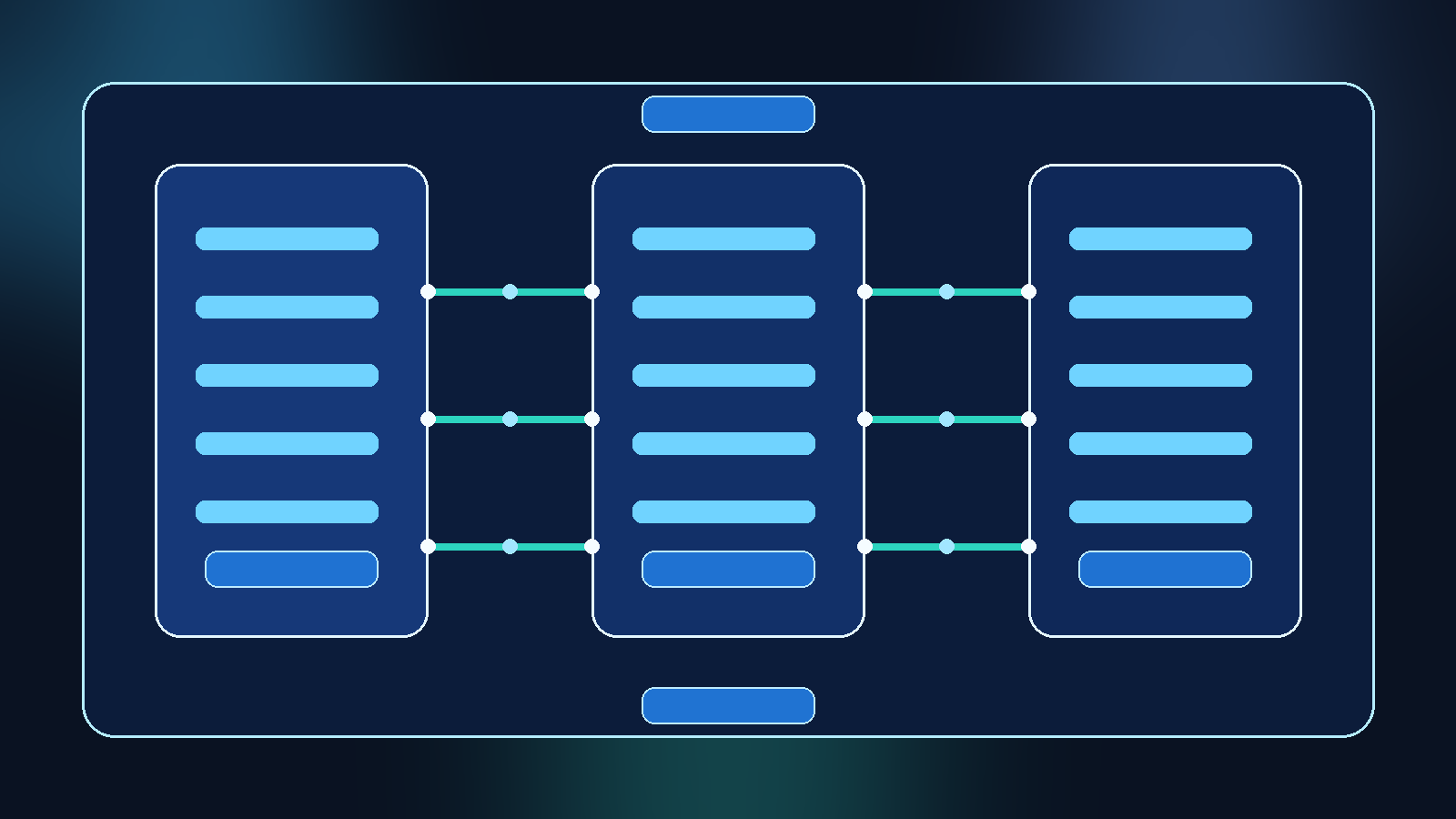

Keep indexes aligned to real data ownership boundaries

One of the most common design mistakes is building a giant shared index because it feels efficient at the start. In practice, the better pattern is usually to align indexes with a real ownership boundary such as business unit, sensitivity tier, or workload purpose. That creates a structure that mirrors how people already think about access.

Not every team needs its own index, but the default should lean toward intentional segmentation instead of convenience-driven aggregation. When content with different sensitivity levels lands in the same retrieval pool, exceptions multiply and governance gets harder. Smaller, purpose-built indexes often produce cleaner operations than one massive index with fragile filtering rules.
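
As a rough sketch of what intentional segmentation can look like in an ingestion pipeline, the routing table below maps an ownership boundary to an index name and refuses to guess when no boundary is defined. The owners, sensitivity tiers, and index names are all hypothetical.

```python
# Hypothetical mapping from (owner, sensitivity tier) to an index name.
INDEX_BY_BOUNDARY = {
    ("hr", "restricted"): "idx-hr-restricted",
    ("finance", "restricted"): "idx-finance-restricted",
    ("company", "general"): "idx-company-general",
}


def target_index(owner, sensitivity):
    """Pick the index for a document, failing closed on unknown boundaries."""
    key = (owner.lower(), sensitivity.lower())
    if key not in INDEX_BY_BOUNDARY:
        # No default index: an unmapped boundary is a design gap, not a
        # reason to drop the content into a shared pool.
        raise ValueError(f"No index defined for boundary {key}; refusing to index")
    return INDEX_BY_BOUNDARY[key]


print(target_index("HR", "Restricted"))
```

The important design choice is the missing fallback. A catch-all index is exactly how differently sensitive content ends up in the same retrieval pool.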

Apply document-level filtering only when the metadata is trustworthy

Sometimes teams do need shared infrastructure with document-level filtering. That can work, but only when the security metadata is accurate, complete, and maintained as part of the indexing pipeline. If a document loses its group mapping, keeps a stale entitlement value, or arrives without the expected sensitivity label, the retrieval layer may quietly drift away from the source-of-truth permissions.

This is why security filtering should be treated as a data quality problem as much as an authorization problem. The index must carry the right access attributes, the ingestion process must validate them, and failures should be visible instead of silently tolerated. Trusting filters without validating the underlying metadata is how teams create a false sense of safety.
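
A minimal sketch of that validation step, assuming each document carries a `group_ids` list and a `sensitivity` label (both field names and the allowed label values are illustrative, not a required schema):

```python
# Hypothetical set of sensitivity labels accepted by the pipeline.
ALLOWED_SENSITIVITY = {"general", "internal", "restricted"}


def validate_security_metadata(doc):
    """Return a list of problems with a document's access attributes."""
    problems = []
    groups = doc.get("group_ids")
    if not isinstance(groups, list) or not groups:
        problems.append("missing or empty group_ids")
    elif any(not isinstance(g, str) or not g.strip() for g in groups):
        problems.append("blank or non-string group id")
    if doc.get("sensitivity") not in ALLOWED_SENSITIVITY:
        problems.append("missing or unknown sensitivity label")
    return problems


def partition(docs):
    """Split documents into those safe to index and those to reject loudly."""
    accepted, rejected = [], []
    for doc in docs:
        problems = validate_security_metadata(doc)
        if problems:
            rejected.append((doc.get("id"), problems))
        else:
            accepted.append(doc)
    return accepted, rejected


docs = [
    {"id": "hr-001", "group_ids": ["hr-staff"], "sensitivity": "restricted"},
    {"id": "fin-002", "group_ids": [], "sensitivity": "restricted"},
    {"id": "gen-003", "group_ids": ["all-employees"]},
]
accepted, rejected = partition(docs)
for doc_id, problems in rejected:
    print(f"Rejected {doc_id}: {', '.join(problems)}")
```

Rejected documents are reported rather than indexed with defaults, which keeps metadata failures visible instead of silently tolerated.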

Design for group-based access, not one-off exceptions

Search authorization becomes brittle when it is built around hand-maintained exceptions. A handful of manual allowlists may seem manageable during a pilot, but they turn into cleanup debt as the project grows. Group-based access, ideally mapped to identity systems people already govern, gives teams a model they can audit and explain.

The discipline here is simple: if a person should see a set of documents, that should usually be because they belong to a governed group or role, not because someone patched them into a custom rule six months ago. The more access control depends on special cases, the less confidence you should have in the retrieval layer over time.
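
For illustration, a group-based filter can be derived entirely from the caller's group memberships. The sketch below builds an OData filter string using the `search.in` function, assuming the index has a filterable string collection field named `group_ids` (a hypothetical field name) and that group identifiers contain only letters, digits, and hyphens.

```python
import re

# Assumption: group identifiers look like GUIDs or simple slugs.
_GROUP_ID = re.compile(r"[A-Za-z0-9-]+")


def build_group_filter(group_ids, field="group_ids"):
    """Build an OData filter matching documents shared with any of the groups."""
    ids = sorted(set(group_ids))
    if not ids:
        # Fail closed: a user with no groups gets no query at all.
        raise PermissionError("caller has no group memberships")
    for gid in ids:
        if not _GROUP_ID.fullmatch(gid):
            # Reject anything that could break out of the quoted value list.
            raise ValueError(f"unexpected characters in group id: {gid!r}")
    joined = ",".join(ids)
    return f"{field}/any(g:search.in(g, '{joined}', ','))"


print(build_group_filter(["hr-staff", "all-employees"]))
```

Because the filter is computed from governed group memberships, there is no place in this path to patch in a one-off exception for an individual user.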

Test retrieval boundaries the same way you test relevance

Search teams are usually good at testing whether a document can be found. They are often less disciplined about testing whether a document is hidden from the wrong user. Both matter. A retrieval system that is highly relevant for the wrong audience is still a failure.

A practical review process includes negative tests for sensitive content, role-based test accounts, and sampled queries that try to cross known boundaries. If an HR user, a finance user, and a general employee all ask overlapping questions, the returned results should reflect their actual entitlements. This kind of testing should happen before launch and after any indexing or identity changes.
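
The shape of such a negative test can be sketched without a live service. In the example below, `search_as` is an in-memory stand-in for a real query made with a test account's identity, and the personas, groups, and document IDs are all hypothetical.

```python
# Hypothetical indexed documents with their access groups.
DOCS = [
    {"id": "hr-001", "group_ids": ["hr-staff"]},
    {"id": "fin-001", "group_ids": ["finance-staff"]},
    {"id": "gen-001", "group_ids": ["all-employees"]},
]

# Role-based test personas and the groups they belong to.
PERSONAS = {
    "hr_user": ["hr-staff", "all-employees"],
    "finance_user": ["finance-staff", "all-employees"],
    "general_employee": ["all-employees"],
}

# Documents each persona must never see, whatever the query.
FORBIDDEN = {
    "hr_user": {"fin-001"},
    "finance_user": {"hr-001"},
    "general_employee": {"hr-001", "fin-001"},
}


def search_as(groups):
    """Stand-in for a real search call scoped to the caller's groups."""
    allowed = set(groups)
    return {d["id"] for d in DOCS if allowed & set(d["group_ids"])}


def boundary_violations():
    """Return (persona, doc_id) pairs where forbidden content was returned."""
    violations = []
    for persona, groups in PERSONAS.items():
        leaked = search_as(groups) & FORBIDDEN[persona]
        violations.extend((persona, doc_id) for doc_id in sorted(leaked))
    return violations


violations = boundary_violations()
print("violations:", violations)
```

In a real suite, `search_as` would issue the same overlapping queries against the deployed index, and any non-empty violation list would block the release.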

Make auditability part of the design, not an afterthought

If a search service supports an internal AI assistant, someone will eventually ask why a result was returned. Good teams plan for that moment early. They keep enough logging to trace which index responded, which filters were applied, which identity context was used, and which connector supplied the content.

That does not mean keeping reckless amounts of sensitive query data forever. It means retaining enough evidence to review incidents, validate policy, and prove that access controls are doing what the design says they should do. Without auditability, every retrieval issue becomes an argument instead of an investigation.
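
One possible shape for that evidence is a small structured record per query. The sketch below is an assumption about what such a record might contain, not a prescribed schema: it keeps the index, filter, identity, and per-result connector, and stores a hash of the query text instead of the text itself.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user_id, index_name, filter_expr, query_text, results):
    """Build a log record that explains a retrieval without storing the raw query."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "index": index_name,
        "filter": filter_expr,
        # Hash rather than raw text, to limit retained sensitive query data.
        "query_sha256": hashlib.sha256(query_text.encode("utf-8")).hexdigest(),
        "results": [
            {"id": r["id"], "connector": r["connector"]} for r in results
        ],
    }


# Hypothetical values for illustration only.
record = audit_record(
    user_id="user-123",
    index_name="idx-hr-restricted",
    filter_expr="group_ids/any(g:search.in(g, 'hr-staff', ','))",
    query_text="parental leave policy",
    results=[{"id": "hr-001", "connector": "sharepoint", "score": 1.2}],
)
print(json.dumps(record, indent=2))
```

Whether to hash, truncate, or retain query text for a limited period is a policy decision. The point is that the record can answer which index, which filter, which identity, and which connector.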

Final takeaway

Azure AI Search is powerful precisely because it turns scattered content into something accessible. That same strength can become a weakness if teams treat retrieval as a neutral utility instead of a governed access path. The safest pattern is to keep platform roles separate from content permissions, align indexes to real ownership boundaries, validate security metadata, and test who cannot see results just as aggressively as you test who can.

A search index should make knowledge easier to reach, not easier to overshare. If the access model, meaning RBAC roles, index boundaries, and security filters together, cannot explain why a result is visible, the design is not finished yet.