Zero Trust is one of those security terms that sounds more complicated than it needs to be. At its core, Zero Trust means this: never assume a request is safe just because it comes from inside your network. Every user, device, and service has to prove it belongs — every time.

For cloud-native teams, this is not just a philosophy. It’s an operational reality. Traditional perimeter-based security doesn’t map cleanly onto microservices, multi-cloud architectures, or remote workforces. If your security model still relies on “inside the firewall = trusted,” you have a problem.

This guide walks through how to implement Zero Trust in a cloud-native environment — what the pillars are, where to start, and how to avoid the common traps.

What Zero Trust Actually Means

Zero Trust was formalized by NIST in Special Publication 800-207, but the concept predates the document. The core idea is that no implicit trust is ever granted to a request based on its network location alone. Instead, access decisions are made continuously based on verified identity, device health, context, and the least-privilege principle.

In practice, this maps to three foundational questions every access decision should answer:

- Who is making this request? (Identity — human or machine)

- From what? (Device posture — is the device healthy and managed?)

- To what? (Resource — what is being accessed, and is it appropriate?)

If any of those answers is missing or fails verification, access is denied. Period.
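The three questions can be sketched as a single deny-by-default decision function. This is a minimal illustration, not a real policy engine: the `AccessRequest` fields, the `ALLOWED` map, and the principal and resource names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AccessRequest:
    # Hypothetical fields; a real engine would receive verified claims
    # from the identity provider and the device management system.
    principal_id: Optional[str]        # who: None if identity is unverified
    device_compliant: Optional[bool]   # from what: None if posture is unknown
    resource: Optional[str]            # to what: the resource being requested


# Hypothetical least-privilege map of principals to the resources they may reach.
ALLOWED = {
    "alice@example.com": {"billing-db"},
    "svc-orders": {"orders-queue"},
}


def decide(request: AccessRequest) -> bool:
    """Return True only if all three questions have a verified, passing answer."""
    if request.principal_id is None:
        return False  # identity missing or unverified
    if request.device_compliant is not True:
        return False  # posture unknown (None) or non-compliant (False)
    if request.resource is None:
        return False  # nothing specific is being requested
    # Deny by default: anything not explicitly allowed is refused.
    return request.resource in ALLOWED.get(request.principal_id, set())
```

Note that an unknown device posture is treated the same as a failing one. A missing answer never defaults to "allow."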

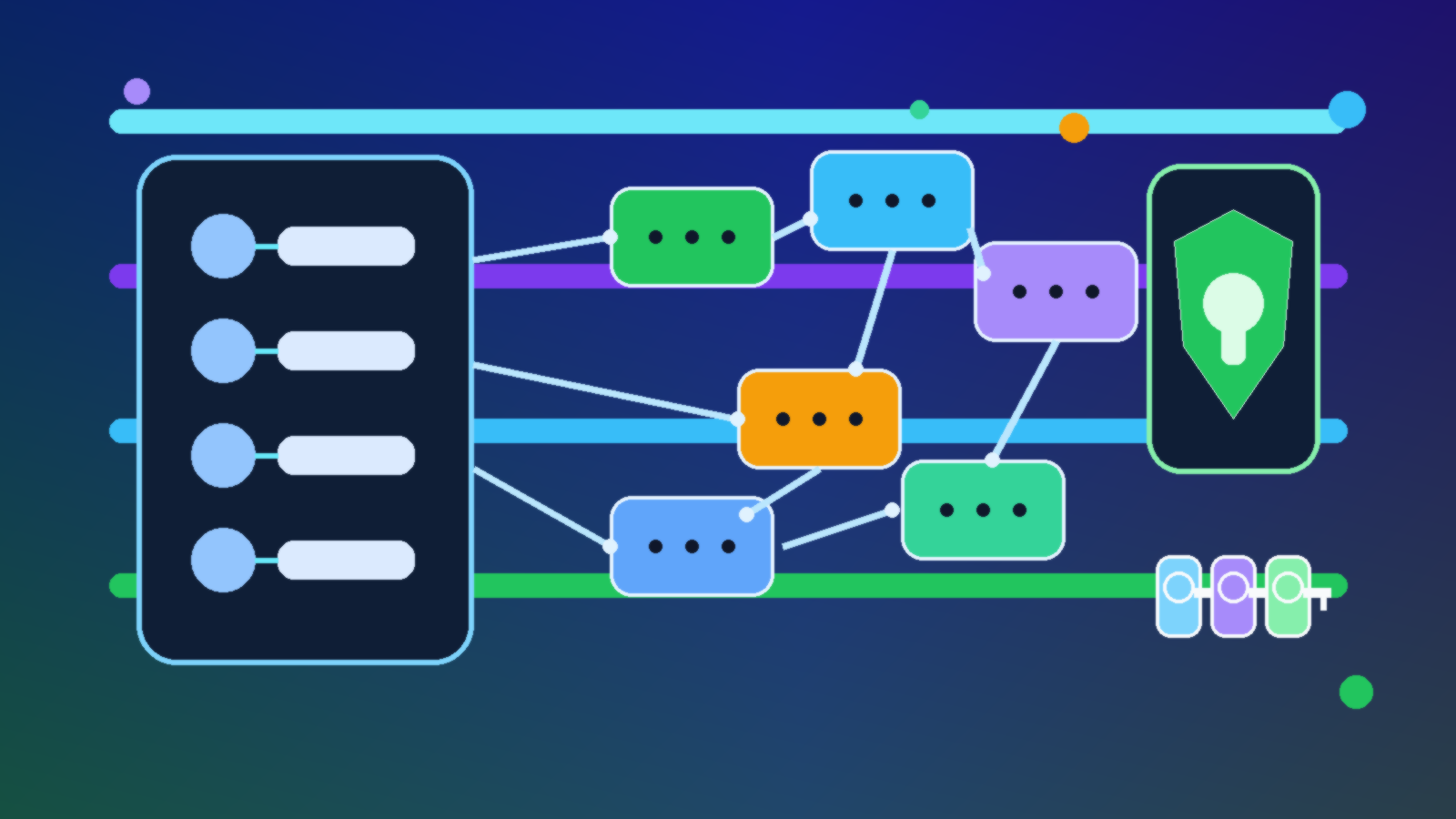

The Five Pillars of a Zero Trust Architecture

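CISA's Zero Trust Maturity Model organizes Zero Trust into five pillars (identity, devices, networks, applications and workloads, and data), which line up well with the tenets NIST sets out in SP 800-207. These are the key areas where trust decisions are made. Here is a practical breakdown for cloud-native teams.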

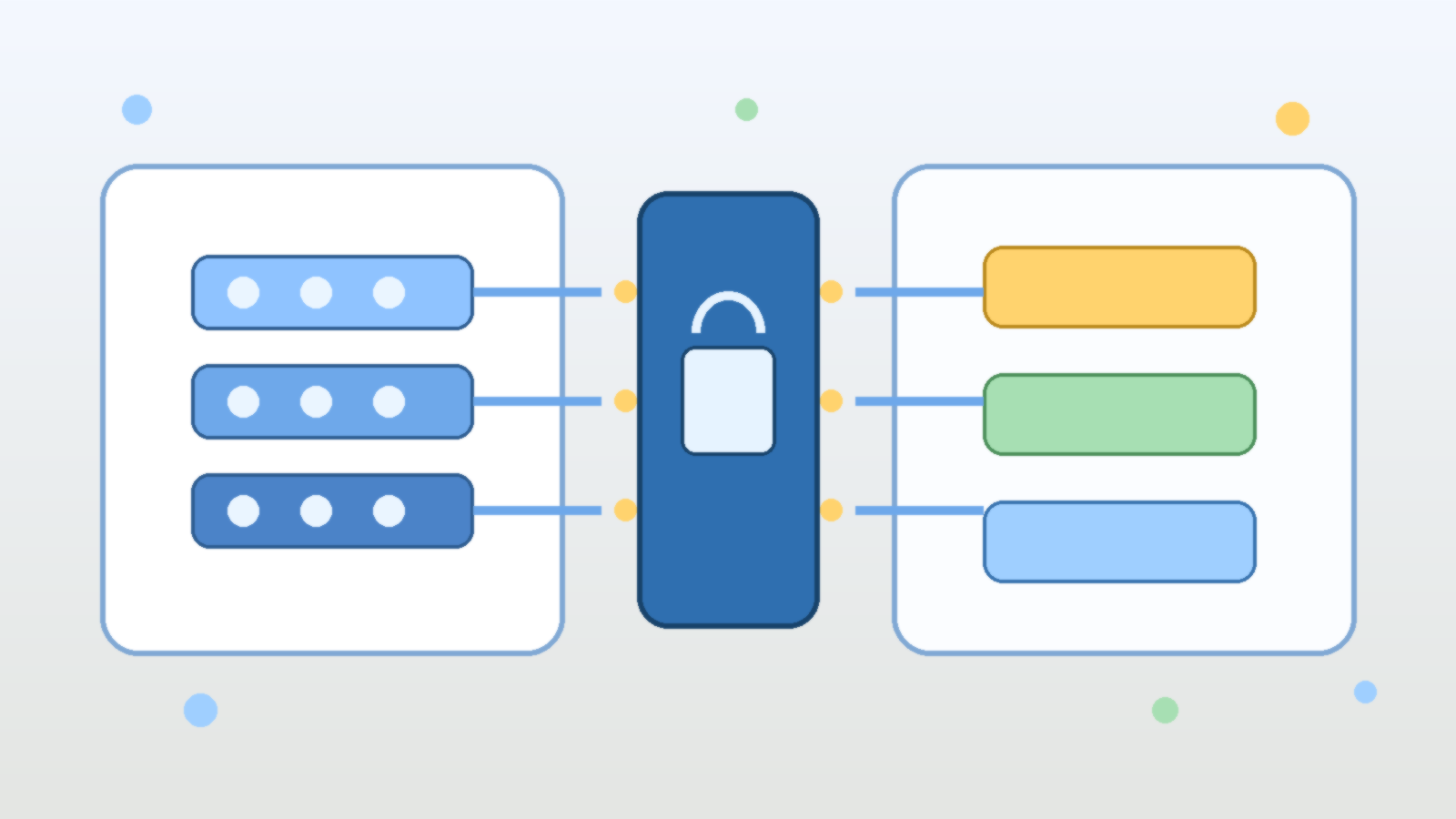

1. Identity

Identity is the foundation of Zero Trust. Every human user, service account, and API client must be authenticated before any resource access is granted. This means strong multi-factor authentication (MFA) for humans, and short-lived credentials (or workload identity) for services.

In Azure, this is where Microsoft Entra ID (formerly Azure AD) does the heavy lifting. Managed identities for Azure resources eliminate the need to store secrets in code. For cross-service calls, use workload identity federation rather than long-lived service principal secrets.

Key implementation steps: enforce MFA across all users, remove standing privileged access in favor of just-in-time (JIT) access, and audit service principal permissions regularly to eliminate over-permissioning.
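The idea behind just-in-time access is that a privileged role is granted for a bounded window and then expires on its own. In Azure this is typically handled by Microsoft Entra Privileged Identity Management; the sketch below only models the concept, and the `Grant` type and function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    principal: str
    role: str
    expires_at: datetime


def grant_jit(principal: str, role: str, now: datetime, minutes: int = 60) -> Grant:
    """Issue a time-boxed role grant instead of a standing assignment."""
    return Grant(principal, role, now + timedelta(minutes=minutes))


def is_active(grant: Grant, now: datetime) -> bool:
    """A grant is usable only before its expiry; nobody has to remember to revoke it."""
    return now < grant.expires_at


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
    g = grant_jit("alice@example.com", "Contributor", start, minutes=30)
    print(is_active(g, start + timedelta(minutes=10)))  # inside the window
    print(is_active(g, start + timedelta(minutes=31)))  # after expiry
```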

2. Device

Even a fully authenticated user can present risk if their device is compromised. Zero Trust requires device health as part of the access decision. Devices should be managed, patched, and compliant with your security baseline before they are permitted to reach sensitive resources.

In practice, this means integrating your mobile device management (MDM) solution — such as Microsoft Intune — with your identity provider, so that Conditional Access policies can block unmanaged or non-compliant devices at the gate. On the server side, use endpoint detection and response (EDR) tooling and ensure your container images are scanned and signed before deployment.

3. Network Segmentation

Zero Trust does not mean “no network controls.” It means network controls alone are not sufficient. Micro-segmentation is the goal: workloads should only be able to communicate with the specific other workloads they need to reach, and nothing else.

In Kubernetes environments, implement NetworkPolicy rules to restrict pod-to-pod communication (they are only enforced if your cluster's network plugin supports them). In Azure, use Virtual Network (VNet) segmentation, Network Security Groups (NSGs), and Azure Firewall to enforce east-west traffic controls between services. A service mesh such as Istio, which AKS offers as a managed add-on, can enforce mutual TLS (mTLS) between services, so traffic is authenticated and encrypted in transit even inside the cluster.
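As a rough sketch of what deny-by-default looks like in Kubernetes, the following builds two NetworkPolicy manifests as plain dictionaries: one that blocks all traffic in a namespace, and one that opens a single ingress path. The namespace, app labels, and port are hypothetical; the manifest fields follow the `networking.k8s.io/v1` NetworkPolicy schema.

```python
import json


def default_deny(namespace: str) -> dict:
    """NetworkPolicy that selects every pod in the namespace and allows nothing."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {
            "podSelector": {},  # empty selector matches all pods in the namespace
            "policyTypes": ["Ingress", "Egress"],  # no rules listed, so both are denied
        },
    }


def allow_ingress(namespace: str, to_app: str, from_app: str, port: int) -> dict:
    """Exception: pods labeled app=from_app may reach app=to_app on one TCP port."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{from_app}-to-{to_app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": to_app}},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": {"app": from_app}}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON manifests as well as YAML.
    print(json.dumps(default_deny("shop"), indent=2))
    print(json.dumps(allow_ingress("shop", "inventory", "orders", 8080), indent=2))
```

One practical caveat: denying all egress also blocks DNS lookups, so a real rollout usually needs an explicit egress exception for the cluster DNS service. Because the ingress exception above opens only one direction, the calling pods would need a matching egress exception as well.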

4. Application and Workload Access

Applications should not trust their callers implicitly just because the call arrives on the right internal port. Implement token-based authentication between services using short-lived tokens (OAuth 2.0 client credentials, OIDC tokens, or signed JWTs). Every API endpoint should validate the identity and permissions of the caller before processing a request.
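To make "validate the identity and permissions of the caller" concrete, here is a minimal sketch of signed-token validation using only the Python standard library. It assumes a shared-secret HS256 JWT purely to stay self-contained; production services more commonly verify asymmetric signatures (for example RS256) against the identity provider's published keys, using a maintained library. The claim values, audience, and scope names are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign(claims: dict, key: bytes) -> str:
    """Build an HS256 JWT (used here only to produce test tokens)."""
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url(json.dumps(claims).encode())
    sig = hmac.new(key, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url(sig)}"


def validate(token: str, key: bytes, audience: str, scope: str,
             now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the token is authentic, current, and authorized; else None."""
    now = time.time() if now is None else now
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        # Pin the algorithm instead of trusting whatever the token declares.
        if not isinstance(header, dict) or header.get("alg") != "HS256":
            return None
        expected = hmac.new(key, f"{header_b64}.{payload_b64}".encode(),
                            hashlib.sha256).digest()
        if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
            return None
        claims = json.loads(_b64url_decode(payload_b64))
    except ValueError:
        return None  # malformed token: wrong part count, bad base64, bad JSON
    if not isinstance(claims, dict):
        return None
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or exp <= now:
        return None  # missing or expired lifetime
    if claims.get("aud") != audience:
        return None  # token was issued for a different service
    if scope not in str(claims.get("scope", "")).split():
        return None  # authenticated, but not permitted to do this
    return claims
```

The order matters: the signature is checked before any claim is trusted, and a valid signature alone is not enough. The token must also be unexpired, addressed to this service, and carry the required scope.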

Azure API Management can serve as a centralized enforcement point: validate tokens, rate-limit callers, strip internal headers before forwarding, and log all traffic for audit purposes. This centralizes your security policy enforcement without requiring every service team to build their own auth stack.
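In API Management these controls are expressed as gateway policies rather than application code, but the underlying logic is simple enough to sketch. The example below is a toy illustration of two of them, header stripping and per-caller rate limiting; the `x-internal-` prefix and the class and function names are hypothetical conventions, not anything API Management defines.

```python
from dataclasses import dataclass, field

# Hypothetical convention: headers with this prefix are only set by internal services.
INTERNAL_HEADER_PREFIXES = ("x-internal-",)


def strip_internal_headers(headers: dict) -> dict:
    """Drop headers a caller could spoof to impersonate an internal component."""
    return {
        name: value
        for name, value in headers.items()
        if not name.lower().startswith(INTERNAL_HEADER_PREFIXES)
    }


@dataclass
class FixedWindowLimiter:
    """Allow at most `limit` requests per caller in each fixed time window."""
    limit: int
    window_seconds: int
    _counts: dict = field(default_factory=dict)

    def allow(self, caller: str, now: float) -> bool:
        window = int(now // self.window_seconds)
        key = (caller, window)
        count = self._counts.get(key, 0)
        if count >= self.limit:
            return False
        # Old windows are never evicted here; a real limiter would expire them.
        self._counts[key] = count + 1
        return True
```

Keying the limiter on the authenticated caller identity, rather than on source IP, keeps the control aligned with the Zero Trust principle that network location is not an identity.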

5. Data

The ultimate goal of Zero Trust is protecting data. Classification is the prerequisite: you cannot protect what you have not categorized. Identify your sensitive data assets, apply appropriate labels, and use those labels to drive access policy.

In Azure, Microsoft Purview provides data discovery and classification across your cloud estate. Pair it with Azure Key Vault for secrets management, customer-managed keys (CMK) for encryption at rest, and Private Endpoints to ensure data stores are not reachable from the public internet. Enforce data residency and access boundaries with Azure Policy.
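One way to picture labels driving access policy is a table that maps each classification to the controls a request must satisfy. The label names and control names below are hypothetical and do not correspond to built-in Purview labels.

```python
from typing import Optional

# Hypothetical mapping from classification label to required controls.
REQUIREMENTS = {
    "public": set(),
    "internal": {"mfa"},
    "confidential": {"mfa", "compliant_device"},
    "restricted": {"mfa", "compliant_device", "private_network"},
}


def may_access(label: Optional[str], satisfied: set) -> bool:
    """Allow access only if every control required by the data's label is satisfied."""
    required = REQUIREMENTS.get(label)
    if required is None:
        # Unlabeled or unknown data cannot be matched to a policy, so refuse it.
        return False
    return required <= satisfied
```

Refusing unlabeled data is a deliberate choice in this sketch: it turns "you cannot protect what you have not categorized" into an enforced rule rather than a reminder.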

Where Cloud-Native Teams Should Start

A full Zero Trust transformation is a multi-year effort. Teams trying to do everything at once usually end up doing nothing well. Here is a pragmatic starting sequence.

Start with identity. Enforce MFA, remove shared credentials, and eliminate long-lived service principal secrets. This is the highest-impact work you can do with the least architectural disruption. Many cloud breaches trace back to a compromised credential or an over-privileged service account, so fixing identity first closes a large class of risk quickly.

Then reduce your network exposure. Move sensitive workloads off public endpoints. Use Private Endpoints and VNet integration so that your databases, storage accounts, and internal APIs are not reachable from the internet. Apply Conditional Access policies so that access to your management plane requires a compliant, managed device.

Layer in micro-segmentation gradually. Start by auditing which services actually need to talk to which. You will often find that the answer is “far fewer than currently allowed.” Implement deny-by-default NSG or NetworkPolicy rules and add exceptions only as needed. This is operationally harder but dramatically limits blast radius when something goes wrong.
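The audit step can be as simple as comparing what is allowed against what is actually observed. The sketch below assumes you can export both as (source, destination) pairs, for example from flow logs; the service names are hypothetical.

```python
def unused_rules(allowed: set, observed: list) -> set:
    """Allowed paths that never showed up in traffic: candidates for removal."""
    return allowed - set(observed)


def unexpected_flows(allowed: set, observed: list) -> set:
    """Observed traffic with no matching rule: investigate before enforcing deny-by-default."""
    return set(observed) - allowed


if __name__ == "__main__":
    allowed = {
        ("orders", "inventory"),
        ("orders", "billing"),
        ("frontend", "orders"),
        ("frontend", "billing"),
    }
    observed = [
        ("frontend", "orders"),
        ("orders", "inventory"),
        ("orders", "inventory"),
        ("reports", "billing"),
    ]
    print(sorted(unused_rules(allowed, observed)))
    print(sorted(unexpected_flows(allowed, observed)))
```

A short observation window will miss infrequent but legitimate paths (month-end jobs, failover traffic), so treat the "unused" list as a set of questions for service owners rather than a deletion list.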

Build visibility into everything. Zero Trust without observability is blind. Enable diagnostic logs on all control plane activities, forward them to a SIEM (like Microsoft Sentinel), and build alerts on anomalous behavior — unusual sign-in locations, privilege escalations, unexpected lateral movement between services.
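As an illustration of the kind of rule such an alert encodes, here is a deliberately naive detector for sign-ins from a location a user has not been seen in before. In practice this logic lives in your SIEM's query and analytics rules; the event shape (user, country) is an assumption made for the sketch.

```python
from collections import defaultdict


def new_location_alerts(events):
    """Flag sign-ins from a country not previously seen for that user.

    `events` is an iterable of (user, country) tuples in chronological order.
    A user's first-ever sign-in is treated as the baseline and not flagged.
    """
    seen = defaultdict(set)
    alerts = []
    for user, country in events:
        if seen[user] and country not in seen[user]:
            alerts.append((user, country))
        seen[user].add(country)
    return alerts


if __name__ == "__main__":
    sign_ins = [
        ("alice", "DE"),
        ("alice", "DE"),
        ("alice", "BR"),
        ("bob", "US"),
    ]
    print(new_location_alerts(sign_ins))  # [('alice', 'BR')]
```

A rule this simple will be noisy for people who travel, which is why real detections usually combine several signals (location, device, time of day, impossible travel) before raising an alert.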

Common Mistakes to Avoid

Zero Trust implementations fail in predictable ways. Here are the ones worth watching for.

Treating Zero Trust as a product, not a strategy. Vendors will happily sell you a “Zero Trust solution.” No single product delivers Zero Trust. It is an architecture and a mindset applied across your entire estate. Products can help implement specific pillars, but the strategy has to come from your team.

Skipping device compliance. Many teams enforce strong identity but overlook device health. If device compliance is not tied into your Conditional Access policies, an attacker holding phished credentials or a stolen session token can sign in from an unmanaged device and sidestep many of your identity controls.

Over-relying on VPN as a perimeter substitute. VPN is not Zero Trust. A traditional VPN typically grants broad network access to anyone who authenticates to it. If you are using VPN as your primary access control mechanism for cloud resources, you are still operating on a perimeter model; you’ve just moved the perimeter to the VPN endpoint.

Neglecting service-to-service authentication. Human identity gets attention. Service identity gets forgotten. Review your service principal permissions, eliminate any with Owner or Contributor at the subscription level, and replace long-lived secrets with managed identities wherever the platform supports it.
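The permission review described above is easy to automate once role assignments are exported. The sketch below assumes a list of dictionaries with `principalName`, `principalType`, `roleDefinitionName`, and `scope` keys, modeled loosely on the JSON that `az role assignment list` produces; treat the exact field names as an assumption and adjust them to your export. The sample data is invented.

```python
import re

# A scope of exactly /subscriptions/<id> means the role applies subscription-wide.
SUBSCRIPTION_SCOPE = re.compile(r"^/subscriptions/[^/]+/?$")
BROAD_ROLES = {"Owner", "Contributor"}


def over_privileged(assignments: list) -> list:
    """Return service principal assignments holding a broad role at subscription scope."""
    return [
        a
        for a in assignments
        if a.get("principalType") == "ServicePrincipal"
        and a.get("roleDefinitionName") in BROAD_ROLES
        and SUBSCRIPTION_SCOPE.match(a.get("scope", ""))
    ]


if __name__ == "__main__":
    sample = [
        {"principalName": "ci-deployer", "principalType": "ServicePrincipal",
         "roleDefinitionName": "Contributor",
         "scope": "/subscriptions/00000000-0000-0000-0000-000000000000"},
        {"principalName": "orders-api", "principalType": "ServicePrincipal",
         "roleDefinitionName": "Contributor",
         "scope": "/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/orders"},
        {"principalName": "alice@example.com", "principalType": "User",
         "roleDefinitionName": "Owner",
         "scope": "/subscriptions/00000000-0000-0000-0000-000000000000"},
    ]
    for a in over_privileged(sample):
        print(a["principalName"], a["roleDefinitionName"])
```

The check only covers service principals at subscription scope, matching the text above. Assignments inherited from a management group are equally broad and would need a similar check on their own scope format.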

Zero Trust and the Shared Responsibility Model

Cloud providers handle security of the cloud — the physical infrastructure, hypervisor, and managed service availability. You are responsible for security in the cloud — your data, your identities, your network configurations, your application code.

Zero Trust is how you meet that responsibility. The cloud makes it easier in some ways: managed identity services, built-in encryption, platform-native audit logging, and Conditional Access are all available without standing up your own infrastructure. But easier does not mean automatic. The controls have to be configured, enforced, and monitored.

Teams that treat Zero Trust as a checkbox exercise — “we enabled MFA, done” — will have a rude awakening the first time they face a serious incident. Teams that treat it as a continuous improvement practice — regularly reviewing permissions, testing controls, and tightening segmentation — build security posture that actually holds up under pressure.

The Bottom Line

Zero Trust is not a product you buy. It is a way of designing systems so that compromise of one component does not automatically mean compromise of everything. For cloud-native teams, it is the right answer to a fundamental problem: your workloads, users, and data are distributed across environments that no single firewall can contain.

Start with identity. Shrink your blast radius. Build visibility. Iterate. That is Zero Trust done practically — not as a marketing concept, but as a real reduction in risk.