Azure OpenAI projects usually do not fail because the model is unavailable. They fail because the organization never decided how shared capacity should be allocated once multiple teams want the same thing at the same time. One pilot gets plenty of headroom, a second team arrives with a deadline, a third team suddenly wants higher throughput for a demo, and finance starts asking why the new AI platform already feels unpredictable.

The technical conversation often gets reduced to tokens per minute, requests per minute, or whether provisioned capacity is justified yet. Those details matter, but they are not the whole problem. The real issue is operational ownership. If nobody defines who gets quota, how it is reviewed, and what happens when demand spikes, every model launch turns into a rushed negotiation between engineering, platform, and budget owners.

Quota Problems Usually Start as Ownership Problems

Many internal teams begin with one shared Azure OpenAI resource and one optimistic assumption: there will be time to organize quotas later. That works while usage is light. Once multiple workloads compete for throughput, the shared pool becomes political. The loudest team asks for more. The most visible launch gets protected first. Smaller internal apps absorb throttling even if they serve important employees.

That is why quota planning should be treated like service design instead of a one-time technical setting. Someone needs to own the allocation model, the exceptions process, and the review cadence. Without that, quota decisions drift into ad hoc favors, and every surprise HTTP 429 (rate limit) response becomes an argument about whose workload matters more.

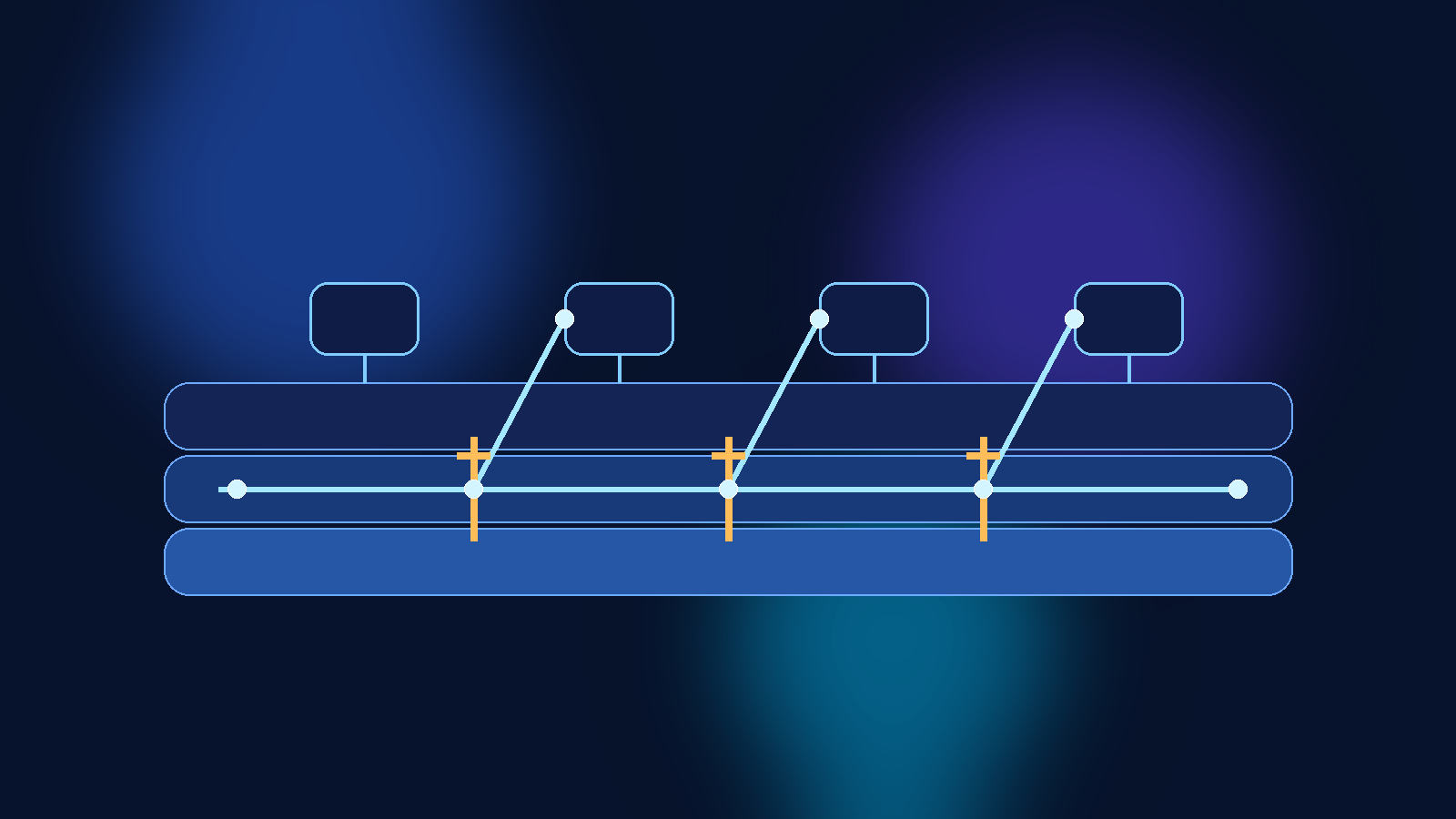

Separate Baseline Capacity From Burst Requests

A practical pattern is to define a baseline allocation for each internal team or application, then handle temporary spikes as explicit burst requests instead of pretending every workload deserves permanent peak capacity. Baseline quota should reflect normal operating demand, not launch-day nerves. Burst handling should cover events like executive demos, migration waves, training sessions, or a newly onboarded business unit.

This matters because permanent over-allocation hides waste. Teams rarely give capacity back voluntarily once they have it. If the platform group allocates quota based on hypothetical worst-case usage for everyone, the result is a bloated plan that still does not feel fair. A baseline-plus-burst model is more honest. It admits that some demand is real and recurring, while some demand is temporary and should be treated that way.
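One way to keep the distinction visible is to record burst capacity as a dated grant rather than editing the baseline number. The following is a minimal sketch of that idea, not a description of any Azure feature: the `TeamAllocation` and `BurstGrant` names, the team name, and all figures are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class BurstGrant:
    """A temporary, dated increase on top of a team's baseline (hypothetical)."""
    extra_tpm: int
    reason: str
    starts: date
    ends: date  # inclusive; the grant stops counting after this day

    def active_on(self, day: date) -> bool:
        return self.starts <= day <= self.ends


@dataclass
class TeamAllocation:
    team: str
    baseline_tpm: int  # sized for normal operating demand
    bursts: list[BurstGrant] = field(default_factory=list)

    def effective_tpm(self, day: date) -> int:
        """Baseline plus whichever burst grants are active on the given day."""
        return self.baseline_tpm + sum(
            b.extra_tpm for b in self.bursts if b.active_on(day)
        )


# Illustrative numbers only.
support_bot = TeamAllocation(team="support-bot", baseline_tpm=40_000)
support_bot.bursts.append(
    BurstGrant(
        extra_tpm=20_000,
        reason="executive demo",
        starts=date(2025, 3, 10),
        ends=date(2025, 3, 12),
    )
)

print(support_bot.effective_tpm(date(2025, 3, 11)))  # inside the demo window
print(support_bot.effective_tpm(date(2025, 3, 20)))  # after the grant has lapsed
```

Because each grant carries its own end date, the extra capacity expires by default and nobody has to ask for it back.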

Tie Quota to a Named Service Owner and a Business Use Case

Do not assign significant Azure OpenAI quota to anonymous experimentation. If a workload needs meaningful capacity, it should have a named owner, a clear user population, and a documented business purpose. That does not need to become a heavy governance board, but it should be enough to answer a few basic questions: who runs this service, who uses it, what happens if it is throttled, and what metric proves the allocation is still justified.

This simple discipline improves both cost control and incident response. When quotas are tied to identifiable services, platform teams can see which internal products deserve priority, which are dormant, and which are still living on last quarter’s assumptions.
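The basic questions above can be captured in a lightweight intake record instead of a governance board. This is a sketch under the assumption that requests are tracked in code or a small internal tool; the `QuotaRequest` fields and the example values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class QuotaRequest:
    """Hypothetical intake record for a meaningful quota allocation."""
    service: str
    owner: str                 # a named person or team
    user_population: str       # who uses the service
    business_purpose: str
    throttling_impact: str     # what happens to users if it is throttled
    justification_metric: str  # what proves the allocation is still justified
    requested_tpm: int


def missing_answers(request: QuotaRequest) -> list[str]:
    """Return the names of text fields that were left blank."""
    return [
        f.name
        for f in fields(request)
        if isinstance(getattr(request, f.name), str)
        and not getattr(request, f.name).strip()
    ]


# Illustrative request with no named owner.
draft = QuotaRequest(
    service="contract-summarizer",
    owner="",
    user_population="legal operations staff",
    business_purpose="first-pass summaries of vendor contracts",
    throttling_impact="summaries are delayed; manual review continues",
    justification_metric="weekly active reviewers",
    requested_tpm=30_000,
)

print(missing_answers(draft))
```

A request that comes back with a non-empty list is not rejected; it simply is not ready for significant capacity yet.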

Use Showback Before You Need Full Chargeback

Organizations often avoid quota governance because they think the only serious option is full financial chargeback. That is overkill for many internal AI programs, especially early on. Showback is usually enough to improve behavior. If each team can see its approximate usage, reserved capacity, and the cost consequence of keeping extra headroom, conversations get much more grounded.

Showback changes the tone from “the platform is blocking us” to “we are asking the platform to reserve capacity for this workload, and here is why.” That is a healthier discussion. It also gives finance and engineering a shared language without forcing every prototype into a billing maze too early.
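A showback report does not need a billing system behind it. As a rough sketch, assuming you can export per-team token totals and peak usage from your own monitoring, a single summary line per team might be computed as follows. The `TeamUsage` fields are hypothetical, and the price is a placeholder, not a real Azure OpenAI rate.

```python
from dataclasses import dataclass


@dataclass
class TeamUsage:
    team: str
    reserved_tpm: int     # capacity held for the team
    peak_used_tpm: int    # busiest observed minute in the period
    tokens_consumed: int  # total tokens in the period


def showback_line(usage: TeamUsage, price_per_1k_tokens: float) -> dict:
    """Summarize one team's approximate spend, peak utilization, and idle headroom."""
    if usage.reserved_tpm > 0:
        utilization = usage.peak_used_tpm / usage.reserved_tpm
    else:
        utilization = 0.0
    return {
        "team": usage.team,
        "approx_cost": round(usage.tokens_consumed / 1000 * price_per_1k_tokens, 2),
        "peak_utilization": round(utilization, 2),
        "idle_tpm": max(usage.reserved_tpm - usage.peak_used_tpm, 0),
    }


# Placeholder price and illustrative usage figures.
line = showback_line(
    TeamUsage(
        team="hr-assistant",
        reserved_tpm=50_000,
        peak_used_tpm=20_000,
        tokens_consumed=12_000_000,
    ),
    price_per_1k_tokens=0.002,
)
print(line)
```

The sketch reports idle headroom without pricing it, because what unused capacity actually costs depends on how the deployment is billed.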

Design for Throttling Instead of Acting Shocked by It

Even with good allocation, some workloads will still hit limits. That should not be treated as a scandal. Throttling is expected behavior, and applications should be designed to handle it gracefully. Queueing, retries with backoff, workload prioritization, caching, and fallback models all belong in the engineering plan long before production traffic arrives.
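Of the techniques listed, retries with backoff is the easiest to get subtly wrong. The sketch below shows capped exponential backoff with full jitter around an arbitrary callable. It deliberately avoids any specific SDK: `RateLimited` is a hypothetical stand-in for whatever exception your client raises on a 429, and `retry_after` assumes your client exposes the server's suggested wait, if one was sent.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical stand-in for the error your client raises on HTTP 429."""

    def __init__(self, retry_after: Optional[float] = None):
        super().__init__("rate limited")
        self.retry_after = retry_after  # seconds, if the server suggested a wait


def call_with_backoff(
    send: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call send(), retrying on RateLimited with capped exponential backoff."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RateLimited as err:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the caller queue or degrade
            if err.retry_after is not None:
                delay = err.retry_after
            else:
                # Full jitter: a random wait up to the capped exponential bound.
                delay = random.uniform(0, min(max_delay, base_delay * 2 ** attempt))
            sleep(delay)
    raise ValueError("max_attempts must be at least 1")


# Demo with a fake sleep so the example runs instantly.
attempts = {"count": 0}
waits: list[float] = []


def flaky_request() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimited(retry_after=2.0)
    return "ok"


result = call_with_backoff(flaky_request, sleep=waits.append)
print(result, waits)
```

The jitter matters in a shared pool: without it, every throttled client retries at the same moment and recreates the spike that caused the throttling.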

The important governance point is that application teams should not assume the platform will always solve a usage spike by handing out more quota. Sometimes the right answer is better request shaping, tighter prompt design, or a service-level decision about which users and actions deserve priority when demand exceeds the happy path.
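A service-level priority decision can be as simple as holding back a share of the per-minute budget for critical traffic. The following is a minimal sketch of that admission rule, assuming the application tracks its own token spend per window; `MinuteBudget`, the 25% reserve, and the numbers are hypothetical, and resetting the window each minute is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class MinuteBudget:
    """Tracks token spend inside one rate-limit window (hypothetical helper)."""
    limit_tpm: int
    reserved_for_critical: float = 0.25  # share held back for priority traffic
    used: int = 0

    def admit(self, tokens: int, critical: bool) -> bool:
        """Admit the request if it fits under the ceiling for its priority class."""
        if critical:
            ceiling = self.limit_tpm
        else:
            ceiling = int(self.limit_tpm * (1 - self.reserved_for_critical))
        if self.used + tokens > ceiling:
            return False  # caller should queue, shed, or fall back
        self.used += tokens
        return True


budget = MinuteBudget(limit_tpm=10_000)
results = [
    budget.admit(7_000, critical=False),  # fits under the non-critical ceiling
    budget.admit(1_000, critical=False),  # would exceed the non-critical ceiling
    budget.admit(1_000, critical=True),   # critical traffic may use the reserve
]
print(results)  # [True, False, True]
```

Which requests count as critical is exactly the service-level decision described above; the code only enforces it.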

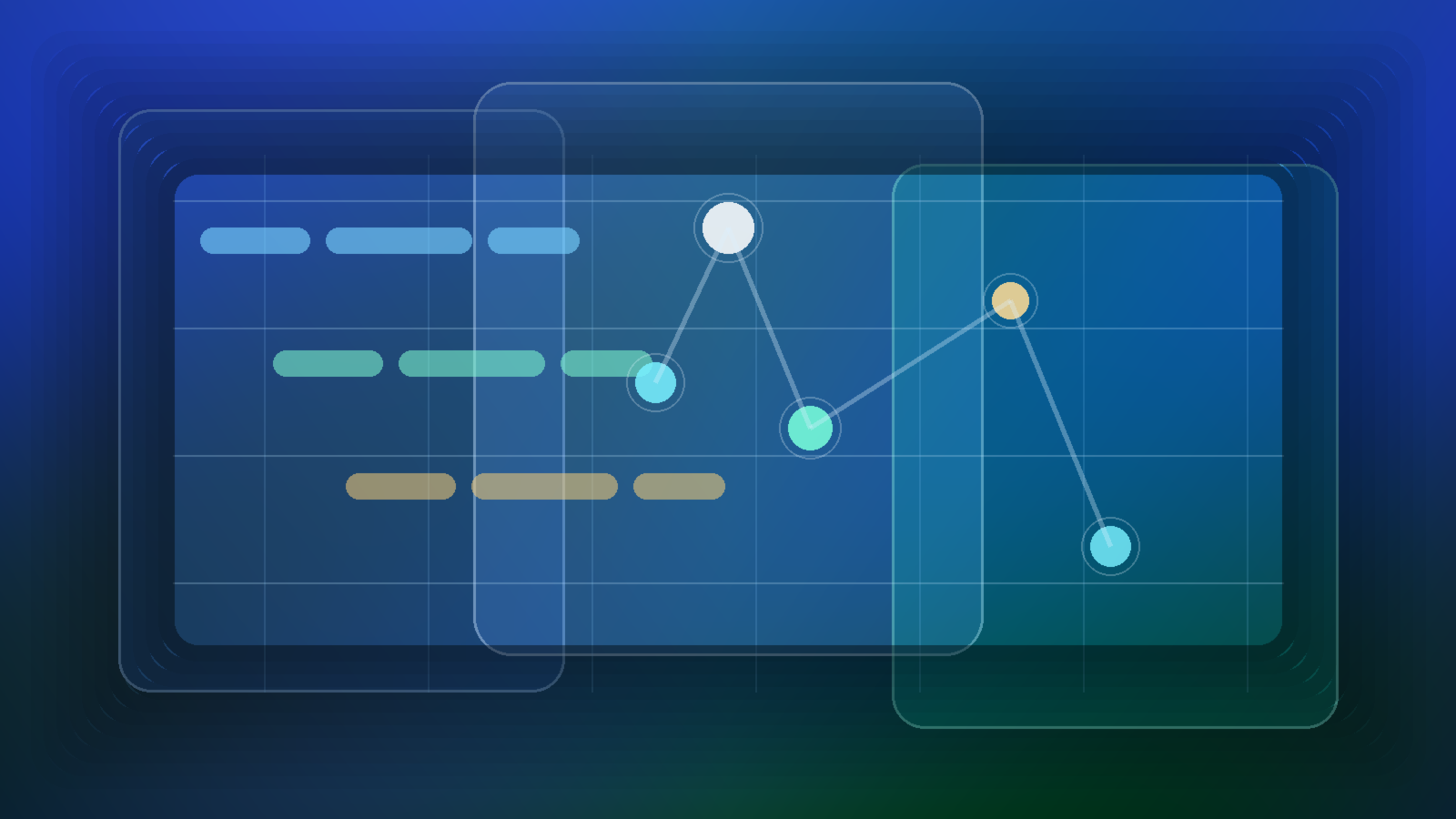

Review Quotas on a Calendar, Not Only During Complaints

If quota reviews only happen during incidents, the review process will always feel punitive. A better pattern is a simple recurring check, often monthly or quarterly depending on scale, where platform and service owners look at utilization, recent throttling, upcoming launches, and idle allocations. That makes redistribution normal instead of dramatic.

These reviews should be short and practical. The goal is not to produce another governance document nobody reads. The goal is to keep the capacity model aligned with reality before the next internal launch or leadership demo creates avoidable pressure.
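To keep the review short, the first pass over the numbers can be automated so the meeting only covers the rows that need a decision. This is a sketch under assumed inputs: the `ReviewRow` fields and the 30% and 85% thresholds are hypothetical and should be tuned to your own environment.

```python
from dataclasses import dataclass


@dataclass
class ReviewRow:
    service: str
    allocated_tpm: int
    p95_used_tpm: int        # 95th-percentile minute over the review period
    throttled_requests: int  # count of 429 responses over the same period


def review_action(
    row: ReviewRow, idle_below: float = 0.30, tight_above: float = 0.85
) -> str:
    """Suggest a talking point for the review; a person still makes the call."""
    if row.allocated_tpm <= 0:
        return "no allocation"
    utilization = row.p95_used_tpm / row.allocated_tpm
    if row.throttled_requests > 0 and utilization >= tight_above:
        return "discuss increase"
    if row.throttled_requests == 0 and utilization < idle_below:
        return "candidate to reclaim"
    return "no change"


# Illustrative rows only.
rows = [
    ReviewRow("search-assist", 60_000, 57_000, 140),
    ReviewRow("wiki-qa", 40_000, 6_000, 0),
    ReviewRow("hr-assistant", 30_000, 18_000, 0),
]
actions = {row.service: review_action(row) for row in rows}
print(actions)
```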

Provisioned Capacity Should Follow Predictability, Not Prestige

Some teams push for provisioned capacity because it sounds more mature or more strategic. That is not a good reason. Provisioned throughput makes the most sense when a workload is steady enough, important enough, and predictable enough to justify that commitment. It is a capacity planning tool, not a trophy for the most influential internal sponsor.

If your traffic pattern is still exploratory, standard shared capacity with stronger governance may be the better fit. If a workload has a stable usage floor and meaningful business dependency, moving part of its demand to provisioned capacity can reduce drama for everyone else. The point is to decide based on workload shape and operational confidence, not on who escalates hardest.
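One rough way to test whether a workload has a stable usage floor is to look at a low percentile of its hourly usage and ask how much of the total traffic sits at or below that level. The sketch below is a heuristic of my own for illustration, not an Azure sizing method; the function names, the 10th-percentile choice, and the sample data are all assumptions.

```python
def usage_floor(hourly_tokens: list[int], percentile: float = 0.10) -> int:
    """Return a low-percentile hourly usage value as a rough 'floor'."""
    if not hourly_tokens:
        return 0
    ordered = sorted(hourly_tokens)
    index = min(int(len(ordered) * percentile), len(ordered) - 1)
    return ordered[index]


def floor_share(hourly_tokens: list[int], floor: int) -> float:
    """Fraction of total usage that a steady commitment at `floor` would cover."""
    total = sum(hourly_tokens)
    if total == 0:
        return 0.0
    covered = sum(min(hour, floor) for hour in hourly_tokens)
    return covered / total


# Illustrative hourly token counts (in thousands).
steady = [100, 110, 105, 95, 100, 120, 98, 102, 101, 99]
spiky = [0, 0, 5, 0, 900, 0, 10, 0, 0, 400]

for name, series in (("steady", steady), ("spiky", spiky)):
    floor = usage_floor(series)
    print(name, floor, round(floor_share(series, floor), 2))
```

A workload where most traffic sits under the floor looks like a candidate for moving part of its demand to provisioned capacity. One where the floor is near zero is still exploratory, whatever its sponsor says.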

Final Takeaway

Azure OpenAI quota governance works best when it is boring. Define baseline allocations, make burst requests explicit, tie capacity to named owners, show teams what their reservations cost, and review the model before contention becomes a firefight. That turns quota from a budget argument into a service management practice.

When internal AI platforms skip that discipline, every new launch feels urgent and every limit feels unfair. When they adopt it, teams still have hard conversations, but at least those conversations happen inside a system that makes sense.