Enterprise AI teams love to debate model quality, benchmark scores, and which vendor roadmap looks strongest this quarter. Those conversations matter, but they are rarely the first thing that causes trouble once several internal teams begin sharing the same AI platform. In practice, the cracks usually appear at the policy layer. A team gets access to an endpoint it should not have, another team sends more data than expected, a prototype starts calling an expensive model without guardrails, and nobody can explain who approved the path in the first place.

That is why AI gateways deserve more attention than they usually get. The gateway is not just a routing convenience between applications and models. It is the enforcement point where an organization decides what is allowed, what is logged, what is blocked, and what gets treated differently depending on risk. When multiple teams share models, tools, and data paths, strong gateway policy often matters more than shaving a few points off a benchmark comparison.

Model Choice Is Visible, Policy Failure Is Expensive

Model decisions are easy to discuss because they are visible. People can compare price, latency, context windows, and output quality. Gateway policy is less glamorous. It lives in rules, headers, route definitions, authentication settings, rate limits, and approval logic. Yet that is where production risk actually gets shaped. A mediocre policy design can turn an otherwise solid model rollout into a governance mess.

For example, one internal team may need access to a premium reasoning model for a narrow workflow, while another should only use a cheaper general-purpose model for low-risk tasks. If both can hit the same backend without strong gateway control, the platform loses cost discipline and technical separation immediately. The model may be excellent, but the operating model is already weak.

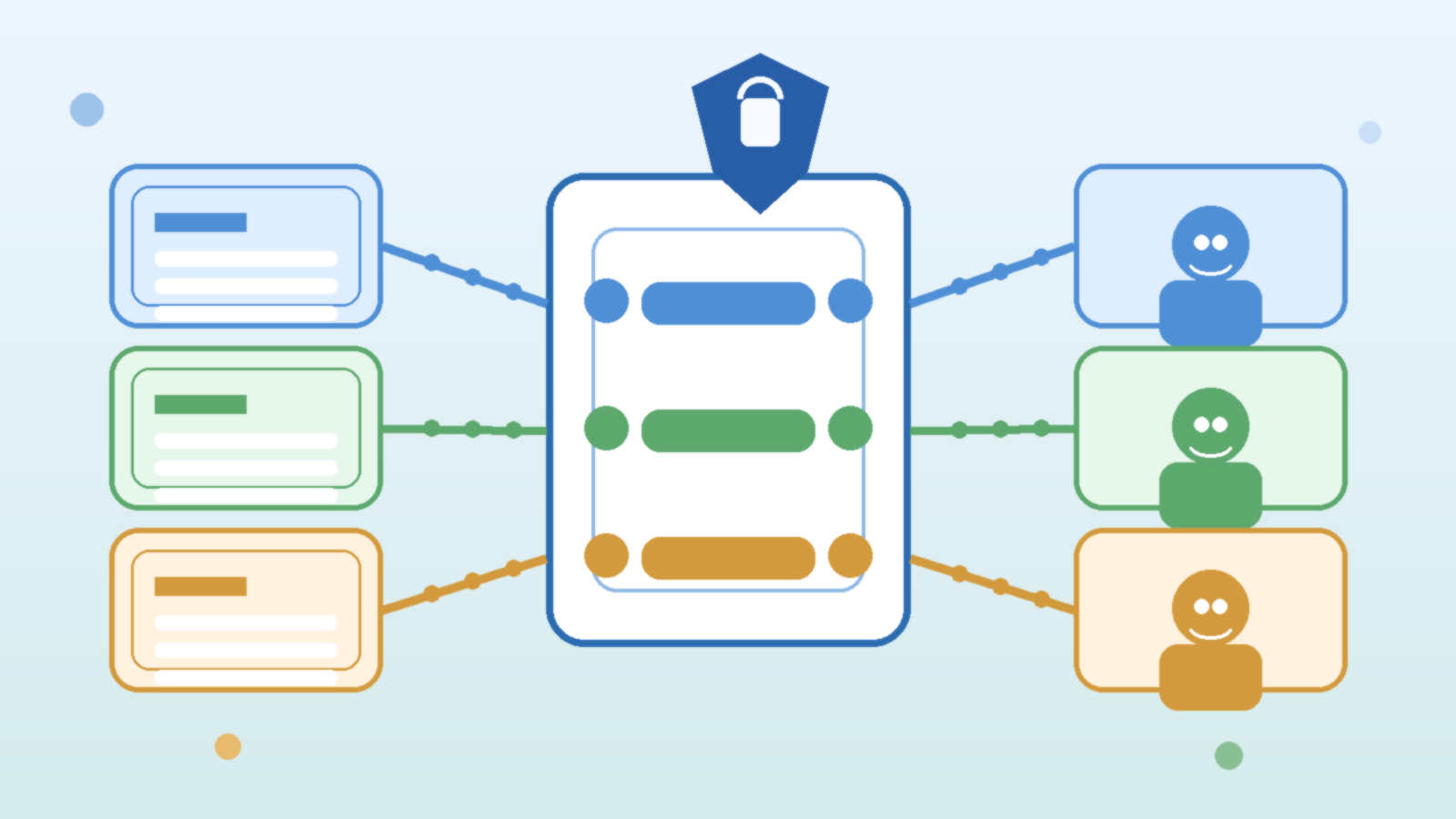

A Gateway Creates a Real Control Plane for Shared AI

Organizations usually mature once they realize they are not managing one chatbot. They are managing a growing set of internal applications, agents, copilots, evaluation jobs, and automation flows that all want model access. At that point, direct point-to-point access becomes difficult to defend. An AI gateway creates a proper control plane where policies can be applied consistently across workloads instead of being reimplemented poorly inside each app.

This is where platform teams gain leverage. They can define which models are approved for which environments, which identities can reach which routes, which prompts or payload patterns should trigger inspection, and how quotas are carved up. That is far more valuable than simply exposing a common endpoint. A shared endpoint without differentiated policy is only centralized chaos.

Route by Risk, Not Just by Vendor

Many gateway implementations start with vendor routing. Requests for one model family go here, requests for another family go there. That is a reasonable start, but it is not enough. Mature gateways route by risk profile as well. A low-risk internal knowledge assistant should not be handled the same way as a workflow that can trigger downstream actions, access sensitive enterprise data, or generate content that enters customer-facing channels.

Risk-based routing makes policy practical. It allows the platform to require stronger controls for higher-impact workloads: stricter authentication, tighter rate limits, additional inspection, approval gates for tool invocation, or more detailed audit logging. It also keeps lower-risk workloads from being slowed down by controls they do not need. One-size-fits-all policy usually ends in two bad outcomes at once: weak protection where it matters and frustrating friction where it does not.

Separate Identity, Cost, and Content Controls

A useful mental model is to treat AI gateway policy as three related layers. The first layer is identity and entitlement: who or what is allowed to call a route. The second is cost and performance governance: how often it can call, which models it can use, and what budget or quota applies. The third is content and behavior governance: what kind of input or output requires blocking, filtering, review, or extra monitoring.

These layers should be designed separately even if they are enforced together. Teams get into trouble when they solve one layer and assume the rest is handled. Strong authentication without cost policy can still produce runaway spend. Tight quotas without content controls can still create data handling problems. Output filtering without identity separation can still let the wrong application reach the wrong backend. The point of the gateway is not to host one magic control. It is to become the place where multiple controls meet in a coherent way.

Logging Needs to Explain Decisions, Not Just Traffic

One underappreciated benefit of good gateway policy is auditability. It is not enough to know that a request happened. In shared AI environments, operators also need to understand why a request was allowed, denied, throttled, or routed differently. If an executive asks why a business unit hit a slower model during a launch week, the answer should be visible in policy and logs, not reconstructed from guesses and private chat threads.

That means logs should capture policy decisions in human-usable terms. Which route matched? Which identity or application policy applied? Was a safety filter engaged? Was the request downgraded, retried, or blocked? Decision-aware logging is what turns an AI gateway from plumbing into operational governance.

Do Not Let Every Application Bring Its Own Gateway Logic

Without a strong shared gateway, development teams naturally recreate policy in application code. That feels fast at first. It is also how organizations end up with five different throttling strategies, three prompt filtering implementations, inconsistent authentication, and no clean way to update policy when the risk picture changes. App-level logic still has a place, but it should sit behind platform-level rules, not replace them.

The practical standard is simple: application teams should own business behavior, while the platform owns cross-cutting enforcement. If every team can bypass that pattern, the platform has no real policy surface. It only has suggestions.

Gateway Policy Should Change Faster Than Infrastructure

Another reason the policy layer matters so much is operational speed. Model options, regulatory expectations, and internal risk appetite all change faster than core infrastructure. A gateway gives teams a place to adapt controls without redesigning every application. New model restrictions, revised egress rules, stricter prompt handling, or tighter quotas can be rolled out at the gateway far faster than waiting for every product team to refactor.

That flexibility becomes critical during incidents and during growth. When a model starts behaving unpredictably, when a connector raises data exposure concerns, or when costs spike, the fastest safe response usually happens at the gateway. A platform without that layer is forced into slower, messier mitigation.

Final Takeaway

Once multiple teams share an AI platform, model choice is only part of the story. The gateway policy layer determines who can access models, which routes are appropriate for which workloads, how costs are constrained, how risk is separated, and whether operators can explain what happened afterward. That makes gateway policy more than an implementation detail. It becomes the operating discipline that keeps shared AI from turning into shared confusion.

If an organization wants enterprise AI to scale cleanly, it should stop treating the gateway as a simple pass-through and start treating it as a real policy control plane. The model still matters. The policy layer is what makes the model usable at scale.