Enterprise AI systems are getting better at remembering. They can retain instructions across sessions, pull prior answers into new prompts, and ground outputs in internal documents, which feels close enough to memory for most users. That convenience is powerful, but it also creates a security problem that many teams underestimate. If an AI system can remember more than it should, or remember the wrong things for too long, it can quietly become a data leak with a helpful tone.

The issue is not only whether an AI model was trained on sensitive data. In most production environments, the bigger day-to-day risk sits in the memory layer around the model. That includes conversation history, retrieval caches, user profiles, connector outputs, summaries, embeddings, and application-side stores that help the system feel consistent over time. If those layers are poorly scoped, one user can inherit another user’s context, stale secrets can resurface after they should be gone, and internal records can drift into places they were never meant to appear.

AI memory is broader than chat history

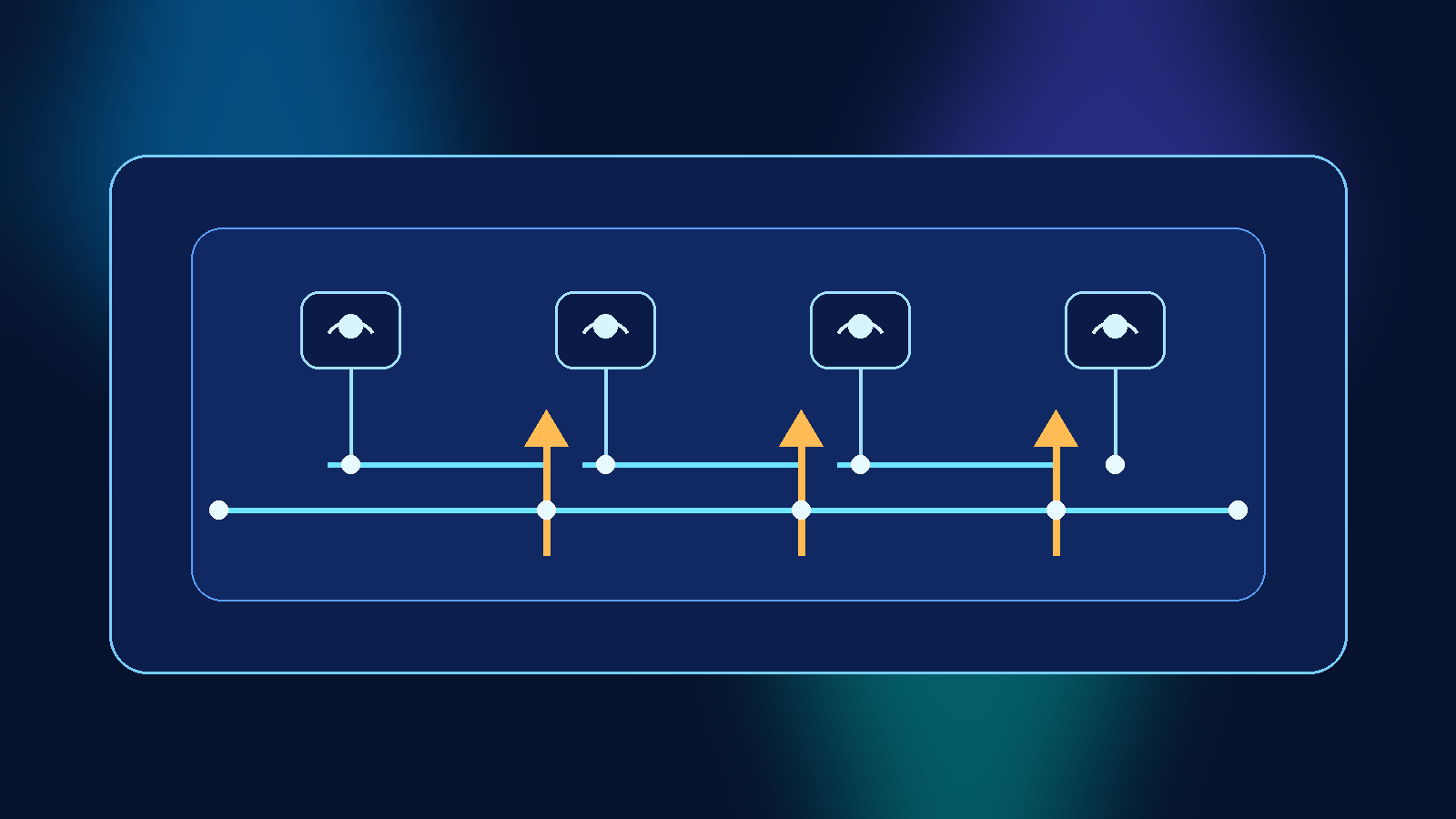

A lot of teams still talk about AI memory as if it were just a transcript database. In practice, memory is a stack of mechanisms. A chatbot may store recent exchanges for continuity, generate compact summaries for longer sessions, push selected facts into a profile store, and rely on retrieval pipelines that bring relevant documents back into the prompt at answer time. Each one of those layers can preserve sensitive information in a slightly different form.

That matters because controls that work for one layer may fail for another. Deleting a visible chat thread does not always remove a derived summary. Revoking a connector does not necessarily clear cached retrieval results. Redacting a source document does not instantly invalidate the embedding or index built from it. If security reviews only look at the user-facing transcript, they miss the places where durable exposure is more likely to hide.

Scope memory by identity, purpose, and time

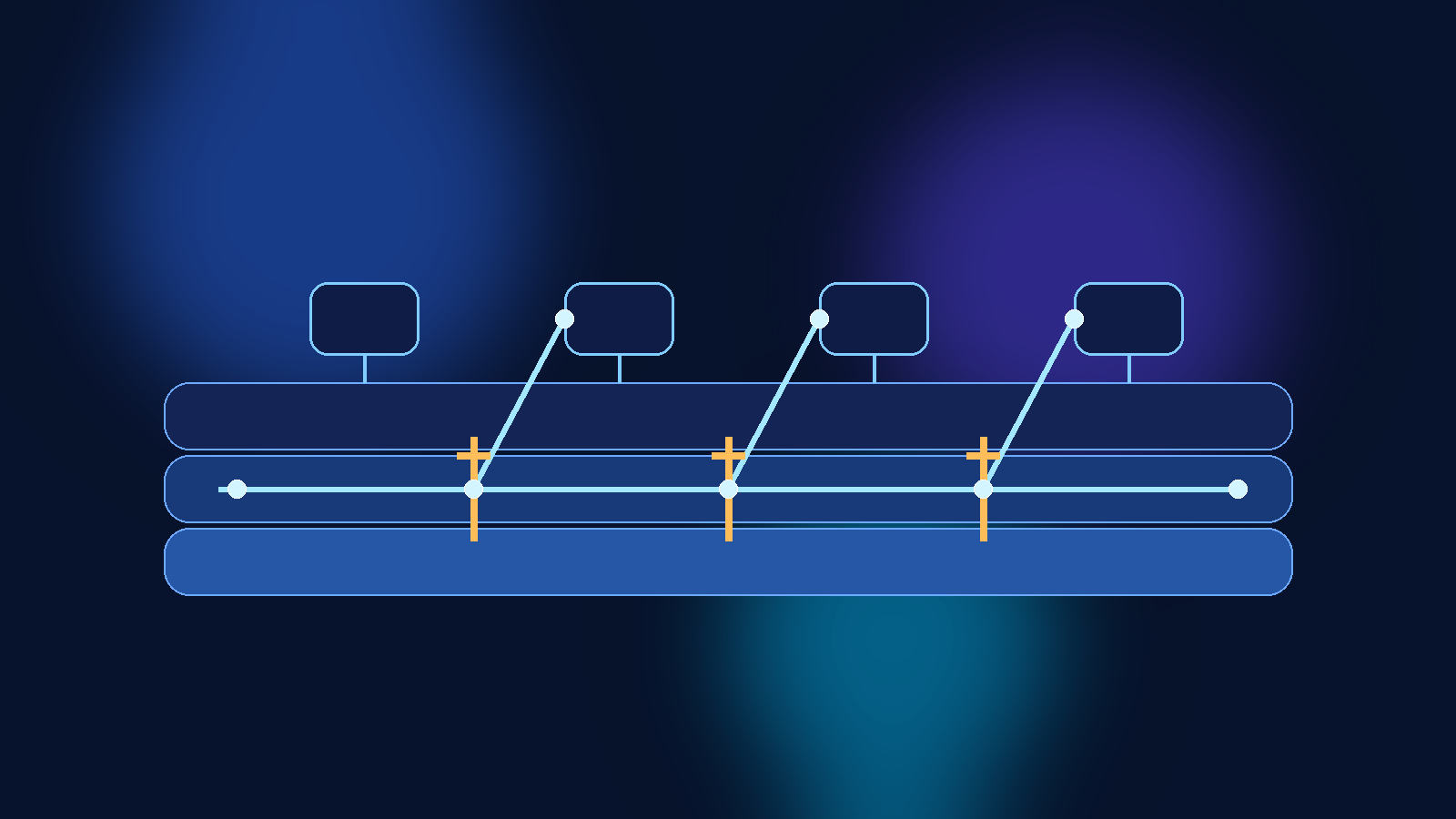

The strongest control is not a clever filter. It is narrow scope. Memory should be partitioned by who the user is, what workflow they are performing, and how long the data is actually useful. If a support agent, a finance analyst, and a developer all use the same internal AI platform, they should not be drawing from one vague pool of retained context simply because the platform makes that technically convenient.

Purpose matters as much as identity. A user working on contract review should not automatically carry that memory into a sales forecasting workflow, even if the same human triggered both sessions. Time matters too. Some context is helpful for minutes, some for days, and some should not survive a single answer. The default should be expiration, not indefinite retention disguised as personalization.

- Separate memory stores by user, workspace, or tenant boundary.

- Use task-level isolation so one workflow does not quietly bleed into another.

- Set retention windows that match business need instead of leaving durable storage turned on by default.
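As a rough illustration of the three scoping dimensions above, here is a minimal in-memory sketch in Python. The `ScopedMemory` class, the `(tenant, user, purpose)` key, and the 15-minute default are all assumptions made for the example, not a reference to any particular platform. A real system would back this with a durable store and enforce the same boundaries there.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical scope key: (tenant_id, user_id, purpose).
ScopeKey = Tuple[str, str, str]


@dataclass
class MemoryItem:
    text: str
    expires_at: float  # absolute time after which the item is never returned


@dataclass
class ScopedMemory:
    # Expiration is the default; 900 seconds is an arbitrary illustrative value.
    default_ttl_seconds: float = 900.0
    # The clock is injectable so expiry can be tested without sleeping.
    clock: Callable[[], float] = time.time
    _items: Dict[ScopeKey, List[MemoryItem]] = field(default_factory=dict)

    def remember(self, tenant: str, user: str, purpose: str, text: str,
                 ttl_seconds: Optional[float] = None) -> None:
        ttl = self.default_ttl_seconds if ttl_seconds is None else ttl_seconds
        item = MemoryItem(text=text, expires_at=self.clock() + ttl)
        self._items.setdefault((tenant, user, purpose), []).append(item)

    def recall(self, tenant: str, user: str, purpose: str) -> List[str]:
        key = (tenant, user, purpose)
        now = self.clock()
        live = [i for i in self._items.get(key, []) if i.expires_at > now]
        # Drop expired items on read so they cannot resurface later.
        if live:
            self._items[key] = live
        else:
            self._items.pop(key, None)
        return [i.text for i in live]
```

Because the purpose is part of the key, a note stored during contract review is simply not visible to a sales forecasting session for the same user, and nothing survives past its retention window unless a longer one was chosen explicitly.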

Treat retrieval indexes like data stores, not helper features

Retrieval is often sold as a safer pattern than training because teams can update documents without retraining the model. That is true, but it can also create a false sense of simplicity. Retrieval indexes still represent structured access to internal knowledge, and they deserve the same governance mindset as any other data system. If the wrong data enters the index, the AI can expose it with remarkable confidence.

Strong teams control what gets indexed, who can query it, and how freshness is enforced after source changes. They also decide whether certain classes of content should be summarized rather than retrieved verbatim. For highly sensitive repositories, the answer may be that the system can answer metadata questions about document existence or policy ownership without ever returning the raw content itself.

That design choice is less flashy than a giant all-knowing enterprise search layer, but it is usually the more defensible one. A retrieval pipeline should be precise enough to help users work, not broad enough to feel magical at the expense of control.
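One way to picture those controls is a filter that runs on retrieval results before anything reaches the prompt. The sketch below is hypothetical: `IndexedChunk`, its fields, and `filter_results` are names invented for illustration, and a production system would usually push these checks into the index query itself rather than filtering afterward.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, List


@dataclass(frozen=True)
class IndexedChunk:
    doc_id: str
    owner: str
    text: str
    allowed_groups: FrozenSet[str]  # who may query this chunk
    source_version: int             # version of the source when it was indexed
    metadata_only: bool = False     # highly sensitive: never return raw text


def filter_results(chunks: Iterable[IndexedChunk],
                   user_groups: FrozenSet[str],
                   current_versions: Dict[str, int]) -> List[dict]:
    """Apply access, freshness, and verbatim-content rules to raw hits."""
    visible = []
    for chunk in chunks:
        # Access: the caller must share at least one group with the chunk.
        if not (chunk.allowed_groups & user_groups):
            continue
        # Freshness: skip chunks built from a deleted or superseded source.
        if current_versions.get(chunk.doc_id) != chunk.source_version:
            continue
        entry = {"doc_id": chunk.doc_id, "owner": chunk.owner}
        # Sensitive repositories answer "does it exist, who owns it" only.
        if not chunk.metadata_only:
            entry["text"] = chunk.text
        visible.append(entry)
    return visible
```

The stale-version check is deliberately strict in this sketch: a chunk whose source has changed is dropped rather than served, on the assumption that a missing answer is cheaper than a leaked one.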

Redaction and deletion have to reach derived memory too

One of the easiest mistakes to make is assuming that deleting the original source solves the whole problem. In AI systems, derived artifacts often outlive the thing they came from. A secret copied into a chat can show up later in a summary. A sensitive document can leave traces in chunk caches, embeddings, vector indexes, or evaluation datasets. A user profile can preserve a fact that was only meant to be temporary.

That is why deletion workflows need a map of downstream memory, not just upstream storage. If the legal, security, or governance team asks for removal, the platform should be able to trace where the data may persist and clear or rebuild those derived layers in a deliberate way. Without that discipline, teams create the appearance of deletion while the AI keeps enough residue to surface the same information later.
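A map of downstream memory can be as simple as a record of which artifact was derived from which. The following sketch assumes a hypothetical `LineageMap` and a flat dictionary standing in for every store; the identifiers such as `chat:42` are made up. It shows the shape of a cascading delete, not a complete workflow, which would also need to rebuild indexes and handle artifacts with more than one source.

```python
from collections import defaultdict
from typing import Dict, List, Set


class LineageMap:
    """Records which derived artifacts came from which source artifact."""

    def __init__(self) -> None:
        self._children: Dict[str, Set[str]] = defaultdict(set)

    def record(self, source_id: str, derived_id: str) -> None:
        self._children[source_id].add(derived_id)

    def downstream(self, source_id: str) -> Set[str]:
        """Return every artifact transitively derived from source_id."""
        seen: Set[str] = set()
        stack = [source_id]
        while stack:
            node = stack.pop()
            for child in self._children.get(node, ()):
                if child not in seen:
                    seen.add(child)
                    stack.append(child)
        return seen


def delete_with_derivatives(source_id: str, lineage: LineageMap,
                            store: Dict[str, str]) -> List[str]:
    """Delete the source and everything derived from it; return what was removed."""
    targets = {source_id} | lineage.downstream(source_id)
    removed = []
    for artifact_id in sorted(targets):
        if artifact_id in store:
            del store[artifact_id]
            removed.append(artifact_id)
    return removed
```

Returning the list of removed identifiers gives the legal or governance requester something concrete to audit, instead of a bare confirmation that the original record is gone.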

Logging should explain why the AI knew something

When an AI answer exposes something surprising, the first question is usually simple: how did it know that? A mature platform should be able to answer with more than a shrug. Good observability ties outputs back to the memory and retrieval path that influenced them. That means recording which document set was queried, which profile or summary store was used, what policy filters were applied, and whether any redaction or ranking step changed the result.

Those logs are not just for post-incident review. They are also what help teams tune the system before an incident happens. If a supposedly narrow assistant routinely reaches into broad knowledge collections, or if short-term memory is being retained far longer than intended, the logs should make that drift visible before users discover it the hard way.
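To make that concrete, a provenance record can be attached to every answer and emitted as a structured log line. The field names below (`document_sets`, `memory_stores`, and so on) are illustrative assumptions that mirror the items listed above; they do not come from any specific logging standard.

```python
import json
from dataclasses import asdict, dataclass
from typing import Iterable, List


@dataclass
class ContextProvenance:
    request_id: str
    document_sets: List[str]    # which collections were queried
    memory_stores: List[str]    # which profile or summary stores were read
    policy_filters: List[str]   # which policy filters were applied
    redactions_applied: int = 0
    reranked: bool = False


def to_log_line(record: ContextProvenance) -> str:
    """Serialize one record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def out_of_scope_sets(record: ContextProvenance,
                      allowed: Iterable[str]) -> List[str]:
    """Document sets the assistant touched but was not expected to use."""
    return sorted(set(record.document_sets) - set(allowed))
```

A check like `out_of_scope_sets` is one simple way to surface drift early: if a support assistant that should only read the help-center collection starts appearing in logs with broader collections, that shows up as a non-empty list long before a user notices a surprising answer.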

Make product decisions that reduce memory pressure

Not every problem needs a longer memory window. Sometimes the safer design is to ask the user to confirm context again, re-select a workspace, or explicitly pin the document set for a task. Product teams often view those moments as friction. In reality, they can be healthy boundaries that prevent the assistant from acting like it has broader standing knowledge than it really should.

The best enterprise AI products are not the ones that remember everything. They are the ones that remember the right things, for the right amount of time, in the right place. That balance feels less magical than unrestricted persistence, but it is far more trustworthy.
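The explicit-confirmation idea in this section can be sketched as a context builder that refuses to guess. Everything here is hypothetical, including `TaskContext`, `ContextNotConfirmed`, and the nested-dictionary library. The point is only that an empty pin set produces a prompt to the user rather than a silent fallback to standing memory.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


class ContextNotConfirmed(Exception):
    """Raised when a task has no explicitly pinned document set."""


@dataclass(frozen=True)
class TaskContext:
    workspace: str
    pinned_doc_ids: FrozenSet[str] = frozenset()


def documents_for_task(ctx: TaskContext,
                       library: Dict[str, Dict[str, str]]) -> List[str]:
    """Return only documents the user pinned, and only from their workspace."""
    if not ctx.pinned_doc_ids:
        # No fallback to retained context: the caller should ask the user.
        raise ContextNotConfirmed(
            f"No documents pinned for workspace {ctx.workspace!r}"
        )
    workspace_docs = library.get(ctx.workspace, {})
    # Pinned ids from another workspace are ignored rather than resolved.
    return [workspace_docs[d] for d in sorted(ctx.pinned_doc_ids)
            if d in workspace_docs]
```

The application layer would catch `ContextNotConfirmed` and ask the user to select a workspace or pin documents, which is the small moment of friction the section argues for.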

Trustworthy AI memory is intentionally forgetful

Memory makes AI systems more useful, but it also widens the surface where governance can fail quietly. Teams that treat memory as a first-class security concern are more likely to avoid that trap. They scope it tightly, expire it aggressively, govern retrieval like a real data system, and make deletion reach every derived layer that matters.

If an enterprise AI assistant feels impressive because it never seems to forget, that may be a warning sign rather than a product win. In most organizations, the better design is an assistant that remembers enough to help, forgets enough to protect people, and can always explain where its context came from.