Teams often add a second or third model provider for good reasons. They want better fallback options, lower cost for simpler tasks, regional flexibility, or the freedom to use specialized models for search, extraction, and generation. The problem is that many teams wire each new provider directly into applications, which creates a policy problem long before it creates a scaling problem.

Once every app team owns its own prompts, credentials, rate limits, logging behavior, and safety controls, the platform starts to drift. One application redacts sensitive fields before sending prompts upstream, another does not. One team enforces approved models, another quietly swaps in a new endpoint on Friday night. The architecture may still work, but governance becomes inconsistent and expensive.

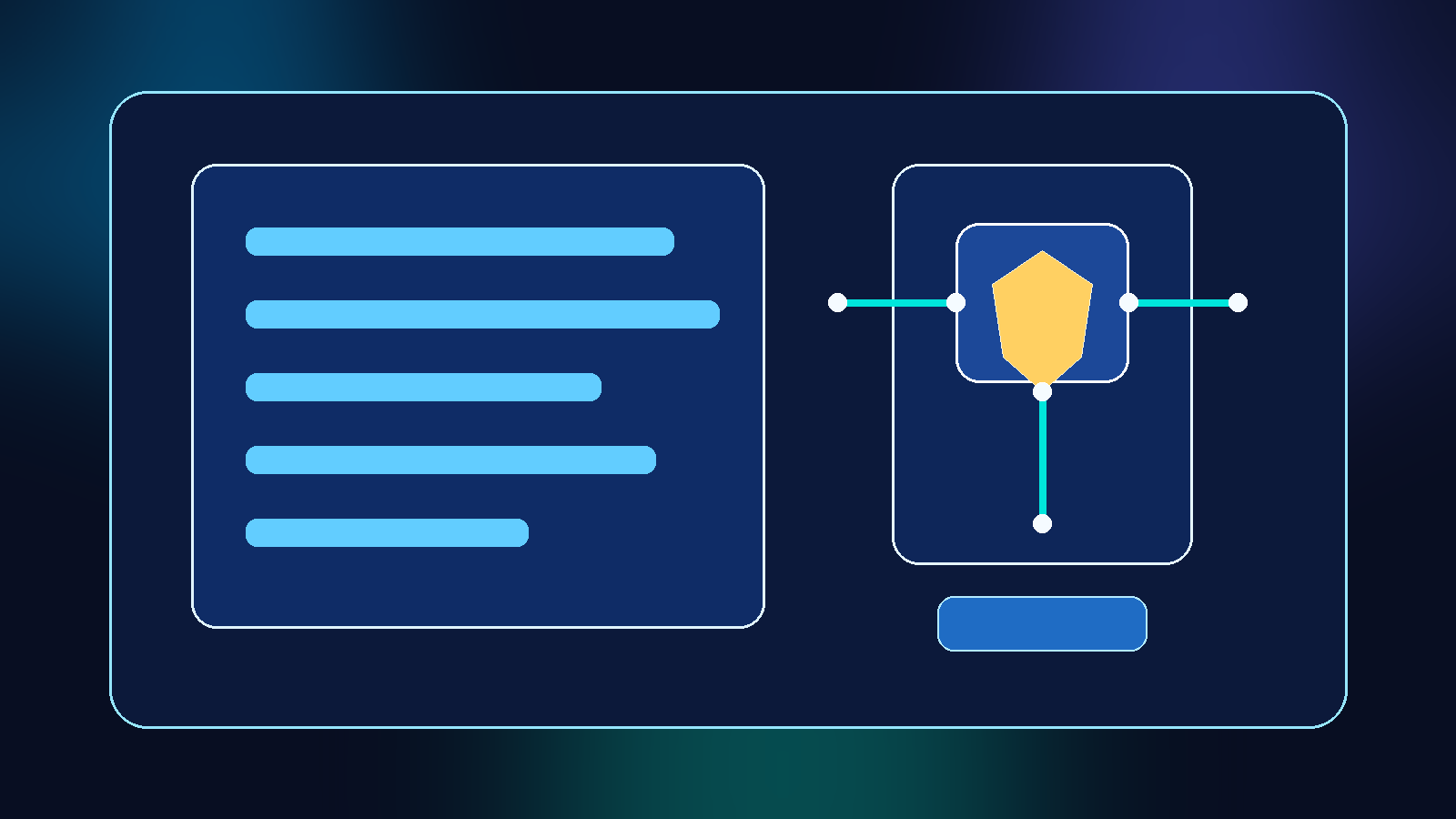

Azure API Management can help, but only if you treat it as a policy layer instead of just another proxy. Used well, APIM gives teams a place to standardize authentication, route selection, observability, and request controls across multiple AI backends. Used poorly, it becomes a fancy pass-through that adds latency without reducing risk.

Start With the Governance Problem, Not the Gateway Diagram

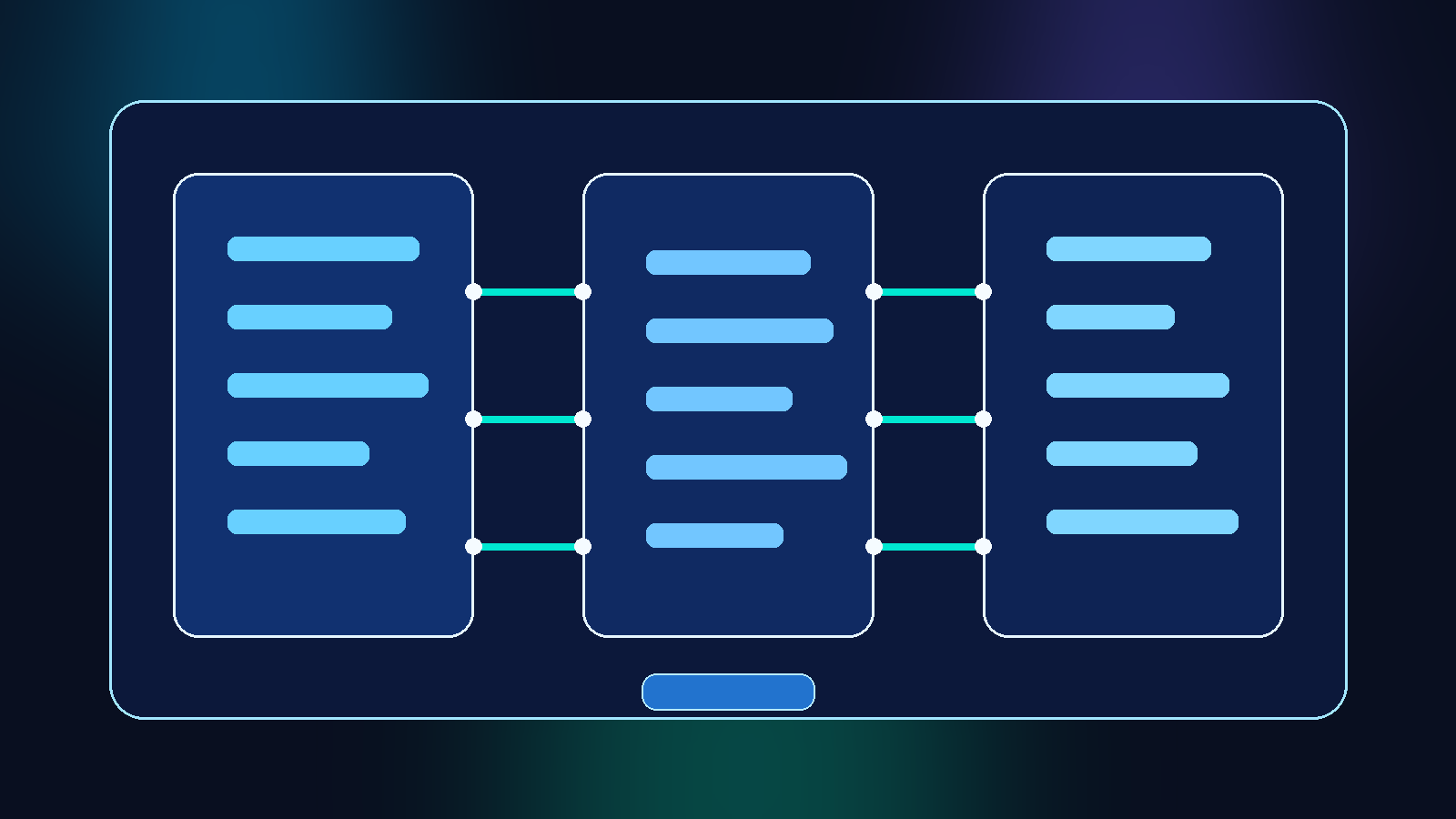

A lot of APIM conversations begin with the traffic flow. Requests enter through one hostname, policies run, and the gateway forwards traffic to Azure OpenAI or another backend. That picture is useful, but it is not the reason the pattern matters.

The real value is that a central policy layer gives platform teams a place to define what every AI call must satisfy before it leaves the organization boundary. That can include approved model catalogs, mandatory headers, abuse protection, prompt-size limits, region restrictions, and logging standards. If you skip that design work, APIM just hides complexity rather than controlling it.

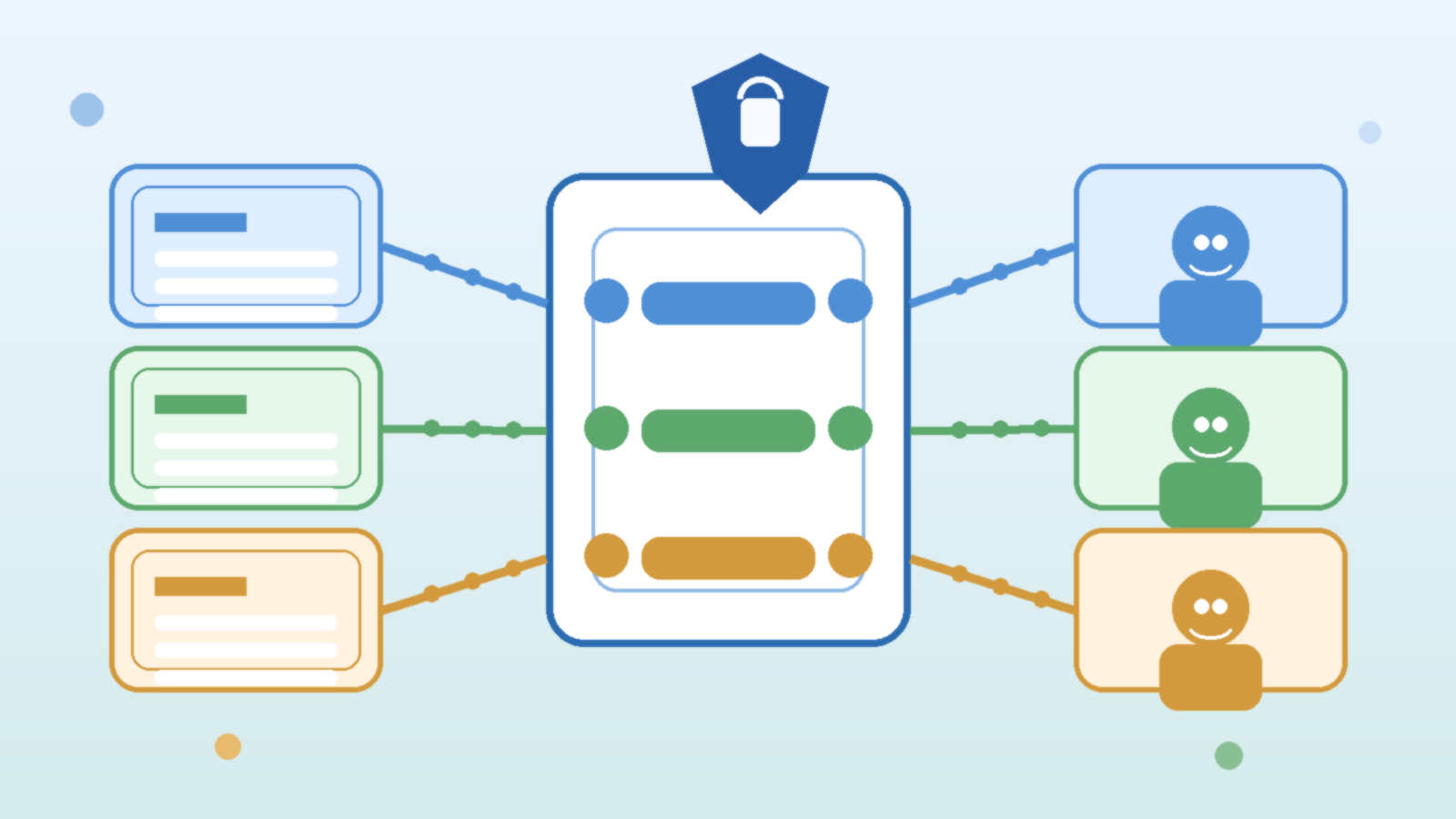

This is why strong teams define their non-negotiables first. They decide which backends are allowed, which data classes may be sent to which provider, what telemetry is required for every request, and how emergency provider failover should behave. Only after those rules are clear does the gateway become genuinely useful.

Separate Model Routing From Application Logic

One of the easiest ways to create long-term chaos is to let every application decide where each prompt goes. It feels flexible in the moment, but it hard-codes provider behavior into places that are difficult to audit and even harder to change.

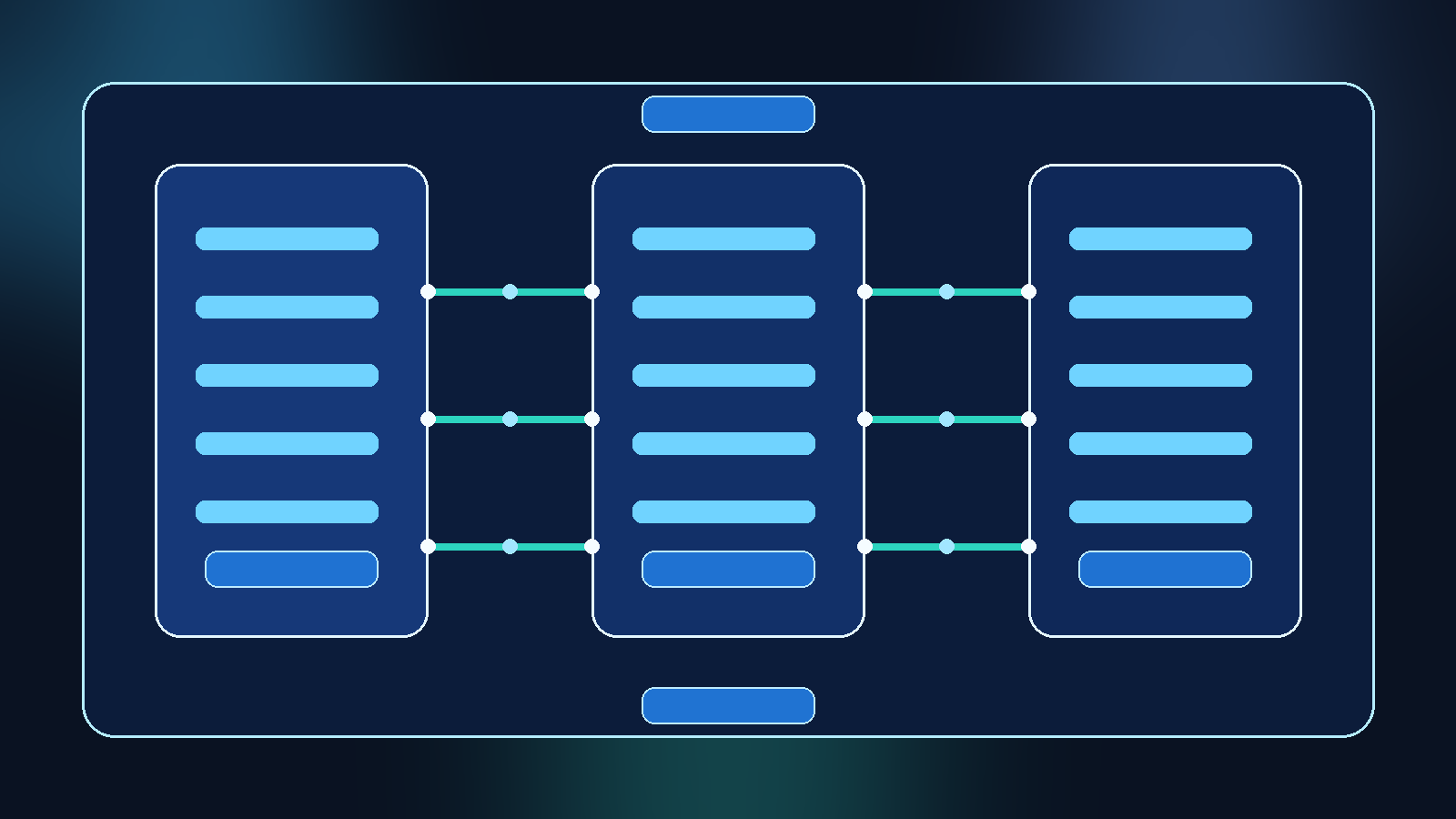

A better pattern is to let applications call a stable internal API contract while APIM handles routing decisions behind that contract. That does not mean the platform team hides all choice from developers. It means the routing choices are exposed through governed products, APIs, or policy-backed parameters rather than scattered custom code.

This separation matters when costs shift, providers degrade, or a new model becomes the preferred default for a class of workloads. If the routing logic lives in the policy layer, teams can change platform behavior once and apply it consistently. If the logic lives in twenty application repositories, every improvement turns into a migration project.

Use Policy to Enforce Minimum Safety Controls

APIM becomes valuable fast when it consistently enforces the boring controls that otherwise get skipped. For example, the gateway can require managed identity or approved subscription keys, reject oversized payloads, inject correlation IDs, and block calls to deprecated model deployments.

It can also help standardize pre-processing and post-processing rules. Some teams use policy to strip known secrets from headers, route only approved workloads to external providers, or ensure moderation and content-filter metadata are captured with each transaction. The exact implementation will vary, but the principle is simple: safety controls should not depend on whether an individual developer remembered to copy a code sample correctly.

That same discipline applies to egress boundaries. If a workload is only approved for Azure OpenAI in a specific geography, the policy layer should make the compliant path easy and the non-compliant path hard or impossible. Governance works better when it is built into the platform shape, not left as a wiki page suggestion.

Standardize Observability Before You Need an Incident Review

Multi-model environments fail in more ways than single-provider stacks. A request might succeed with the wrong latency profile, route to the wrong backend, exceed token expectations, or return content that technically looks valid but violates an internal policy. If observability is inconsistent, incident reviews become guesswork.

APIM gives teams a shared place to capture request metadata, route decisions, consumer identity, policy outcomes, and response timing in a normalized way. That makes it much easier to answer practical questions later. Which apps were using a deprecated deployment? Which provider saw the spike in failed requests? Which team exceeded the expected token budget after a prompt template change?

This data is also what turns governance from theory into management. Leaders do not need perfect dashboards on day one, but they do need a reliable way to see usage patterns, policy exceptions, and provider drift. If the gateway only forwards traffic and none of that context is retained, the control plane is missing its most useful control.

Do Not Let APIM Become a Backdoor Around Provider Governance

A common mistake is to declare victory once all traffic passes through APIM, even though the gateway still allows nearly any backend, key, or route the caller requests. In that setup, APIM may centralize access, but it does not centralize control.

The fix is to govern the products and policies as carefully as the backends themselves. Limit who can publish or change APIs, review policy changes like code, and keep provider onboarding behind an approval path. A multi-model platform should not let someone create a new external AI route with less scrutiny than a normal production integration.

This matters because gateways attract convenience exceptions. Someone wants a temporary test route, a quick bypass for a partner demo, or direct pass-through for a new SDK feature. Those requests can be reasonable, but they should be explicit exceptions with an owner and an expiration point. Otherwise the policy layer slowly turns into a collection of unofficial escape hatches.

Build for Graceful Provider Change, Not Constant Provider Switching

Teams sometimes hear “multi-model” and assume every request should dynamically choose the cheapest or fastest model in real time. That can work for some workloads, but it is usually not the first maturity milestone worth chasing.

A more practical goal is graceful provider change. The platform should make it possible to move a governed workload from one approved backend to another without rewriting every client, relearning every monitoring path, or losing auditability. That is different from building an always-on model roulette wheel.

APIM supports that calmer approach well. You can define stable entry points, approved routing policies, and controlled fallback behaviors while keeping enough abstraction to change providers when business or risk conditions change. The result is a platform that remains adaptable without becoming unpredictable.

Final Takeaway

Azure API Management can be an excellent policy layer for multi-model AI, but only if it carries real policy responsibility. The win is not that every AI call now passes through a prettier URL. The win is that identity, routing, observability, and safety controls stop fragmenting across application teams.

If you are adding more than one AI backend, do not ask only how traffic should flow. Ask where governance should live. For many teams, APIM is most valuable when it becomes the answer to that second question.