Why Observability Is Different for AI Agents

Traditional application monitoring asks a fairly narrow set of questions: Did the HTTP call succeed? How long did it take? What was the error code? For AI agents, those questions are necessary but nowhere near sufficient. An agent might complete every API call successfully, return a 200 OK, and still produce outputs that are subtly wrong, wildly expensive, or impossible to debug later.

The core challenge is that AI agents are non-deterministic. The same input can produce a different output on a different day, with a different model version, at a different temperature, or simply because the provider updated the underlying model. Reproducing a failure is genuinely hard. Explaining why a particular response happened (which tools were called, in what order, with what inputs, and which model produced which part of the reasoning) requires infrastructure that many teams do not ship alongside their agents.

This post covers the practical observability patterns that matter most when you move AI agents from prototype to production: what to instrument, how OpenTelemetry fits in, what metrics to track, and what questions you should be able to answer in under a minute when something goes wrong.

Start with Distributed Tracing, Not Just Logs

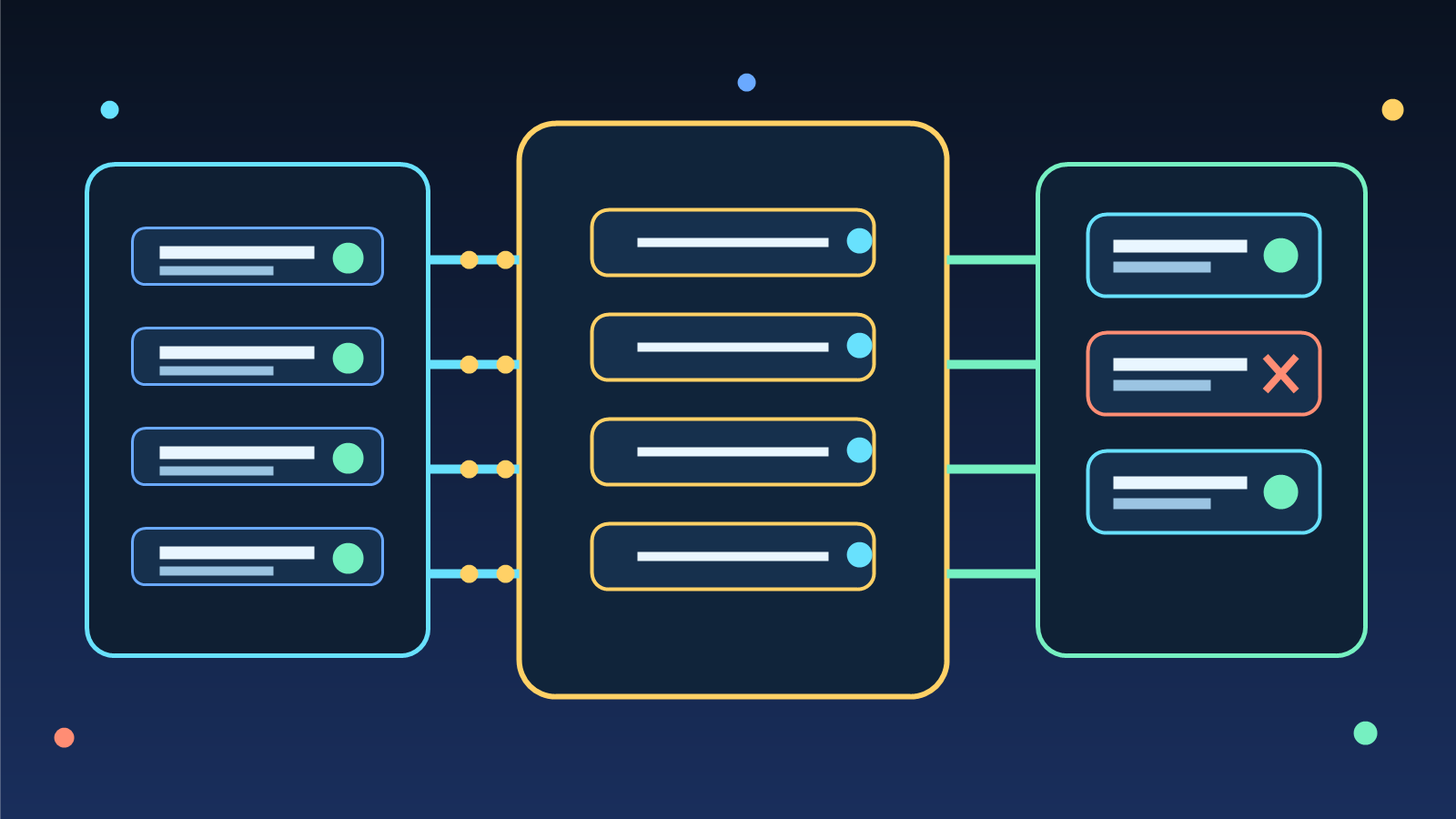

Logs are useful, but they fall apart for multi-step agent workflows. When an agent orchestrates three tool calls, makes two LLM requests, and then synthesizes a final answer, a flat log file shows what happened in sequence but not which step triggered which, and correlating latency across steps becomes tedious. Distributed tracing solves this by representing each logical step as a span with a parent-child relationship to the step that triggered it.

OpenTelemetry (OTel) has become the de facto standard for this. Its GenAI semantic conventions define consistent attribute names for LLM calls, such as gen_ai.system, gen_ai.request.model, gen_ai.usage.input_tokens, and gen_ai.usage.output_tokens. The conventions are still marked experimental rather than stable, so some names may change between releases, but adopting them means your traces stay interoperable across observability backends, whether you ship to Grafana, Honeycomb, Datadog, or a self-hosted collector.

Each LLM call in your agent should be wrapped in a span. Each tool invocation should be a child span of the agent turn that triggered it. Retries should be separate spans, not silently swallowed events. When your provider rate-limits a request and your SDK retries automatically, that retry should be visible in your trace (for example, by instrumenting the underlying HTTP client, or by disabling the SDK's built-in retries and retrying in your own instrumented code), because silent retries are a common and hard-to-spot cause of mysterious cost spikes.
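The span structure described above can be sketched without tying the example to a particular SDK version. In the stdlib-only Python below, `ToyTracer`, `call_llm_with_retries`, `fake_send`, and the `retry.attempt` attribute are hypothetical illustrations, not OpenTelemetry APIs; in a real system you would use an OTel tracer (for example `tracer.start_as_current_span(...)` in the Python SDK) and let it handle IDs, timing, and export. Span and attribute names loosely follow the GenAI conventions.

```python
import itertools
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SpanRecord:
    """A finished span: enough structure to show parent-child nesting."""
    span_id: int
    parent_id: Optional[int]
    name: str
    attributes: dict = field(default_factory=dict)
    duration_s: float = 0.0


class ToyTracer:
    """Hypothetical stand-in for an OTel tracer; records spans in memory."""

    def __init__(self):
        self.finished = []
        self._ids = itertools.count(1)
        self._stack = []  # open spans; the top one is the parent of any new span

    @contextmanager
    def span(self, name, **attributes):
        parent_id = self._stack[-1].span_id if self._stack else None
        record = SpanRecord(next(self._ids), parent_id, name, dict(attributes))
        self._stack.append(record)
        start = time.perf_counter()
        try:
            yield record
        except Exception as exc:
            # Record the failure on the span instead of swallowing it, then re-raise.
            record.attributes["error.type"] = type(exc).__name__
            raise
        finally:
            record.duration_s = time.perf_counter() - start
            self._stack.pop()
            self.finished.append(record)


class RateLimited(Exception):
    """Hypothetical error raised by a fake provider client."""


def call_llm_with_retries(tracer, model, send, max_attempts=3):
    """Each attempt gets its own span, so retries are visible in the trace."""
    for attempt in range(1, max_attempts + 1):
        try:
            with tracer.span(f"chat {model}", **{
                "gen_ai.operation.name": "chat",
                "gen_ai.request.model": model,
                "retry.attempt": attempt,  # hypothetical attribute name
            }) as span:
                result = send()
                span.attributes["gen_ai.usage.input_tokens"] = result["input_tokens"]
                span.attributes["gen_ai.usage.output_tokens"] = result["output_tokens"]
                return result
        except RateLimited:
            if attempt == max_attempts:
                raise


# Usage: a fake client that is rate-limited once, then succeeds.
_replies = iter([RateLimited(), {"input_tokens": 900, "output_tokens": 150}])


def fake_send():
    reply = next(_replies)
    if isinstance(reply, Exception):
        raise reply
    return reply


tracer = ToyTracer()
with tracer.span("agent_turn", agent_type="support"):
    call_llm_with_retries(tracer, "example-model", fake_send)
    with tracer.span("execute_tool search_docs", **{"gen_ai.tool.name": "search_docs"}):
        pass  # the real tool call would run here
```

Both LLM attempts and the tool call end up as children of the `agent_turn` root span, and the rate-limited attempt carries its own `error.type`.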

The Metrics That Actually Matter in Production

Not all metrics are equally useful for AI workloads. After instrumenting several agent systems, the following metrics tend to surface the most actionable signal.

Token Throughput and Cost Per Turn

Track input and output tokens per agent turn, not just per raw LLM call. An agent turn may involve multiple LLM calls — planning, tool selection, synthesis — and the combined token count is what translates to your monthly bill. Aggregate this by agent type, user segment, or feature area so you can identify which workflows are driving cost and make targeted optimizations rather than blunt model downgrades.
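A minimal sketch of per-turn cost accounting, assuming each LLM call has already been tagged with the ID of its agent turn (for example, read from the root span). The model names, prices, and the `LlmCall` record are hypothetical; the point is that cost is summed across every call in a turn and then rolled up by agent type and date.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; use your provider's real price sheet.
PRICES_PER_MTOK = {
    "model-large": {"input": 3.00, "output": 15.00},
    "model-small": {"input": 0.25, "output": 1.25},
}


@dataclass
class LlmCall:
    turn_id: str      # ID of the agent turn (root span) this call belongs to
    agent_type: str
    date: str         # ISO date, e.g. "2025-01-15"
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(call):
    """Input and output tokens are priced separately."""
    price = PRICES_PER_MTOK[call.model]
    return (call.input_tokens * price["input"]
            + call.output_tokens * price["output"]) / 1_000_000


def cost_per_turn(calls):
    """Sum every LLM call (planning, tool selection, synthesis) into its turn."""
    totals = defaultdict(float)
    for call in calls:
        totals[call.turn_id] += call_cost(call)
    return dict(totals)


def cost_by_agent_and_date(calls):
    """Dashboard view: total spend grouped by agent type and day."""
    totals = defaultdict(float)
    for call in calls:
        totals[(call.agent_type, call.date)] += call_cost(call)
    return dict(totals)


calls = [
    LlmCall("t1", "support", "2025-01-15", "model-small", 2_000, 100),  # planning
    LlmCall("t1", "support", "2025-01-15", "model-large", 4_000, 500),  # synthesis
    LlmCall("t2", "billing", "2025-01-15", "model-large", 1_000, 200),
]
per_turn = cost_per_turn(calls)
by_agent = cost_by_agent_and_date(calls)
```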

Time-to-First-Token and End-to-End Latency

Users experience latency as a whole, but debugging it requires breaking it apart. Capture time-to-first-token for streaming responses, tool execution time separately from LLM time, and the total wall-clock duration of the agent turn. When latency spikes, you want to know immediately whether the bottleneck is the model, the tool, or network overhead — not spend twenty minutes correlating timestamps across log lines.
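The two measurements can be sketched in a few lines of Python. `measure_stream` times the first chunk of any iterable of text chunks, and `latency_breakdown` splits a turn's duration using child span durations. Both functions and the fake stream are hypothetical; the breakdown assumes the child spans ran one after another, so parallel tool calls would need their intervals merged first.

```python
import time


def measure_stream(chunks, start=None):
    """Consume a streaming response; return (text, ttft_s, total_s).

    `start` should be a time.perf_counter() reading taken just before the
    request was sent; it defaults to now, which suits lazy generators.
    """
    if start is None:
        start = time.perf_counter()
    ttft = None
    parts = []
    for chunk in chunks:
        if ttft is None:
            ttft = time.perf_counter() - start  # time to first token
        parts.append(chunk)
    total = time.perf_counter() - start
    return "".join(parts), ttft, total


def latency_breakdown(turn_duration_s, child_spans):
    """Split a turn's wall-clock time into LLM time, tool time, and the rest.

    child_spans is a list of (kind, duration_s) pairs. Assumes the children
    ran sequentially; overlapping children would be double-counted here.
    """
    llm = sum(d for kind, d in child_spans if kind == "llm")
    tool = sum(d for kind, d in child_spans if kind == "tool")
    return {"llm_s": llm, "tool_s": tool, "other_s": turn_duration_s - llm - tool}


def fake_stream():
    time.sleep(0.02)  # simulated wait before the first token
    yield "Hello"
    time.sleep(0.01)
    yield ", world"


text, ttft, total = measure_stream(fake_stream())
breakdown = latency_breakdown(3.0, [("llm", 1.2), ("tool", 0.5), ("llm", 0.8)])
```

A large `other_s` value points at orchestration or network overhead rather than the model or the tools.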

Tool Call Success Rate and Retries

If your agent calls external APIs, databases, or search indexes, those calls will fail sometimes. Track success rate, error type, and retry count per tool. A sudden spike in tool failures often precedes a drop in response quality — the agent starts hallucinating answers because its information retrieval step silently degraded.
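A sketch of per-tool success, error-type, and retry counting, assuming tools are plain Python callables. `ToolMetrics`, `ToolStats`, and the two fake tools are hypothetical; in production the same counts would typically be OTel counters or span attributes. Success rate here is per logical call (did the agent eventually get a result), while error types are counted per attempt, so a tool that fails and then succeeds shows up in both numbers.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class ToolStats:
    calls: int = 0       # logical calls made by the agent
    successes: int = 0   # calls that eventually returned a result
    retries: int = 0     # extra attempts made after a failure
    errors: Counter = field(default_factory=Counter)  # error type -> attempts

    @property
    def success_rate(self):
        return self.successes / self.calls if self.calls else 0.0


class ToolMetrics:
    """Hypothetical in-process recorder keyed by tool name."""

    def __init__(self):
        self.by_tool = {}

    def call(self, name, fn, *args, max_attempts=3):
        stats = self.by_tool.setdefault(name, ToolStats())
        stats.calls += 1
        for attempt in range(1, max_attempts + 1):
            try:
                result = fn(*args)
            except Exception as exc:
                stats.errors[type(exc).__name__] += 1
                if attempt == max_attempts:
                    raise
                stats.retries += 1
            else:
                stats.successes += 1
                return result


_attempts = {"search": 0}


def flaky_search(query):
    """Fails on its first attempt, then succeeds."""
    _attempts["search"] += 1
    if _attempts["search"] == 1:
        raise TimeoutError("simulated timeout")
    return [f"result for {query}"]


def broken_db(query):
    raise ConnectionError("simulated outage")


metrics = ToolMetrics()
metrics.call("search", flaky_search, "refund policy")
try:
    metrics.call("db", broken_db, "SELECT 1")
except ConnectionError:
    pass  # the agent must handle this; the metrics have already recorded it
```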

Model Version Attribution

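Major LLM providers update models behind stable-looking names, so behavior can shift without any version change you explicitly requested, especially when you call an alias rather than a pinned snapshot. Always capture the full model identifier, including any version suffix or deployment label, in your span attributes. Where the provider reports which model actually served the request, record that too; the GenAI conventions use gen_ai.response.model for this, alongside gen_ai.request.model. When your eval scores drift or user satisfaction drops, you need to correlate that signal with the model version that was serving traffic at the time.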
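The sketch below records both the requested and the served model, then groups eval scores by the served model. It assumes the provider's response payload reports the served model under a `model` key, as many chat-completion APIs do; `model_attributes`, `mean_score_by_served_model`, and the model names and scores are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def model_attributes(requested_model, response):
    """Record the model you asked for and the model the provider says served it.

    Assumes the served model ID is under response["model"]; adjust for your provider.
    """
    attrs = {"gen_ai.request.model": requested_model}
    served = response.get("model")
    if served:
        attrs["gen_ai.response.model"] = served
    return attrs


def mean_score_by_served_model(records):
    """records: (span_attributes, eval_score) pairs.

    Groups by the served model, falling back to the requested model when
    the response did not report one.
    """
    buckets = defaultdict(list)
    for attrs, score in records:
        model = attrs.get("gen_ai.response.model", attrs["gen_ai.request.model"])
        buckets[model].append(score)
    return {model: mean(scores) for model, scores in buckets.items()}


# Hypothetical data: the same alias served by two different snapshots.
records = [
    (model_attributes("example-model", {"model": "example-model-2024-06-01"}), 0.9),
    (model_attributes("example-model", {"model": "example-model-2024-06-01"}), 0.8),
    (model_attributes("example-model", {"model": "example-model-2024-09-15"}), 0.6),
    (model_attributes("example-model", {"model": "example-model-2024-09-15"}), 0.7),
]
scores = mean_score_by_served_model(records)
```

Grouping by the requested alias alone would average the two snapshots together and hide the drop.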

Evaluation Signals: Beyond “Did It Return Something?”

Production observability for AI agents eventually needs to include output quality signals, not just infrastructure health. This is where most teams run into friction: evaluating LLM output at scale is genuinely hard, and full human review does not scale.

The practical approach is a layered evaluation strategy:

- Automated evals run on every response: response length checks, schema validation for structured outputs, keyword presence for expected content, and lightweight LLM-as-judge scoring. They catch obvious regressions without human review.

- Sampled human review or deeper LLM-as-judge evaluation covers a smaller percentage of traffic and flags edge cases.

- Periodic regression test suites run against golden datasets and fire alerts when the pass rate drops below a threshold.
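A sketch of the first two layers, assuming JSON-formatted responses. `automated_checks` is a cheap per-response check (the required-keys test stands in for a full JSON Schema validator), and `selected_for_deep_eval` picks a stable fraction of traces for sampled review by hashing the trace ID. All names are hypothetical.

```python
import hashlib
import json


def automated_checks(response_text, required_keys, expected_keywords):
    """Cheap checks that can run on every response; returns check name -> passed."""
    results = {}
    try:
        payload = json.loads(response_text)
        results["schema_valid"] = isinstance(payload, dict) and all(
            key in payload for key in required_keys)
    except json.JSONDecodeError:
        results["schema_valid"] = False
    lowered = response_text.lower()
    results["keywords_present"] = all(k.lower() in lowered for k in expected_keywords)
    return results


def selected_for_deep_eval(trace_id, rate):
    """Deterministically select roughly `rate` of traffic for deeper review.

    Hashing the trace ID (rather than calling random()) means a given trace
    is always in or out of the sample, whichever process evaluates it.
    """
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53  # uniform in [0, 1)
    return bucket < rate


checks = automated_checks('{"answer": "Refunds take 5 days", "sources": []}',
                          ["answer", "sources"], ["refund"])
```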

The key is to attach eval scores as structured attributes on your OTel spans, not as side-channel logs. This lets you correlate quality signals with infrastructure signals in the same query — for example, filtering to high-latency turns and checking whether output quality also degraded, or filtering to a specific model version and comparing average quality scores before and after a provider update.
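Once eval results live on span attributes, the correlation queries are just filters. In the sketch below, the `eval.` prefix, `attach_eval`, `mean_quality`, and the span data are hypothetical; a real backend would run the equivalent query in its own query language over exported spans.

```python
from statistics import mean


def attach_eval(span_attributes, eval_results):
    """Copy eval results onto the span under an "eval." prefix.

    The prefix is a naming choice for this sketch, not an OTel convention.
    """
    for name, value in eval_results.items():
        span_attributes[f"eval.{name}"] = value
    return span_attributes


def mean_quality(spans, predicate):
    """Average judge score over spans matching predicate; None if none scored."""
    scores = [s["attributes"]["eval.judge_score"] for s in spans
              if predicate(s) and "eval.judge_score" in s["attributes"]]
    return mean(scores) if scores else None


# Hypothetical exported agent-turn spans.
spans = [
    {"duration_s": 1.2, "attributes": attach_eval({}, {"judge_score": 0.9})},
    {"duration_s": 1.5, "attributes": attach_eval({}, {"judge_score": 0.8})},
    {"duration_s": 9.0, "attributes": attach_eval({}, {"judge_score": 0.4})},
    {"duration_s": 11.0, "attributes": attach_eval({}, {"judge_score": 0.5})},
]
slow = mean_quality(spans, lambda s: s["duration_s"] > 5.0)
fast = mean_quality(spans, lambda s: s["duration_s"] <= 5.0)
```

Because quality and latency sit on the same span, answering "are slow turns also worse?" needs no join against a separate eval log.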

Sampling Strategy: You Cannot Trace Everything

At meaningful production scale, tracing every span at full fidelity is expensive. A well-designed sampling strategy keeps costs manageable while preserving diagnostic coverage.

Head-based sampling, which decides at the start of a trace whether to record it, is simple but loses visibility into rare failures, because you do not yet know a trace is a failure when the decision is made. Tail-based sampling defers the decision until the trace is complete, so you can always record error traces and slow traces while sampling healthy, fast traces at a lower rate. The cost is buffering: every span of a trace must reach the same sampler and be held until the trace finishes. A common production setup is tail-based sampling configured to keep 100% of errors and slow outliers plus a fixed percentage of normal traffic.

For AI agents specifically, consider always recording traces where the agent used an unusually high token count or had more than a set number of tool calls — these are the sessions most likely to indicate prompt injection attempts, runaway loops, or unexpected behavior worth reviewing.
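In practice, tail-based sampling usually runs in the OpenTelemetry Collector (the contrib distribution ships a tail sampling processor with policies for status codes, latency, and probabilistic sampling). The Python below only illustrates the decision logic for both the general policies and the agent-specific ones; `CompletedTrace`, `keep_trace`, and every threshold are hypothetical and should be tuned from your own traffic. Hashing the trace ID keeps the baseline sample deterministic.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class CompletedTrace:
    trace_id: str
    has_error: bool
    duration_s: float
    total_tokens: int
    tool_calls: int


# Hypothetical thresholds; derive real ones from your own traffic.
SLOW_S = 10.0
MAX_TOKENS = 50_000
MAX_TOOL_CALLS = 8
BASELINE_RATE = 0.05


def _hash_ratio(trace_id):
    """Map a trace ID to a stable value in [0, 1)."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53


def keep_trace(trace):
    """Tail-based decision, made only after the whole trace has completed.

    Returns (keep, reason) so the reason can be recorded alongside the trace.
    """
    if trace.has_error:
        return True, "error"
    if trace.duration_s > SLOW_S:
        return True, "slow"
    if trace.total_tokens > MAX_TOKENS:
        return True, "high_tokens"        # possible runaway loop or injection
    if trace.tool_calls > MAX_TOOL_CALLS:
        return True, "many_tool_calls"
    return _hash_ratio(trace.trace_id) < BASELINE_RATE, "baseline"
```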

The One-Minute Diagnostic Test

A useful benchmark for whether your observability setup is actually working: can you answer the following questions in under sixty seconds using your dashboards and trace explorer, without digging through raw logs?

- Which agent type is generating the most cost today?

- What was the average end-to-end latency over the last hour, broken down by agent turn versus tool call?

- Which tool has the highest failure rate in the last 24 hours?

- What model version was serving traffic when last night’s error spike occurred?

- Which five individual traces from the last hour had the highest token counts?

If any of those require a Slack message to a teammate or a custom SQL query against raw logs, your instrumentation has gaps worth closing before your next incident.
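Each of these questions reduces to a simple aggregation over span attributes, which is why the attributes need to be on the spans in the first place. The sketch below expresses two of them in Python over hypothetical exported span records, to show which attributes each one depends on; `top_traces_by_tokens`, `tool_failure_rates`, and the span data are illustrative, and your dashboards and trace explorer should answer these questions without this kind of scripting.

```python
import heapq
from collections import defaultdict


def top_traces_by_tokens(spans, n=5):
    """Sum input and output tokens per trace; return the n largest (trace_id, tokens)."""
    totals = defaultdict(int)
    for span in spans:
        attrs = span["attributes"]
        totals[span["trace_id"]] += (attrs.get("gen_ai.usage.input_tokens", 0)
                                     + attrs.get("gen_ai.usage.output_tokens", 0))
    return heapq.nlargest(n, totals.items(), key=lambda item: item[1])


def tool_failure_rates(spans):
    """Failure rate per tool; a tool span counts as failed if it has error.type."""
    calls, failures = defaultdict(int), defaultdict(int)
    for span in spans:
        tool = span["attributes"].get("gen_ai.tool.name")
        if tool is None:
            continue  # not a tool span
        calls[tool] += 1
        if "error.type" in span["attributes"]:
            failures[tool] += 1
    return {tool: failures[tool] / calls[tool] for tool in calls}


spans = [
    {"trace_id": "t1", "attributes": {"gen_ai.usage.input_tokens": 1200,
                                      "gen_ai.usage.output_tokens": 300}},
    {"trace_id": "t1", "attributes": {"gen_ai.tool.name": "search"}},
    {"trace_id": "t2", "attributes": {"gen_ai.usage.input_tokens": 9000,
                                      "gen_ai.usage.output_tokens": 800}},
    {"trace_id": "t2", "attributes": {"gen_ai.tool.name": "db",
                                      "error.type": "TimeoutError"}},
    {"trace_id": "t3", "attributes": {"gen_ai.tool.name": "db"}},
]
rates = tool_failure_rates(spans)
worst_tool = max(rates, key=rates.get)
```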

Practical Starting Points

If you are starting from scratch or adding observability to an existing agent system, the following sequence tends to deliver the most value fastest.

- Instrument LLM calls with OTel GenAI attributes. This alone gives you token usage, latency, and model version in every trace. Popular frameworks such as LangChain, LlamaIndex, and Semantic Kernel can emit OTel traces, either natively or through instrumentation libraries such as OpenLLMetry and OpenInference, which handle most of this automatically.

- Add a per-agent-turn root span. Wrap the entire agent turn in a parent span so tool calls and LLM calls nest under it. This makes cost and latency aggregation per agent turn trivial.

- Ship to a backend that supports trace-based alerting. Grafana Tempo, Honeycomb, Datadog APM, and Azure Monitor Application Insights all support this. Pick one based on where the rest of your infrastructure lives.

- Build a cost dashboard. Multiply input and output token counts by the model's per-token prices (the two are usually priced differently), then group by agent type and date. This is the first thing leadership will ask for and the most actionable signal for optimization decisions.

- Add at least one automated quality check per response. Even a simple schema check or response length outlier alert is better than flying blind on quality.
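For the response length outlier alert, one simple option is the modified z-score, which uses the median and the median absolute deviation (MAD) instead of the mean and standard deviation, so a few extreme responses cannot distort the baseline. The 0.6745 constant and the 3.5 cutoff are a common rule of thumb rather than anything specific to LLM output; `length_outliers` and the sample lengths are hypothetical, and in practice you would run the check over a recent window of responses per agent type.

```python
from statistics import median


def length_outliers(lengths, threshold=3.5):
    """Return indices of responses whose length is an outlier by modified z-score."""
    med = median(lengths)
    mad = median(abs(x - med) for x in lengths)
    if mad == 0:
        # Most lengths are identical; treat any deviation as an outlier.
        return [i for i, x in enumerate(lengths) if x != med]
    return [i for i, x in enumerate(lengths)
            if 0.6745 * abs(x - med) / mad > threshold]


recent_lengths = [100, 110, 95, 105, 98, 102, 2000, 5]  # hypothetical character counts
flagged = length_outliers(recent_lengths)
```

Both the unusually long response and the near-empty one are flagged, which covers runaway generations as well as truncated or refused answers.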

Getting Ahead of the Curve

Observability is not a feature you add after launch — it is a prerequisite for operating AI agents responsibly at scale. The teams that build solid tracing, cost tracking, and evaluation pipelines early are the ones who can confidently iterate on their agents without fear that a small prompt change quietly degraded the user experience for two weeks before anyone noticed.

The tooling is now mature enough that there is no good reason to skip this work. The OpenTelemetry GenAI conventions are widely adopted even while still marked experimental, instrumentation libraries exist for the major frameworks, and the major observability vendors support LLM workloads. The gap between teams that have production AI observability and teams that do not is increasingly a gap in operational confidence, and that gap shows up clearly when something unexpected happens at 2 AM.