Most teams understand the security pitch for private endpoints. Keep AI traffic off the public internet, restrict access to approved networks, and reduce the chance that a rushed proof of concept becomes a broadly reachable production dependency. The problem is that many rollouts stop at the network diagram. The private endpoint gets turned on, developers lose access, automation breaks, and the platform team ends up making informal exceptions that quietly weaken the original control.

A better approach is to treat private connectivity as a platform design problem, not just a checkbox. Azure OpenAI can absolutely live behind private endpoints, but the deployment has to account for development paths, CI/CD flows, identity boundaries, DNS resolution, and the difference between experimentation and production. If those pieces are ignored, private networking becomes the kind of security control people work around instead of trust.

Start by separating who needs access from where access should originate

The first mistake is thinking about private endpoints only in terms of users. In practice, the more important question is where requests should come from. An interactive developer using a corporate laptop is one access pattern. A GitHub Actions runner, Azure DevOps agent, internal application, or managed service calling Azure OpenAI is a different one. If you treat them all the same, you either create unnecessary friction or open wider network paths than you intended.

Start by defining the approved sources of traffic. Production applications should come from tightly controlled subnets or managed hosting environments. Build agents should come from known runner locations or self-hosted infrastructure that can resolve the private endpoint correctly. Human testing should use a separate path, such as a virtual desktop, jump host, or developer sandbox network, instead of pushing every laptop onto the same production-style route.
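One way to make the approved sources concrete is to write them down as data and check addresses against them. The sketch below is only an illustration: the source names and address ranges are hypothetical placeholders, and a real platform would enforce this through its subnet and network security configuration, not through application code.

```python
import ipaddress
from typing import Optional

# Hypothetical address ranges; substitute the subnets your platform actually approves.
APPROVED_SOURCES = {
    "production-apps": ipaddress.ip_network("10.10.0.0/24"),
    "build-agents": ipaddress.ip_network("10.20.0.0/24"),
    "developer-sandbox": ipaddress.ip_network("10.30.0.0/24"),
}


def classify_source(ip: str) -> Optional[str]:
    """Return the name of the approved source that contains ip, or None."""
    address = ipaddress.ip_address(ip)
    for name, network in APPROVED_SOURCES.items():
        if address in network:
            return name
    return None
```

Keeping the list this explicit means every entry has a name someone can justify in a review, and anything that returns `None` is by definition an unapproved path.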

That source-based view helps keep the architecture honest. It also makes later reviews easier because you can explain why a specific network path exists instead of relying on vague statements about team convenience.

Private DNS is usually where the rollout succeeds or fails

The private endpoint itself is often the easy part. DNS is where real outages begin. Once an Azure OpenAI resource sits behind a private endpoint, its hostname needs to resolve to the endpoint's private IP from every approved network, typically through a private DNS zone such as privatelink.openai.azure.com. If your private DNS zone links are incomplete, if conditional forwarders are missing, or if hybrid name resolution is inconsistent, one team can reach the service while another gets confusing connection failures.

That is why platform teams should test name resolution before they announce the control as finished. Validate the lookup path from production subnets, from developer environments that are supposed to work, and from networks that are intentionally blocked. The goal is not merely to confirm that the good path works. The goal is to confirm that the wrong path fails in a predictable way.
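A resolution check like this can be scripted and run from each network in turn. The following is a minimal sketch using only the Python standard library. The function names are made up for this example, and the expected result for each network is something you would define yourself.

```python
import ipaddress
import socket
from typing import List


def resolve_addresses(hostname: str) -> List[str]:
    """Return the distinct IP addresses the local resolver gives for hostname."""
    try:
        results = socket.getaddrinfo(hostname, 443, proto=socket.IPPROTO_TCP)
    except socket.gaierror:
        # Resolution failed entirely; report it as an empty result.
        return []
    return sorted({result[4][0] for result in results})


def classify_resolution(addresses: List[str]) -> str:
    """Label a lookup result as 'unresolved', 'private', 'public', or 'mixed'."""
    if not addresses:
        return "unresolved"
    private_flags = {ipaddress.ip_address(a).is_private for a in addresses}
    if private_flags == {True}:
        return "private"
    if private_flags == {False}:
        return "public"
    return "mixed"


def check_network(hostname: str, expected: str) -> bool:
    """Compare what this network actually resolves with what it should resolve."""
    return classify_resolution(resolve_addresses(hostname)) == expected
```

From a production subnet you might call `check_network("my-resource.openai.azure.com", "private")`, where the hostname is a placeholder for your own resource. From a network that is meant to be blocked, the expected label depends on your design: the name may still resolve to a public address that the service then refuses, so the DNS check is worth pairing with an actual connection test.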

A clean DNS design also prevents a common policy mistake: leaving the public endpoint reachable because the private route was never fully reliable. Once teams start using that fallback, the security boundary becomes optional in practice.

Build a developer access path on purpose

Developers still need to test prompts, evaluate model behavior, and troubleshoot application calls. If the only answer is "use production networking," you end up normalizing too much access. If the answer is "file a ticket every time," people will search for alternate tools or use public AI services outside governance.

A better pattern is to create a deliberate developer path with narrower permissions and better observability. That may be a sandbox virtual network with access to nonproduction Azure OpenAI resources, a bastion-style remote workstation, or an internal portal that proxies requests to the service on behalf of authenticated users. The exact design can vary, but the principle is the same: developers need a path that is supported, documented, and easier than bypassing the control.

This is also where environment separation matters. Production private endpoints should not become the default testing target for every proof of concept. Give teams a safe place to experiment, then require stronger change control when something is promoted into a production network boundary.

Use identity and network controls together, not as substitutes

Private endpoints reduce exposure, but they do not replace identity. If a workload can reach the private IP and still uses overbroad credentials, you have only narrowed the route, not the authority. Workloads that call Azure OpenAI should still authenticate with managed identities, scoped secrets, or other clearly bounded authentication patterns, depending on the application design.

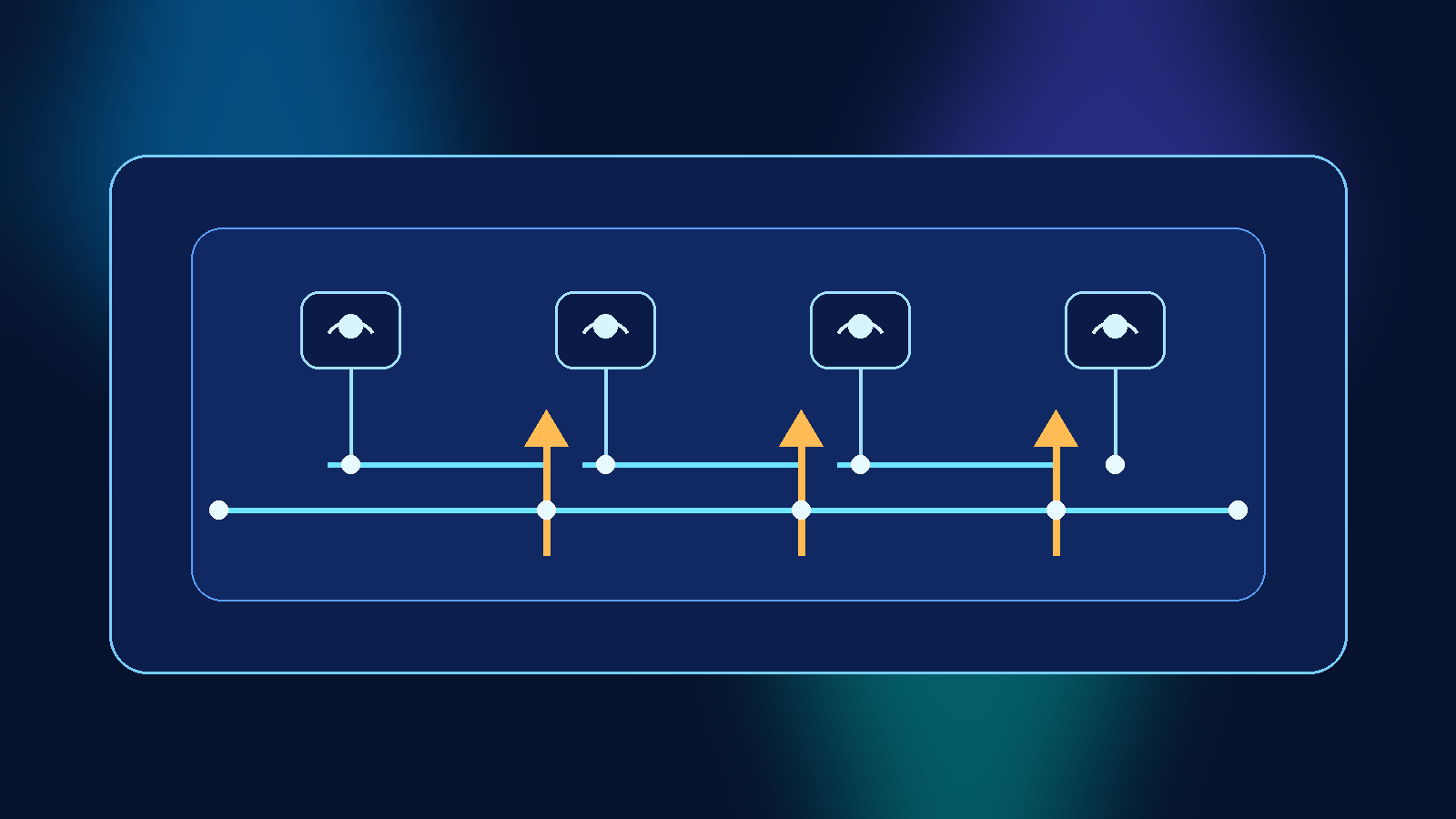

The same logic applies to human access. If a small number of engineers need diagnostic access, that should be role-based, time-bounded where possible, and easy to review later. Security teams sometimes overestimate what network isolation can solve by itself. In reality, the strongest design is a layered one where identity decides who may call the service and private networking decides from where that call may originate.
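The layered idea can be expressed as a small decision model. This is a conceptual sketch, not a description of how Azure evaluates requests: the `CallContext` type, the subnet, and the `evaluate_call` function are all hypothetical, and the role name is used only as an example of a narrowly scoped role.

```python
import ipaddress
from dataclasses import dataclass
from typing import FrozenSet, Tuple

# Hypothetical values for illustration only.
ALLOWED_NETWORK = ipaddress.ip_network("10.10.0.0/24")
REQUIRED_ROLE = "Cognitive Services OpenAI User"


@dataclass(frozen=True)
class CallContext:
    principal: str
    roles: FrozenSet[str]
    source_ip: str


def evaluate_call(ctx: CallContext) -> Tuple[bool, str]:
    """Allow a call only when both the identity check and the network check pass."""
    identity_ok = REQUIRED_ROLE in ctx.roles
    network_ok = ipaddress.ip_address(ctx.source_ip) in ALLOWED_NETWORK
    if identity_ok and network_ok:
        return True, "allowed"
    if not identity_ok and not network_ok:
        return False, "denied: identity and network"
    if not identity_ok:
        return False, "denied: identity"
    return False, "denied: network"
```

The point of returning a reason is that each layer fails separately. A caller on the right subnet with the wrong role is a different finding from a correctly authorized caller on the wrong network, and reviews are easier when the two are not blurred together.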

That layered model is especially important for AI workloads because the data being sent to the model often matters as much as the model resource itself. A private endpoint does not automatically prevent sensitive prompts from being mishandled elsewhere in the workflow.

Plan for CI/CD and automation before the first outage

A surprising number of private endpoint rollouts fail because deployment automation was treated as an afterthought. Template validation jobs, smoke tests, prompt evaluation pipelines, and application release checks often need to reach the service. If those jobs run from hosted agents on the public internet, they will start failing the moment public network access is disabled.

There are workable answers, but they need to be chosen explicitly. You can run self-hosted agents inside approved networks, move test execution into Azure-hosted environments with private connectivity, or redesign the pipeline so only selected stages need live model access. What does not work well is pretending that deployment tooling will somehow adapt on its own.
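The third option, limiting live model access to selected stages, can be sketched as a simple planning step. The stage names and the `needs_live_model` flags below are hypothetical; which of your own stages really need the service is something to confirm per pipeline.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Stage:
    name: str
    needs_live_model: bool


# Hypothetical pipeline; the flags are assumptions for this example.
PIPELINE = [
    Stage("lint", False),
    Stage("unit-tests", False),
    Stage("smoke-test", True),
    Stage("prompt-evaluation", True),
]


def plan_stages(
    pipeline: List[Stage], runner_has_private_access: bool
) -> Tuple[List[str], List[str]]:
    """Split stages into those this runner can execute and those it must hand off."""
    runnable, deferred = [], []
    for stage in pipeline:
        if stage.needs_live_model and not runner_has_private_access:
            deferred.append(stage.name)
        else:
            runnable.append(stage.name)
    return runnable, deferred
```

On a hosted runner without private connectivity, `plan_stages(PIPELINE, False)` keeps the lint and unit test stages and defers the two that call the model, which can then be routed to a self-hosted agent inside an approved network.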

This is also a governance issue. If the only way to keep releases moving is to temporarily reopen public access during deployment windows, the control is not mature yet. Stable security controls should fit into the delivery process instead of forcing repeated exceptions.

Make exception handling visible and temporary

Even well-designed environments need exceptions sometimes. A migration may need short-term dual access. A vendor-operated tool may need a controlled validation window. A developer may need break-glass troubleshooting during an incident. The mistake is allowing those exceptions to become permanent because nobody owns their cleanup.

Treat private endpoint exceptions like privileged access. Give them an owner, a reason, an approval path, and an expiration point. Log which systems were opened, for whom, and for how long. If an exception survives multiple review cycles, that usually means the baseline architecture still has a gap that needs to be fixed properly.
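A lightweight exception register might look something like the sketch below. The field names and the review threshold are assumptions chosen to mirror the attributes listed above, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Tuple


@dataclass(frozen=True)
class NetworkException:
    system: str            # what was opened
    owner: str             # who is accountable for cleanup
    reason: str            # why it was needed
    approved_by: str       # who approved it
    granted_at: datetime   # when access was opened
    expires_at: datetime   # when it must be closed
    reviews_survived: int = 0


def needs_attention(
    exceptions: List[NetworkException], now: datetime, max_reviews: int = 2
) -> List[Tuple[str, str]]:
    """Flag exceptions that have expired or outlived too many review cycles."""
    flagged = []
    for item in exceptions:
        if item.expires_at <= now:
            flagged.append((item.system, "expired"))
        elif item.reviews_survived >= max_reviews:
            flagged.append((item.system, "survived multiple reviews"))
    return flagged
```

Running a check like this on a schedule turns cleanup into a routine report instead of something that depends on someone remembering the exception exists.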

Visible exceptions are healthier than invisible workarounds. They show where the platform still creates friction, and they give the team a chance to improve the standard path instead of arguing about policy in the abstract.

Measure whether the design is reducing risk or just relocating pain

The real test of a private endpoint strategy is not whether a diagram looks secure. It is whether the platform reduces unnecessary exposure without teaching teams bad habits. Watch for signals such as repeated requests to re-enable public access, DNS troubleshooting spikes, shadow use of unmanaged AI tools, or pipelines that keep failing after network changes.

Good platform security should make the right path sustainable. If developers have a documented test route, automation has an approved execution path, DNS works consistently, and exceptions are rare and temporary, then private endpoints are doing their job. If not, the environment may be secure on paper but fragile in daily use.

Private endpoints for Azure OpenAI are worth using, especially for sensitive workloads. Just do not mistake private connectivity for a complete operating model. The teams that succeed are the ones that pair network isolation with identity discipline, reliable DNS, workable developer access, and automation that was designed for the boundary from day one.
