Internal AI tools usually start with good intentions. A team wants faster summaries, better search, or a lightweight assistant that understands company documents. Someone builds a prototype, people like it, and adoption jumps before governance catches up.

That is where the risk shows up. An internal AI tool can feel small because it lives inside the company, but it still touches sensitive data, operational workflows, and employee trust. If nobody owns the boundaries, the tool can become shadow IT with better marketing.

Speed Without Ownership Creates Quiet Risk

Fast internal adoption often hides basic unanswered questions. Who approves new data sources? Who decides whether the system can take action instead of just answering questions? Who is on the hook when the assistant gives a bad answer about policy, architecture, or customer information?

If those answers are vague, the tool is already drifting into shadow IT territory. Teams may trust it because it feels useful, while leadership assumes someone else is handling the risk. That gap is how small experiments grow into operational dependencies with weak accountability.
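One lightweight way to keep those questions from staying vague is to record a named owner for each decision and treat a blank as a blocker. This is a minimal sketch that assumes three hypothetical role names (`data_source_approver`, `action_approver`, `answer_owner`), one for each question above. The `ToolOwnership` record and `is_governed` check are illustrative, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical role names, one per governance question in the paragraph above.
REQUIRED_ROLES = ("data_source_approver", "action_approver", "answer_owner")

@dataclass
class ToolOwnership:
    tool_name: str
    owners: Dict[str, str] = field(default_factory=dict)  # role -> named owner

    def missing_roles(self) -> List[str]:
        # A role is unowned if it is absent or assigned to a blank name.
        return [r for r in REQUIRED_ROLES if not self.owners.get(r, "").strip()]

def is_governed(record: ToolOwnership) -> bool:
    """True only when every required role has a named owner."""
    return not record.missing_roles()

# Illustrative record: one approver is named, the other two roles are unowned.
assistant = ToolOwnership(
    "docs-assistant",
    {"data_source_approver": "platform-team", "answer_owner": ""},
)
print(assistant.missing_roles())
print(is_governed(assistant))
```

A check like this does not settle who should own what, but it makes a missing answer visible instead of assumed.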

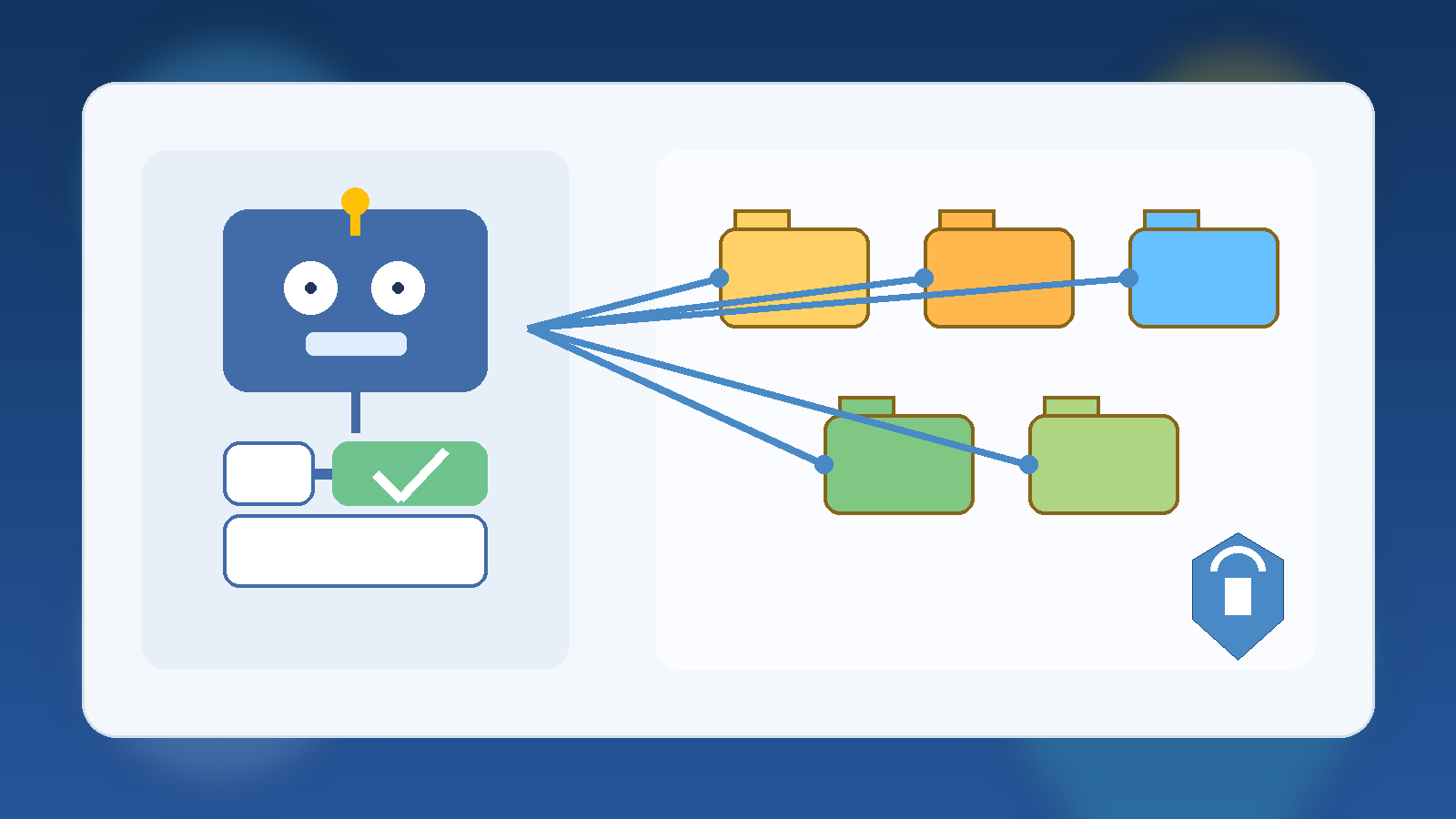

Start With a Clear Operating Boundary

The strongest internal AI programs define a narrow first job. Maybe the assistant can search approved documentation, summarize support notes, or draft low-risk internal content. That is a much healthier launch point than giving it broad access to private systems on day one.

A clear boundary makes review easier because people can evaluate a real use case instead of a vague promise. It also gives the team a chance to measure quality and failure modes before the system starts touching higher-risk workflows.
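A narrow first job can be written down as an explicit allowlist, so anything outside it is refused by default. This is a minimal sketch assuming hypothetical task names modeled on the examples above, plus a simple rule that no request which takes action on another system is in scope yet.

```python
from enum import Enum

class Decision(Enum):
    ALLOW = "allow"
    REFUSE = "refuse"

# Hypothetical first job: read-only tasks like the examples above.
APPROVED_TASKS = frozenset({"search_docs", "summarize_support_notes", "draft_internal_content"})

def check_scope(task: str, takes_action: bool) -> Decision:
    """Allow only approved, read-only tasks; refuse everything else by default."""
    if takes_action:
        # Acting on other systems is outside the first job, whatever the task name.
        return Decision.REFUSE
    return Decision.ALLOW if task in APPROVED_TASKS else Decision.REFUSE

print(check_scope("search_docs", takes_action=False))
print(check_scope("update_customer_record", takes_action=True))
```

With the boundary in a reviewed list, widening the scope later becomes a visible change rather than a silent expansion.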

Decide Which Data Is In Bounds Before People Ask

Most governance trouble shows up around data, not prompts. Employees will naturally ask the tool about contracts, HR issues, customer incidents, pricing notes, and half-finished strategy documents if the interface allows it. If the system has access to that material, people will test the edges.

That means teams should define approved data sources before broad rollout. It helps to write the rules in plain language: what the assistant may read, what it must never ingest, and what requires an explicit review first. Ambiguity here creates avoidable exposure.
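The three-part rule (may read, must never ingest, needs review first) maps naturally onto a small policy table. The source names below are hypothetical, and treating any unlisted source as needing review is an assumption that follows the point about ambiguity: a source nobody has classified should not quietly become readable.

```python
from enum import Enum

class Access(Enum):
    READ = "read"            # the assistant may read and index this source
    NEVER = "never"          # the assistant must never ingest this source
    NEEDS_REVIEW = "review"  # requires an explicit review before ingestion

# Hypothetical source names; a real list would come from the data owners.
SOURCE_POLICY = {
    "engineering-wiki": Access.READ,
    "public-product-docs": Access.READ,
    "hr-case-files": Access.NEVER,
    "customer-contracts": Access.NEVER,
    "pricing-notes": Access.NEEDS_REVIEW,
}

def access_for(source: str) -> Access:
    # Unclassified sources default to review rather than silent access.
    return SOURCE_POLICY.get(source, Access.NEEDS_REVIEW)

def can_ingest(source: str) -> bool:
    """Only sources explicitly marked READ may be ingested."""
    return access_for(source) is Access.READ

print(can_ingest("engineering-wiki"))
print(access_for("strategy-drafts"))
```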

Give the Tool a Human Escalation Path

Internal AI should not pretend it can safely answer everything. When confidence is low, policy is unclear, or a request would trigger a sensitive action, the system needs a graceful handoff. That might be a support queue, a documented owner, or a clear instruction to stop and ask a human reviewer.

This matters because trust is easier to preserve than repair. People can accept a tool that says, “I am not the right authority for this.” They lose trust quickly when it sounds confident and wrong in a place where accuracy matters.
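The handoff conditions named above (low confidence, unclear policy, a sensitive action) can be checked before any answer is returned. This sketch assumes the answering pipeline already produces a confidence score between 0 and 1 and flags for policy coverage and sensitive actions. The 0.7 threshold, the `Request` and `Route` fields, and the `governance-review` queue name are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical threshold; a real value would be tuned against reviewed answers.
CONFIDENCE_FLOOR = 0.7

@dataclass
class Request:
    text: str
    confidence: float       # assumed score from the answering pipeline, 0.0 to 1.0
    policy_clear: bool      # False when no approved policy covers the question
    sensitive_action: bool  # True when answering would trigger a sensitive action

@dataclass
class Route:
    handoff: bool
    target: Optional[str] = None  # human queue, if handing off
    reasons: List[str] = field(default_factory=list)
    message: str = ""

def route(req: Request, review_queue: str = "governance-review") -> Route:
    """Hand off to a human whenever any escalation condition holds."""
    reasons = []
    if req.confidence < CONFIDENCE_FLOOR:
        reasons.append("low confidence")
    if not req.policy_clear:
        reasons.append("unclear policy")
    if req.sensitive_action:
        reasons.append("sensitive action")
    if reasons:
        return Route(
            handoff=True,
            target=review_queue,
            reasons=reasons,
            message="I am not the right authority for this. A human reviewer will follow up.",
        )
    # No condition triggered: the assistant may answer normally.
    return Route(handoff=False)

print(route(Request("What is our PTO carryover limit?", 0.55, False, False)))
```

Checking every condition instead of stopping at the first one keeps the recorded reasons useful when escalated requests are reviewed for patterns.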

Measure More Than Usage

Adoption charts are not enough. A healthy internal AI program also watches for error patterns, risky requests, stale knowledge, and the amount of human review still required. Those signals reveal whether the tool is maturing into infrastructure or just accumulating unseen liabilities.

- Track which sources the assistant relies on most often.

- Review failed or escalated requests for patterns.

- Check whether critical guidance stays current after policy changes.

- Watch for teams using the tool outside its original scope.

That kind of measurement keeps leaders grounded in operational reality. It shifts the conversation from “people are using it” to “people are using it safely, and we know where it still breaks.”
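The four checks in the list above can be computed from ordinary request logs. This is a minimal sketch assuming a hypothetical `LogEntry` shape (team, task, sources consulted, whether the request escalated), a known original scope, and a last-updated date per source. Flagging sources last updated before the most recent policy change is only a rough proxy for staleness, and sources with no recorded date are treated as stale. The sample log is illustrative.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Dict, List

@dataclass
class LogEntry:
    team: str
    task: str
    sources: List[str]  # documents the assistant relied on for this answer
    escalated: bool     # True if the request was handed to a human

# Hypothetical original scope for the tool.
ORIGINAL_SCOPE = {"search_docs", "summarize_support_notes"}

def summarize(entries: List[LogEntry], source_updated: Dict[str, date], policy_changed: date) -> dict:
    source_counts = Counter(s for e in entries for s in e.sources)
    escalated = [e for e in entries if e.escalated]
    return {
        # Which sources the assistant relies on most often.
        "top_sources": source_counts.most_common(3),
        # Share of requests handed to a human, and which tasks escalate.
        "escalation_rate": len(escalated) / len(entries) if entries else 0.0,
        "escalated_tasks": Counter(e.task for e in escalated),
        # Rough staleness proxy: used sources not updated since the last policy change.
        # A source with no recorded update date is treated as stale.
        "stale_sources": sorted(
            s for s in source_counts if source_updated.get(s, date.min) < policy_changed
        ),
        # Teams using the tool for tasks outside its original scope.
        "out_of_scope_by_team": Counter(e.team for e in entries if e.task not in ORIGINAL_SCOPE),
    }

# Illustrative sample log, not real usage data.
logs = [
    LogEntry("support", "summarize_support_notes", ["support-kb"], False),
    LogEntry("support", "search_docs", ["support-kb", "hr-policy"], True),
    LogEntry("sales", "draft_contract_terms", ["pricing-notes"], True),
    LogEntry("eng", "search_docs", ["eng-wiki"], False),
]
updated = {"support-kb": date(2024, 5, 1), "hr-policy": date(2024, 1, 10), "eng-wiki": date(2024, 6, 2)}
report = summarize(logs, updated, policy_changed=date(2024, 3, 1))
print(report)
```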

Final Takeaway

Internal AI tools do not become shadow IT because teams are reckless. They become shadow IT because usefulness outruns ownership. The cure is not endless bureaucracy. It is clear scope, defined data boundaries, accountable operators, and a visible path for human review when the tool reaches its limits.

If an internal assistant is becoming important enough that people depend on it, it is important enough to govern like a real system.
